Accelerator technologies

Particle-accelerator performance depends critically on the underlying technology. Thus the construction of larger, more powerful and more sophisticated accelerators has resulted in technological progress, yielding applications in other areas.

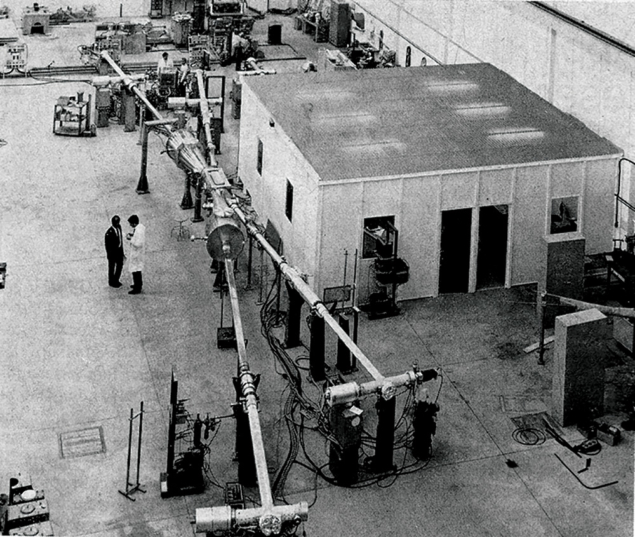

The basic particle accelerator technologies are electrical and radiofrequency engineering, for the powerful electric and magnetic fields needed respectively to accelerate the particles and control the beams.

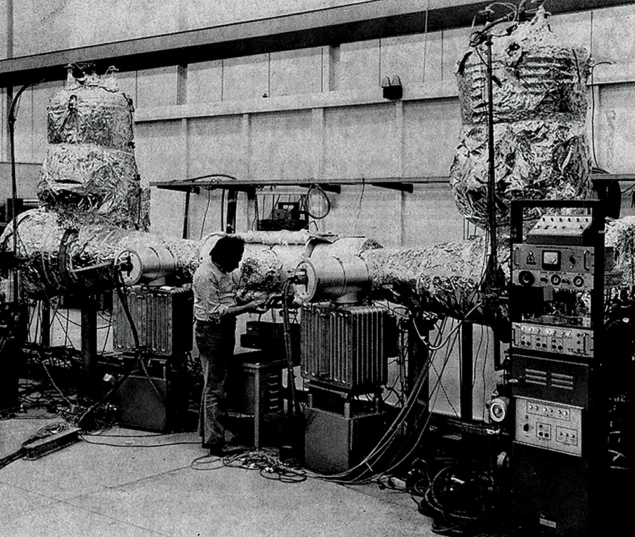

Superconductivity, with its suppression of ohmic losses, makes the generation of these fields more efficient. With present superconducting materials requiring extremely low temperatures, cryogenics has also become a key accelerator technology.

Beams also need a high vacuum to minimize unwanted collisions. Mechanical engineering appears in the design of nearly every component, while another essential ingredient is the particle source.

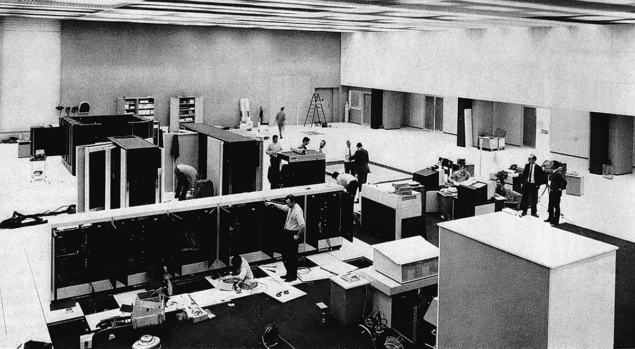

Finally, accelerators have led to the development of a variety of monitoring and controlling techniques, both for their construction (high-precision surveying) and for their operation.

Superconductivity

Large-scale applications of superconductivity have been pioneered by particle accelerator engineers. Improved accelerator performance needed increased magnetic fields and electric fields while keeping the energy consumption within acceptable limits.

This has stimulated the development of superconducting dipole and quadrupole magnets and of superconducting radiofrequency accelerating cavities. The first superconducting cables (for bubble chambers and nuclear magnetic resonance – NMR – spectrometers) were capable only of d.c. operation. Accelerator requirements seeded the development of superconducting cables for a.c. operation, at the heart of all major ongoing applications. These cables are made of intrinsically stable conductors, twisted thin strands of superconducting wires embedded in a copper matrix and suitable for ramped fields.

The implementation of a fusion reactor, based on either magnetic or inertial confinement, will probably rely on superconductivity. In the case of magnetic confinement, a net energy gain can only be achieved if the confining magnetic field does not require excessive power. Furthermore, the high magnetic fields permitted by superconductivity may allow the design of more compact machines. ITER, the future large international research tokamak, will be designed around superconducting magnets.

Research on inertial confinement fusion explores several ways of imploding the fuel pellet. Particle beam fusion systems are based on ideas resulting directly from particle physics research, while the most promising laser system, the free electron laser, also derives from particle accelerator technology.

The output of electric power generators has grown considerably in recent decades, performance also having been boosted by improved cooling, allowing higher current densities. However this also produces a rise in ohmic losses and a corresponding reduction in efficiency, prompting a closer look at superconductivity.
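The link between higher current densities and ohmic losses can be made concrete with a small sketch. It assumes a normal (non-superconducting) copper winding at room temperature and uses the standard relation p = ρJ² for dissipated power per unit volume. The resistivity value is approximate, and the baseline current density is a hypothetical figure chosen for illustration; neither comes from the article.

```python
# Sketch: ohmic power density in a normal conductor, p = rho * J**2.
# Assumptions (not from the article): copper at room temperature and a
# hypothetical baseline current density of 3 A/mm^2.

COPPER_RESISTIVITY_OHM_M = 1.68e-8  # approximate room-temperature value


def ohmic_power_density_w_per_m3(current_density_a_per_m2,
                                 resistivity_ohm_m=COPPER_RESISTIVITY_OHM_M):
    """Return dissipated power per unit volume of conductor, in W/m^3."""
    return resistivity_ohm_m * current_density_a_per_m2 ** 2


if __name__ == "__main__":
    baseline = 3.0e6  # hypothetical: 3 A/mm^2 expressed in A/m^2
    for factor in (1, 2, 3):
        j = factor * baseline
        p = ohmic_power_density_w_per_m3(j)
        print(f"J = {j:.1e} A/m^2 -> p = {p:.2e} W/m^3")
```

Because the loss grows with the square of the current density, doubling the current density quadruples the heat to be removed, which is why better cooling alone eventually stops paying off.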

Transmission of electric power is another possible application of superconductivity. The prospect of replacing the vast electrical highways feeding large cities by underground superconducting cables is an attractive proposition. Successful tests at Brookhaven National Laboratory in the 1970s of a 115 m-long, twin-conductor, flexible 60 Hz cable carrying three-phase power at 1000 MVA have opened the way to longer transmission lines. Increased environmental consciousness will strongly encourage the development of compact underground power lines.

Another potentially far-reaching application of superconductivity in power engineering is the large scale storage of electricity. Large coal-fired and nuclear power plants are designed to operate close to full capacity. Their efficiency and expected lifetime are decreased significantly if they have to suppress large fractions of their capacity. On the other hand, electricity demand has large seasonal, weekly and daily variations. A variety of technologies, ranging from gas turbines to pumped hydroelectricity, are currently used to handle these variations. The need to reduce unwanted gas emissions and the difficulty of finding suitable hydrostorage sites would make new techniques such as SMES (Superconducting Magnetic Energy Storage) attractive.

One of its main advantages is that energy is stored in its electrical form and requires no intermediate conversion from or into thermal or kinetic energy. Reference systems for 5 GVA have been designed in the US and Japan. The feasibility of the concept has successfully been tested in a 30 MJ system installed in 1982 to stabilize the electric power transmission between the Pacific Northwest and Southern California.
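To give a feel for the scale of a 30 MJ store, the sketch below applies the standard expression for the energy held in a coil, E = ½LI², and converts joules to kilowatt-hours. The inductance used is a hypothetical value chosen purely for illustration and is not claimed to be the parameter of the 1982 installation.

```python
# Sketch: energy stored in a current-carrying coil, E = 0.5 * L * I**2.
# Assumption (not from the article): the 2.4 H inductance is hypothetical.
import math

JOULES_PER_KWH = 3.6e6  # 1 kWh = 3.6 MJ


def stored_energy_joules(inductance_h, current_a):
    """Magnetic energy stored in a coil of given inductance and current."""
    return 0.5 * inductance_h * current_a ** 2


def current_for_energy(energy_j, inductance_h):
    """Current needed for a coil of given inductance to hold energy_j."""
    return math.sqrt(2.0 * energy_j / inductance_h)


def joules_to_kwh(energy_j):
    """Convert an energy in joules to kilowatt-hours."""
    return energy_j / JOULES_PER_KWH


if __name__ == "__main__":
    energy = 30e6  # the 30 MJ figure quoted in the text
    inductance = 2.4  # hypothetical coil inductance, in henries
    print(f"30 MJ = {joules_to_kwh(energy):.2f} kWh")
    print(f"Current needed at {inductance} H: "
          f"{current_for_energy(energy, inductance):.0f} A")
```

In energy terms 30 MJ is only about 8 kWh, which suggests that such a system is useful for delivering power quickly to stabilize a line rather than for bulk storage; the 5 GVA reference designs address a different scale.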

High speed ground transportation could also become a large scale application of superconductivity. Prototypes have been demonstrated in Germany and Japan. A 10 ton Japanese test vehicle, using levitation from eddy currents created by an electromagnet moving above a conducting rail, has exceeded 500 km/h. Superconducting coils fulfil the three functions of suspension, guiding and propelling the vehicle.

Research on the application of superconducting magnetic coils for marine propulsion is also underway in Japan and a prototype boat has recently been tested successfully.

Another industrial application of superconductivity is magnetic separation for mineral and scrap metal processing – requiring high magnetic forces over large volumes.

Eddy currents induced by superconducting magnets could slow convection currents during the crystallization process of silicon for semiconductor production. This would lead to more homogeneous crystals and open up the manufacture of larger single chip devices.

Another avenue worth exploring is ultra-fast computers based on the rapid switching of Josephson junctions.

Superconducting magnets are used all over the world for the characterization and identification of chemical compounds by nuclear magnetic resonance spectroscopy. While some 10 years ago typical systems used magnets in the 5 Tesla range, commercial devices now use magnets operating near 10 Tesla, with correspondingly increased performance.

Superconductivity is also finding applications in medical diagnosis through magnetic resonance imaging scanners, less invasive than classical X-ray diagnosis. Again, performance improvements would follow from higher field magnets.

The high temperature superconductors discovered only a few years ago have not yet found their way into accelerator technology as they can neither be made into high density current-carrying cables for magnet coils nor deposited on large surfaces for radiofrequency cavities. However, this rapidly developing field is being closely monitored. Any materials breakthrough would open the way to wider applications.

Cryogenics

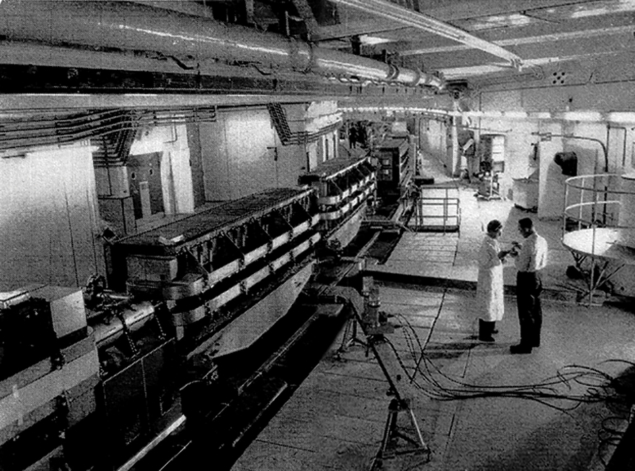

Cryogenics, the technique of low temperatures, goes hand in hand with superconductivity. Classical superconductors operate at a few kelvin, provided by liquid helium cooling. Physicists had become familiar with large-scale low temperature work through the liquid hydrogen bubble chamber, one of the most widely used detectors of the 1960s and early 70s.

Most superconducting magnets now use niobium-titanium wire and operate at temperatures close to 4.2 K, the boiling point of helium. At this temperature, the field achievable with NbTi is limited to about 6.5 Tesla.

In the quest for higher magnetic fields, superconductors with better magnetic properties, such as niobium-tin, are troublesomely brittle. For its next accelerator project, the LHC proton collider, CERN thus prefers to exploit the improved NbTi performance at lower temperatures (2 K).

Such temperatures offer attractive features: liquid helium becomes superfluid, with an enormous increase in thermal conductivity and reduction of viscosity and much practical payoff.

Cryogenics is applied in other fields, for instance in vacuum and space science and for sensitive instrumentation such as low noise amplifiers and infra-red night vision devices.

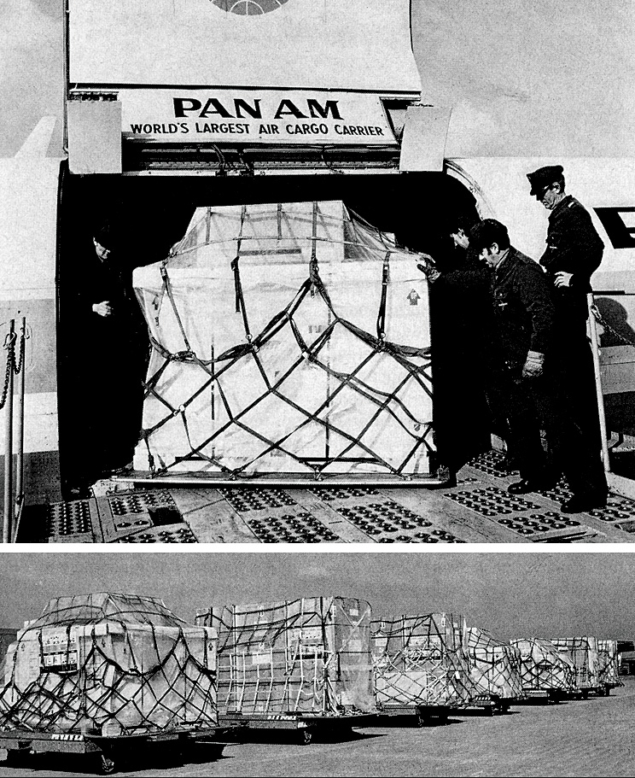

The NMR superconducting magnets used both in the laboratory and for medical diagnostics employ cryogenics technology developed for accelerator magnets. What used to be delicate, fragile and complex systems requiring continuous attention have today become a reliable technology.

Another cryogenics outlet is the production through liquefaction of extremely pure gases, useful in any industrial process (e.g. in the semiconductor industry) requiring extreme cleanliness or purity.

Vacuum and surface science

Particle acceleration requires a good vacuum to avoid scattering the beam on residual gas. Pressures in the region of 10⁻⁶ to 10⁻⁷ Torr are generally sufficient for synchrotrons, where acceleration lasts only a few seconds. However, storage rings and colliders, which must hold beams over several days, have more critical requirements, calling for the 10⁻¹⁰ to 10⁻¹¹ Torr range. Even lower pressures are needed near the detectors to reduce background.
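The pressures quoted above can be translated into the number of residual gas molecules a beam actually encounters. The sketch below uses the ideal gas law, n = P/(k_B T), and assumes a room temperature of 293 K, which the text does not specify; the function name is chosen here for illustration.

```python
# Sketch: residual gas number density from the ideal gas law, n = P / (k_B * T).
# Assumption (not from the article): gas at room temperature, 293 K.

K_B = 1.380649e-23             # Boltzmann constant in J/K (exact SI value)
TORR_TO_PA = 101325.0 / 760.0  # one Torr in pascals, by definition


def number_density_per_cm3(pressure_torr, temperature_k=293.0):
    """Return gas molecules per cubic centimetre at the given pressure."""
    pressure_pa = pressure_torr * TORR_TO_PA
    per_m3 = pressure_pa / (K_B * temperature_k)
    return per_m3 * 1e-6  # convert from per m^3 to per cm^3


if __name__ == "__main__":
    cases = [("synchrotron", 1e-6), ("storage ring", 1e-10)]
    for label, pressure in cases:
        density = number_density_per_cm3(pressure)
        print(f"{label:>12}: {pressure:.0e} Torr -> {density:.2e} molecules/cm^3")
```

Even at 10⁻¹⁰ Torr, a few million molecules remain in every cubic centimetre, which is why beams stored for days still need such demanding vacuum.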

The valuable experience at CERN’s Intersecting Storage Rings (ISR) brought considerable progress in this field. The ISR was the first large machine to be operated using the advanced technology of ultra-high vacuum systems (UHV), eventually reaching 10⁻¹² Torr. This catalysed the vacuum industry to develop UHV components (e.g. sputter ion pumps, all-metal valves, seals, gauges).

Equally important for UHV systems is the cleanliness of all surfaces. Techniques for cleaning and preparing surfaces – chemical treatments, bakeout and glow discharge to reduce gas desorption – were developed.

The construction of CERN’s Large Electron Positron collider (LEP) has further stimulated progress in vacuum technology. Although the vacuum requirement is less stringent than at the ISR, evacuating a 27-kilometre ring posed special problems. This led to the development of a linear non-evaporable getter (NEG) pump using an aluminium-zirconium alloy bonded in powder form on a constantan ribbon. Another development has been the all-aluminium vacuum chamber, which has better thermal conductivity and lower residual radioactivity than stainless steel and can be extruded into complicated shapes.

Other vacuum components have been developed following accelerator experience, particularly where mechanical motion under vacuum is needed. As pressure falls, lubrication is inhibited and friction increases dramatically. Ingenious solutions had to be found for fast closing valves, beam diagnostic devices or shutters and movable sensing electrodes and deflectors.

Vacuum seals have also undergone considerable improvements. Elastomers can sustain neither high radiation nor bakeout at 300–400 °C, and metal joints have now generally been introduced.

This progress in vacuum technology is finding direct applications in space science and fusion test facilities, and in industry, for example in the technologies for semiconductor manufacture.

Even when extremely low pressures are not required, for example in surgery, the pharmaceuticals industry or in food preparation and conservation, the extreme cleanliness of UHV systems and their reliability have brought benefits. Cleanliness and special surface conditions are essential for quality and performance in many high technology areas. Vacuum and surface technology therefore play an increasingly important role.

Particle sources

Accelerators require intense sources of electrons and ions. An important application of such sources is the implantation of ions for semiconductor circuit elements. Ion beams are also used in material preparation such as pre-deposition surface cleaning/conditioning and low energy Ion Beam Assisted Deposition (IBAD). IBAD films have remarkable properties (adhesion, hardness, optical transmission and so on). Another important industrial application is the electron beam technique used for precision welding.

Finally, the classic example of such applications is the range of electron tubes used for telecommunications, broadcasting and radar, which derive from the cathode-ray tube protoaccelerator devices developed at the turn of the century for basic physics research.

- This article was adapted from text in CERN Courier vol. 34, May 1994, pp6–10.