Kicking off a special issue on computing in March 1972 – the year Intel’s 8008 processor was launched and the compact disc invented – CERN’s Lew Kowarski explained why computers were here to stay.

CERN is the favourite showpiece of international co-operation in advanced scientific research. The public at large is, by now, quite used to the paradox of CERN’s outstandingly large-scale electromagnetic machines (accelerators) being needed to investigate outstandingly small-scale physical phenomena. A visitor finds it natural that this, largest-in-Europe, centre of particle research should possess the largest, most complex and costly accelerating apparatus.

But when told that CERN is also the home of the biggest European collection of computers, the layman may wonder: why is it precisely in this branch of knowledge that there is so much to compute? Some sciences such as meteorology and demography appear to rely quite naturally on enormously vast sets of numerical data, on their collection and manipulation. But high energy physics, not so long ago, was chiefly concerned with its zoo of ‘strange particles’ which were hunted and photographed like so many rare animals. This kind of preoccupation seems hardly consistent with the need for the most powerful ‘number crunchers’.

Perplexities of this sort may arise if we pay too much attention to the (still quite recent) beginnings of the modern computer and to its very name. Electronic digital computers did originate in direct descent from mechanical arithmetic calculators; yet their main function today is far more significant and universal than that suggested by the word ‘computer’. The French term ‘ordinateur’ and the Italian ‘elaboratore’ are better suited to the present situation, and this requires some explanation.

What is a computer?

When, some forty years ago, the first attempts were made to replace number-bearing cogwheels and electro-mechanical relays by electronic circuits, it was quickly noticed that, not only were the numbers easier to handle if expressed in binary notation (as strings of zeros and ones) but also that the familiar arithmetical operations could be presented as combinations of two-way (yes or no) logical alternatives. It took some time to realize that a machine capable of accepting an array of binary-coded numbers, together with binary-coded instructions of what to do with them (stored program) and of producing a similarly coded result, would also be ready to take in any kind of coded information, to process it through a prescribed chain of logical operations and to produce a structured set of yes-or-no conclusions. Today a digital computer is no longer a machine primarily intended for performing numerical calculations; it is more often used for non-numerical operations such as sorting, matching, retrieval, construction of patterns and making decisions which it can implement even without any human intervention if it is directly connected to a correspondingly structured set of open-or-closed switches.
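The observation that arithmetic reduces to yes-or-no logical alternatives can be illustrated with a small modern sketch. The Python below is not taken from the article; the function names (`full_adder`, `add_binary`, `to_bits`, `from_bits`) are illustrative assumptions. It adds two binary numbers using only the logical operations AND, OR and exclusive-OR on single bits.

```python
def full_adder(a, b, carry_in):
    """Add three bits using only logical operations.

    Returns (sum_bit, carry_out). Each input is 0 or 1.
    """
    sum_bit = a ^ b ^ carry_in                   # exclusive-OR gives the sum bit
    carry_out = (a & b) | (carry_in & (a ^ b))   # AND/OR give the carry
    return sum_bit, carry_out


def add_binary(x_bits, y_bits):
    """Add two equal-length bit lists, least significant bit first."""
    result = []
    carry = 0
    for a, b in zip(x_bits, y_bits):
        s, carry = full_adder(a, b, carry)
        result.append(s)
    result.append(carry)  # final carry becomes the top bit
    return result


def to_bits(n, width):
    """Hypothetical helper: non-negative integer to bit list (LSB first)."""
    return [(n >> i) & 1 for i in range(width)]


def from_bits(bits):
    """Hypothetical helper: bit list (LSB first) back to an integer."""
    return sum(bit << i for i, bit in enumerate(bits))
```

For example, `from_bits(add_binary(to_bits(5, 4), to_bits(6, 4)))` chains eight yes-or-no decisions per bit position to arrive at the sum of 5 and 6.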

Automatic ‘black boxes’ capable of producing a limited choice of responses to a limited variety of input (for example, vending machines or dial telephones) were known before; their discriminating and logical capabilities had to be embodied in their rigid internal hardware. In comparison, the computer can be seen as a universally versatile black box, whose hardware responds to any sequence of coded instructions. The complication and the ingenuity are then largely transferred into the writing of the program.

The new black box became virtually as versatile as the human brain; at the same time it offered the advantages of enormously greater speed, freedom from error and the ability to handle, in a single operation, any desired volume of incoming data and of ramified logical chains of instructions. In this latter respect the limit appears to be set only by the size (and therefore the cost) of the computer, i.e. by the total number of circuit elements which are brought together and interconnected in the same computing assembly.

High energy physics as a privileged user

We are only beginning to discover and explore the new ways of acquiring scientific knowledge which have been opened by the advent of computers. During the first two decades of this exploration, that is since 1950, particle physics happened to be the most richly endowed domain of basic research. Secure in their ability to pay, high energy physicists were not slow to recognize those features of their science which put them in the forefront among the potential users of the computing hardware and software in all of their numerical and non-numerical capabilities. The three most relevant characteristics are as follows:

1. Remoteness from the human scale of natural phenomena

Each individual ‘event’ involving sub-nuclear particles takes place on a scale of space, time and momentum so infinitesimal that it can be made perceptible to human senses only through a lengthy and distorting chain of larger-scale physical processes serving as amplifiers. The raw data supplied by a high energy experiment have hardly any direct physical meaning; they have to be sorted out, interpreted and re-calculated before the experimenter can see whether they make any sense at all – and this means that the processing has to be performed, if possible, in the ‘real time’ of the experiment in progress, or at any rate at a speed only a computer can supply.

2. The rate and mode of production of physical data

Accelerating and detecting equipment is very costly and often unique; there is a considerable pressure from the user community and from the governments who invest in this equipment that it should not be allowed to stand idle. As a result, events are produced at a rate far surpassing the ability of any human team to observe them on the spot. They have to be recorded (often with help from a computer) and the records have to be scanned and sifted – a task which, nowadays, is usually left to computers because of its sheer volume. In this way, experiments in which a prolonged ‘run’ produces a sequence of mostly trivial events, with relatively few significant ones mixed in at random, become possible without wasting the valuable time of competent human examiners.

3. High statistics experiments

As the high energy physics community became used to computer-aided processing of events, it became possible to perform experiments whose physical meaning resided in a whole population of events, rather than in each taken singly. In this case the need grew from an awareness of having the means to satisfy the need; a similar evolution may yet occur in other sciences (e.g. those dealing with the environment), following the currents of public attention and possibly de-throning our physics from pre-eminence in scientific computation.

Modes of application

In order to stress here our main point, which is the versatility of the modern computer and the diversity of its applications in a single branch of physical research, we shall classify all the ways in which the ‘universal black box’ can be put to use in CERN’s current work into eight ‘modes of application’ (roughly corresponding to the list of ‘methodologies’ adopted in 1968 by the U.S. Association for Computing Machinery):

1. Numerical mathematics

This mode is the classical domain of the ‘computer used as a computer’ either for purely arithmetic purposes or for more sophisticated tasks such as the calculation of less common functions or the numerical solution of differential and integral equations. Such uses are frequent in practically every phase of high energy physics work, from accelerator design to theoretical physics, including such contributions to experimentation as the kinematic analysis of particle tracks and statistical deductions from a multitude of observed events.

2. Data processing

Counting and measuring devices used for the detection of particles produce a flow of data which have to be recorded, sorted and otherwise handled according to appropriate procedures. Between the stage of the impact of a fast-moving particle on a sensing device and that of a numerical result available for a mathematical computation, data processing may be a complex operation requiring its own hardware, software and sometimes a separate computer.

3. Symbolic calculations

Elementary logical operations which underlie the computer’s basic capabilities are applicable to all sorts of operands such as those occurring in algebra, calculus, graph theory, etc. High-level computer languages such as LISP are becoming available to tackle this category of problems which, at CERN, is encountered mostly in theoretical physics but, in the future, may become relevant in many other domains such as apparatus design, analysis of track configurations, etc.

4. Computer graphics

Computers may be made to present their output in a pictorial form, usually on a cathode-ray screen. Graphic output is particularly suitable for quick communication with a human observer and intervener. Main applications at present are the study of mathematical functions for the purposes of theoretical physics, the design of beam handling systems and Cherenkov counter optics and statistical analysis of experimental results.

5. Simulation

Mathematical models expressing ‘real world’ situations may be presented in a computer-accessible form, comprising the initial data and a set of equations and rules which the modelled system is supposed to follow in its evolution. Such ‘computer experiments’ are valuable for advance testing of experimental set-ups and in many theoretical problems. Situations involving statistical distributions may require, for their computer simulation, the use of computer-generated random numbers during the calculation. This kind of simulation, known as the Monte-Carlo method, is widely used at CERN.
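As a minimal modern sketch of the Monte-Carlo idea (not a program from the article), the Python below estimates what fraction of particles emitted isotropically from a point source would strike a circular detector placed on the axis. The geometry, the function names and the default values are all illustrative assumptions; the analytic formula is included only so that the random estimate can be compared with it.

```python
import math
import random


def analytic_acceptance(radius, distance):
    """Exact solid-angle fraction subtended by an on-axis disc."""
    cos_max = distance / math.hypot(distance, radius)
    return (1.0 - cos_max) / 2.0


def monte_carlo_acceptance(radius, distance, n_events=200_000, seed=1972):
    """Estimate the same fraction by generating random directions.

    For an isotropic source, the cosine of the polar angle is
    uniformly distributed between -1 and +1.
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    cos_max = distance / math.hypot(distance, radius)
    hits = 0
    for _ in range(n_events):
        cos_theta = rng.uniform(-1.0, 1.0)
        if cos_theta >= cos_max:  # direction falls inside the detector cone
            hits += 1
    return hits / n_events
```

Calling `monte_carlo_acceptance(1.0, 2.0)` and `analytic_acceptance(1.0, 2.0)` should give values that agree to within the statistical error, which shrinks as the number of generated events grows.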

6. File management and retrieval

As a big organization, CERN has its share of necessary paper-work including administration (personnel, payroll, budgets, etc.), documentation (library and publications) and the storage of experimental records and results. Filing and retrieval of information tend nowadays to be computerized in practically every field of organized human activity; at CERN, these pedestrian applications add up to a non-negligible fraction of the total amount of computer use.

7. Pattern recognition

This mode is mainly of importance in spark-chamber and bubble-chamber experiments, where the reconstruction of physically coherent and meaningful tracks out of computed coordinates and track elements is performed by the computer according to programmed rules.
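One very simple ‘programmed rule’ of this kind can be sketched as follows. The Python below is an illustrative assumption, not the method used at CERN: it fits a straight line through a set of measured coordinates by least squares and accepts the set as a track only if every point lies close to the line. Real tracks in a magnetic field are curved, so the straight line and the `tolerance` parameter are deliberate simplifications.

```python
def fit_line(points):
    """Least-squares straight line y = slope * x + intercept.

    `points` is a list of (x, y) pairs with at least two distinct x values.
    """
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def is_track(points, tolerance=0.1):
    """Accept the points as one track if all residuals are within tolerance."""
    slope, intercept = fit_line(points)
    return all(abs(y - (slope * x + intercept)) <= tolerance for x, y in points)
```

Points lying on a common line, such as `[(0, 1), (1, 3), (2, 5), (3, 7)]`, are accepted, while scattered points are rejected.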

8. Process control

Computers can be made to follow any flow of material objects through a processing system by means of sensing devices which, at any moment, supply information on what is happening within the system and what is emerging from it. Instant analysis of this information by the computer may produce a ‘recommendation of an adjustment’ (such as closing a valve, modifying an applied voltage, etc.) which the computer itself may be able to implement. Automation of this kind is valuable when the response must be very quick and the logical chain between the input and the output is too complicated to be entrusted to any rigidly constructed automatic device. At CERN the material flow to be controlled is usually that of charged particles (in accelerators and beam transport systems) but the same approach is applicable in many domains of engineering, such as vacuum and cryogenics.
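The cycle of sensing, analysis and adjustment described above can be sketched as a simple feedback loop. The Python below is a hypothetical illustration only: `simulated_process` stands in for a real piece of equipment, and the linear response, the `gain` value and the function names are assumptions made for the example.

```python
def simulated_process(setting):
    """Hypothetical equipment: the sensor reading produced by a given setting."""
    return 2.0 * setting + 1.0


def control_step(measured, target, setting, gain=0.2):
    """Recommend a new setting proportional to the observed error."""
    error = target - measured
    return setting + gain * error


def run_loop(target, steps=50):
    """Repeat sense -> analyse -> adjust and return the final reading."""
    setting = 0.0
    for _ in range(steps):
        measured = simulated_process(setting)                # sense
        setting = control_step(measured, target, setting)    # analyse and adjust
    return simulated_process(setting)
```

With these assumed values the reading converges on the target after a few dozen cycles; a rigidly built device could do the same for one fixed rule, whereas here the rule is just a line of program that can be changed at will.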

Centralization versus autonomy

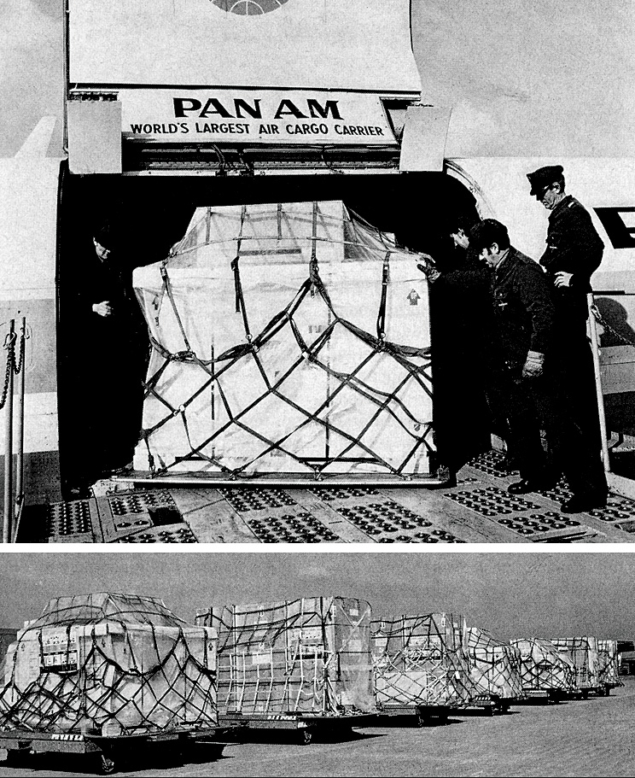

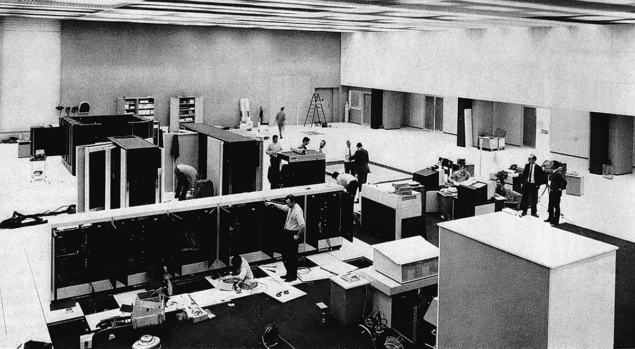

The numerous computers available at CERN are of a great variety of sizes and degrees of autonomy, which reflects the diversity of their uses. No user likes to share his computer with any other user; yet some of his problems may require a computing system so large and costly that he cannot expect it to be reserved for his exclusive benefit nor to be kept idle when he does not need it. The biggest computers available at CERN must perforce belong to a central service, accessible to the Laboratory as a whole. In recent years, the main equipment of this service has consisted of a CDC 6600 and a CDC 6500. The recent arrival of a 7600 (coupled with a 6400) will multiply the centrally available computing power by a factor of about five.

For many applications, much smaller units of computing power are quite adequate. CERN possesses some 80 other computers of various sizes, some of them for use in situations where autonomy is essential (for example, as an integral part of an experimental set-up using electronic techniques or for process control in accelerating systems). In some applications there is need for practically continuous access to a smaller computer together with intermittent access to a larger one. A data-conveying link between the two may then become necessary.

Conclusion

The foregoing remarks are meant to give some idea of how the essential nature of the digital computer and that of high energy physics have blended to produce the present prominence of CERN as a centre of computational physics. The detailed questions of ‘how’ and ‘what for’ are treated in the other articles of this [special March 1972 issue of CERN Courier devoted to computing] concretely enough to show the way for similar developments in other branches of science. In this respect, as in many others, CERN’s pioneering influence may transcend the Organization’s basic function as a centre of research in high energy physics.