Silicon detectors placed as close as possible to particle beams measure the trajectories of particles as they emerge from collisions. At CERN’s flagship accelerator, the Large Electron-Positron collider (LEP), silicon detectors can expect to be traversed by around ten thousand million particles per square centimetre over their lifetime. However, at CERN’s next big accelerator, the Large Hadron Collider (LHC), this number will rise to a mammoth thousand million million passing particles per square centimetre.

Silicon detectors used as they have been in the past would not be able to cope with this enormous integrated particle flux. After prolonged exposure to passing particles, defects begin to appear in the silicon lattice as atoms become displaced, leaving lattice vacancies and atoms at interstitial sites. These have the effect of temporarily trapping the electrons and holes (holes are missing electron states that behave like positively charged particles), which are created when particles pass through the detector. Since it is these electrons and holes that announce the passage of a particle, lattice defects destroy the signal.

Substantial progress has been made towards radiation-hard detectors by paying special attention to detector design and by improving on silicon’s properties. Now, serendipity seems to have brought another solution. Using experience gained in searches for cold dark matter particles, where small signals demand the sensitivity of cryogenic detectors, a group of physicists at Bern decided to see what would happen to radiation-damaged silicon detectors when they were cooled to cryogenic temperatures. The researchers found that, below 100 K, dead detectors come back to life.

The explanation for this phenomenon appears to be that, at such low temperatures, the electrons and holes that are normally present in silicon detectors, which form a constant so-called “leakage” current, are themselves trapped by the radiation-induced defects. Moreover, the rate at which these electrons and holes become untrapped is greatly reduced as a result of the reduced thermal energy. Consequently, a consistent and large fraction of radiation-induced defects remains filled. This means that electrons and holes that are released by passing particles cannot be trapped and the signal is not lost.
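To give a rough feel for how strongly cooling slows the untrapping, the sketch below compares thermal release rates at two temperatures using only the Boltzmann factor exp(-E/kT). It is a minimal illustration, not the Bern group's analysis: the function name, the 0.4 eV trap depth and the 300 K room-temperature reference are assumptions chosen for the example, and temperature-dependent prefactors in the real emission rate are ignored.

```python
import math

# Boltzmann constant in eV per kelvin.
K_B_EV_PER_K = 8.617333262e-5


def detrapping_suppression(trap_depth_ev, t_warm_k, t_cold_k):
    """Return rate(t_cold) / rate(t_warm) for thermal release from a trap.

    Only the Boltzmann factor exp(-E / kT) is kept; prefactors that also
    vary with temperature are deliberately left out of this sketch.
    """
    exponent = -(trap_depth_ev / K_B_EV_PER_K) * (1.0 / t_cold_k - 1.0 / t_warm_k)
    return math.exp(exponent)


# Hypothetical trap depth (0.4 eV) and assumed room temperature (300 K),
# compared with liquid nitrogen temperature (77 K).
ratio = detrapping_suppression(0.4, 300.0, 77.0)
print(f"release rate at 77 K relative to 300 K: {ratio:.1e}")
```

Under these assumptions the release rate falls by many orders of magnitude, which is consistent with the picture of defects that, once filled, stay filled for the duration of a measurement.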

However, the story is not as simple as this. Closer investigation reveals that, for extreme radiation doses, exceeding those expected after 10 years of LHC operation, the signal is only partially recovered. Understanding this behaviour requires further study.

Another advantage of low-temperature operation is the possibility of using simplified detector designs and low-purity material, thus opening the way to a substantial reduction in cost.

The Lazarus effect

This is not the first time that low temperatures have been used to improve the performance of particle detectors. In fact, the Lazarus effect is very similar to a technique that has been known since 1981 to physicists using charge-coupled device (CCD) detectors.

CCDs were first tried in a test beam at CERN by the NA32 collaboration, which went on to use them successfully in its experiment. Similar detectors have been used for Stanford’s SLD vertex detector and its upgrade. These detectors were run at a relatively tropical 220 K to reduce the leakage current and to freeze out radiation-induced damage in the detector.

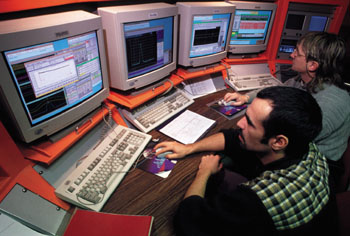

CERN’s RD39 collaboration plans to study the Lazarus effect in depth. The first step came in August 1998 when two silicon detectors with full read-out from the Delphi experiment at LEP were put into a test beam along with prototype detectors for the forthcoming COMPASS experiment. Members of the LHCb collaboration were also involved, because close-to-the-beam tracking is a vital feature of the group’s planned experiment.

One of the Delphi detectors had previously been irradiated with a particle dose comparable to that expected after a few years of LHC operation; the other was undamaged. The test beam demonstrated not only that the signal recovers at low temperatures, but also that the positional accuracy of the silicon was not impaired: the two detectors produced compatible results. Delphi’s standard read-out electronics also worked well at low temperatures.

A second test in November placed a healthy 3 × 3 pad array of silicon detectors in the beam line of the NA50 experiment, fully intercepting the highest intensity lead-ion beam at CERN. Permanently operated at 77 K, the 1.5 square millimetre pad centred on the beam performed well, even after being traversed by some ten thousand million lead ions. The pulses from each incident ion and the total ionization current from the detector were continuously monitored during and between beam pulses, with the results clearly showing that the detector survived this extreme environment without any noticeable change in performance.

These test beam results suggest that conventional silicon detectors operated at liquid nitrogen temperature could remain the detectors of choice for the next generation of particle physics experiments. RD39 plans a series of proof-of-principle experiments to confirm its early findings and to demonstrate the feasibility of a low-cost cryogenic silicon tracker. The collaboration will also optimize a new high-intensity radiation-hard beam monitor based on the Lazarus effect. The results will be closely followed by the collaborations preparing for physics at the LHC.