The beginning of Willibald Jentschke’s mandate at CERN coincided with the start-up of the world’s first hadron collider, the Intersecting Storage Rings (ISR). Moreover, Jentschke had already been involved with the ISR as chairman of the ISR Committee, so it is appropriate to look back at the ISR during his mandate.

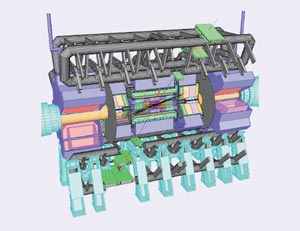

Let us first take a look at the working principle of the ISR. The idea was to build two rings slightly distorted so that they could intersect in eight different places. You would take the beams out of the Proton Synchrotron (PS), bring them to one of these rings and accumulate them in the vacuum chambers.

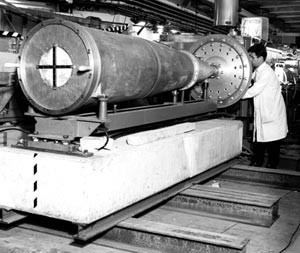

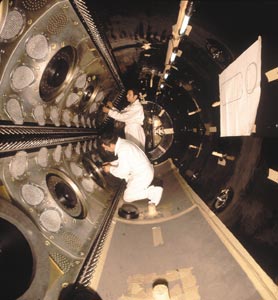

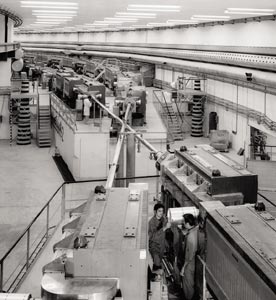

The tunnel in which these two magnet rings were built is about 15 m wide and has an average radius of 150 m, compared with 100 m for the PS, despite the fact that the energy of the two machines was more or less the same. The main challenge for the ISR was to accumulate high-enough currents and maintain small-enough beam dimensions to achieve a luminosity that would be interesting for physics. This could be realized only after the invention of various ways of accumulating beams. We built on the idea developed by the MURA group of accelerator physicists from the US Midwestern Universities Research Association to accumulate a high-current beam from hundreds of injected pulses by using an RF stacking process. In our initial design, this would lead to ISR beams 60 mm wide and about 10 mm high.

High currents, and therefore high instantaneous luminosity, are important. An equally important design goal, however, was long beam lifetimes so that the average luminosity would also be high. That required a very good vacuum compared with what was normal in those days. The planned vacuum for the ISR was 10⁻⁹ to 10⁻¹⁰ Torr. As we will see, we went far beyond that. Good vacuum, of course, was also important for the experiments to have manageable backgrounds.

Authorization for the ISR project was given at the end of Viki Weisskopf’s mandate and construction took place during Bernard Gregory’s time as director-general, starting in 1966 and finishing early in 1971. When Jentschke arrived, both rings had been installed and one of the rings – ring one – had been tested at the end of October 1970. We had been able to accumulate several amps of protons and we had found very promising lifetimes. But we had also met the first beam instabilities that limited the beam currents to around 3 A.

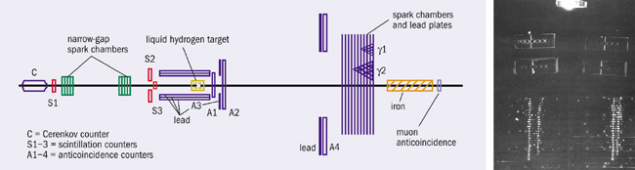

In January 1971 simple detectors had been installed to observe the first collisions. We tested ring two so that both rings had been tested independently, and on 27 January 1971 we operated the two rings together and observed the first collisions. This was a great event for many of us. We had achieved proton-proton collisions in colliding beams for the first time ever.

Jentschke was present for the test. He brought champagne with him, and I recall that someone was not too happy that this was served rather late in the night. Some of us felt that it was more important to watch the lifetimes of the two beams before popping the corks. When we were satisfied that the lifetime was good we opened the bottles. The champagne may have been late, but it was well deserved! Physics started about one month later, and Jentschke hosted the official inauguration in October 1971.

The ISR in action

So much for the early history of the ISR. Let us turn to the characteristics of the machine and how the performance developed during the first few years of operation. Luminosity rapidly climbed, reaching the design goal of 4 × 10³⁰ cm⁻² s⁻¹ in early 1973. During Jentschke’s mandate, it increased by a factor of 10, and ultimately went on to reach 1.4 × 10³² cm⁻² s⁻¹, some thirty times the design goal, before the ISR was closed in 1984.

I’d like to explain a little how the luminosity developed. I’ve already mentioned that we encountered instability in the beams very early. That was both expected and unexpected. We knew we were aiming for very intense beams and so it would not be strange to have instabilities. But the first instability that we met was one that we thought we had taken care of. The current came up to about 3 A, when suddenly we lost part of the beam. Then it started building up again until we again lost part of it and so on. This turned out to come from instability arising from the interaction between the beam and the vacuum walls – the resistive wall instability as it was called, although it was not due only to resistance.

Coping with instability

This effect turned out to require extremely careful beam handling to remove. We found, for example, that it didn’t take current away from the whole beam uniformly; instead, it more or less punched holes in the beam. In other words, the instability was much more local than the beam itself and this meant that we had to have the right field shape not only globally for the beam, but also locally. So we had to fine-tune the field shape in the apertures with the help of the pole-face windings. This allowed us to improve the situation and build up higher currents.

We applied the cure step by step, but as we went up in current we suddenly found that we were again losing part of the beam, but this time the time-scale for the loss was much longer. Not only that, after careful investigation, we saw a pressure bump building up. In other words, we had moved into a completely different kind of instability – not a beam instability but an instability due to pressure in the vacuum chamber caused by beam protons hitting the residual gas. Resulting secondary particles striking the vacuum-chamber wall released more particles, and this had a run-away effect that led to a pressure bump.
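The run-away character of this pressure effect can be illustrated with a toy feedback model. This is only a sketch under a simplifying assumption that is not taken from the talk: each unit of gas in the chamber leads, through beam-induced desorption from the walls, to a further fraction of gas, and that fraction grows in proportion to the beam current. The function name, the notion of a single "critical current" and the numbers used below are hypothetical and are not ISR design figures.

```python
def equilibrium_pressure(base_pressure, current, critical_current):
    """Toy estimate of the pressure reached with beam in the chamber.

    Assumption (illustrative only): the feedback gain is current / critical_current.
    Summing the resulting geometric series gives base_pressure / (1 - gain).
    At or above the critical current there is no finite equilibrium.
    """
    gain = current / critical_current
    if gain >= 1.0:
        return float("inf")  # run-away: the pressure bump keeps growing
    return base_pressure / (1.0 - gain)


# Hypothetical numbers: base pressure in Torr, currents in amperes.
for amps in (0.0, 5.0, 9.0, 10.0):
    print(amps, equilibrium_pressure(1e-10, amps, critical_current=10.0))
```

In this picture, better pumping and cleaner walls both act to raise the critical current, which is consistent with the step-by-step gains in stored current described in the text.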

This effect required completely different cures – in particular, a tremendously increased pumping capacity and exceedingly clean walls. Again, we took it step by step. Where we found pressure build-up, we improved pumping in that area during the next available scheduled shutdown. We also improved the cleaning of the vacuum walls by using higher temperatures for the bake-out, and also by employing techniques such as gas discharge cleaning. Progress came in a series of jumps. We got a few more amps after we cured the first problem, then came the second and we cured that. Then we went back to the first, but at a higher current, and so on. In the end we had rather sophisticated beam tuning and vacuum treatment, and by solving these two problems we’d found the way to high luminosity.

We also manipulated the geometry of the beam cross-section. The height of the beam had a direct impact on luminosity, so we tried to reduce the height as much as possible, reaching about half what we had originally foreseen. We also put in a squeezing system – a low-beta section as it was called – to further reduce the beam height considerably in the interaction regions. This required a lot of inter-laboratory collaboration. We did not have the quadrupoles that were needed, so we got them from DESY, from the Rutherford Laboratory and from the PS Division at CERN.

The improvements in the vacuum had the added benefit that they led to great improvements in beam lifetimes, which were typically 50-60 hours. We had very small beam losses, which meant that we had very low background in the interaction regions except for when halo built up. Backgrounds were so low that at one point when the experiments were asking for further improvements we had to point out that what they were seeing was coming from proton-proton interactions and not from collisions with residual gas in the rings!

The invention of stochastic cooling

These were the main features of the ISR during Jentschke’s time, but we also had some special things happening, and there’s one that I can’t resist mentioning: stochastic cooling. To me, the stochastic cooling story happened in the following way. Simon van der Meer had a fit of pessimism about the planned performance of the ISR. He was afraid that the machine wouldn’t work as promised, and he put all his mental energy into finding a way of saving it if this came to pass. Happily, he turned out to be wrong about the ISR, but he nevertheless invented stochastic cooling.

When he had worked out the theory, he concluded that it would not help the ISR and he put the idea to one side. Fortunately, he told his colleagues about the idea first. Later on, as the ISR got going, people realised that although stochastic cooling wouldn’t help the ISR much, it was a wonderful invention and we’d better take a look at it. So we managed to build stochastic cooling into the ISR. When we switched it on we saw a reduction in beam height, so we had a clear demonstration that stochastic cooling worked.

An ideal application for stochastic cooling would be in a machine where beam currents are not as high as in the ISR, and such an application was not long in coming. Stochastic cooling came into its own, as you all know, in the proton-antiproton project that ultimately led to the Nobel prize for Van der Meer and Carlo Rubbia. It was mainly applied in the antiproton accumulator, where stacking and cooling was the mechanism whereby the antiproton currents were accumulated.

Comments on the exploitation

Not everything always went well at the ISR. For example, on more than one occasion part of the beam went astray and punched holes all the way along a bellows structure, which made a mess not only of the bellows but also of the vacuum. On another occasion a spectrometer arm went out of control and broke the beam pipe, leading to a similar effect.

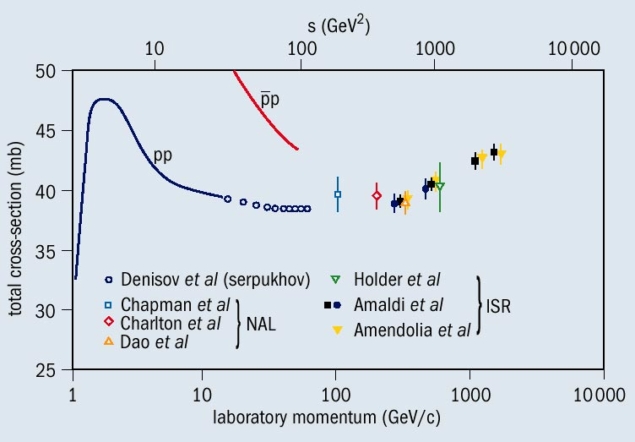

But these were isolated incidents, and didn’t harm the excellent physics programme at the ISR. One result that caused a stir was the observed increase in proton-proton total cross-section. I remember that shortly after this had been announced, I was sitting at the dinner table with a theorist who pointed at me and said “I will eat my hat if you machine people don’t find that you are making a mistake with your measurement of the effective height”. We didn’t find a mistake in our measurement, but since I did not meet the lady again I don’t know what became of her hat.

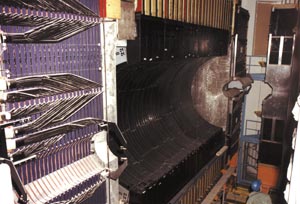

One of the ISR’s important contributions to particle physics was that it provided a place where experimentalists learned how to do physics with colliding beam machines, which are so different from fixed-target machines. This was extremely useful for the proton-antiproton programme some years later. During a very productive life, the ISR reached an energy of 63 GeV in the centre of mass, or in other words the equivalent of 2 TeV for a fixed-target machine. The average vacuum reached an impressive 3 × 10⁻¹² Torr, and for one fill with antiprotons, using stochastic cooling, a beam lifetime of 345 hours was achieved.
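The comparison between 63 GeV in the centre of mass and roughly 2 TeV for a fixed-target machine can be checked with the standard relativistic relation for a proton beam of energy E striking a proton at rest, s = 2m² + 2mE (in units where c = 1). The short sketch below inverts that relation; the function name and the rounded proton mass are illustrative choices and do not come from the talk.

```python
PROTON_MASS_GEV = 0.938272  # proton rest energy in GeV (rounded)


def fixed_target_equivalent_energy(sqrt_s_gev, mass_gev=PROTON_MASS_GEV):
    """Beam energy (GeV) needed on a stationary proton target to reach
    the same centre-of-mass energy as a collider with the given sqrt(s).

    Uses s = 2*m**2 + 2*m*E_beam, solved for E_beam.
    """
    s = sqrt_s_gev ** 2
    return (s - 2.0 * mass_gev ** 2) / (2.0 * mass_gev)


e_beam = fixed_target_equivalent_energy(63.0)
print(f"Equivalent fixed-target beam energy: {e_beam / 1000.0:.1f} TeV")
```

The result comes out at a little over 2 TeV, in line with the figure quoted above. It also shows why colliders are so effective: the fixed-target beam energy needed grows with the square of the centre-of-mass energy.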

Concluding remarks

To conclude, it must have been very satisfying for Jentschke to be in charge of CERN during these pioneering years of hadron colliders. It must also have been satisfying later for him to follow the development not only of the ISR but of the whole approach to particle physics, with its shift from fixed-target machines almost entirely to colliders. He was fortunate to be present at the beginning and then to follow it for a long time. His life as a scientist was rich and varied, and the ISR was an important part of it.