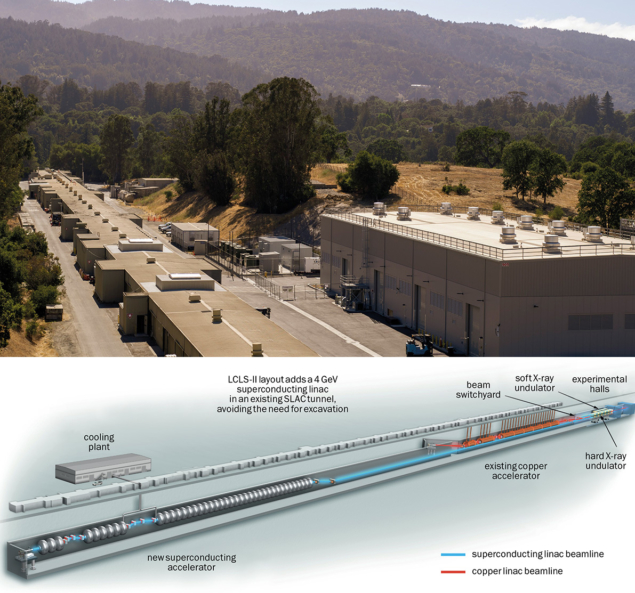

An ambitious upgrade of the US’s flagship X-ray free-electron-laser facility – the Linac Coherent Light Source (LCLS) at the SLAC National Accelerator Laboratory in California – is nearing completion. Set for “first light” in 2022, LCLS-II will deliver X-ray laser beams that are 10,000 times brighter than LCLS at repetition rates of up to a million pulses per second – generating more X-ray pulses in just a few hours than the current laser has delivered over the course of its 12-year operational lifetime. The cutting-edge physics of the new X-ray laser – underpinned by a cryogenically cooled superconducting radiofrequency (SRF) linac – will enable the two beams from LCLS and LCLS-II to work in tandem. This, in turn, will help researchers observe rare events that happen during chemical reactions and study delicate biological molecules at the atomic scale in their natural environments, as well as potentially shed light on exotic quantum phenomena with applications in next-generation quantum computing and communications systems.
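The pulse-count comparison above can be sanity-checked with a few lines of arithmetic. This is a sketch under stated assumptions: LCLS is taken to fire at its 120 Hz maximum repetition rate, LCLS-II at the 1 MHz quoted here, and `beam_fraction` is a hypothetical placeholder for the share of the 12 years during which beam was actually delivered. None of these inputs comes from the project's own accounting.

```python
# Rough check: hours of 1 MHz operation needed to match LCLS's lifetime output.
# Assumptions (not from the article): LCLS always at its 120 Hz maximum rate;
# beam_fraction is a hypothetical share of the 12 years with beam delivered.
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def lcls_lifetime_pulses(years=12, rep_rate_hz=120.0, beam_fraction=1.0):
    """Total pulses from a pulsed source running `beam_fraction` of the time."""
    return rep_rate_hz * SECONDS_PER_YEAR * years * beam_fraction


def hours_to_match(pulses, rep_rate_hz=1e6):
    """Hours a source at `rep_rate_hz` needs to deliver `pulses` pulses."""
    return pulses / rep_rate_hz / 3600


for fraction in (1.0, 0.5):
    total = lcls_lifetime_pulses(beam_fraction=fraction)
    print(f"beam fraction {fraction:.0%}: {total:.2e} pulses, "
          f"{hours_to_match(total):.1f} h at 1 MHz")
```

Even with uninterrupted 120 Hz operation, matching the LCLS lifetime output takes only about half a day at 1 MHz; any realistic downtime shortens that further, toward the few hours quoted.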

Strategic commitment

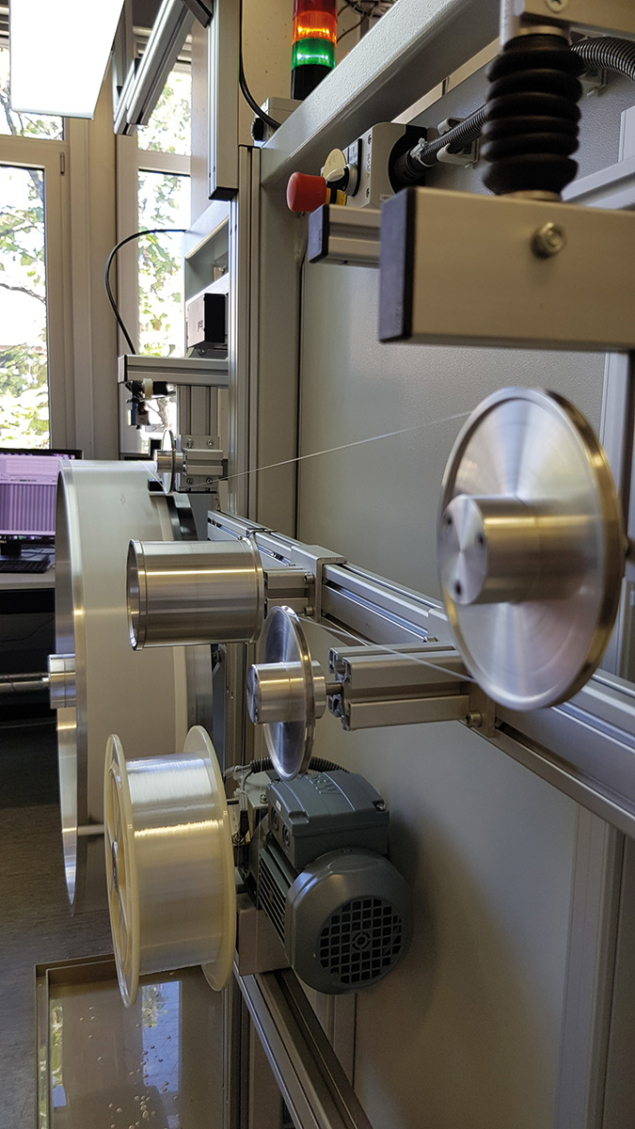

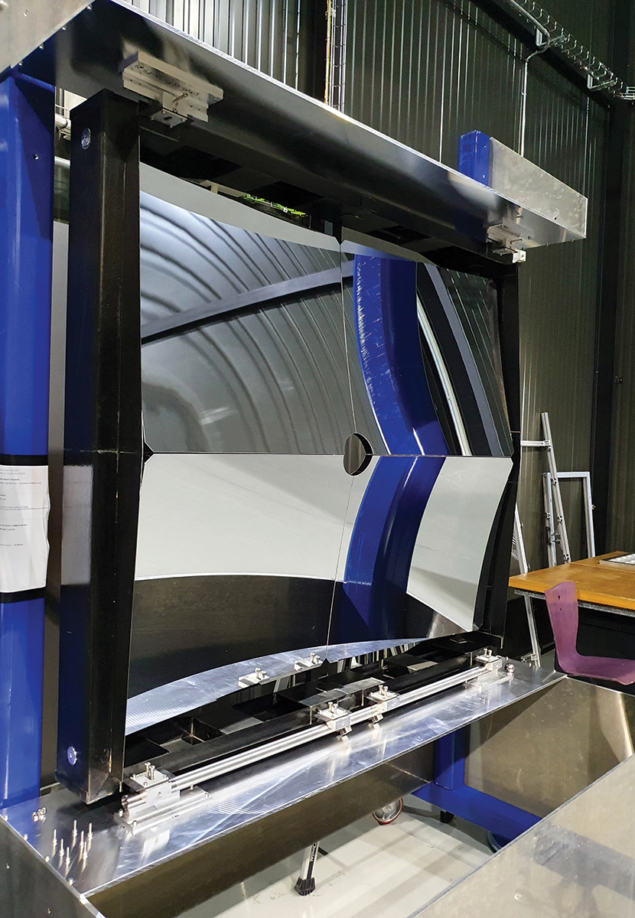

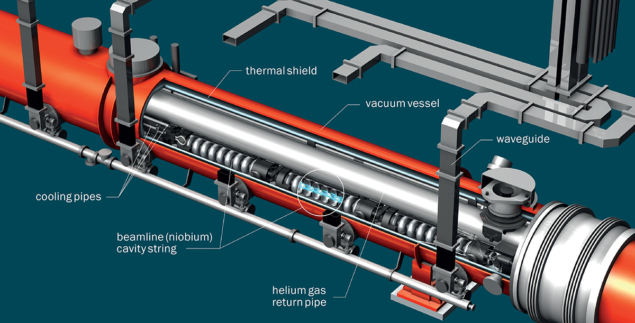

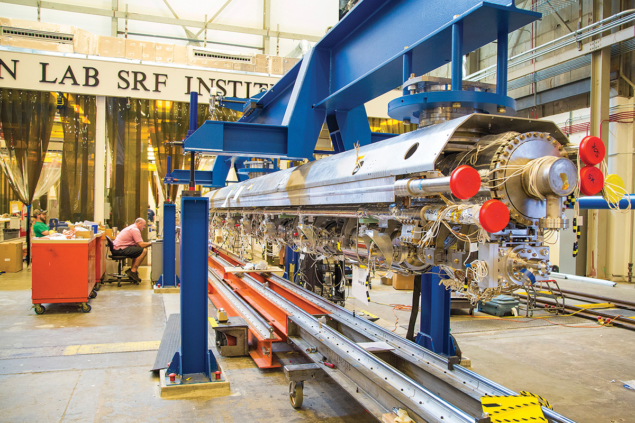

Successful delivery of the LCLS-II linac was made possible by a multicentre collaborative effort involving US national and university laboratories. Following the decision in 2014 to pursue an SRF-based machine, that effort spanned the design, assembly, test, transportation and installation of a string of 37 SRF cryomodules (most of them more than 12 m long) in the SLAC tunnel (see figures “Tunnel vision” and “Keeping cool”). All told, this non-trivial undertaking required the construction of forty 1.3 GHz SRF cryomodules (five of them spares) and three 3.9 GHz cryomodules (one spare), with approximately one cryomodule delivered per month from February 2019 until December 2020 so that installation of the LCLS-II linac could be completed on schedule by November 2021.
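The cryomodule counts quoted above are mutually consistent: subtracting the spares from each production run recovers the 37-module string installed in the tunnel.

$$
N_{\text{installed}} = \underbrace{(40 - 5)}_{1.3\ \text{GHz}} + \underbrace{(3 - 1)}_{3.9\ \text{GHz}} = 35 + 2 = 37
$$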

This industrial-scale programme of works was shaped by a strategic commitment, early on in the LCLS-II design phase, to transfer, and ultimately iterate on, the established SRF capabilities of the European XFEL project as the core technology platform for the LCLS-II SRF cryomodules. Put simply: it would not have been possible to complete the LCLS-II project, within cost and on schedule, without the sustained cooperation of the European XFEL consortium – in particular, colleagues at DESY (Germany), CEA Saclay (France) and several other European laboratories (as well as KEK in Japan) that generously shared their experiences and know-how so that the LCLS-II collaboration could hit the ground running.

Better together

These days, large-scale accelerator or detector projects are very much a collective endeavour. Not only is the sprawling scope of such projects beyond a single organisation, but the risks of overspend and slippage can also increase greatly with a “do-it-on-your-own” strategy. When the LCLS-II project opted for an SRF technology pathway in 2014 (to maximise laser performance and future-proofing), the logical next step was to build a broad-based coalition with other US Department of Energy (DOE) national laboratories and universities. In this case, SLAC, Fermilab, Jefferson Lab (JLab) and Cornell University contributed expertise for cryomodule production, while Argonne National Laboratory and Lawrence Berkeley National Laboratory managed delivery of the undulators and photoinjector for the project. Without this joint effort, the start-up time for LCLS-II would certainly have increased significantly, extending the overall project by several years.

Each partner brought something unique to the LCLS-II collaboration. While SLAC was still a relative newcomer to SRF technologies, the lab had a management team that was familiar with building large-scale accelerators (following successful delivery of the LCLS). The priority for SLAC was therefore to scale up its small nucleus of SRF experts by recruiting experienced SRF technologists and engineers to the staff team.

In contrast, the JLab team brought an established track-record in the production of SRF cryomodules, having built its own machine, the Continuous Electron Beam Accelerator Facility (CEBAF), as well as cryomodules for the Spallation Neutron Source (SNS) linac at Oak Ridge National Laboratory in Tennessee. Cornell, too, came with a rich history in SRF R&D – capabilities that, in turn, helped to solidify the SRF cavity preparation process for LCLS-II.

Finally, Fermilab had, at the time, recently built two cutting-edge cryomodules of the same style as that chosen for LCLS-II. To fabricate these modules, Fermilab worked closely with the team at DESY to set up the same type of production infrastructure used on the European XFEL. From that perspective, the required tooling and fixtures were all ready to go for the LCLS-II project. While Fermilab was the “designer of record” for the SRF cryomodule, with primary responsibility for delivering a working design to meet LCLS-II requirements, the realisation of an optimised technology platform was, in large part, a team effort involving SRF experts from across the collaboration.


Operationally, the use of two facilities, Fermilab and JLab, to produce the SRF cryomodules made a compressed delivery schedule possible and increased flexibility within the LCLS-II programme. On the downside, the dual-track production model increased infrastructure costs (with the procurement of duplicate sets of tooling) and required additional oversight to ensure a standardised approach across both sites. Ongoing procurements were divided equally between Fermilab and JLab, with deliveries often made to each lab directly from the industry suppliers. Each facility, in turn, kept its own inventory of parts, both to minimise interruptions to cryomodule assembly caused by supply-chain issues and to allow critical components to be transferred between labs as required. What’s more, the close working relationship between Fermilab and JLab kept any such interruptions to a minimum.

Collective problems, collective solutions

While the European XFEL provided the template for the LCLS-II SRF cryomodule design, several key elements of the LCLS-II approach subsequently evolved to meet the requirements of continuous-wave (CW) operation and the specifics of the SLAC tunnel. Success in tackling these technical challenges, across design, assembly, testing and transportation of the cryomodules, is a testament to the strength of the LCLS-II collaboration and the collective efforts of the participating teams in the US and Europe.

For starters, the thermal performance specification of the SRF cavities exceeded the state of the art and required the development and industrialisation of nitrogen doping (a process in which SRF cavities are heat-treated in a nitrogen atmosphere to raise their quality factor, Q0, and hence their cryogenic efficiency, in turn lowering the overall operating costs of the linac). The nitrogen-doping technique was invented at Fermilab in 2012 but, prior to LCLS-II construction, had been used only in an R&D setting.
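Why a higher Q0 translates into lower operating costs can be made concrete with the standard expression for the RF power a cavity dissipates into its helium bath, P = V²/((R/Q)·Q0). The sketch below is illustrative, not a project design calculation: it assumes a TESLA-style nine-cell 1.3 GHz cavity with R/Q ≈ 1036 Ω and eight cavities per cryomodule, and derives the per-cavity voltage from the 128 MV-per-cryomodule specification quoted later in this article.

```python
# Dynamic RF heat load deposited in the helium bath by one cavity:
#   P = V**2 / ((R/Q) * Q0)
# Assumptions (not from the article): TESLA-style 9-cell cavity with
# R/Q ~ 1036 ohm, and 8 cavities per cryomodule.
R_OVER_Q_OHM = 1036.0
CAVITIES_PER_CRYOMODULE = 8


def cavity_heat_load_w(voltage_v, q0, r_over_q_ohm=R_OVER_Q_OHM):
    """RF power dissipated in the cavity walls, in watts."""
    return voltage_v ** 2 / (r_over_q_ohm * q0)


# Per-cavity voltage implied by the 128 MV-per-cryomodule specification.
v_cavity = 128e6 / CAVITIES_PER_CRYOMODULE  # 16 MV

for q0 in (2.7e10, 3.0e10):
    p = cavity_heat_load_w(v_cavity, q0)
    print(f"Q0 = {q0:.1e}: {p:.2f} W per cavity, "
          f"{p * CAVITIES_PER_CRYOMODULE:.1f} W per cryomodule")
```

Because P scales as 1/Q0, the test-stand average of 3 × 10¹⁰ against the 2.7 × 10¹⁰ specification corresponds to roughly 10% less heat per cavity at the same voltage, and every watt removed at cryogenic temperature costs far more than a watt of wall-plug power at the cryoplant.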

Adaptability in real time

The priority was clear: to transfer the nitrogen-doping capability to LCLS-II’s industry partners, so that the cavity manufacturers could perform the necessary materials processing before final helium-vessel jacketing. During this knowledge transfer, it was found that nitrogen-doped cavities are particularly sensitive to the base niobium sheet material, something the collaboration only realised once the cavity vendors were into full production. This led to several changes in the heat-treatment temperature, depending on which material supplier was used and the specific properties of the niobium sheet deployed in different production runs. JLab, for its part, held the contract for the cavities and pulled out all the stops to ensure success.

At the same time, the conversion from pulsed to CW operation necessitated a faster cooldown cycle for the SRF cavities, requiring several changes to the internal piping, a larger exhaust chimney on the helium vessel and the addition of two new cryogenic valves per cryomodule. Also significant is the 0.5% slope in the longitudinal floor of the existing SLAC tunnel, which dictated careful attention to liquid-helium management in the cryomodules (with a separate two-phase line and liquid-level probes at both ends of every module).
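The size of the slope effect follows from simple geometry. Assuming a module length of 12 m (the lower bound of the “more than 12 m” given earlier), the 0.5% slope gives an end-to-end height difference of

$$
\Delta h = 0.005 \times 12\ \text{m} = 0.06\ \text{m} = 6\ \text{cm}
$$

A level difference of several centimetres along a single module means one reading cannot describe the whole helium bath, which is consistent with placing liquid-level probes at both ends.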

However, the biggest setback during LCLS-II construction involved the loss of beamline vacuum during cryomodule transport. Specifically, two cryomodules had their beamlines vented and required complete disassembly and rebuilding, resulting in a five-month moratorium on shipping completed cryomodules in the second half of 2019. It turned out that a small change to a coupler flange, thought at the time to be inconsequential, had made the cold coupler assembly susceptible to resonances excited during transport. The result was a bellows tear that vented the beamline. Unfortunately, initial “road-tests” with a similar, though not identical, prototype cryomodule had not revealed this behaviour.
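How a minor geometry change can matter so much in transit is captured by the textbook single-degree-of-freedom model of a component mounted on a vibrating base (here, a truck or trailer). This is an illustrative sketch, not the collaboration’s analysis: the 20 Hz natural frequency, the 5 Hz off-resonance input and the 2% damping ratio are hypothetical placeholders chosen only to show the shape of the response.

```python
import math

# Base-excitation transmissibility of a single-degree-of-freedom system.
# Hypothetical illustration only: damping ratio and frequencies are
# placeholders, not measured LCLS-II coupler properties.
def transmissibility(freq_hz, natural_freq_hz, damping_ratio):
    """Ratio of component acceleration to base (vehicle) acceleration."""
    r = freq_hz / natural_freq_hz
    num = 1 + (2 * damping_ratio * r) ** 2
    den = (1 - r ** 2) ** 2 + (2 * damping_ratio * r) ** 2
    return math.sqrt(num / den)


# A lightly damped part: input well below resonance versus on resonance.
zeta = 0.02
for f in (5.0, 20.0):
    print(f"{f:4.0f} Hz input, 20 Hz natural frequency: "
          f"x{transmissibility(f, 20.0, zeta):.1f}")
```

For light damping the on-resonance amplification approaches 1/(2ζ), here about 25, so a change that shifts a part’s natural frequency into the band excited on the road can raise the loads on it by an order of magnitude. That is why transport analysis and instrumented shipments are worth the effort.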

Such challenges are inevitable when developing new facilities at the limits of known technology. In the end, the problem was successfully addressed by drawing on the diverse talents of the collaboration to brainstorm solutions: the available access ports allowed an elastomer wedge to be inserted to secure the vulnerable section. A key take-away here is the need for future projects to perform thorough transport analysis, verify the transport loads using mock-ups or dummy devices, and install adequate instrumentation to enable granular data analysis before long-distance transport of mission-critical components.

Upon completion of the assembly phase, all LCLS-II cryomodules were tested at either Fermilab or JLab, with one module tested at both locations to ensure reproducibility and consistency of results. For high Q0 performance in nitrogen-doped cavities, cooldown flow rates of at least 30 g/s of liquid helium were found to give the best results, helping to expel magnetic flux that could otherwise be trapped in the cavity.

Overall, cryomodule performance on the test stands exceeded specifications, with an average energy gain per cryomodule of 158 MV (versus a specification of 128 MV) and an average Q0 of 3 × 10¹⁰ (versus a specification of 2.7 × 10¹⁰). Looking ahead, attention is already shifting to real-world cryomodule performance in the SLAC tunnel, which will be measured for the first time in 2022.

Shine on: from LCLS-II to LCLS-II HE

As with many accelerator projects, LCLS-II is not an end-point in itself but rather an evolutionary step within a longer-term development roadmap. In fact, work is already under way on LCLS-II HE, a project that will increase the energy of the CW SRF linac from 4 to 8 GeV, enabling the photon energy range to be extended to at least 13 keV, and potentially up to 20 keV at 1 MHz repetition rates.

To ensure continuity of production for LCLS-II HE, 25 next-generation cryomodules are in the works, with even higher performance specifications than their LCLS-II counterparts, while upgrades to the source and beam transport are also being finalised.

In addition to LCLS-II HE, other SRF applications will benefit from the R&D and technological innovation that has come out of the LCLS-II construction programme. SRF technologies are constantly evolving and advancing the state of the art, whether in single-cavity cryogen-free systems, additional CW FEL upgrades to existing machines, or the building blocks that will underpin enormous new machines such as the proposed International Linear Collider.
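The link between the planned 4 to 8 GeV energy increase for LCLS-II HE and its extended photon-energy reach can be seen from the textbook on-axis resonance condition of a planar undulator, where γ = E_beam/(m_e c²) is the electron Lorentz factor, λ_u the undulator period and K the undulator strength parameter. This is a scaling argument only, assuming a fixed undulator; the 13 keV and 20 keV figures quoted for LCLS-II HE reflect the project’s actual undulator and beam parameters, not this formula.

$$
\lambda_{\mathrm{r}} = \frac{\lambda_u}{2\gamma^2}\left(1 + \frac{K^2}{2}\right),
\qquad
E_{\mathrm{ph}} = \frac{hc}{\lambda_{\mathrm{r}}} \propto \gamma^2 \quad \text{(fixed } \lambda_u,\ K\text{)}
$$

$$
\frac{E_{\mathrm{ph}}(8\ \mathrm{GeV})}{E_{\mathrm{ph}}(4\ \mathrm{GeV})} = \left(\frac{8\ \mathrm{GeV}}{4\ \mathrm{GeV}}\right)^2 = 4
$$

In practice the undulators and their K values are chosen alongside the beam energy, so the factor of four is only indicative.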

Transferable lessons

For all members of the collaboration working on the LCLS-II cryomodules, this challenging project holds many lessons. Most important is the nature of collaboration itself, building a strong team and using that strength to address problems in real-time as they arise. The mantra “we are all in this together” should be front-and-centre for any multi-institutional scientific endeavour – as it was in this case. With all parties making their best efforts, the goal should be to utilise the combined strengths of the collaboration to mitigate challenges. Solutions need to be thought of in a more global sense, since the best answer might mean another collaborator taking more onto their plate. Collaboration implies true partnership and a working model very different to a transactional customer–vendor relationship.


From a planning perspective, it’s vital to ensure that the initial project cost and schedule are consistent with the technical challenges and preparedness of the infrastructure. Prototypes and pre-series production runs reduce risk and cost in the long term and should be part of the plan, but there must be sufficient time after a prototype run for data analysis and changes to be made if it is to be useful. Time spent on detailed technical reviews is also time well spent. New designs of complex components need detailed oversight and review, and should be controlled by a team, rather than a single individual, so that sign-off on any detailed design change is made by an informed collective.

Planning ahead

Work planning and control is another essential element for success and safety. This idea needs to be built into the “manufacturing system”, including into the cost and schedule, and be part of each individual’s daily checklist. No one disagrees with this concept, but good intentions on their own will not suffice. As such, required safety documentation should be clear and unambiguous, and be reviewed by people with relevant expertise. Production data and documentation need to be collected, made easily available to the entire project team, and analysed regularly for trends, both positive and negative.

Supply chain, of course, is critical in any production environment – and LCLS-II is no exception. When possible, it is best to have parts procured, inspected, accepted and on-the-shelf before production begins, thereby eliminating possible workflow delays. Pre-stocking also allows adequate time to recycle and replace parts that do not meet project specifications. Also worth noting is that it’s often the smaller components – such as bellows, feedthroughs and copper-plated elements – that drive workflow slowdowns. A key insight from LCLS-II is to place purchase orders early, stay on top of vendor deliveries, and perform parts inspections as soon as possible after delivery. Projects also benefit from having clearly articulated pass/fail criteria and established procedures for handling non-conformance – all of which avoids having to make critical go/no-go acceptance decisions under schedule pressure.

Finally, it’s worth highlighting the broader impact – both personal and professional – on individual team members participating in a big-science collaboration like LCLS-II. At the end of the build, what remained after designs were completed, problems solved, production rates met, and cryomodules delivered and installed, were the friendships that had been nurtured over several years. The collaboration amongst partners, both formal and informal, who truly cared about the project’s success and had each other’s backs when issues arose: these are the things that solidified the mutual respect and the camaraderie and, in the end, made LCLS-II such a rewarding project.