How would you describe DESY’s scientific culture?

DESY is a large laboratory with just over 3000 employees. It was founded 65 years ago as an accelerator lab, and at its heart it remains one, though what we do with the accelerators has evolved over time. It is fully funded by Germany.

In particle physics, DESY has performed many important studies, for example, to understand the charm quark following the November Revolution of 1974. The gluon was discovered here in the late 1970s. In the 1980s, DESY ran the first experiments to study B mesons, laying the groundwork for core programmes such as LHCb at CERN and the Belle II experiment in Japan. In the 1990s, the HERA accelerator focused on probing the structure of the proton, which, incidentally, was the subject of my PhD. Those results have been crucial for precision studies of the Higgs boson.

Over time, DESY has become much more than an accelerator and particle-physics lab. Even in the early days, it used what is called synchrotron radiation, the light emitted when electrons change direction in the accelerator. This light is incredibly useful for studying matter in detail. Today, our accelerators are used primarily for this purpose: they generate X-rays that image tiny structures, for example viruses.

DESY’s culture is shaped by its very engaged and loyal workforce. People often call themselves “DESYians” and strongly identify with the laboratory. Fundamentally, DESY is an engineering lab: you need an amazing engineering workforce to construct and operate these accelerators.

Which of DESY’s scientific achievements are you most proud of?

The discovery of the gluon is, of course, an incredible achievement, but actually I would say that DESY’s greatest accomplishment has been building so many cutting-edge accelerators: delivering them on time, within budget, and getting them to work as intended.

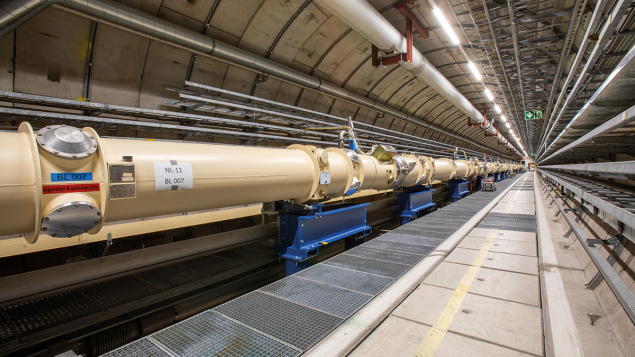

Take the PETRA accelerator, for example – an entirely new concept when it was first proposed in the 1970s. The decision to build it was made in 1975; construction was completed by 1978; and by 1979 the gluon was discovered. So in just four years, we went from approving a 2.3 km accelerator to making a fundamental discovery, something that is absolutely crucial to our understanding of the universe. That’s something I’m extremely proud of.

I’m also very proud of the European X-ray Free-Electron Laser (European XFEL), completed in 2017 and now fully operational. Before that, in 2005, we launched FLASH, the world’s first soft X-ray free-electron laser, and in the 1990s, of course, HERA, another pioneering machine. Again and again, DESY has succeeded in building large, novel and highly valuable accelerators that have pushed the boundaries of science.

What can we look forward to during your time as chair?

We have 10 major projects scheduled in the next three years alone! PETRA III will run until the end of 2029, and our goal is to move forward with PETRA IV, which will be the world’s most advanced X-ray source. Securing funding for it, and then building it, is one of my main objectives. In Germany, there’s a roadmap process, and by July this year we’ll know whether an independent committee has judged PETRA IV to be one of the highest-priority science projects in the country. If all goes well, we aim to begin operating PETRA IV in 2032.

Our FLASH soft X-ray facility is also being upgraded, and we plan to relaunch it in early September. The improved beam quality will allow us to serve more users and increase the facility’s impact.

In parallel, we’re contributing significantly to the HL-LHC upgrade. More than 100 people at DESY are building trackers for the ATLAS and CMS detectors, as well as parts of the CMS forward calorimeter. That work needs to be completed by 2028.

Astroparticle physics is another growing area for us. Over the next three years, we’re completing telescopes for the Cherenkov Telescope Array and building detectors for the IceCube upgrade. For the first time, DESY is also constructing a space camera, for the UltraSat satellite, which is expected to launch within the next three years.

At the Hamburg site, DESY is diving further into axion research. We’re currently running the ALPS II experiment, which has a fascinating “light shining through a wall” setup. Normally, of course, light can’t pass through something like a thick concrete wall. But in ALPS II, light inside a magnet can convert into an axion, a hypothetical particle and dark-matter candidate that can travel through matter almost unhindered. On the other side of the wall, another magnet converts the axion back into light. So it appears as if the light has passed through the wall, when in fact it was briefly an axion. We started the experiment last year. As with most experiments, we began carefully, because not everything works at once, but two more major upgrades are planned in the next two years, and that’s when we expect ALPS II to reach its full scientific potential.

We’re also developing additional axion experiments. One of them, in collaboration with CERN, is called BabyIAXO. It’s designed to look for axions from the Sun, where you have both light and magnetic fields. We hope to start construction before the end of the decade.

Finally, DESY also has a strong and diverse theory group. Their work spans many areas, and it’s exciting to see what ideas will emerge from them over the coming years.

How does DESY collaborate with industry to deliver benefits to society?

We already collaborate quite a lot with industry. The beamlines at PETRA, in particular, are of strong interest. For example, BioNTech conducted some of its research for the COVID-19 vaccine here. We also have a close relationship with the Fraunhofer Society in Germany, which focuses on translating basic research into industrial applications; they famously developed the MP3 format, for instance. Our collaboration with them is quite structured, and there have also been several spin-offs and start-ups based on technology developed at DESY.

Looking ahead, we want to significantly strengthen our ties with industry through PETRA IV. With much higher data rates and improved beam quality, it will be far easier to obtain results quickly. Our goal is for 10% of PETRA IV’s capacity to be dedicated to industrial use. Furthermore, we are developing a strong innovation ecosystem, called Science City Hamburg Bahrenfeld, on the campus and in the surrounding area, with DESY at its centre.

What’s your position on “dual use” research, which could have military applications?

The discussion around dual-use research is complicated. Personally, I find the term “dual use” a bit odd – almost any high-tech equipment can be used for both civilian and military purposes. Take the transistor, for example: it has countless applications, including military ones, but it wasn’t invented for that reason. At DESY, we’re currently having an internal discussion about whether to engage in projects that relate to defence. This is part of an ongoing process where we’re trying to define under what conditions, if any, DESY would take on targeted projects related to defence. There is a range of views within DESY, and I think that diversity of opinion is valuable. Some people are firmly against this idea, and I respect that. Honestly, it’s probably how I would have felt 10 or 20 years ago. But others believe DESY should play a role. Personally, I’m open to it.

If our expertise can help people defend themselves and our freedom in Europe, that’s something worth considering. Of course, I would love to live in a world without weapons, where no one attacks anyone. But if I were attacked, I’d want to be able to defend myself. I prefer to work on shields, not swords, like in Asterix and Obelix, but, of course, it’s never that simple. That’s why we’re taking time with this. It’s a complex and multifaceted issue, and we’re engaging with experts from peace and security research, as well as the social sciences, to help us understand all dimensions. I’ve already learned far more about this than I ever expected to. We hope to come to a decision on this later this year.

You are DESY’s first female chair. What barriers do you think still exist for women in physics, and how can institutions like DESY address them?

There are two main barriers, I think. The first is that society at large still discourages girls from going into maths and science.

Certainly in Germany, if you stopped a hundred people on the street, I think most of them would still say that girls aren’t naturally good at maths and science. Of course, there are always exceptions: you do find great teachers and supportive parents who go against this narrative. I wouldn’t be here today if I hadn’t received that kind of encouragement.

That’s why it’s so important to actively counter those messages. Girls need encouragement from an early age; they need to be strengthened and supported. On the encouragement side, DESY is quite active. We run many outreach activities for schoolchildren, including a dedicated school lab. Every year, more than 13,000 school pupils visit our campus. We also take part in Germany’s “Zukunftstag”, where girls are encouraged to explore careers traditionally considered male-dominated, and boys do the same for fields seen as female-dominated.


The second challenge comes later, at a different career stage, and it has to do with family responsibilities. In many partnerships, family work still falls more heavily on women than on men. That imbalance can hold women back, particularly during the postdoc years, which tend to coincide with the time when many people start families. It’s a tough period, because that is also when you’re trying to advance your career.

Workplaces like DESY can play a role in making this easier. We offer good childcare options, flexible working-from-home arrangements and even shared leadership positions, all of which make it more manageable to balance work and family life. We also have mentoring programmes. One example is dynaMENT, in which female PhD students and postdocs are mentored by more senior professionals. I’ve taken part in that myself, and I think it’s incredibly valuable.

Do you have any advice for early-career women physicists?

If I could offer one piece of advice, it would be to build a strong professional network. That’s something I’ve found truly valuable. I’m fortunate to have a fantastic international network of both male and female colleagues, including many women in leadership positions. It’s so important to have people you can talk to, who understand your challenges and who might be in similar situations. So if you’re a student, I’d really recommend investing in your network.

What are your personal reflections on the next-generation colliders?

Our generation has a responsibility to understand the electroweak scale and the Higgs boson. These questions have been around for almost 90 years, since 1935 when Hideki Yukawa explored the idea that forces might be mediated by the exchange of massive particles. While we’ve made progress, a true understanding is still out of reach. That’s what the next generation of machines is aiming to tackle.

The problem, of course, is cost. All the proposed solutions are expensive, and it is very challenging to secure investments for such large-scale projects, even though the return on investment from big science is typically excellent: these projects drive innovation, build high-tech capability and create a highly skilled workforce.


From a scientific point of view, the FCC is the most comprehensive option. As a Higgs factory, it offers a broad and strong programme to analyse the Higgs and electroweak gauge bosons. But who knows if we’ll be able to afford it? And it’s not just about money. The timeline and the risks also matter. The FCC feasibility report was just published and is still under review by an expert committee. I’d rather not comment further until I’ve seen the full information. I’m part of the European Strategy Group and we’ll publish a new report by the end of the year. Until then, I want to understand all the details before forming an opinion.

It’s good to have other options too. The muon collider is not yet as technically ready as the FCC or a linear collider, but it’s an exciting technology and could be the machine after next. Another option could be based on plasma-wakefield acceleration, which we’re working on very actively at DESY. It could enable us to build high-energy colliders on a much smaller scale. This is something we’ll need, as we can’t keep building ever-larger machines forever. Investing in accelerator R&D to develop these next-generation technologies is crucial.

Still, I really hope there will be an intermediate machine in the near future, a Higgs factory that lets us properly explore the Higgs boson. There are still many mysteries there. I like to compare it to an egg: you have to crack it open to see what’s inside. And that’s what we need to do with the Higgs.

One thing that is becoming clearer to me is the growing importance of Europe. With the current uncertainties in the US, which are already affecting health and climate research, we can’t assume fundamental research will remain unaffected. That’s why Europe’s role is more vital than ever.

I think we need to build more collaborations between European labs. Sharing expertise, especially through staff exchanges, could be particularly valuable in engineering, where we need a huge number of highly skilled professionals to deliver billion-euro projects. We’ve got one coming up ourselves, and the technical expertise for that will be critical.

I believe science has a key role to play in strengthening Europe, not just culturally, but economically too. It’s an area where we can and should come together.