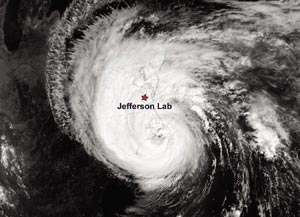

Hurricane Isabel was at category five – the most violent on the Saffir-Simpson scale of hurricane strength – when it began threatening the central Atlantic seaboard of the US. Over the course of several days, precautions against the extreme weather conditions were taken across the Jefferson Lab site in south-east Virginia. On 18 September 2003, when Isabel struck North Carolina’s Outer Banks and moved northward, directly across the region around the laboratory, the storm was still quite destructive, albeit considerably reduced in strength. The flood surge and trees felled by wind substantially damaged or even devastated buildings and homes, including many belonging to Jefferson Lab staff members.

For the laboratory itself, Isabel delivered an unplanned and severe challenge in another form: a power outage that lasted nearly three-and-a-half days, and which severely tested the robustness of Jefferson Lab’s two superconducting machines, the Continuous Electron Beam Accelerator Facility (CEBAF) and the superconducting radiofrequency “driver” accelerator of the laboratory’s free-electron laser. Robustness matters greatly for science at a time when microwave superconducting linear accelerators (linacs) are not only being considered, but in some cases already being built for projects such as neutron sources, rare-isotope accelerators, innovative light sources and TeV-scale electron-positron linear colliders.

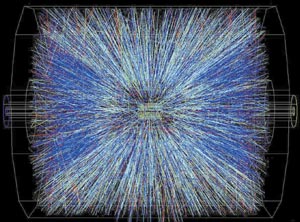

Hurricane Isabel interrupted a several-week-long maintenance shutdown of CEBAF, which serves nuclear and particle physics and represents the world’s pioneering large-scale implementation of superconducting radiofrequency (SRF) technology. The racetrack-shaped machine is actually a pair of 500-600 MeV SRF linacs interconnected by recirculation arc beamlines. CEBAF delivers simultaneous beams at up to 6 GeV to three experimental halls. An imminent upgrade will double the energy to 12 GeV and add an extra hall for “quark confinement” studies.
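As a rough consistency check on the figures above, the energy each linac must supply can be estimated from the final beam energy. This is a minimal sketch, not the laboratory's energy budget: it assumes the beam makes five recirculation passes through both linacs (a figure not stated in this article) and ignores the injector energy. The function name and parameters are illustrative only.

```python
def energy_gain_per_linac_mev(final_energy_mev: float,
                              passes: int = 5,
                              linacs: int = 2) -> float:
    """Energy each linac must add per pass, ignoring the injector energy.

    `passes=5` is an assumption made for this sketch; the article itself
    only states that the machine is a pair of recirculated linacs.
    """
    if passes <= 0 or linacs <= 0:
        raise ValueError("passes and linacs must be positive")
    return final_energy_mev / (passes * linacs)


# Final beam energies mentioned in the article, in MeV.
for final_energy in (5000, 5500, 6000):
    gain = energy_gain_per_linac_mev(final_energy)
    print(f"{final_energy} MeV beam -> about {gain:.0f} MeV per linac")
```

Under these assumptions, final energies of 5 to 6 GeV correspond to 500 to 600 MeV per linac, which matches the range quoted for the two SRF linacs.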

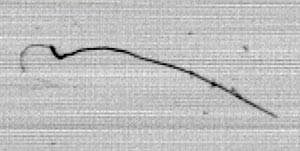

On a smaller scale, Jefferson Lab’s original kilowatt-scale infrared free-electron laser (FEL) is “driven” by a high-current cousin of CEBAF, a 70 MeV SRF linac with a high-current injector. The FEL serves multidisciplinary science and technology as the world’s highest-average-power source of tunable coherent infrared light. An upgrade to 10 kW is in commissioning – as it was when Isabel began threatening.

The power outage

The accelerator site lost electrical power at approximately 11 a.m. on 18 September during the hurricane, and power was not restored until about 8:30 p.m. on 21 September. About an hour later, after the Central Helium Liquefier (CHL) control system was restored, it was found that all cryomodule temperatures were approaching ambient values. Without power, the CHL could not keep the CEBAF linacs or the FEL’s driver linac cooled to the 2 K temperature required for superconducting operation. The liquid helium in both systems warmed up, boiled off, and was vented harmlessly but expensively to the atmosphere.
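The length of the outage follows directly from the two times quoted above. The short sketch below works it out; both times are approximate in the article, so the result is only as precise as they are, and the variable names are illustrative.

```python
from datetime import datetime

# Times as quoted in the text (both approximate, local time).
power_lost = datetime(2003, 9, 18, 11, 0)
power_restored = datetime(2003, 9, 21, 20, 30)

outage = power_restored - power_lost
outage_hours = outage.total_seconds() / 3600
outage_days = outage_hours / 24

print(f"Outage: {outage_hours:.1f} hours, or {outage_days:.2f} days")
```

The result, about 81.5 hours, is consistent with the "nearly three-and-a-half days" quoted earlier.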

Some $200,000 (~€160,000) in helium was lost – two-thirds of the overall inventory and an amount equivalent to a year’s worth of losses from routine operation (comparable in mass to more than 70,000 litres of 4.22 K liquid helium). The episode was also costly to users’ experiments and experimental schedules, to other aspects of CEBAF’s status of operational readiness, and to the FEL upgrade commissioning. One consequence, in parallel with recovery activities, has been to revisit the construction-era determination that installing CHL backup power would not be cost-effective; now various options for reducing vulnerability to power outages are being considered.

For the six weeks following the incident, key activities included the procurement of replacement helium, pumping out of all the linac vacuum elements, cooling down the entire CEBAF accelerator complex, RF conditioning of the linacs and the commissioning of CEBAF’s electron beam. The experimental nuclear/particle-physics programme was resumed on 2 December, a delay of six weeks, and commissioning the upgrade of the FEL was restarted.

The recovery

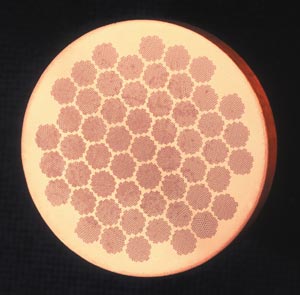

In anticipation of the approaching storm, the laboratory had stored one-third of its liquid-helium inventory, thus enabling the “cooldown” of the CEBAF linacs to begin on 8 October. The plan was to cool eight or nine cryomodules per day, starting with the first three cryomodules. All cooldown rates were within the guidelines (150 K per hour) to avoid excessive thermal stresses and degradation of the cavity quality factor (“Q disease”). While no major mechanical-vacuum-related issues caused concern down to 4 K, cooldown to 2 K (the temperature at which liquid helium is superfluid) was approached gingerly indeed, being a further test of vacuum integrity.

Nearly 38,000 litres of 4 K liquid helium arrived at the laboratory early on 16 October, and the cryomodules were topped off at a rate of about 2400 litres per hour. The tasks to follow were cooldown to 2 K, further filling and stabilization, re-establishing a very low-gradient tune of all the cavities, operation of the cavities to determine their waveguide vacuum response, and RF conditioning of the cavities. Waveguide vacuum had been a challenge during CEBAF’s early operational years in the 1990s, but it had cleared up with use.
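To give a feel for the time scale of the refill, the sketch below estimates how long it would take to transfer the delivered helium at the quoted rate. It assumes, purely for illustration, that the whole delivery was transferred at a constant 2400 litres per hour; the article does not say how much of the delivery went into the cryomodules, so the figure is an upper bound rather than a record of what happened.

```python
def transfer_hours(volume_litres: float, rate_litres_per_hour: float) -> float:
    """Time needed to move a volume of liquid at a constant transfer rate."""
    if rate_litres_per_hour <= 0:
        raise ValueError("transfer rate must be positive")
    return volume_litres / rate_litres_per_hour


delivered_litres = 38_000   # "nearly 38,000 litres" in the text
top_off_rate = 2_400        # "about 2400 litres per hour" in the text

hours = transfer_hours(delivered_litres, top_off_rate)
print(f"Transfer time at a constant rate: about {hours:.1f} hours")
```

On these assumptions the transfer takes roughly 16 hours, well under a day.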

On 20 October the cryogenic group, using manual control, pulled CEBAF’s liquid-helium temperature down to below the lambda point, the transition to the superfluid state. Some 17 hours later, on 21 October, more than 99% of the CEBAF and FEL accelerator modules had been cooled to 2.09 K, the normal operating temperature. The End Station Refrigerator was then powered up to start delivering liquid helium for experiment preparation – the cooling of magnets and cryogenic target work.

As the liquid helium crossed the lambda point, one ailing cryomodule in north linac zone 5 was monitored carefully. The pressure in the waveguide feeds to the first and second of its four cavity pairs went up at a rapid rate; within hours the ion pump had saturated in the millitorr range and it tripped soon after. The four cavities in question were assessed as inoperable due to vacuum considerations; they were the only casualties of the storm. Out of CEBAF’s total of 300, they represent at most 1% of the machine’s overall energy reach. RF conditioning has since revealed no other low-level leaks. After 36 hours of thermal stabilization at 2 K, the tasks at hand consisted of attaining low-gradient (2 MV/m) RF operation, followed by the assessment of vacuum stability with high-power RF operation.

The RF recovery of CEBAF and the FEL, following the complete cryogenic recovery, eliminated two major uncertainties: resonant frequencies were close enough to 1497 MHz that the cavities could be brought up without difficulty, and gradients in excess of 5 MV/m were sustained without extensive vacuum faults. By 7 November the CEBAF linacs had run in a stable fashion with RF but without electron beam for more than a week – an opportunity unlike any since CEBAF’s commissioning a decade ago. The newly installed high-performance cryomodule in south linac zone 21 had operated in a quiet mode at an equivalent RF energy gain of 60 MeV, considerably higher than the energy gain at which it operated (approximately 40 MeV) before the Isabel shutdown.

The coming months

The observations and data processing on CEBAF’s cavities to date give a more than 95% confidence level in the energy estimation of the linacs, promising a 5 GeV physics run for the next six months followed by possible runs at 5.5 GeV and above later in 2004. The linac RF appears at least comparable to its pre-Isabel capability, notwithstanding the four nonfunctional cavities in north linac zone 5.

A single global phase adjustment allowed the electron beam to go through the entire linac complex, indicating that the RF phases were reproducible after the temperature cycle. A detailed analysis of cavity and waveguide vacuum response during cooldown is being performed to gain an understanding of the actual vacuum conditions and the molecular species contributing to them. This will help us to understand the vacuum trips better and to choose the trip settings properly, eliminating unnecessary trips caused by the software’s lack of knowledge of the actual vacuum conditions. The warm window temperatures of the cavities are being monitored carefully and simultaneously with residual gas analyses to provide input for a knowledge-based control system for the RF vacuum trips, which should lead to improved linac performance.

Thanks to the dedicated work of the physicists, engineers and operating crew (including throughout the traditional four-day Thanksgiving weekend), the implementation of all the above has brought CEBAF back to operational readiness. The machine is now rising to the significant challenge, never attempted before, of developing electron beams with very stringent characteristics for simultaneous use in all three halls.

A continuous-wave parity-quality beam of 40 microamperes is required in Hall C for the G0 experiment, with very small relative “helicity correlations” in beam properties (e.g. less than 20 nanometres of movement in the beam spot when the beam helicity is flipped, averaged over the entire experimental run time). In Hall A a 100 microampere beam is needed for the hypernuclear experiment, with the stringent requirement of a relative energy spread of less than 25 in one million. Finally, a low-current but high-quality beam is destined for Hall B. The first beam for the physics experiment in Hall C, the G0 engineering run, was delivered on schedule on 2 December. The experimental programme in Hall B has also begun, while Hall A is being prepared for the start of the hypernuclear experiment in January.

The recovery of the FEL followed on the heels of that of CEBAF, and as I write today the high-power commissioning has fully resumed, with lasing achieved at nearly the kilowatt level and the laser power level continuing to rise steadily.

Acknowledgments

The achievements reported here were made possible by the dedication and hard work of the Jefferson Lab staff.