by Gordon Fraser, Cambridge University Press, ISBN 0521893097, £12.95 ($18).

Former CERN Courier editor Gordon Fraser has added brand-new material for the paperback edition of his fast-paced account of the story of antimatter.

edited by Beate Block and Maggie DeWolf, Mountainair press, ISBN 092952618X, $25 (€ 29).

It is somewhat unusual to review a cookbook in CERN Courier, but then this is a somewhat unusual cookbook. A collection of recipes assembled by the wife of a physicist at the Aspen Center for Physics, the book is as much a glimpse into the mindset of physics as it is a book about cooking.

The introductory pages deal with Aspen, but you’d have had to have been there to get the most out of them. The recipes begin with sections devoted to extraordinary chefs. There you’ll learn how to make risk-free mayonnaise, and why it’s best to whip egg whites in copper bowls. You’ll also learn some of the culinary secrets of Fermilab’s famed Chez Leon. One of the extraordinary chefs is Tita Alvarez Johnson, who founded the restaurant and gives it a memorable atmosphere to this day.

The rest of the book is divided into chapters sorted by region. Contributors are often mentioned by name, occasionally along with tasters. This makes for interesting, if slightly voyeuristic, reading. Here physicists will find the recipes of colleagues, their wives and even mothers-in-law. The presence of fondue chinoise betrays a CERN influence, and at least one Aspen visitor must have been to a La Thuile meeting, since the Aosta valley speciality “la Grolla” makes an appearance. In these chapters, you can even learn that at least one delegate to CERN Council has a soft spot for chocolate (a Belgian, of course).

The final section, “Drinks and amusements”, is definitely by physicists for physicists. There you’ll find a learned treatise on “Interparticle forces in multiphase colloid systems” – or how to resurrect coagulated sauce béarnaise. The thermodynamics of the perfect Martini are also covered here.

A chef once told me that to review a cookbook properly, you have to make all of the recipes. After spotting mysterious ingredients, such as powder steam and others still more exotic, this reviewer shied away from that approach and chose instead to dip into the book simply for the pleasure of it. Making the recipes will follow, starting with those from Chez Leon. Although the book may tell you how to make Tita’s recipes, unfortunately it doesn’t give her recipe for creating a memorable atmosphere – that you will have to discover for yourself.

All proceeds from Chaos in the Kitchen = Symmetry at the Table go to the Aspen Center for Physics. Ordering information is available from The Aspen Center for Physics, 700 West Gillespie St, Aspen, Colorado 81611, USA, or order by email.

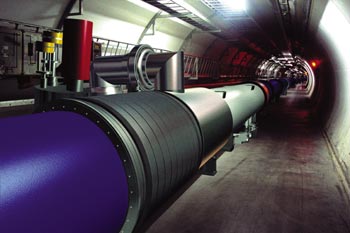

At the March meetings of CERN’s governing body, Council, the laboratory’s management presented preliminary ideas for absorbing the cost overrun for the Large Hadron Collider (LHC) project identified last year. These focus more of the laboratory’s resources on the LHC, with compensatory reductions being made in other scientific programmes.

Under the management’s proposals, the running time for CERN’s existing accelerators could be reduced by up to 30% each year until the LHC starts up. The largest accelerator, the Super Proton Synchrotron, which provides test beams for the LHC experiments and supports the current high-energy programme, would not run at all in 2005. Other potential areas for savings have been identified in long-range research and development, LHC computing, the fellows and associates programme (CERN fellowships are fixed-term appointments for young people; associateships allow sabbatical periods to be spent at CERN later on), and general overheads. Savings could also be made in services contracted in to the laboratory. The total to be redirected to the LHC is expected to reach SwFr 500 million (€ 341 million).

The plan envisages LHC start-up in 2007 and full payment for the new facility by 2010 with no budget increase. CERN’s director-general, Luciano Maiani, nevertheless urged Council to consider an increase in the laboratory’s budget over the medium term. This would allow the LHC to be financed by 2009 and would enable limited research and development to continue, standing the laboratory in good stead for the longer term.

CERN’s staff association, along with French and Swiss unions representing employees of companies working on the CERN site, also made their opinions known by presenting letters to Council. The staff association argued that more resources are needed to complete the LHC. The unions expressed their concerns over the impact of cutbacks at CERN on local employment.

A decision on the management’s proposals will be taken at the next meeting of Council in June. By then, the report of an external review committee set up in November (see CERN reacts to increased LHC costs) will be ready, and management proposals will also be complete. In the meantime, Council agreed to release SwFr 20 million from the 5% of the laboratory’s 2002 budget initially held back pending resolution of LHC funding issues. In a separate initiative, the Swiss delegation said that Switzerland would advance SwFr 90 million to CERN over the next three years, to be deducted from later contributions.

More than a year after being asked to study the opportunities and priorities for US nuclear physics research in the coming decade, the Department of Energy/National Science Foundation Nuclear Science Advisory Committee (NSAC) has recently submitted its latest long-range plan for the field. This is the fifth in an influential series of reports that NSAC has prepared on a regular basis since 1979. The US nuclear physics community is a diverse one which has its roots in nuclear structure studies, but which has branched out in recent years to address questions at the forefront of a number of related areas including nucleon structure, nuclear astrophysics, the nature of hot nuclear matter and searches for physics beyond the Standard Model. As part of the planning process, town meetings sponsored by the Division of Nuclear Physics of the American Physical Society for major subfields have provided a forum for presenting new ideas. A long-range plan working group then drafted overall priorities, taking into account current developments in nuclear physics on the world scene.

Recent investments in facilities such as the Relativistic Heavy-Ion Collider (RHIC) at Brookhaven, CEBAF at Jefferson Laboratory and the newly upgraded National Superconducting Cyclotron Laboratory at Michigan State University have positioned the field well for the future. Because of this, the plan concludes that “the highest priority of the nuclear science community is to exploit the extraordinary opportunities for scientific discoveries made possible by these investments.” Unfortunately, as with many branches of the physical sciences, funding for nuclear physics in the US has not kept pace with inflation in recent years. The plan’s first recommendation therefore calls for a 15% increase in base funding, which would allow more effective operation of accelerator facilities, increase support for university researchers, and revitalize the nuclear theory programme.

Looking further into the future, the plan recommends investment in areas where capabilities in the US can be dramatically improved, providing significant new capabilities on the international scene. The highest priority for major new construction is given to the Rare Isotope Accelerator – RIA (see Climbing out of the nuclear valley). This will provide higher intensities of radioactive beams than any present or planned facility worldwide, and will be used primarily for nuclear structure and astrophysics studies, with opportunities also for experiments on fundamental symmetries and in a number of applied areas.

Next, the plan recommends the construction of the world’s deepest underground science laboratory, noting that: “This laboratory will provide a compelling opportunity for nuclear scientists to explore fundamental questions in neutrino physics and astrophysics.” The plan also recommends the upgrade of CEBAF to 12 GeV by adding further high-field superconducting cavities (see How CERN became popular with US physicists).

Finally, the plan endorses a number of smaller initiatives, including R&D towards an electron-ion collider that could be integrated into the RHIC facility. The scientific case for such a facility is currently under active consideration within the nuclear physics community.

In the late 1970s, CERN made the bold decision to convert its new Super Proton Synchrotron (SPS), only just getting into its stride as a fixed-target machine, into a proton-antiproton collider. The fast-tracked project began operation in 1981 and soon led to CERN’s first Nobel prize. It was a new watershed for European physics.

The innovative idea to convert a major proton synchrotron in this way came from David Cline, Peter McIntyre and Carlo Rubbia, then all working in the US. It had been initially proposed to Fermilab, but the US laboratory committed itself instead to increasing the beam energy of its existing synchrotron by adding superconducting magnets. Converting the Fermilab machine into a proton-antiproton collider became a longer-term goal. With such a project scheduled in the US, there was no immediate migration to CERN’s fast-tracked version.

For the CERN collider, the lessons of the Intersecting Storage Rings (ISR) had been learned. “Keyhole” physics was not the way to go. Carlo Rubbia’s 2000 tonne UA1 experiment for the new collider completely surrounded the proton-antiproton collision point, and its sheer size was impressive by the standards of the day. From the US, it had on board a Riverside contingent (a tradition having been set at the ISR) and David Cline, then at Wisconsin. As UA1 gained momentum, more physicists came from Rubbia’s base at Harvard, from MIT, and from Wisconsin.

The CERN proton-antiproton collider was built to house more than one experiment, and there were several contenders. An unsuccessful bid was made by Sam Ting of MIT, who at that time was the leader of the Mark-J experiment at the PETRA electron-positron collider at the DESY laboratory, Hamburg. UA2, the second major experiment approved for the proton-antiproton collider, was essentially European. Additional high-energy antiproton experiments at CERN included a gas-jet target, attracting groups from Michigan and Rockefeller (including ISR pioneer Rod Cool), and a study of jet structure by a dedicated UCLA group.

For low-energy antiprotons, CERN had the LEAR ring, and several US groups contributed to experiments here. The tradition continues with the AD antiproton decelerator, notably with Gerry Gabrielse’s Harvard group making precision measurements of antiproton parameters.

Even while the proton-antiproton collider was getting into its stride in the early 1980s, CERN began a push for its next large machine, the 27 km LEP electron-positron collider. Such a large machine was again unique, and therefore an attraction for US physicists, who proposed the LOGIC detector. There were four slots for experiments at LEP and more than four proposals. LOGIC did not make it.

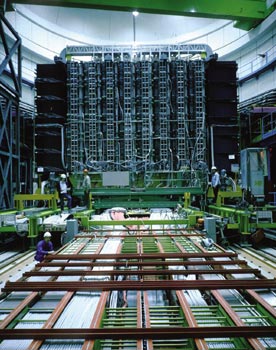

Ting, having lost out at the proton-antiproton collider, was determined to get a front seat at LEP. He, like Lederman, understood the importance of studying lepton pairs, and had got together a major international effort with scientists based in the US, China and Europe for a detector to analyse muons using a huge magnetic spectrometer. The proposal was initially labelled “L3” as it was the third letter of intent to be tabled for LEP, and the collaboration hoped that a more positive title would emerge. The experiment was approved, but no better name appeared. It went on to become a major US effort, with groups from Alabama, Boston, Caltech, Carnegie-Mellon, Harvard, Johns Hopkins, Los Alamos, Michigan, Northeastern, Oak Ridge, Princeton, Purdue and UC San Diego being introduced to research at CERN.

US researchers also collaborated in the other three LEP experiments. ALEPH, initially led by Jack Steinberger, included groups from Florida State, UC Santa Cruz, Washington/Seattle, and from Wisconsin, under Sau-Lan Wu, who had moved to LEP after previous research with the TASSO experiment at PETRA. OPAL included groups from Duke, Indiana, Maryland, Oregon and UC Riverside. DELPHI had participation from Ames, Iowa.

The SPS fixed-target programme had initially had little attraction for US physicists, as Fermilab’s machine had higher energy and was commissioned earlier. However, a new development came in the 1980s when the SPS became the scene of experiments using high-energy beams of nuclei (although the initial US push had been for an alternative heavy-ion scenario). This was a natural extension of work which had been pioneered at the Berkeley Bevalac, and the Lawrence Berkeley Laboratory made vital contributions to the ion source and nuclear beam infrastructure for these experiments.

by D Allan Bromley, Springer-Verlag New York, ISBN 0387952470, $59.95.

Senior statesman of US physics, Allan Bromley, has chosen the centenary of the American Physical Society to offer an illustrated review of the last 100 years of physics. At various times in his career, Professor Bromley has been president of the American Physical Society, the American Association for the Advancement of Science and the International Union of Pure and Applied Physics. He was also founder of Yale’s nuclear structure laboratory, and is Sterling professor of the sciences and dean of engineering at Yale. All of these achievements make him very well qualified to present a successful and accessible overview of 20th-century physics.

Pictures are the stars of this book, bringing the highly readable narrative to life. The reader’s eyes are spoiled by images such as that of J Robert Oppenheimer and Edward Teller shaking hands, despite their well publicized differences. The great advances in accelerators are brought home by the picture of the original Cockcroft-Walton machine, and the hilarious photo of Isidor Rabi cooking hot dogs on the coil head of the Columbia cyclotron demonstrates not only that cooling technology has improved over the years, but that physicists can have a delicious sense of humour.

What A Century of Physics necessarily lacks in depth, it more than makes up for in breadth. After covering events from the early part of the century, such as the Annus Mirabilis of 1932 and the Manhattan project, Bromley moves on to discuss post-war physics. He covers subjects as diverse as superconductivity and the evolution of computers, and he explains the Standard Model and covers the research activity of laboratories all around the world.

At the end of the book, Bromley draws connections between particle physics research and cosmology. In the book’s final breath, he goes back to the start of it all. Ten unanswered questions conclude his report, opening the door to a new century of physics.

by K A Milton, World Scientific, ISBN 9810243979, $87/£58.

In 1948 Hendrik Casimir showed that, according to quantum electrodynamics (QED), two parallel conducting plates should exert a force on each other – an effect that now bears his name. This force, according to one of several possible interpretations, is a direct result of the existence of zero-point vacuum fluctuations of the electromagnetic field. In simple terms, as the plates are placed closer and closer together, more and more modes of the electromagnetic field are excluded, with a corresponding reduction in the (admittedly infinite) amount of zero-point energy between them and an associated (and, amazingly, finite) attractive force. Similar effects occur whenever boundaries are placed in the vacuum, and all are collectively considered to be manifestations of the Casimir effect.
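To give a feel for the size of the effect, the short sketch below evaluates the standard textbook result for ideal, perfectly conducting parallel plates at zero temperature, P = π²ħc/(240 a⁴), where a is the plate separation. The function name and the sample separations are illustrative choices, not taken from Milton’s book, and real plates (finite conductivity, roughness, temperature) would deviate from this idealization.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J s
C = 299792458.0         # speed of light, m/s


def casimir_pressure(separation_m: float) -> float:
    """Magnitude of the attractive pressure (Pa) between ideal parallel plates.

    Assumes perfectly conducting plates at zero temperature:
    P = pi^2 * hbar * c / (240 * a^4).
    """
    return math.pi ** 2 * HBAR * C / (240.0 * separation_m ** 4)


if __name__ == "__main__":
    # Illustrative separations: one micron and a tenth of a micron.
    for a in (1e-6, 1e-7):
        print(f"a = {a:.0e} m -> P = {casimir_pressure(a):.3g} Pa")
```

At a separation of one micron the pressure is only about a millipascal, but the steep inverse fourth-power dependence means that it grows ten-thousandfold for every tenfold reduction in the gap.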

Milton’s book reviews this remarkable phenomenon from a theoretical viewpoint. Starting with parallel conducting plates, he goes on to extensions to different geometries; partially conductive and dielectric materials in place of conductors; the relation to van der Waals forces; dimensions more and less than the usual 3+1; contributions due to fermion fields; finite temperature effects; radiative corrections; and implications for hadronic physics and even cosmology.

With all these calculations and applications one might imagine that the Casimir effect would be well understood, but hardly anything could be further from the truth. For example, Casimir forces can be repulsive; they tend to expand a spherical shell, which is by no means intuitively obvious and in fact is a bit of a pity. Anticipating a force of the opposite sign, Casimir had hoped that they might supply the Poincaré stresses needed to stabilize a model of an electron as a tiny spherical shell of charge, and even lead to a calculation of the numerical value of the fine structure constant.

If the sign of the Casimir effect for a spherical shell is somewhat surprising, what happens in other cases can be even stranger. Change a spherical shell to a cubical box and it still tries to expand, but make it a long thin rectangular box and it tends to collapse. Go to an even number of space dimensions and the force on a hyperspherical shell becomes infinite. It’s all wonderfully bewildering.

Perhaps the most interesting recently recognized manifestation of the Casimir effect – if indeed that’s what it is – is the phenomenon of sonoluminescence, in which an acoustically tickled bubble of air in water releases visible light in 100 picosecond bursts. While the jury is still out on what exactly is going on, there are calculations suggesting that this could be due to a dynamical version of the Casimir effect in which vibrations of the bubble excite the QED vacuum. Here, however, the theory is much more difficult to work out, and different approximations lead to wildly differing estimates of how big the effect ought to be.

The Casimir effect is about a lot more than a force between two metal plates, and Milton’s book offers a great opportunity to read about it and learn the techniques by which it can be calculated. My one criticism of the book, which is probably not really fair given that the author is a theorist, is that it would be beneficial to have a discussion of the techniques by which the effect is observed in the laboratory. That said, the book is very comprehensive, clearly written and filled with wonderful physics.

by V Gribov and J Nyiri, 2001 Cambridge University Press (Cambridge monographs on particle physics, nuclear physics and cosmology no. 13), ISBN 0521662281, £55/$80.

This short book is based on the lectures of Vladimir Gribov that were given in Leningrad in 1974. It was completed, after his death in 1997, by his collaborator Julia Nyiri and it provides a pleasant introduction to the basics of field theory and quantum electrodynamics (QED). One of the book’s strengths is its intuitive and relatively leisurely introduction to quantum field theory (QFT) via Feynman propagators and diagrams, a route that is particularly suited to students meeting the forbidding machinery of modern QFT for the first time. Indeed, in its treatment of elementary but fundamental topics – such as the construction of the scattering amplitude; the relation between causality, unitarity and analyticity in the Mandelstam plane; and tree-level processes such as the Compton effect or soft electron bremsstrahlung – it can be compared to two of the best older texts on quantum electrodynamics – Feynman’s own book of this title and the volume on QED of the Landau and Lifshitz series. Unfortunately it also inherits deficiencies from its origins in the early 1970s.

Though the last two chapters discuss radiative corrections in QED and some aspects of renormalization theory, such as Ward identities, no mention is made of the central topic of the renormalization group, either in its older Gell-Mann-Low form or in the more modern Wilsonian guise within the effective field theory picture. Thus there are no anomalous dimensions of operators or running couplings as encapsulated in beta-functions – apart from remarks on the “zero charge problem” that the student may find rather confusing. Without these crucial tools a student is ill-prepared to explore the deeper properties of quantum field theory.

In addition there is no discussion of spontaneous symmetry breaking, the Higgs mechanism, Yang-Mills theory, ghosts, dimensional regularization, anomalies, or the operator product expansion. Therefore none of the physics of the theory of the strong or weak interactions can be discussed. So sadly, despite its pleasing and pedagogical introduction to the basics of QED, it can’t compete with modern quantum field theory texts, such as Peskin and Schroeder’s “An Introduction to Quantum Field Theory”, as a full introductory course. However, I can recommend it as an enjoyable basic supplement to more complete texts.

In the late 1940s, Europe was struggling to emerge from the ruins of the Second World War. The US had played a vital role in the conflict, but had been less affected materially, and a shining vision of life across the Atlantic was a beacon of hope for millions of Europeans living in austerity, if not misery.

In a speech at Harvard on 5 June 1947, US Secretary of State George C Marshall said that the US should “assist in the return of normal economic health in the world”. North American “Marshall aid” was a major factor in restoring European economic health and dignity.

During the global conflict, many eminent European scientists had been drawn into the Manhattan Project at Los Alamos. Post-war, US science remained pre-eminent. Anxious to stem a “brain drain” of talent, farsighted pioneers saw that Europe needed a comparable scientific focus. This was the seed of an idea for a European centre for atomic research.

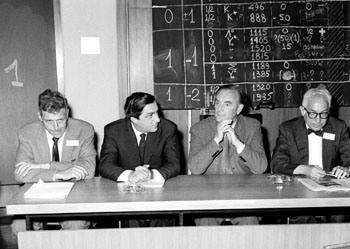

One of the organizations established in the wake of the Second World War to help promote world peace and co-operation was the United Nations Educational, Scientific and Cultural Organization (UNESCO). At the UNESCO General Conference in Florence, Italy, in June 1950, the idea for a European scientific laboratory still lay dormant. Among the US delegation at Florence was Isidor Rabi, who had won a 1944 Nobel prize for his work on the magnetic properties of nuclei. Rabi had played a key wartime role at the MIT Radiation Laboratory, and understood how pressing scientific needs could be transformed into major new projects. After the war Rabi played a major role in establishing the US Brookhaven National Laboratory.

The establishment of an analogous European laboratory was to Rabi a natural and vital need. However, on arrival in Florence he was disturbed to find that there was no mention of this idea on the agenda. Two Europeans, Pierre Auger (then UNESCO’s director of exact and natural sciences) and Edoardo Amaldi, who was to be a constant driving force, helped Rabi through the intricacies of European committee formalities. So the European seed was fertilized and within a few years CERN was born.

Another major, and very different, US contribution to CERN came two years after the Florence meeting. In 1952 a group of European accelerator specialists – Odd Dahl of Norway, Frank Goward of the UK and Rolf Wideröe of Germany – visited Brookhaven. CERN’s initial goal was to build a scaled-up version of Brookhaven’s new synchrotron – the Cosmotron – and the CERN group were anxious to admire the highest-energy accelerator in the world at that time.

To prepare a welcome for the European visitors, Stanley Livingston at Brookhaven called together his accelerator specialists to see how they could help the Europeans. During one of these meetings Livingston pointed out that all of the machine’s C-shaped focusing magnets faced outwards. Why not make some of them face inwards? Quickly Ernest Courant and the rest of the Brookhaven team saw that arranging the magnets to face alternately inward and outward could increase the focusing power of the synchrotron. The European visitors arrived just as the implications of the “alternating gradient” idea were being appreciated.
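Why alternating the magnets helps can be illustrated with a deliberately simplified optical analogy, sketched below. It is not the Brookhaven team’s calculation: each magnet is replaced by an idealized thin lens, and the focal length and spacing are hypothetical numbers. A lens that focuses in one plane defocuses in the other, yet a repeating focus-drift-defocus-drift cell confines the beam in both planes, provided the lenses are not too strong for their spacing (the trace of the cell’s transfer matrix must lie between -2 and 2).

```python
def matmul(a, b):
    """Multiply two 2x2 matrices given as nested tuples."""
    return (
        (a[0][0] * b[0][0] + a[0][1] * b[1][0], a[0][0] * b[0][1] + a[0][1] * b[1][1]),
        (a[1][0] * b[0][0] + a[1][1] * b[1][0], a[1][0] * b[0][1] + a[1][1] * b[1][1]),
    )


def drift(length):
    """Field-free stretch of the given length."""
    return ((1.0, length), (0.0, 1.0))


def thin_lens(focal_length):
    """Thin lens: positive focal length focuses, negative defocuses."""
    return ((1.0, 0.0), (-1.0 / focal_length, 1.0))


def cell_trace(focal_length, drift_length):
    """Trace of one cell: lens(+f), drift, lens(-f), drift."""
    m = thin_lens(focal_length)  # first element the beam meets
    for element in (drift(drift_length), thin_lens(-focal_length), drift(drift_length)):
        m = matmul(element, m)   # later elements multiply from the left
    return m[0][0] + m[1][1]


def is_stable(focal_length, drift_length):
    """Motion stays bounded over many cells if |trace| < 2."""
    return abs(cell_trace(focal_length, drift_length)) < 2.0


if __name__ == "__main__":
    spacing = 1.0  # hypothetical lens spacing, arbitrary units
    for f in (1.0, -1.0, 0.4):  # -1.0 represents the other transverse plane
        print(f"f = {f:+.1f}: trace = {cell_trace(f, spacing):+.2f}, stable = {is_stable(f, spacing)}")
```

In this toy model the trace works out to 2 - (L/f)², the same for either sign of f, so both transverse planes are stable as long as the focal length exceeds half the lens spacing.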

The CERN team took the idea back to Europe and immediately incorporated it into their new synchrotron design. Two members of the Brookhaven team – John and Hildred Blewett – later went to CERN and played a major role in ensuring that the new CERN Proton Synchrotron (PS) delivered its first high-energy protons in November 1959, several months before Brookhaven’s Alternating Gradient Synchrotron. This was the start of a long tradition of US-Europe collaboration in development work for major particle beam machines, which continues to this day.

Ernest Courant later made important contributions to CERN’s Intersecting Storage Rings (ISR) project, helping to convince accelerator physicists that beams in a proton collider could remain stable for long periods. In the early 1970s, CERN specialists came to Fermilab to help build and commission the big new US synchrotron. A few years later, US machine physicists came to CERN when the comparable Super Proton Synchrotron was getting under way. In the mid-1970s, Burt Richter of SLAC, during a sabbatical sojourn at CERN, helped set the scale for CERN’s LEP electron-positron collider, which had to be built as large as possible to minimize the losses caused by synchrotron radiation. The design eventually settled on a circumference of 27 km.
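The pressure to build big can be made concrete with the standard formula for the energy an electron radiates per turn, U = Cγ E⁴/ρ, where ρ is the bending radius and Cγ is about 8.85 × 10⁻⁵ m/GeV³. The sketch below is a rough illustration only: it assumes an idealized, perfectly circular ring whose bending radius is the circumference divided by 2π (a real machine has straight sections, so its bending radius is smaller and its losses larger), and the 100 GeV beam energy is a hypothetical round number.

```python
import math

C_GAMMA = 8.846e-5  # m / GeV^3, radiation constant for electrons


def loss_per_turn_gev(beam_energy_gev, circumference_m):
    """Energy radiated per turn (GeV) by an electron in an idealized ring.

    Assumption: the ring is a perfect circle, so the bending radius is
    circumference / (2 * pi). Real rings bend more tightly than this.
    """
    bending_radius_m = circumference_m / (2.0 * math.pi)
    return C_GAMMA * beam_energy_gev ** 4 / bending_radius_m


if __name__ == "__main__":
    energy = 100.0  # GeV, hypothetical beam energy for illustration
    for circumference_km in (7.0, 27.0, 54.0):
        loss = loss_per_turn_gev(energy, circumference_km * 1000.0)
        print(f"{circumference_km:5.1f} km ring: {loss:.2f} GeV lost per turn")
```

Doubling the circumference only halves the loss, whereas doubling the beam energy multiplies it by 16, which is why the ring had to be made as large as practicable.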

CERN officially came into being in 1954 when its convention document was ratified by the founding member states. Three years later, its first particle accelerator, a 600 MeV synchrocyclotron (SC), began operations. SC experiments on pion decay soon began to make their mark on the world particle physics scene. Slower to get going at the SC was a major effort to make a precision measurement of the magnetic moment of the muon – the famous g-2 experiment.

CERN experiments and major international physics conferences at CERN and in Geneva in the late 1950s introduced many US experimentalists to the attractions of Europe for a short visit or a longer sabbatical stay. One of these was Leon Lederman, newly tenured at Columbia, who made many useful contacts during his first stay at CERN and who left resolved to return. The SC g-2 experiment involved a lot of physicists by the standards of the day and attracted several other major US figures. Some also collaborated in bubble-chamber studies at CERN to determine particle properties vital for the emerging particle classification schemes based on internal symmetry.

As one of CERN’s main aims was to stem the tide of scientific migration westwards across the Atlantic, it was natural for the laboratory to headhunt Europeans who had made the move to the US. So CERN’s first director-general was the Swiss physicist Felix Bloch, who had left Europe in 1933 and went on to win a 1952 Nobel prize for measurements of nuclear magnetism. However, Bloch’s move to CERN was not a success.

Another contemporary colossus straddling the Atlantic was Victor Weisskopf. Austrian by birth, Weisskopf had made pioneering contributions to quantum mechanics in Europe in the 1930s, and, like Bloch and many others, had fled to the US to escape Nazi persecution, eventually making his way to Rochester. During the Second World War Weisskopf had worked at Los Alamos as deputy to theory division leader Hans Bethe. At Los Alamos, Weisskopf developed a flair for 20th-century “big science”.

When CERN was looking for a new director-general in the early 1960s, Weisskopf was a natural candidate, a distinguished European with experience in the management of big physics projects. Despite his protests that he knew little about administration, he was pushed into the job and CERN flourished. During Weisskopf’s mandate CERN developed a strong sense of purpose, and ambitious new projects for the future were authorized.

Younger Europeans who had been working in the US also chose CERN as their research base for a return to Europe in the early 1960s. Several were to go on to become very influential. Jack Steinberger emigrated to the US in 1934 and went on to make landmark contributions, mainly with bubble chambers, at the new generation of post-war accelerators at Berkeley, Columbia and Brookhaven. At CERN, Steinberger switched to electronic detectors.

After completing his degree at Pisa, Carlo Rubbia moved to Columbia for a taste of front-line research in weak interaction physics before moving to the SC at CERN. In their subsequent careers, Rubbia and Steinberger were highly visible from either side of the Atlantic. Both these physicists participated in the first studies at CERN of the phenomenon of CP violation, discovered at Brookhaven in 1964.

The Ford Foundation provided generous funding so that scientists from nations that were not signatories to the CERN Convention could participate in the laboratory’s research programme. In this way, more young US researchers were able to visit. One was Sam Ting, who worked at the PS as a Ford Foundation fellow with Giuseppe Cocconi.

Under Weisskopf, CERN’s next major project was the innovative ISR, the world’s first proton-proton colliding beam machine, which came into operation in 1971. It was unique. For the first time, Europe had a kind of front-line particle physics machine that the US didn’t, attaining a totally new energy range, and many scientists were keen to see what it could do. Among the first to make the eastward pilgrimage to Geneva were Leon Lederman of Columbia and Rod Cool of Rockefeller.

Working at Brookhaven, Lederman had studied the production of muon pairs, initially hunting for the intermediate boson, the carrier of the weak nuclear interaction. This hunt was some 15 years premature, but on the other hand it convinced Lederman, and others, of the value of lepton pairs as a signature of basic interactions.

At the ISR, a Europe-Columbia-Rockefeller collaboration was among the first to see that under ISR conditions some high-energy particles emerged at wide angles to the direction of the colliding beams. This suggested that occasionally something violent happened when the proton beams clashed together. It was a few years after the historic experiments at SLAC, which had used electrons to probe deep inside the proton and see that it contained hard scattering centres, but the ISR experiments saw the constituents deep inside protons colliding with each other.

Over the lifetime of the ISR (1971-84), US participation in experiments at CERN developed from small bands of intrepid pioneers to major groups. Other active collaborations involved researchers from Brookhaven, Harvard, MIT, Northwestern, Riverside, Stony Brook, Syracuse and UCLA.

A major US contribution at CERN was the 1979 discovery at the ISR of direct single photons from quark processes – the first sighting of electromagnetic radiation from quarks. Playing an important role here was Bob Palmer, a European migrant to the US who retained an attachment to CERN.

Over its first decade of operation the ISR made it clear that “keyhole physics”, using just a small sample of the produced particles, was not the only way to go, and colliding beam machines needed big detectors to intercept as many as possible of the emerging particles. With their ISR apprenticeship, US physicists learned this lesson early.

The second half of this history will look at US involvement in modern collider physics at CERN.

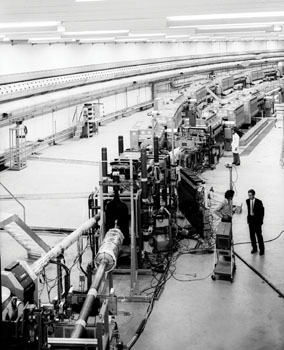

Some 50 km south of San Francisco, a long, low structure stretches for 3 km through the rolling, oak-studded hills behind the Stanford University campus to the base of the Santa Cruz mountains. This curious feature is the klystron gallery of the Stanford Linear Accelerator Center (SLAC) – by far the world’s largest electron microscope. It is one of the longest buildings on the surface of the Earth.

Ever since this powerful scientific instrument began operating in the mid-1960s, SLAC has been generating intense, high-energy beams of electrons and photons for research on the structure of matter. Physicists using its facilities have received three Nobel prizes for the discovery of quarks and the tau lepton, both recognized today as fundamental building blocks of matter. Led by Wolfgang Panofsky and Burton Richter, its first two directors, the centre has also played a leading role in developing electron-positron storage rings and large “4π” detectors to observe subatomic debris spewing out from high-energy particle collisions.

Since the mid-1970s, other scientists have employed SLAC’s ultrabright X-ray beams to study the structure and behaviour of matter at atomic and molecular scales in the Stanford Synchrotron Radiation Laboratory (SSRL), now a division of SLAC. Molecular biologists, for example, have used these X-ray beams to determine the detailed structures of important biological molecules such as HIV protease and RNA polymerase. Still others have examined the behaviour of catalysts, semiconductors, superconductors, and the endless variety of advanced materials that are becoming increasingly essential in today’s high-tech industries.

SLAC is a national laboratory operated by Stanford University on behalf of the US Department of Energy (DOE), which supports its operations. The National Institutes of Health and the National Science Foundation provide additional funding for specific equipment and experiments. Use of SLAC’s facilities is available to qualified researchers from around the world; about 3000 users come to the centre each year from more than 20 countries to perform research in groups ranging in size from a few to several hundred scientists. In addition, SLAC has a staff of about 1400, of whom more than 300 are scientists involved in ongoing research. The results of all research performed at the laboratory are published openly in scientific and technical journals; no classified research is carried out on the premises.

The principal focus of research at SLAC is elementary particle physics – the field in which the centre has earned its three Nobel prizes. The first of these went to Richter, who shared the 1976 prize with Sam Ting for the discovery, two years earlier, of the famous J/psi particle (which was eventually found to be made of charm quarks). In 1990, Jerome Friedman, Henry Kendall and Richard Taylor shared the prize for uncovering the quark substructure of protons and neutrons by studying deep-inelastic electron scattering from these targets in the late 1960s and early 1970s. SLAC’s third Nobel prize was awarded to Martin Perl in 1995 for his discovery of the tau lepton in the mid-1970s.

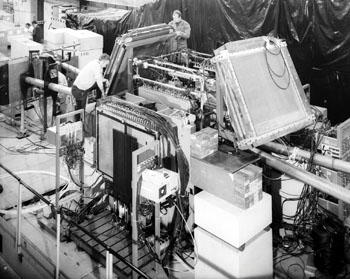

Stanford and SLAC physicists have also spearheaded the development of linear electron accelerators since the late 1940s. In the past two decades they have pioneered the development of linear electron-positron colliders. This work began in the early 1980s when SLAC upgraded its linear accelerator and converted it into the Stanford Linear Collider (SLC). Whereas CERN’s Large Electron-Positron (LEP) collider achieved higher energies, beam polarization proved to be the SLC’s forte, allowing researchers to probe subtle phenomena in the dominant Standard Model of particle physics. During the late 1980s and early 1990s, experiments at the SLC and LEP studying the decays of massive Z particles pinned down the exact number of light neutrino species and measured many key parameters of the Standard Model – especially the weak mixing angle – to high levels of precision. Since the shutdown of LEP in 2000, SLAC has been generating the highest-energy electron and positron beams in the world.

Working with colleagues from other high-energy physics laboratories in Japan, Europe and the US, SLAC physicists have developed accelerator technology for a next-generation instrument called the Next Linear Collider, which will be 30 km long. In January 2002, the US High-Energy Physics Advisory Panel recommended that US physicists play a leading role in an international effort to design and build such a linear collider.

Today the SLAC high-energy physics programme pivots around the PEP-II B-Factory. This facility was built during the mid-1990s under the leadership of SLAC’s current director Jonathan Dorfan as an upgrade of the original PEP storage ring. The electron-positron collider resides in a roughly circular tunnel that courses for 2200 m under one end of the 450 acre site. Inside the sophisticated 1200 ton BaBar particle detector, beams of electrons and positrons collide at unequal energies – 9.0 and 3.1 GeV – creating millions of pairs of B mesons per month. An international collaboration – involving about 550 physicists from more than 70 institutions in nine countries – is examining how these particles disintegrate and searching for subtle differences between matter and antimatter. During the summer of 2001, they uncovered conclusive evidence for such an asymmetry, known as CP violation, in certain specific decays of neutral B mesons. The BaBar collaboration is continuing to seek further examples of this rare phenomenon, which is widely believed to be responsible for the great preponderance of matter in the universe.

Physics research continues to thrive in SLAC’s cavernous End Station A. Since the landmark discovery of quarks there, nuclear and high-energy physicists have used this fixed-target experimental facility to study the substructure of nuclear matter in great detail, most recently with polarized beams of 50 GeV electrons that became available after the construction of the SLC. They are now using these beams to make an exacting measurement of the weak mixing angle by scattering polarized electrons from atomic electrons and measuring the extremely slight asymmetries that are expected to occur. These physicists are also developing high-energy beams of polarized photons to continue their research on the quark-gluon substructure of protons and neutrons.

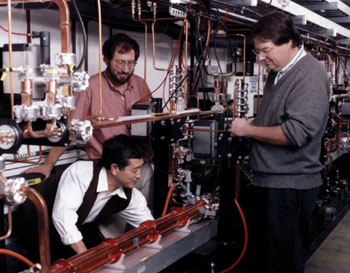

Looking to the long-term future of high-energy physics research, a group of SLAC physicists and engineers has been working for several years on advanced particle acceleration techniques. In collaboration with university researchers, for example, the group is developing laser-induced plasmas that can boost the energy of an electron beam substantially over very short distances. This team has worked on “plasma lenses” to focus and accelerate particle beams.

SLAC has also been moving aggressively into the closely related fields of particle astrophysics and cosmology, using insights and techniques from particle physics to study the heavens. (In fact, the leading cosmological theory of inflation was conceived at SLAC in 1980 by Alan Guth, then a postdoctoral researcher.) Aided by scientists from universities and laboratories in Europe, Japan and the US, SLAC physicists are designing and building the Gamma-ray Large Area Space Telescope (GLAST), a high-resolution detector of energetic (up to about 300 GeV) photons scheduled for launch into Earth orbit in 2005. GLAST will employ sophisticated particle-detection and data-acquisition techniques that were originally developed for ground-based particle-physics experiments. Jointly funded by the DOE, the National Aeronautics and Space Administration and foreign scientific agencies, this satellite will examine sudden outbursts of gamma rays from black holes and other exotic astrophysical sources.

Cutting-edge research into the atomic and molecular structure of matter occurs at SSRL. Using the SPEAR storage ring, which was adapted to function as a dedicated synchrotron radiation source, scientists generate intense X-ray beams from a circulating 3 GeV electron beam. Each year, more than 1600 scientists from many different disciplines use this radiation for research in such areas as designing new drugs, developing advanced information technologies (for example flat-panel computer displays and high-density microchips) and remediating environmental contamination. Since its inception in 1974, SSRL has pioneered this burgeoning field of synchrotron radiation research by developing equipment and experimental techniques commonly used today in nearly 50 such laboratories around the world. A major upgrade of the SPEAR facility that is currently under way will greatly increase the brightness of its X-ray beams and help to keep SSRL competitive with these other facilities.

Today, SLAC and SSRL are poised to begin building a next-generation facility, to be called the Linac Coherent Light Source, that will help to roll back the frontiers of X-ray research. Electrons accelerated in the final third of the linear accelerator will be compressed into tiny bunches that will then be directed through a special magnet array to produce laser-like X-ray beams of unparalleled brilliance. This unique instrument should open up new avenues of scientific research on such topics as ultrafast chemical reactions.

SLAC is also the world’s leader in developing high-power klystrons, which generate the microwaves used to accelerate electrons. Invented in 1937 at Stanford University, klystrons are also used to power radar arrays and the medical accelerators employed in cancer therapy. For decades, SLAC and the nearby Varian Corporation shared people, designs and ideas in a symbiotic relationship that has steadily advanced klystron technology. Medical accelerators are now a billion-dollar industry; they are used to give cancer treatments to more than 100,000 people every day. In addition, a software program called EGS (for Electron-Gamma Shower), developed by SLAC’s Ralph Nelson to simulate showers of subatomic particles, is used by hundreds of hospitals throughout the world to plan radiation dosages for cancer therapy.

Computers and telecommunications are other areas where SLAC research has strongly affected both the US and world economies. In December 1991, Paul Kunz expanded the then-fledgling World Wide Web (invented at CERN by Tim Berners-Lee) to North America, establishing the first US website at SLAC and making its popular SPIRES database easily accessible. The following year, another SLAC physicist developed an influential graphical Web browser to help communicate the reams of data and publications that are produced in the field every year.

Scientific education is ultimately one of SLAC’s most important goals. The thousands of students who have come to the laboratory to participate in advanced research have learned from working side by side with some of the best scientists on the planet, helping to push back the frontiers of their disciplines. They return to universities across the country and around the world – or take positions in industry or government – with a much better understanding of what it means to carry out scientific research.