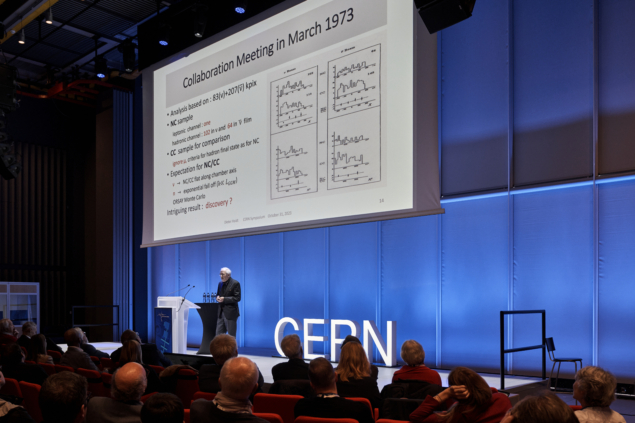

Celebrating the 1973 discovery of weak neutral currents by the Gargamelle experiment and the 1983 discoveries of the W and Z bosons by the UA1 and UA2 experiments at the SppS, a highly memorable scientific symposium in the new CERN Science Gateway on 31 October brought the past, present and future of electroweak exploration into vivid focus. “Weak neutral currents were the foundation, the W and Z bosons the pillars, and the Higgs boson the crown of the 50 year-long journey that paved the electroweak way,” said former Gargamelle member Dieter Haidt (DESY) in his opening presentation.

History could have turned out differently, said Haidt, since both CERN and Brookhaven National Laboratory (BNL) were competing in the new era of high-energy neutrino physics: “The CERN beam was a flop initially, allowing BNL to snatch the muon-neutrino discovery in 1962, but a second attempt at CERN was better.” This led André Lagarrigue to dream of a giant bubble chamber, Gargamelle, financed and built by French institutes and operated by CERN with beams from the Proton Synchrotron (PS) from 1970 to 1976. Picking out the neutral-current signal from the neutron-cascade background was a major challenge, and a solution seemed hopeless until Haidt and his collaborators made a breakthrough regarding the meson component of the cascade.

By early July 1973, it was realised that Gargamelle had seen a new effect. Paul Musset presented the results in the CERN auditorium on 19 July, yet by that autumn Gargamelle was “treated with derision” due to conflicting results from a competitor experiment in the US. “The Gargamelle claim is the worst thing to happen to CERN,” Director-General John Adams was said to have remarked. Jack Steinberger even wagered his cellar that it was wrong. Following further cross-checks by bombarding the detector with protons, the Gargamelle result stood firm. At the end of Haidt’s presentation, collaboration members who were present in the audience were recognised with a warm round of applause.

From the PS to the SPS

The neutral-current discovery and the subsequent Gargamelle measurement of the weak mixing angle made it clear not only that the electroweak theory was right but that the W and Z were within reach of the technology of the day. Moving from the PS to the SPS, Jean-Pierre Revol (Yonsei University) took the audience to the UA1 and UA2 experiments ten years later. Again, history could have taken a different turn. While CERN was working towards an e⁺e⁻ collider to find the W and Z, said Revol, Carlo Rubbia proposed the radically different concept of a hadron collider, first to Fermilab, which, luckily for CERN, declined. All the ingredients were presented by Rubbia, Peter McIntyre and David Cline in 1976; the UA1 detector was proposed in 1978 and a second detector, UA2, was proposed by CERN six months later. UA1 was huge by the standards of the day, said Revol. “I was advised not to join, as there were too many people! It was a truly innovative project: the largest wire chamber ever built, with 4π coverage. The central tracker, which allowed online event displays, made UA1 the crucial stepping stone from bubble chambers to modern electronic ones. The DAQ was also revolutionary. It was the beginning of computer clusters, with same power as IBM mainframes.”

First SppS collisions took place on 10 July 1981, and by mid-January 1983 ten candidate W events had been spotted by the two experiments. The W discovery was officially announced at CERN on 25 January 1983. The search for the Z then started to ramp up, with the UA1 team monitoring the “express line” event display around the clock. On 30 April, Marie Noelle Minard called Revol to say she had seen the first Z. Rubbia announced the result at a seminar on 27 May, and UA2 confirmed the discovery on 7 June. “The SppS was a most unlikely project but was a game changer,” said Revol. “It gave CERN tremendous recognition and paved the way for future collaborations, at LEP then LHC.”

Former UA2 member Pierre Darriulat (Vietnam National Space Centre) concurred: “It was not clear at all at that time if the collider would work, but the machine worked better than expected and the detectors better than we could dream of.” He also spoke powerfully about the competition between UA1 and UA2: “We were happy, but it was spoiled in a way because there was all this talk of who would be ‘first’ to discover. It was so childish, so ridiculous, so unscientific. Our competition with UA1 was intense, but friendly and somewhat fun. We were deeply conscious of our debt toward Carlo and Simon [van der Meer], so we shared their joy when they were awarded the Nobel prize one year later.” Darriulat emphasised the major role of the Intersecting Storage Rings and the input of theorists such as John Ellis and Mary K Gaillard, reserving particular praise for Rubbia. “Carlo did the hard work. We joined at the last moment. We regarded him as the King, even if we were not all in his court, and we enjoyed the rare times when we saw the King naked!”

The ten years between the discovery of neutral currents and the W and Z bosons are what took CERN “from competent mediocrity to world leader”, said Lyn Evans in his account of the SppS feat. Simon van der Meer deserved special recognition, not just for his 1972 paper on stochastic cooling, but also for his earlier invention of the magnetic horn, which was pivotal in increasing the neutrino flux in Gargamelle. Evans explained the crucial roles of the Initial Cooling Experiment and the Antiproton Accumulator, and the many modifications needed to turn the SPS into a proton-antiproton collider. “All of this knowledge was put into the LHC, which worked from the beginning extremely well and continues to do so. One example was intrabeam scattering. Understanding this is what gives us the very long beam lifetimes at the LHC.”

Long journey

The electroweak adventure began long before CERN existed, pointed out Wolfgang Hollik, with 2023 also marking the 90th anniversary of Fermi’s four-fermion model. The incorporation of parity violation came in 1957 and the theory itself was constructed in the 1960s by Glashow, Salam, Weinberg and others. But it wasn’t until ’t Hooft and Veltman showed in the early 1970s that the theory is renormalisable that it became a fully fledged quantum field theory. This opened the door to precision electroweak physics and the ability to search for new particles, in particular the top quark and Higgs boson, that were not directly accessible to experiments. Electroweak theory also drove a new approach in theoretical particle physics based around working groups and common codes, noted Hollik.

The afternoon session of the symposium took participants deep into the myriad of electroweak measurements at LEP and SLD (Guy Wilkinson, University of Oxford), Tevatron and HERA (Bo Jayatilaka, Fermilab), and finally the LHC (Maarten Boonekamp, Université Paris-Saclay and Elisabetta Manca, UCLA). The challenges of such measurements at a hadron collider, especially of the W-boson mass, were emphasised, as were their synergies with QCD measurements in improving the precision of parton distribution functions.

The electroweak journey is far from over, however, with the Higgs boson offering the newest exploration tool. Rounding off a day of excellent presentations and personal reflections, Rebeca Gonzalez Suarez (Uppsala University) imagined a symposium 40 years from now when the proposed collider FCC-ee at CERN has been operating for 16 years and physicists have reconstructed nearly 10¹³ W and Z bosons. Such a machine would take the precision of electroweak physics into the keV realm and translate to a factor of seven increase in energy scale. “All of this brings exciting challenges: accelerator R&D, machine-detector interface, detector design, software development, theory calculations,” she said. “If we want to make it happen, now is the time to join and contribute!”