On 1 November 1955, Physical Review Letters published the paper “Observation of antiprotons” by Owen Chamberlain, Emilio Segrè, Clyde Wiegand and Tom Ypsilantis, at what was then known as the Radiation Laboratory of the University of California at Berkeley. This paper, which announced the discovery of the antiproton (for which Chamberlain and Segrè would share the 1959 Nobel Prize for Physics), had been received only eight days earlier. However, the story of the discovery of the antiproton really begins in 1928, when the eccentric and brilliant British physicist Paul Dirac formulated a theory to describe the behaviour of relativistic electrons in electric and magnetic fields.

Dirac’s equation was unique for its time because it combined Albert Einstein’s special theory of relativity with the quantum mechanics developed by Erwin Schrödinger and Werner Heisenberg. While it worked well on paper, Dirac’s rather straightforward equation carried with it a most provocative implication: it permitted negative as well as positive values for the energy E. Initially, few physicists took this idea seriously because no-one had ever observed particles of negative energy. From the standpoint of both physics and common sense, the energy of a particle could only be positive.

Attitudes towards Dirac’s equation changed dramatically in 1932, when Carl David Anderson reported the observation of a positively charged electron in a project at the California Institute of Technology that originated with his mentor, Robert Millikan. Anderson named the new particle the “positron”. Both Dirac and Anderson would win Nobel Prizes for Physics for their discoveries: Dirac shared the 1933 prize with Schrödinger, and Anderson shared the 1936 prize with Victor Hess. However, the existence of the positron, the antimatter counterpart of the electron, raised the question of an antimatter counterpart to the proton.

As Dirac’s theory continued to successfully explain phenomena associated with electrons and positrons, it followed – from the revised standpoints of both physics and common sense – that it should also describe protons, which would in turn demand the existence of an antimatter counterpart to the proton. The search for the antiproton was under way, but it got off to a very slow start: it would be another two decades before a machine capable of producing such a particle became available.

Enter the Bevatron

Anderson discovered the positron with a cloud chamber during investigations of cosmic rays, but it was extremely difficult, if not impossible, to use the same approach for finding the antiproton. If physicists were going to find the antiproton, they were first going to have to make one.

However, even after Ernest Lawrence’s invention of the cyclotron in 1931, earthbound accelerators were not up to the task. Physicists knew that creating an antiproton would require the simultaneous creation of a proton or a neutron. Because the energy needed to create a particle is set by its rest mass (E = mc²), creating a proton-antiproton pair would require twice the proton rest energy, or about 2 billion eV. In a fixed-target collision, however, only part of the beam energy is available for making new particles, because momentum conservation forces the collision products to keep moving. Given the fixed-target technology of the times, the best approach for making 2 billion eV available would be to strike a stationary target of neutrons with a beam of protons accelerated to an energy of about 6 billion eV.
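The 6 billion eV figure follows from relativistic kinematics. A minimal sketch, assuming a free nucleon at rest as the target and treating the neutron and proton masses as equal (0.938272 GeV), uses the invariant mass s = m_b² + m_t² + 2E_b·m_t (natural units) and sets √s equal to the total mass of the four final-state particles (nucleon, nucleon, proton, antiproton):

```python
M_P = 0.938272  # proton rest energy in GeV (neutron mass assumed equal for this sketch)

def threshold_kinetic_energy(beam_mass, target_mass, final_masses):
    """Minimum beam kinetic energy (GeV) for a fixed-target reaction.

    Uses the invariant mass s = m_b^2 + m_t^2 + 2 * E_b * m_t (natural units,
    target at rest). Threshold is reached when sqrt(s) equals the sum of the
    final-state masses, i.e. all products are created at rest in the CM frame.
    """
    m_final = sum(final_masses)
    e_beam = (m_final**2 - beam_mass**2 - target_mass**2) / (2.0 * target_mass)
    return e_beam - beam_mass  # subtract rest energy to get kinetic energy

# N + N -> N + N + p + pbar, with every mass taken as M_P
t_fixed = threshold_kinetic_energy(M_P, M_P, [M_P] * 4)
print(f"pair rest energy:       {2 * M_P:.2f} GeV")
print(f"fixed-target threshold: {t_fixed:.2f} GeV")  # equals 6 * M_P
```

The threshold works out to exactly 6 m_p c², about 5.6 GeV of kinetic energy, roughly three times the pair’s rest energy. In a real copper target the internal (Fermi) motion of the nucleons lowers the threshold somewhat; the sketch ignores that effect.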

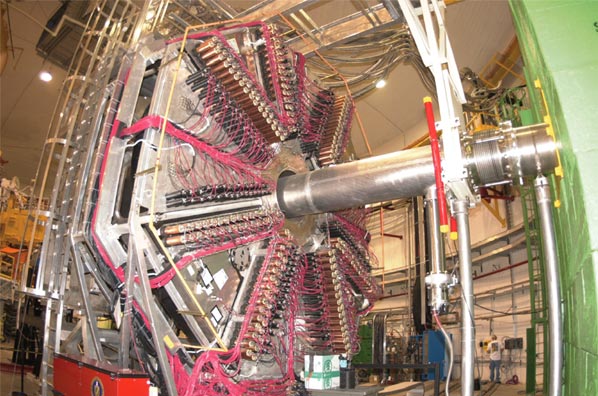

In 1954, the Bevatron, an accelerator that Lawrence had commissioned to reach energies of several billion electron-volts – then designated as BeV (now universally known as GeV) – came into operation at his Radiation Laboratory in Berkeley. (After Lawrence’s death in 1958, the laboratory was renamed in his honour; today it is the Lawrence Berkeley National Laboratory.) This weak-focusing proton synchrotron was designed to accelerate protons up to 6.5 GeV. Though never its officially stated purpose, the Bevatron was built to go after the antiproton. As Chamberlain noted in his Nobel lecture, Lawrence and his close colleague, Edwin McMillan, who co-discovered the principle behind synchronized acceleration and coined the term “synchrotron”, were well aware of the 6 GeV needed to produce antiprotons and made certain the Bevatron would be able to get there.

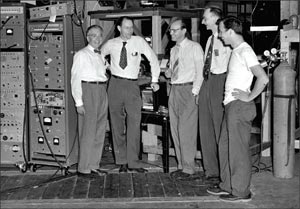

Armed with a machine that had the energetic muscle to make antiprotons, Lawrence and McMillan put together two teams to go after the elusive particle. One team was led by Edward Lofgren, who managed operations of the Bevatron. The other was led by Segrè and Chamberlain. Segrè had been the first student to earn his physics degree at the University of Rome under Enrico Fermi. He had, with the aid of one of Lawrence’s cyclotrons, discovered technetium, the first artificially produced chemical element. He was also one of the scientists who determined that a plutonium-based bomb was feasible, and his experiments on the scattering of neutrons and protons and proton polarization broke new ground in understanding nuclear forces. Chamberlain had also studied under Fermi, and under Segrè as well. He was Segrè’s assistant on the Manhattan Project at Los Alamos while still a graduate student, and later joined Segrè at Berkeley to collaborate on the nuclear-forces studies.

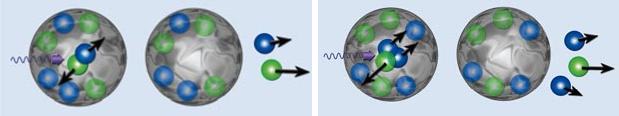

Making an antiproton was only half the task; no less formidable a challenge was to devise a means of identifying the beast once it had been spawned. For every antiproton created, some 40,000 other particles would also be produced. The time to cull the antiproton from the surrounding herd would be brief: within about 10⁻⁷ s of its creation, an antiproton comes into contact with a proton and both particles are annihilated.

According to Chamberlain, again from his Nobel lecture, it was understood from the start that at least two independent quantities would have to be measured for the same particle to identify it as an antiproton. After considering several possibilities, it was decided that they should be momentum and velocity.
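Momentum and velocity together fix a particle’s mass, since p = γmβc. A minimal sketch (momentum in GeV/c, mass in GeV/c²); the 1.19 GeV/c momentum and the two β values used below are illustrative assumptions, not figures given in this article:

```python
import math

def mass_from_p_beta(p_gev, beta):
    """Rest mass (GeV/c^2) from momentum p (GeV/c) and speed beta = v/c.

    From p = gamma * m * beta * c it follows that m = p * sqrt(1 - beta^2) / beta.
    """
    if not 0.0 < beta < 1.0:
        raise ValueError("beta must lie strictly between 0 and 1")
    return p_gev * math.sqrt(1.0 - beta**2) / beta

# Two particles with the same selected momentum but different speeds
m_slow = mass_from_p_beta(1.19, 0.785)  # close to the (anti)proton mass, ~0.94
m_fast = mass_from_p_beta(1.19, 0.993)  # close to the pion mass, ~0.14
print(f"slow particle: {m_slow:.3f} GeV/c^2, fast particle: {m_fast:.3f} GeV/c^2")
```

Fixing the momentum first means a single velocity measurement is enough to separate heavy antiprotons from light pions.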

Measuring momentum

To measure momentum, the research team used a system of magnetic quadrupole lenses suggested by Oreste Piccioni, an expert on quadrupole magnets and beam extraction who was then at Brookhaven National Laboratory. The idea was to set up the system so that only particles within a narrow momentum interval could pass through. When the Bevatron’s proton beam struck a target in the form of a copper block, fragments of nuclear collisions emerged in all directions. Most of these fragments were lost, but some entered the system, and only the negative particles with the selected momentum were deflected by the magnetic lenses into and through the collimator apertures.
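Magnetic momentum selection rests on the rigidity relation p [GeV/c] ≈ 0.2998 · B [T] · ρ [m] for a singly charged particle: at a given field, only particles of one charge sign and a narrow band of momenta follow the path through the collimators. The sketch below is a toy acceptance model, not the experiment’s beam optics; the 1.19 GeV/c central momentum and the ±2% band are hypothetical values chosen for illustration:

```python
GEV_PER_TESLA_METRE = 0.299792458  # p [GeV/c] = 0.2998 * B[T] * rho[m] for unit charge

def rigidity_tm(p_gev, charge=1):
    """Magnetic rigidity B*rho (T*m) for momentum p (GeV/c) and charge in units of e."""
    return p_gev / (GEV_PER_TESLA_METRE * abs(charge))

def passes_channel(p_gev, charge, p_central, rel_acceptance):
    """Toy momentum selector: transmit only negative particles within
    +/- rel_acceptance (fractional) of the central momentum p_central."""
    if charge >= 0:
        return False  # magnet polarity assumed set to steer negative particles only
    return abs(p_gev - p_central) <= rel_acceptance * p_central

P_CENTRAL = 1.19   # GeV/c, hypothetical channel setting
ACCEPTANCE = 0.02  # hypothetical +/-2% momentum band

print(f"rigidity at {P_CENTRAL} GeV/c: {rigidity_tm(P_CENTRAL):.2f} T*m")
for p, q in [(1.19, -1), (1.19, +1), (1.30, -1), (1.18, -1)]:
    print(p, q, passes_channel(p, q, P_CENTRAL, ACCEPTANCE))
```

Only the first and last candidates are transmitted: the second has the wrong charge sign and the third lies outside the momentum band.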

To measure velocity, which was used to separate antiprotons from negative pions, the researchers deployed a combination of scintillation counters and a pair of Cherenkov detectors. The scintillation counters timed the flight of particles between two sheets of scintillator 12 m apart. At the momentum selected by Segrè, Chamberlain and their collaborators, relativistic pions covered this distance in about 40 ns, some 11 ns faster than the 51 ns taken by the more ponderous antiprotons. The electronics were set up to register a coincidence between the two scintillators only when their signals were separated by the antiproton’s flight time. However, because two pions could arrive with exactly the right spacing to imitate the signal from an antiproton, the researchers also used the Cherenkov detectors.
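The 51 ns and 11 ns figures can be reproduced from β = p/E. A minimal sketch, assuming a selected momentum of 1.19 GeV/c (a value not stated in this article) and standard pion and proton masses; the delayed-coincidence check and its 2 ns window are hypothetical simplifications of the real electronics:

```python
import math

C_M_PER_NS = 0.299792458  # speed of light in m/ns
M_PION = 0.13957          # charged-pion mass, GeV/c^2
M_PROTON = 0.938272       # (anti)proton mass, GeV/c^2

def beta(p_gev, m_gev):
    """Speed v/c = p / E for momentum p (GeV/c) and mass m (GeV/c^2)."""
    return p_gev / math.hypot(p_gev, m_gev)

def time_of_flight_ns(p_gev, m_gev, path_m):
    """Flight time in ns over a straight path of path_m metres."""
    return path_m / (beta(p_gev, m_gev) * C_M_PER_NS)

def delayed_coincidence(t1_ns, t2_ns, expected_ns, window_ns):
    """True if counter 2 fires expected_ns (+/- window_ns) after counter 1."""
    return abs((t2_ns - t1_ns) - expected_ns) <= window_ns

P_SELECTED = 1.19  # GeV/c, assumed channel momentum
PATH = 12.0        # metres between the two scintillators, from the text

t_pbar = time_of_flight_ns(P_SELECTED, M_PROTON, PATH)
t_pion = time_of_flight_ns(P_SELECTED, M_PION, PATH)
print(f"antiproton: {t_pbar:.1f} ns, pion: {t_pion:.1f} ns, "
      f"difference: {t_pbar - t_pion:.1f} ns")

# A single pion arrives ~11 ns too early and fails the check. Two unrelated pions
# spaced by exactly t_pbar would pass it, hence the extra Cherenkov requirement.
print(delayed_coincidence(0.0, t_pion, t_pbar, window_ns=2.0))
```

With these assumptions the antiproton takes roughly 51 ns and the pion roughly 40 ns, consistent with the figures quoted above.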

One Cherenkov detector was fairly conventional in that it used a liquid fluorocarbon medium. It was dubbed the “guard counter” because it responded to particles moving faster than an antiproton, so that such particles could be rejected. The second detector, designed by Chamberlain and Wiegand, used a quartz medium, and only particles moving at the speed predicted for antiprotons set it off.
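A Cherenkov counter fires only when β > 1/n, and the light is emitted at an angle θ with cos θ = 1/(nβ). The sketch below models the two detectors as described: a threshold guard counter acting as a veto, and a quartz counter that accepts light only within a narrow band of angles, and hence of speeds. The liquid’s index (1.25), the fused-quartz index (about 1.458) and the 25° to 33° angular window are assumptions chosen for illustration, not the experiment’s actual parameters; β = 0.785 and β = 0.993 roughly correspond to antiprotons and pions at about 1.19 GeV/c:

```python
import math

N_GUARD = 1.25               # hypothetical index of the liquid guard counter (threshold beta = 0.80)
N_QUARTZ = 1.458             # approximate index of fused quartz in visible light
ANGLE_WINDOW = (25.0, 33.0)  # hypothetical accepted Cherenkov angles (degrees) in the quartz counter

def cherenkov_angle_deg(beta, n):
    """Cherenkov emission angle in degrees, or None below threshold (beta <= 1/n)."""
    if beta * n <= 1.0:
        return None
    return math.degrees(math.acos(1.0 / (n * beta)))

def cherenkov_tag(beta):
    """Antiproton-like if the quartz counter sees light in its window and the guard stays silent."""
    guard_fires = cherenkov_angle_deg(beta, N_GUARD) is not None
    theta = cherenkov_angle_deg(beta, N_QUARTZ)
    quartz_fires = theta is not None and ANGLE_WINDOW[0] <= theta <= ANGLE_WINDOW[1]
    return quartz_fires and not guard_fires

for label, b in [("antiproton-like", 0.785), ("pion-like", 0.993), ("slow", 0.60)]:
    print(label, b, cherenkov_angle_deg(b, N_QUARTZ), cherenkov_tag(b))
```

Only the antiproton-like speed passes: the pion-like particle trips the guard veto, and the slow particle is below the quartz threshold altogether.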

In conjunction with the momentum and velocity experiments, Berkeley physicist Gerson Goldhaber and Edoardo Amaldi from Rome led a related experiment using photographic-emulsion stacks. If a suspect particle was truly an antiproton, the Berkeley researchers expected to see the signature star image of an annihilation event, in which the antiproton and a proton or neutron from an ordinary nucleus (presumably that of a silver or bromine atom in the photographic emulsion) would annihilate each other.

Success!

The antiproton experiments of Segrè and Chamberlain and their collaborators began in the first week of August 1955. Their first run on the Bevatron lasted five consecutive days. Lofgren and his collaborators ran their experiments for the following two weeks. The Segrè and Chamberlain group returned on 29 August and ran experiments until the Bevatron broke down on 5 September. On 21 September, a week after operating crews had revived the Bevatron, Lofgren’s group was to begin a four-day run, but instead it ceded its time to Segrè and Chamberlain. That day, the future Nobel laureates and their team found their first evidence of the antiproton based on momentum and velocity. Subsequent analysis of the emulsion-stack images revealed the signature annihilation star that confirmed the discovery. In all, Segrè, Chamberlain and their group counted 60 antiprotons during a run that lasted approximately 7 h.

The public announcement of the antiproton’s discovery received a mixed response. The New York Times enthusiastically proclaimed “New Atom Particle Found; Termed a Negative Proton”, while the particle’s hometown newspaper, the Berkeley Gazette, sombrely announced “Grim new find at UC”. The Berkeley reporter had been told that should an antiproton come in contact with a person, that person would blow up. Today, 50 years on, antiprotons have become a staple of high-energy physics experiments, with trillions being produced at CERN and Fermilab, and no known human fatalities.