The biennial Lepton-Photon conference was held in Uppsala on 30 June – 5 July. The talks erected the impressive edifice known as the Standard Model and showed that experimental ingenuity has not yet shaken its foundations. Francis Halzen summarizes.

Twenty-five years ago at the Rochester meeting held in Madison, Leon Lederman said, “The experimentalists do not have enough money and the theorists are overconfident.” Nobody could have anticipated then that experiments would establish the Standard Model as a gauge theory with a precision of one in 1000, pushing any interference from possible new physics to energy scales beyond 10 TeV. The theorists can modestly claim that they have taken revenge for Lederman’s remark. However, as the Lepton-Photon 2005 meeting underlined, there is no feeling that we are now dotting the i’s and crossing the t’s of a mature theory. All the big questions remain unanswered; worse still, the theory has its own demise built into its radiative corrections.

The electroweak challenge

The most evident of the unanswered questions is why the weak interactions are weak. In 1934 Enrico Fermi provided an answer with a theory that prescribed a quantitative relation between the fine-structure constant, α, and the weak coupling, $G \sim \alpha/M_W^2$, where $M_W$ can be found from the rate of muon decay to be around 100 GeV (once parity violation and neutral currents, which Fermi did not know about, are taken into account). Fermi could certainly not have anticipated that his early phenomenology would develop into a renormalizable gauge theory that allows us to calculate the radiative corrections to his formula. Besides regular higher-order diagrams, loops associated with the top quark and the Higgs boson also contribute, and the results are consistent with observations.
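
As a rough illustration of Fermi's relation, the sketch below evaluates the lowest-order formula $G_F = \pi\alpha/(\sqrt{2}\,M_W^2\sin^2\theta_W)$ for the W mass. The numerical inputs are rounded standard values that are not quoted in this article, and the function name is purely illustrative.

```python
import math

# Rounded standard inputs (assumptions; none of these numbers appear in the article).
ALPHA = 1 / 137.036      # fine-structure constant at low energy
G_FERMI = 1.16638e-5     # Fermi constant from muon decay, in GeV^-2
SIN2_THETA_W = 0.2312    # weak mixing angle, sin^2(theta_W)


def w_mass_tree_level(alpha=ALPHA, g_fermi=G_FERMI, sin2_theta_w=SIN2_THETA_W):
    """Lowest-order W mass in GeV from G_F = pi*alpha / (sqrt(2) * M_W^2 * sin^2(theta_W))."""
    return math.sqrt(math.pi * alpha / (math.sqrt(2) * g_fermi * sin2_theta_w))


if __name__ == "__main__":
    print(f"M_W (lowest order) = {w_mass_tree_level():.1f} GeV")
```

With these inputs the lowest-order estimate comes out at about 77.5 GeV. The gap between that and the measured value of roughly 80.4 GeV is the kind of effect that the radiative corrections have to account for.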

One of my favourite physicists once referred to the Higgs as the “ugly” particle. Indeed, if one calculates the radiative corrections to the mass appearing in the Higgs potential in the same gauge theory that withstood the onslaught of precision experiments at CERN’s Large Electron-Positron collider, the SLAC linear collider and Fermilab’s Tevatron, they grow quadratically with the energy scale. Some new physics is needed to tame the divergent behaviour, at an energy scale, Λ, of less than a few tera-electron-volts by the most conservative of estimates. There is an optimistic interpretation: just as Fermi anticipated particle physics at 100 GeV in 1934, the electroweak gauge theory requires new physics at 2–3 TeV, to be revealed by the Large Hadron Collider (LHC) at CERN and, possibly, the Tevatron.

Dark clouds have built up on this sunny horizon, however, because some electroweak precision measurements match the Standard Model predictions with too high a precision, pushing Λ to around 10 TeV. Some theorists have panicked and proposed that the factor multiplying the unruly quadratic correction, $2M_W^2 + M_Z^2 + M_h^2 - 4M_t^2$, must vanish exactly. This has been dubbed the Veltman condition. It “solves” the problem because the observations can accommodate scales as large as 10 TeV, possibly even higher, once the dominant contribution is eliminated.
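
To see what the Veltman condition would demand, the following sketch solves the factor above for the Higgs mass at which it vanishes. The W, Z and top masses are approximate circa-2005 values assumed for illustration; they are not given in the text, and the function names are hypothetical.

```python
import math

# Approximate masses in GeV (assumed, circa-2005 values; not quoted in the article).
M_W = 80.4
M_Z = 91.19
M_TOP = 172.7


def veltman_factor(m_h, m_w=M_W, m_z=M_Z, m_t=M_TOP):
    """Factor multiplying the quadratic correction: 2 M_W^2 + M_Z^2 + M_h^2 - 4 M_t^2 (GeV^2)."""
    return 2 * m_w**2 + m_z**2 + m_h**2 - 4 * m_t**2


def veltman_higgs_mass(m_w=M_W, m_z=M_Z, m_t=M_TOP):
    """Higgs mass in GeV for which the factor vanishes exactly."""
    return math.sqrt(4 * m_t**2 - 2 * m_w**2 - m_z**2)


if __name__ == "__main__":
    m_h = veltman_higgs_mass()
    print(f"Veltman condition satisfied for M_h = {m_h:.0f} GeV")
    print(f"Residual factor: {veltman_factor(m_h):.2e} GeV^2")
```

With these inputs the condition picks out a Higgs mass a little above 310 GeV, and the result is dominated by the top-quark term.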

If the Veltman condition does happen to be satisfied, it would leave particle physics with an ugly fine-tuning problem reminiscent of the cosmological constant; but this is very unlikely. The LHC must reveal the “Higgs” physics already observed via radiative corrections, or at least discover the physics that implements the Veltman condition, which must still appear at 2–3 TeV although higher scales can be rationalized for other tests of the theory. Supersymmetry is a textbook example. Even though it elegantly controls the quadratic divergence by the cancellation of boson and fermion contributions, it is already fine-tuned at a scale of 2–3 TeV. There has been an explosion of creativity to resolve the challenge in other ways; the good news is that all involve new physics in the form of scalars, new gauge bosons, non-standard interactions, and so on.

Alternatively, we may be guessing the future while holding too small a deck of cards, and the LHC will open a new world that we did not anticipate. The hope then is that particle physics will return to its early traditions where experiment leads theory, as it should, and where innovative techniques introduce new accelerators and detection methods that allow us to observe with an open mind and without a plan.

CP violation and neutrino mass

Another grand unresolved question concerns baryogenesis: why are we here? At some early time in the evolution of the universe quarks and antiquarks annihilated into light, except for just one quark in $10^{10}$ that failed to find a partner and became us. We are here because baryogenesis managed to accommodate Andrei Sakharov’s three conditions, one of which dictates CP violation. Precision data on CP violation in neutral kaons have been accumulated over 40 years, and the measurements can, without exception, be accommodated by the Standard Model with three families of quarks. History has repeated itself for B-mesons, but in only three years, owing to the magnificent performance of the experiments at the B-factories – Belle at KEK and BaBar at SLAC. Direct CP violation has been established in the decay $B_d \to K\pi$ with a significance in excess of 5σ. Unfortunately, this result and a wealth of data contributed by the CLEO collaboration at Cornell, DAFNE at Frascati and the Beijing Spectrometer (BES) fail to reveal evidence for new physics. Given the rapid progress and the better theoretical understanding of the expectations in the Standard Model relative to the kaon system, the hope is that improved data will pierce the Standard Model’s resistant armour. Where theory is concerned, it is worth noting that the lattice now does calculations that are confirmed by experiment.

A third important question concerns neutrino mass. A string of fundamental experimental measurements has led progress in neutrino physics. Supporting evidence from reactor and accelerator experiments, including first data from the reborn Super-Kamiokande detector, has confirmed the discovery of oscillations in solar and atmospheric neutrinos. High-precision data from the pioneering experiments now trickle in more slowly, although evidence for the oscillatory behaviour in L/E of the muon neutrinos in the atmospheric-neutrino beam has become very convincing.

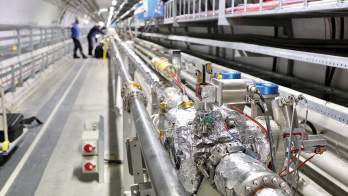

Nevertheless, the future of neutrino physics is undoubtedly bright. Construction at Karlsruhe of the KATRIN spectrometer, which by studying the kinematics of tritium decay will be sensitive to an electron-neutrino mass as low as 0.2 eV, is in progress, and a wealth of ideas on double beta decay and long-baseline experiments is approaching reality. These experiments will have to answer the great “known unknowns” of neutrino physics: their absolute mass and hierarchy, the value of the third small mixing angle and its associated CP-violating phase, and whether neutrinos are really Majorana particles. Discovering neutrinoless double beta decay would settle the last question, yield critical information on the absolute-mass scale and, possibly, resolve the hierarchy problem. In the meantime we will keep wondering whether small neutrino masses are our first glimpse of grand unified theories via the seesaw mechanism, or represent a new Yukawa scale tantalizingly connected to lepton conservation and, possibly, the cosmological constant.

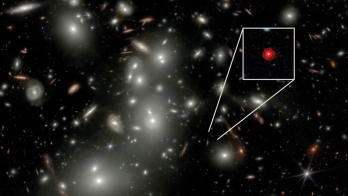

Information on neutrino mass has also emerged from an unexpected direction – cosmology. The structure of the universe is dictated by the physics of cold dark matter and the galaxies we see today are the remnants of relatively small overdensities in its nearly uniform distribution in the very early universe. Overdensity means overpressure that drives an acoustic wave into the other components that make up the universe, i.e. the hot gas of nuclei and photons and the neutrinos. These acoustic waves are seen today in the temperature fluctuations of the microwave background, as well as in the distribution of galaxies in the sky. With a contribution to the universe’s matter similar to that of light, neutrinos play a secondary, but identifiable role. Because of their large mean-free paths, the neutrinos prevent the smaller structures in the cold dark matter from fully developing and this effect is visible in the observed distribution of galaxies.

Simulations of structure formation with varying amounts of matter in the neutrino component, i.e. varying neutrino mass, can be matched to a variety of observations of today’s sky, including measurements of galaxy-galaxy correlations and temperature fluctuations on the surface of last scattering. The results suggest a neutrino mass of no more than 1 eV, summed over the three neutrino flavours – a range compatible with the one deduced from oscillations.

The imprint on the surface of last scattering of the acoustic waves driven into the hot gas of nuclei and photons also reveals a value for the relative abundance of baryons to photons of $6.5^{+0.4}_{-0.3} \times 10^{-10}$ (from the Wilkinson Microwave Anisotropy Probe). Nearly 60 years ago, George Gamow realized that a universe born as hot plasma must consist mostly of hydrogen and helium, with small amounts of deuterium and lithium added. The detailed balance depends on basic nuclear physics, as well as the relative abundance of baryons to photons: the state-of-the-art result of this exercise yields $4.7^{+1.0}_{-0.8} \times 10^{-10}$. The agreement of the two observations is stunning, not just because of their precision, but because of the concordance of two results derived from totally unrelated ways of probing the early universe.
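
One simple way to put a number on this concordance is to express the gap between the two values in units of their combined uncertainty. The sketch below does so under loud assumptions: the errors are treated as Gaussian and independent, and only the two error bars that face each other are used. The function name is illustrative.

```python
import math

# Values quoted in the text, in units of 1e-10: (central, upper error, lower error).
ETA_CMB = (6.5, 0.4, 0.3)   # from the microwave background (WMAP)
ETA_BBN = (4.7, 1.0, 0.8)   # from the light-element abundances


def tension_sigma(high, low):
    """Gap between two measurements in standard deviations.

    Assumes Gaussian, independent errors and combines the lower error of
    the higher value with the upper error of the lower value in quadrature.
    """
    gap = high[0] - low[0]
    return gap / math.hypot(high[2], low[1])


if __name__ == "__main__":
    print(f"Difference: {tension_sigma(ETA_CMB, ETA_BBN):.1f} sigma")
```

On this crude estimate the two determinations differ by less than two standard deviations.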

The physics of partons

Physics at the high-energy frontier is the physics of partons, probing the question of what the proton really is. At the LHC, it will be gluons that produce the Higgs boson, and in the highest-energy experiments, neutrinos interact with sea-quarks in the detector. We can master this physics with unforeseen precision because of a decade of steadily improving measurements of the nucleon’s structure at HERA, DESY’s electron-proton collider. These now include experiments using targets of polarized protons and neutrons.

HERA is our nucleon microscope, tunable by the wavelength and the fluctuation time of the virtual photon exchanged in the electron-proton collision. With the wavelengths achievable, the proton has now been probed with a resolution of one thousandth of its 1 fm size. In these interactions, the fluctuations of the virtual photons survive over distances $ct \sim 1/x$, where $x$ is the fraction of the proton’s momentum carried by the parton. In this way, HERA now studies the production of chains of gluons as long as 10 fm, an order of magnitude larger than, and probably totally insensitive to, the proton target. These are novel structures, the understanding of which has been challenging for quantum chromodynamics (QCD).
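
The two scales in this paragraph can be estimated with a few lines of arithmetic. The sketch below assumes the standard uncertainty-principle estimates: a resolution of ħc/Q for a photon of virtuality Q, and a fluctuation length of ħc/(2 m_p x) in the proton rest frame. The factor of 2 m_p is an assumption that fixes the normalization of the 1/x scaling quoted above, and the function names are illustrative.

```python
HBAR_C = 0.1973    # GeV * fm, conversion constant
M_PROTON = 0.938   # GeV, proton mass


def resolution_fm(q_gev):
    """Spatial resolution in fm of a virtual photon with virtuality Q in GeV: hbar*c / Q."""
    return HBAR_C / q_gev


def fluctuation_length_fm(x):
    """Length in fm over which the photon fluctuation survives: hbar*c / (2 m_p x)."""
    return HBAR_C / (2 * M_PROTON * x)


if __name__ == "__main__":
    print(f"Resolution at Q = 200 GeV: {resolution_fm(200.0):.4f} fm")
    print(f"Fluctuation length at x = 0.01: {fluctuation_length_fm(0.01):.1f} fm")
```

With these estimates, a resolution of one thousandth of a femtometre corresponds to Q of about 200 GeV, and fluctuations of about 10 fm correspond to x near 0.01.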

Theorists analyse HERA data with calculations performed to next-to-next-to-leading order in the strong coupling, and at this level of precision must include the photon as a parton inside the proton. The resulting electromagnetic structure functions violate isospin and differentiate a u quark in a proton from a d quark in a neutron because of the different electric charge of the quark. Interestingly, the inclusion of these effects modifies the extraction of the Weinberg angle from data from the NuTeV experiment at Fermilab, bridging roughly half of the discrepancy between NuTeV’s result and the value in the Particle Data Book. Added to already anticipated intrinsic isospin violations associated with sea-quarks, the NuTeV anomaly may be on its way out.

While history has proven that theorists had the right to be confident in 1980 at the time of Lederman’s remark, they have not faded into the background. Despite the dominance of experimental results at the conference, they provided some highlights of their own. Developing QCD calculations to the level at which the photon structure of the proton becomes a factor is a tour de force, and there were other such highlights at this meeting. Progress in higher-order QCD computations of hard processes is mind-boggling and valuable, sometimes essential, for interpreting LHC experiments. Discussions at the conference of strings, supersymmetry and additional dimensions were very much focused on the capability of experiments to confirm or debunk these concepts.

Towards the highest energies

Theory and experiment joined forces in the ongoing attempts to read the information supplied by the rapidly accumulating data from the Relativistic Heavy Ion Collider (RHIC) at Brookhaven. Rather than the anticipated quark-gluon plasma, the data suggest the formation of a strongly interacting fluid with very low viscosity for its entropy. Similar fluids of cold $^6$Li atoms have been created in atomic traps. Interestingly, theorists are exploiting Juan Maldacena’s connection between four-dimensional gauge theory and 10-dimensional string theory to model just such a thermodynamic system. The model is of a 10D rotating black hole with Hawking-Bekenstein entropy, which accommodates the low viscosities observed. This should give notice that very-high-energy collisions of nuclei may prove more interesting than anticipated from “QCD-inspired” logarithmic extrapolations of accelerator data. Such physics is relevant to analysing cosmic-ray experiments.

A century has passed since cosmic rays were discovered, yet we do not know how and where they are accelerated. Solving this mystery is very challenging, as can be seen by simple dimensional analysis. A magnetic field $B$ of size $R$ can accelerate a particle with electric charge $q$ to an energy $E < \Gamma q v B R$, with velocity $v \sim c$, and no higher (where Γ is a possible boost factor between the frame of the accelerator and ourselves). This is the Hillas formula. Note that it applies to our man-made accelerators, where kilogauss fields over several kilometres yield 1 TeV, because the accelerators reach efficiencies that can come close to the dimensional limit.
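
The Hillas limit is easy to evaluate numerically. In the sketch below the field strengths and sizes are illustrative assumptions rather than figures from the text: a 4 T (40 kG) field over 1 km as a rough stand-in for a terrestrial ring, and a 1 microgauss field over 1 kpc for a galactic structure. The function name is hypothetical.

```python
C_LIGHT = 2.998e8    # speed of light in m/s
PARSEC = 3.086e16    # metres per parsec
GAUSS = 1e-4         # tesla per gauss


def hillas_max_energy_ev(b_tesla, size_m, charge=1, beta=1.0, boost=1.0):
    """Upper limit E < Gamma * q * v * B * R, returned in eV.

    For q = charge * e, the energy in eV is numerically
    charge * v * B * R with v in m/s, B in tesla and R in metres.
    """
    return boost * charge * beta * C_LIGHT * b_tesla * size_m


if __name__ == "__main__":
    ring = hillas_max_energy_ev(4.0, 1.0e3)                     # assumed 4 T over 1 km
    galaxy = hillas_max_energy_ev(1e-6 * GAUSS, 1e3 * PARSEC)   # assumed 1 microgauss over 1 kpc
    print(f"Terrestrial ring: {ring:.2e} eV")
    print(f"Galactic structure: {galaxy:.2e} eV")
```

The assumed ring comes out at about 1 TeV, while the assumed galactic structure reaches only about 1 EeV for protons, two orders of magnitude short of the highest-energy cosmic rays.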

Opportunity for particle acceleration to the highest energies in the cosmos is limited to dense regions where exceptional gravitational forces create relativistic particle flows, such as the dense cores of exploding stars, inflows onto supermassive black holes at the centres of active galaxies, and so on. Given the weak magnetic field (microgauss) of our galaxy, no structures seem large or massive enough to yield the energies of the highest-energy cosmic rays, implying instead extragalactic objects. Common speculations include nearby active galactic nuclei powered by black holes of 1 billion solar masses, or the gamma-ray-burst-producing collapse of a supermassive star into a black hole.

The problem for astrophysics is that in order to reach the highest energies observed, the natural accelerators must have efficiencies approaching 10% to operate close to the dimensional limit. This is so daunting a concept that many believe that cosmic rays are not the beams of cosmic accelerators but the decay products of remnants from the early universe, for instance topological defects associated with a grand unified theory phase transition near $10^{24}$ eV.

There is a realistic hope that this long-standing puzzle will be resolved soon by ambitious experiments: air-shower arrays of 10,000 km², arrays of air Cherenkov detectors, and kilometre-scale neutrino observatories. While no definitive breakthroughs were reported at the conference, preliminary data forecast rapid progress and imminent results in all three areas.

The air-shower array of the Pierre Auger Observatory is confronting the problem of low statistics at the highest energies by instrumenting a huge collection area covering 3000 km² on an elevated plain in western Argentina. The completed detector will observe several thousand events a year above 10 EeV and tens above 100 EeV, with the exact numbers depending on the detailed shape of the observed spectrum.

The end of the cosmic-ray spectrum is a matter of speculation given the somewhat conflicting results from existing experiments. Above a threshold of 50 EeV cosmic rays interact with cosmic microwave photons and lose energy to pions before reaching our detectors. This is the origin of the Greisen-Zatsepin-Kuzmin cutoff that limits the sources to our supercluster of galaxies. This feature in the spectrum is seen by the High Resolution Fly’s Eye (HiRes) in the US at the 5σ level but is totally absent from the data from the Akeno Giant Air Shower Array (AGASA) in Japan.
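
The order of magnitude of this threshold follows from simple kinematics. The sketch below assumes a head-on collision between a proton and a single microwave photon, for which pion production requires $4E\varepsilon \geq m_\pi^2 + 2m_p m_\pi$. The constants are rounded standard values and the function names are illustrative; none of them comes from the text.

```python
BOLTZMANN_EV = 8.617e-5   # Boltzmann constant in eV / K
T_CMB = 2.725             # microwave background temperature in K
M_PROTON = 938.272e6      # proton mass in eV
M_PION = 139.570e6        # charged-pion mass in eV


def photopion_threshold_ev(photon_energy_ev):
    """Proton energy in eV above which p + gamma -> nucleon + pion opens, head-on collision."""
    return (M_PION**2 + 2 * M_PROTON * M_PION) / (4 * photon_energy_ev)


def mean_cmb_photon_energy_ev(temperature_k=T_CMB):
    """Mean photon energy of a blackbody spectrum, about 2.70 k T."""
    return 2.701 * BOLTZMANN_EV * temperature_k


if __name__ == "__main__":
    eps = mean_cmb_photon_energy_ev()
    print(f"Mean photon energy: {eps:.2e} eV")
    print(f"Threshold on a mean photon: {photopion_threshold_ev(eps):.2e} eV")
    print(f"Threshold on a photon of twice the mean energy: {photopion_threshold_ev(2 * eps):.2e} eV")
```

A photon of mean energy gives a threshold of about 110 EeV. Photons in the high-energy tail of the blackbody spectrum lower the effective value towards the 50 EeV quoted above.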

At this meeting the Auger collaboration presented the first results from the partially deployed array, with an exposure similar to that of the final AGASA data. The data confirm the existence of events above 100 EeV, but there is no evidence for the anisotropy in arrival directions claimed by the AGASA collaboration. Importantly, the Auger data reveal a systematic discrepancy between the energy measurements made using the independent fluorescence and Cherenkov detector components. Reconciling the measurements requires that very-high-energy showers develop deeper in the atmosphere than anticipated by the particle-physics simulations used to analyse previous experiments. The performance of the detector foreshadows a qualitative improvement of the observations in the near future.

Cosmic accelerators are also cosmic-beam dumps producing secondary beams of photons and neutrinos. The AMANDA neutrino telescope at the South Pole, now in its fifth year of operation, has steadily improved its performance and has increased its sensitivity by more than an order of magnitude since reporting its first results in 2000. It has reached a sensitivity roughly equal to the neutrino flux anticipated to accompany the highest-energy cosmic rays, dubbed the Waxman-Bahcall bound. Expansion into the IceCube kilometre-scale neutrino observatory is in progress. Companion experiments in the deep Mediterranean are moving from R&D to construction with the goal of eventually building a detector the size of IceCube.

However, it is the HESS array of four air Cherenkov gamma-ray telescopes deployed under the southern skies of Namibia that delivered the particle-astrophysics highlights at the conference. This is the first instrument capable of imaging astronomical sources in gamma rays at tera-electron-volt energies, and it has detected sources with no counterparts in other wavelengths. Its images of young galactic supernova remnants show filament structures of high magnetic fields that are capable of accelerating protons to the energies, and with the energy balance, required to explain the galactic cosmic rays. Although the smoking gun for cosmic-ray acceleration is still missing, the evidence is tantalizingly close.

• The next Lepton-Photon conference will take place in Daegu, Korea, in 2007.