Today, the tools of experimental particle physics are ubiquitous in hospitals and biomedical research. Particle beams damage cancer cells; high-performance computing infrastructures accelerate drug discoveries; computer simulations of how particles interact with matter are used to model the effects of radiation on biological tissues; and a diverse range of particle-physics-inspired detectors, from wire chambers to scintillating crystals to pixel detectors, all find new vocations imaging the human body.

CERN has actively pursued medical applications of its technologies since the 1970s. At that time, knowledge transfer happened mostly serendipitously, through the initiative of individual researchers. An eminent example is Georges Charpak, a detector physicist of outstanding creativity who invented the Nobel-prize-winning multiwire proportional chamber (MWPC) at CERN in 1968. The MWPC’s ability to record millions of particle tracks per second opened a new era for particle physics (CERN Courier December 1992 p1). But Charpak strove to ensure that the technology could also be used outside the field, for example in medical imaging, where its sensitivity promised to reduce radiation doses during imaging procedures. In 1989 he founded a company that developed an imaging technology for radiography, which is currently used in orthopaedics. Following his example, CERN has continued to build a culture of entrepreneurship ever since.

Triangulating tumours

Since as far back as the 1950s, a stand-out application for particle-physics detector technology has been positron-emission tomography (PET) – a “functional” technique that images changes in metabolic processes rather than anatomy. The patient is injected with a compound carrying a positron-emitting isotope, which accumulates in areas of the body with high metabolic activity (the uptake of glucose, for example, could be used to identify a malignant tumour). When a positron annihilates with an electron in the surrounding matter, it produces a pair of back-to-back 511 keV photons. Detecting both photons in coincidence defines a line through the annihilation point, and combining many such lines allows the tumour to be triangulated.
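The coincidence idea can be sketched in a few lines of Python: keep only photons whose energy is compatible with 511 keV, then pair photons that arrive on different detector elements within a short time window. This is a minimal illustration, not any scanner's actual electronics; the 4 ns time window and the 425 to 650 keV energy window are assumed example values, and real systems also have to deal with random and multiple coincidences, which this sketch ignores.

```python
from dataclasses import dataclass


@dataclass
class Single:
    """One detected photon ("single"): detector element, arrival time (ns), energy (keV)."""
    detector: int
    t_ns: float
    energy_kev: float


def find_coincidences(singles, window_ns=4.0, e_low_kev=425.0, e_high_kev=650.0):
    """Pair photons compatible with 511 keV that arrive within window_ns of each
    other on different detector elements. Each returned pair of detector indices
    defines one line of response."""
    # Energy window: reject photons that scattered and lost too much energy.
    accepted = sorted(
        (s for s in singles if e_low_kev <= s.energy_kev <= e_high_kev),
        key=lambda s: s.t_ns,
    )
    pairs = []
    i = 0
    while i < len(accepted) - 1:
        a, b = accepted[i], accepted[i + 1]
        if b.t_ns - a.t_ns <= window_ns and a.detector != b.detector:
            pairs.append((a.detector, b.detector))
            i += 2  # both photons used up
        else:
            i += 1  # a has no partner; move on
    return pairs


singles = [
    Single(3, 100.0, 505.0),
    Single(131, 101.2, 515.0),
    Single(40, 250.0, 200.0),   # fails the energy window
    Single(7, 400.0, 511.0),    # no partner within the time window
    Single(90, 900.0, 498.0),
]
print(find_coincidences(singles))  # [(3, 131)]
```

Sorting by time first means each photon only needs to be compared with its immediate neighbour, which keeps the pairing linear in the number of accepted photons.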

Pioneering developments in PET instrumentation took place in the 1970s. While most scanners were based on scintillating crystals, the work done with wire chambers at the University of California at Berkeley inspired CERN physicists David Townsend and Alan Jeavons to use high-density avalanche chambers (HIDACs) – Charpak’s detector plus a photon-conversion layer. In 1977, with the participation of CERN radiobiologist Marilena Streit-Bianchi, this technology was used to create some of the first PET images, most famously of a mouse. The HIDAC detector later contributed significantly to 3D PET image reconstruction, while a prototype partial-ring tomograph developed at CERN was a forerunner for combined PET and computed tomography (CT) scanners. Townsend went on to work at the Cantonal Hospital in Geneva and then in the US, where his group helped develop the first PET/CT scanner, which combines functional and anatomic imaging.

Crystal clear

In the onion-like configuration of a collider detector, an electromagnetic calorimeter often surrounds a descendant of Charpak’s wire chambers, causing photons and electrons to cascade and measuring their energy. In 1991, to tackle the challenges posed by future detectors at the LHC, the Crystal Clear collaboration was formed to study innovative scintillating crystals suitable for electromagnetic calorimetry. Since its early years, Crystal Clear has also sought to apply the technology to other fields, including healthcare. Several breast, pancreas, prostate and animal-dedicated PET scanner prototypes have since been developed, and the collaboration continues to push the limits of coincidence-time resolution for time-of-flight (TOF) PET.

In TOF–PET, the difference between the arrival times of the two back-to-back photons is recorded, allowing the location of the annihilation along the axis connecting the detection points to be pinned down. Better time resolution constrains that location more tightly, which improves image quality and reduces the acquisition time and radiation dose to the patient. Crystal Clear pursues this work through the development of innovative scintillating-detector concepts, including at a state-of-the-art laboratory at CERN.
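The time-of-flight arithmetic is short enough to write out. If the annihilation point lies a distance d closer to detector 1 than the midpoint of the line of response, photon 1 travels d less and photon 2 travels d more, so the arrival-time difference is 2d/c. The sketch below inverts that relation; the 200 ps coincidence time resolution in the example is an assumed round number, not a figure quoted for any Crystal Clear device.

```python
C_MM_PER_PS = 0.299792458  # speed of light in mm per picosecond


def tof_offset_mm(t1_ps, t2_ps):
    """Distance of the annihilation point from the midpoint of the line of
    response, positive towards detector 1.

    If the point sits d closer to detector 1, photon 1 travels L/2 - d and
    photon 2 travels L/2 + d, so t2 - t1 = 2d / c and d = c (t2 - t1) / 2.
    """
    return C_MM_PER_PS * (t2_ps - t1_ps) / 2.0


def tof_uncertainty_mm(ctr_ps):
    """Localisation uncertainty along the line for a given coincidence time
    resolution (same width convention in and out, e.g. FWHM)."""
    return C_MM_PER_PS * ctr_ps / 2.0


print(tof_offset_mm(0.0, 100.0))   # ~15 mm towards detector 1
print(tof_uncertainty_mm(200.0))   # ~30 mm for an assumed 200 ps resolution
```

Halving the time resolution halves the segment along which the annihilation can lie, which is why each improvement in crystal timing translates directly into less noise in the reconstructed image.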

The dual aims of the collaboration have led to cross-fertilisation, whereby the work done for high-energy physics spills over to medical imaging, and vice versa. For example, the avalanche photodiodes developed for the CMS electromagnetic calorimeter were adapted for the ClearPEM breast-imaging prototype, and technology developed for detecting pancreatic and prostate cancer (EndoTOFPET-US) inspired the “barrel timing layer” of crystals that will instrument the central portion of the CMS detector at the High-Luminosity LHC.

Pixel perfect

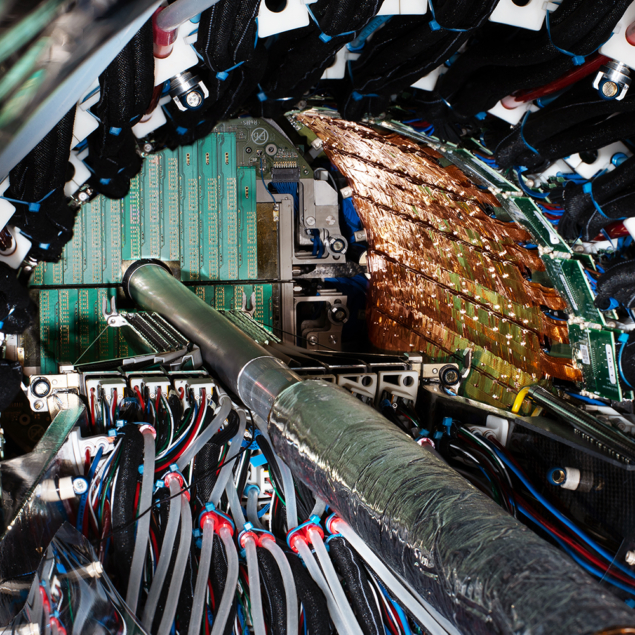

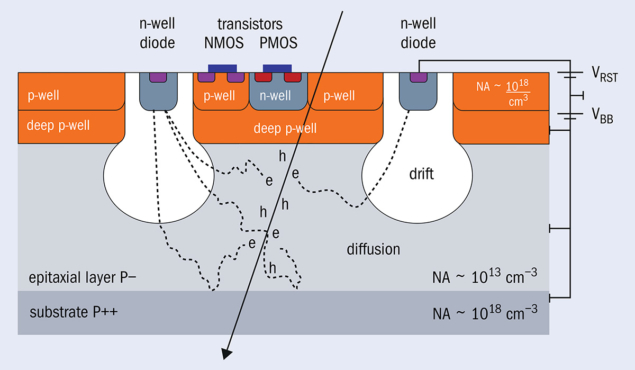

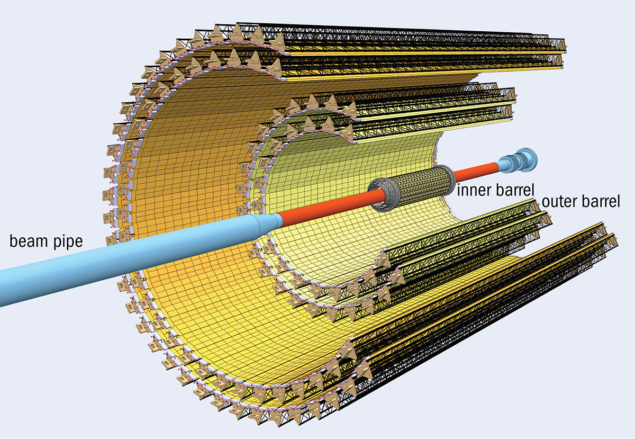

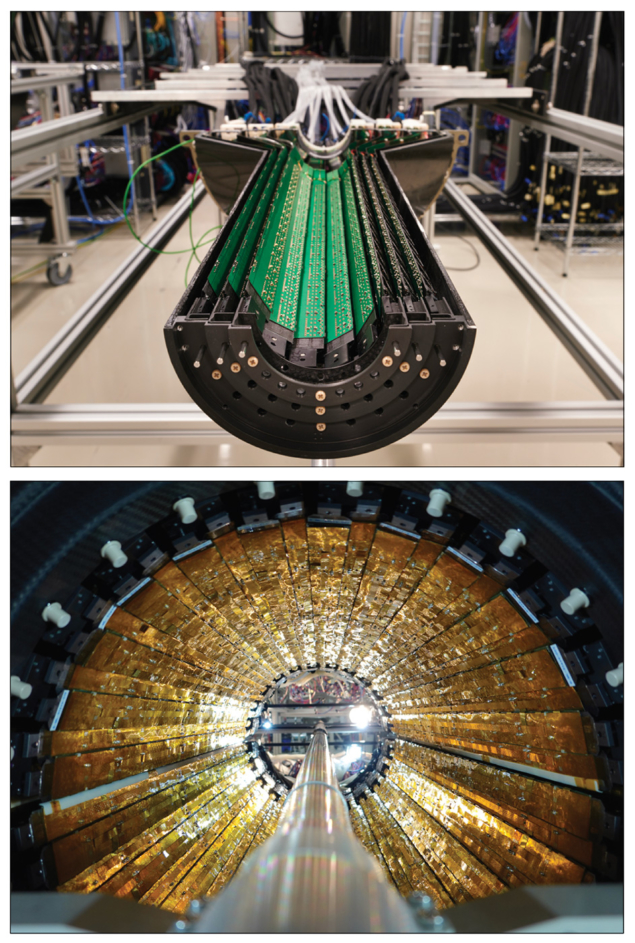

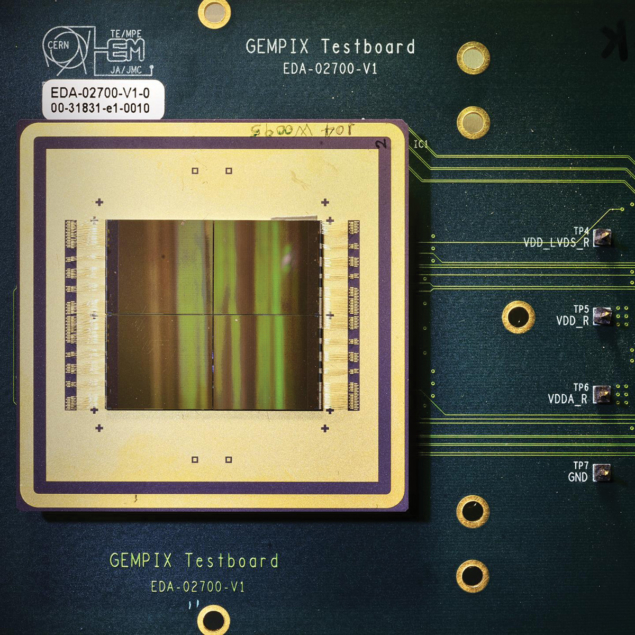

In the same 30-year period, the family of Medipix and Timepix read-out chips has arguably made an even bigger impact on med-tech and other application fields, becoming one of CERN’s most successful technology-transfer cases. Developed with the support of four successive Medipix collaborations, involving a total of 37 research institutes, the technology is inspired by the high-resolution hybrid pixel detectors initially developed to address the challenges of particle tracking in the innermost layers of the LHC experiments. In hybrid detectors, the sensor array and the read-out chip are manufactured independently and later coupled by a bump-bonding process. This means that a variety of sensors can be connected to the Medipix and Timepix chips, according to the needs of the end user.

The first Medipix chip produced in the 1990s by the Medipix1 collaboration was based on the front-end architecture of the Omega3 chip used by the half-million-pixel tracker of the WA97 experiment, which studied strangeness production in lead–ion collisions. Medipix1 also included a counter in each pixel. This demonstrated that the chips could work like a digital camera, providing high-resolution, high-contrast and noise-hit-free images, making them uniquely suitable for medical applications. Medipix2 improved the spatial resolution, and the Medipix2 collaboration also produced a modified version called Timepix that offers time or amplitude measurements in addition to hit counting. Medipix3 and Timepix3 then allowed the energy of each individual photon to be measured: Medipix3 allocates incoming hits to energy bins in each pixel, providing colour X-ray images, while Timepix3 time-stamps hits with a precision of 1.6 ns and sends the full hit data (coordinate, amplitude and time) off chip. Most recently, the Medipix4 collaboration, which was launched in 2016, is designing chips that can be tiled on all four sides to cover large areas seamlessly, and is developing new read-out architectures.
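The energy-binning idea behind Medipix3 can be illustrated with a software model: each pixel compares a photon's deposited energy with a set of thresholds and increments the counter of the bin it falls into. This is a sketch of the concept only, not the chip's circuitry; the threshold values are hypothetical, and photons below the lowest threshold are discarded as a noise threshold would do.

```python
from bisect import bisect_right


def bin_photons(energies_kev, thresholds_kev):
    """Count photons into energy bins defined by ascending thresholds.

    Bin i covers [thresholds[i], thresholds[i + 1]); the last bin is open-ended.
    Photons below the lowest threshold are discarded.
    """
    counts = [0] * len(thresholds_kev)
    for e in energies_kev:
        k = bisect_right(thresholds_kev, e)  # how many thresholds e reaches
        if k > 0:
            counts[k - 1] += 1
    return counts


thresholds = [10.0, 30.0, 50.0, 70.0]  # hypothetical per-pixel thresholds (keV)
hits = [5.0, 12.0, 29.9, 30.0, 45.0, 60.0, 80.0, 100.0]
print(bin_photons(hits, thresholds))   # [2, 2, 1, 2]
```

The four counts per pixel are what turn a single grey-level value into a coarse spectrum, the raw material for the "colour" images described below.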

Medipix and Timepix chips find applications in widely varied fields, from medical imaging to cultural heritage, space dosimetry, materials analysis and education. The industrial partners and licence holders commercialising the technology range from established enterprises to start-up companies. In the medical field, the technology has been applied to X-ray CT prototype systems for digital mammography, CT imagers for mammography, and beta- and gamma-autoradiography of biological samples. In 2018 the first 3D colour X-ray images of human extremities were taken by a scanner developed by MARS Bioimaging Ltd, using the Medipix3 technology. By analysing the spectrum recorded in each pixel, the scanner can distinguish multiple materials in a single scan, opening up a new dimension in medical X-ray imaging: with this chip, images are no longer black and white, but in colour (see “Colour X-ray” image).
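One way to see how a per-pixel spectrum separates materials is a two-bin, two-material decomposition: in each energy bin, the measured log-attenuation is a sum of each material's attenuation coefficient times its path length, giving a small linear system. The sketch below solves the 2×2 case with Cramer's rule. It is an illustration under stated assumptions, not MARS Bioimaging's algorithm; the attenuation coefficients are hypothetical, and practical spectral CT uses more bins, more materials and regularised fitting.

```python
def decompose_two_materials(a_low, a_high, mu):
    """Solve for the path lengths (cm) of two basis materials.

    a_low, a_high: measured -ln(I / I0) in a low- and a high-energy bin
    mu: {"low": (mu1, mu2), "high": (mu1, mu2)} attenuation coefficients (1/cm)
    """
    (m1l, m2l), (m1h, m2h) = mu["low"], mu["high"]
    det = m1l * m2h - m2l * m1h
    if abs(det) < 1e-12:
        raise ValueError("materials are indistinguishable in these two bins")
    t1 = (a_low * m2h - m2l * a_high) / det
    t2 = (m1l * a_high - a_low * m1h) / det
    return t1, t2


# Hypothetical coefficients: material 2 attenuates much more strongly at low energy.
mu = {"low": (0.25, 1.5), "high": (0.2, 0.6)}

# Forward model for 10 cm of material 1 and 2 cm of material 2 ...
a_low = 0.25 * 10 + 1.5 * 2
a_high = 0.2 * 10 + 0.6 * 2

# ... and the decomposition recovers both thicknesses.
print(tuple(round(t, 6) for t in decompose_two_materials(a_low, a_high, mu)))  # (10.0, 2.0)
```

The decomposition only works because the two materials' attenuation changes differently with energy; with a single, energy-integrated measurement the two unknowns could not be separated.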

Although the primary aim of the Timepix3 chip was applications outside of particle physics, its development also led directly to new solutions in high-energy physics, such as the VELOpix chip for the ongoing LHCb upgrade, which permits data-driven trigger-free operation for the first time in a pixel vertex detector in a high-rate experiment.

Dosimetry

CERN teams are also exploring the potential uses of Medipix technology in dosimetry. In 2019, for example, Timepix3 was employed to determine the exposure of medical personnel to ionising radiation in an interventional radiology theatre at Christchurch Hospital in New Zealand. The chip was able to map the radiation fluence and energy spectrum of the scattered photon field that reaches the practitioners, and can also provide information about which parts of the body are most exposed to radiation.

Meanwhile, “GEMPix” detectors are being evaluated for use in quality assurance in hadron therapy. GEMPix couples gas electron multipliers (GEMs) – a type of gaseous ionisation detector developed at CERN – with the Medipix integrated circuit as readout to provide a hybrid device capable of detecting all types of radiation with a high spatial resolution. Following initial results from tests on a carbon-ion beam performed at the National Centre for Oncological Hadrontherapy (CNAO) in Pavia, Italy, a large-area GEMPix detector with an innovative optical read-out is now being developed at CERN in collaboration with the Holst Centre in the Netherlands. A version of the GEMPix called GEMTEQ is also currently under development at CERN for use in “microdosimetry”, which studies the temporal and spatial distributions of absorbed energy in biological matter to improve the safety and effectiveness of cancer treatments.

Knowledge transfer at CERN

As a publicly funded laboratory, CERN has a remit, in addition to its core mission to perform fundamental research in particle physics, to expand the opportunities for its technology and expertise to deliver tangible benefits to society. The CERN Knowledge Transfer group strives to maximise the impact of CERN technologies and know-how on society in many ways, including through the establishment of partnerships with clinical, industrial and academic actors, support to budding entrepreneurs and seed funding to CERN personnel.

Supporting the knowledge-transfer process from particle physics to medical research and the med-tech industry is a promising avenue to boost healthcare innovation and provide solutions to present and future health challenges. CERN has provided a framework for the application of its technologies to the medical domain through a dedicated strategy document approved by its Council in June 2017. CERN will continue its efforts to maximise the impact of the laboratory’s know-how and technologies on the medical sector.

Two further dosimetry applications illustrate how technologies developed for CERN’s needs have expanded into commercial medical applications. The B-RAD, a hand-held radiation survey meter designed to operate in strong magnetic fields, was developed by CERN in collaboration with the Polytechnic of Milan and is now available off-the-shelf from an Italian company. Originally conceived for radiation surveys around the LHC experiments and inside ATLAS with the magnetic field on, it has found applications in several other tasks, such as radiation measurements on permanent magnets and radiation surveys at PET-MRI scanners and at MRI-guided radiation therapy linacs. Meanwhile, the radon dose monitor (RaDoM) tackles exposure to radon, a natural radioactive gas that is the second leading cause of lung cancer after smoking. The RaDoM device estimates the dose directly, by reproducing the energy deposition inside the lung, instead of deriving it from a measurement of the radon concentration in air; CERN also developed a cloud-based service to collect and analyse the data, control the measurements and drive mitigation measures based on real-time data. The technology is licensed to the CERN spin-off BAQ.

Cancer treatments

Having surveyed the medical applications of particle detectors, we turn to the technology driving the beams themselves. Radiotherapy is a mainstay of cancer treatment, using ionising radiation to damage the DNA of cancer cells. In most cases, a particle accelerator is used to generate a therapeutic beam. Conventional radiation therapy uses X-rays generated by a linac, and is widely available at relatively low cost.

Radiotherapy with protons was first proposed by Fermilab’s founding director Robert Wilson in 1946 while he was at Berkeley, and interest in the use of heavier ions such as carbon arose soon after. While the dose from an X-ray beam falls off roughly exponentially as it penetrates tissue, protons and other ions deposit energy at a rate that rises to a sharp maximum, the “Bragg” peak, at the very end of their path, enabling the dose to be concentrated on the tumour while sparing the surrounding healthy tissues. Carbon ions have the additional advantage of a higher radiobiological effectiveness, and can control tumours that are resistant to X-rays and protons. Widespread adoption of hadron therapy is, however, limited by the cost and complexity of the required infrastructures, and by the need for more pre-clinical and clinical studies.
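The contrast between the two beam types can be made concrete with two textbook approximations: the Beer–Lambert law for the fraction of X-ray photons surviving to a given depth, and the empirical Bragg–Kleeman rule R = αE^p for the range of a proton in water, near whose end the Bragg peak sits. The α and p values below are commonly quoted fits for water and are only accurate to a few per cent; the X-ray attenuation coefficient is a hypothetical round number.

```python
import math


def proton_range_cm(energy_mev, alpha=0.0022, p=1.77):
    """Approximate range (cm) of a proton in water from the empirical
    Bragg-Kleeman rule R = alpha * E**p; alpha and p are approximate fit values."""
    return alpha * energy_mev ** p


def photon_fraction_remaining(depth_cm, mu_per_cm):
    """Fraction of a narrow X-ray beam still unattenuated at depth (Beer-Lambert law)."""
    return math.exp(-mu_per_cm * depth_cm)


for e in (70.0, 150.0, 230.0):
    print(f"{e:.0f} MeV protons stop after ~{proton_range_cm(e):.1f} cm of water")

# Hypothetical attenuation coefficient for a megavoltage X-ray beam in water.
print(f"X-ray photons left at 15 cm: {photon_fraction_remaining(15.0, 0.05):.2f}")
```

The photons never stop: a sizeable fraction passes through the tumour and on into healthy tissue behind it, whereas the proton range is fixed by the beam energy, which is why choosing the energy amounts to choosing the treatment depth.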

PIMMS and NIMMS

Between 1996 and 2000, at the initiative of Ugo Amaldi, Meinhard Regler and Phil Bryant, CERN hosted the Proton-Ion Medical Machine Study (PIMMS). PIMMS produced and made publicly available an optimised design for a cancer-therapy synchrotron capable of using both protons and carbon ions. After further enhancement by Amaldi’s TERA foundation, and with seminal contributions from Italian research organisation INFN, the PIMMS concept evolved into the accelerator at the heart of the CNAO hadron therapy centre in Pavia. The MedAustron centre in Wiener Neustadt, Austria, was then based on the CNAO design. CERN continues to collaborate with CNAO and MedAustron by sharing its expertise in accelerator and magnet technologies.

In the 2010s, CERN teams put to use the experience gained in the construction of Linac 4, which became the source of proton beams for the LHC in 2020, and developed an extremely compact high-frequency radio-frequency quadrupole (RFQ) to be used as injector for a new generation of high-frequency, compact linear accelerators for proton therapy. The RFQ accelerates the proton beam to 5 MeV after only 2 m, and operates at 750 MHz – almost double the frequency of conventional RFQs. A major advantage of using linacs for proton therapy is the possibility of changing the energy of the beam, and hence the depth of treatment in the body, from pulse to pulse by switching off some of the accelerating units. The RFQ technology was licensed to the CERN spin-off ADAM, now part of AVO (Advanced Oncotherapy), and is being used as an injector for a breakthrough linear proton therapy machine at the company’s UK assembly and testing centre at STFC’s Daresbury Laboratory.
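The pulse-to-pulse energy switching described above can be sketched as a simple bookkeeping problem: each powered accelerating unit adds some energy, unpowered units are drifted through, and the control system picks how many units to power for each pulse. Only the 5 MeV injection energy comes from the text; the per-unit energy gain and the number of units are hypothetical values chosen for illustration, and the coarse 10 MeV steps are an artefact of this simplification rather than a property of the real machine.

```python
import math

INJECTION_MEV = 5.0        # RFQ output energy quoted in the text
GAIN_PER_UNIT_MEV = 10.0   # hypothetical energy gain of each accelerating unit
N_UNITS = 23               # hypothetical number of units downstream of the RFQ


def beam_energy(active_units):
    """Output energy when the first active_units units are powered and the
    rest are simply drifted through (a simplifying assumption)."""
    return INJECTION_MEV + active_units * GAIN_PER_UNIT_MEV


def units_for_energy(target_mev):
    """Smallest number of powered units that brings the beam to target_mev."""
    if target_mev <= INJECTION_MEV:
        return 0
    n = math.ceil((target_mev - INJECTION_MEV) / GAIN_PER_UNIT_MEV)
    if n > N_UNITS:
        raise ValueError(f"{target_mev} MeV is above the {beam_energy(N_UNITS)} MeV maximum")
    return n


# Three consecutive pulses aimed at three different depths.
for target in (70.0, 150.0, 230.0):
    n = units_for_energy(target)
    print(f"target {target:.0f} MeV -> {n} units on, {beam_energy(n):.0f} MeV delivered")
```

Because the choice is made electronically for each pulse, no mechanical degrader has to move between pulses, which is the practical advantage the text attributes to linacs.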

In 2019 CERN launched the Next Ion Medical Machine Study (NIMMS) to develop cutting-edge accelerator technologies for a new generation of compact and cost-effective ion-therapy facilities. The goal is to propel the use of ion therapy, given that proton installations are already commercially available and that only four ion centres exist in Europe, all based on bespoke solutions.

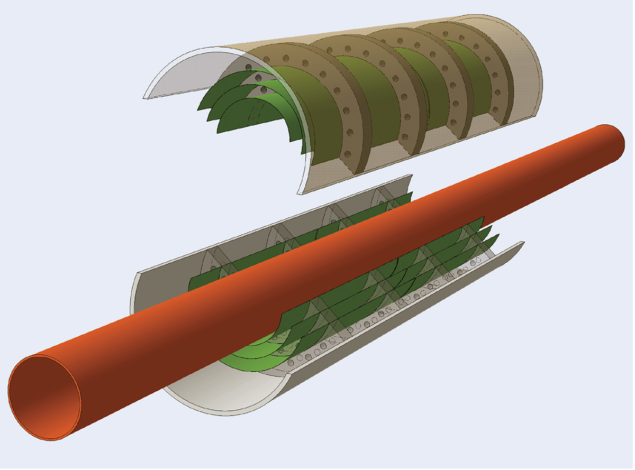

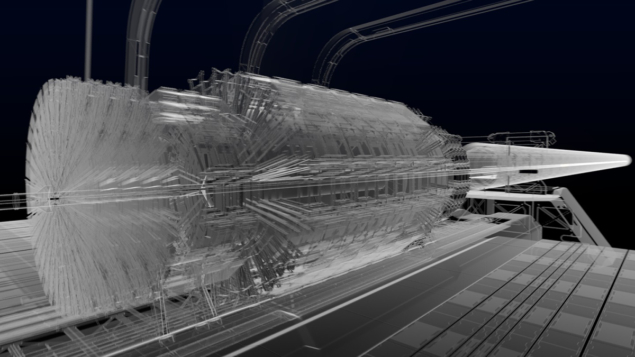

NIMMS is organised along four lines of activity. The first aims to reduce the footprint of facilities by developing new superconducting magnet designs with large apertures and curvatures, and suitable for pulsed operation. The second is the design of a compact linear accelerator optimised for installation in hospitals, which includes an RFQ based on the design of the proton-therapy RFQ, and a novel source for fully-stripped carbon ions. The third concerns two innovative gantry designs, with the aim of reducing the size, weight and complexity of the massive magnetic structures that allow the beam to reach the patient from different angles: the SIGRUM lightweight rotational gantry originally proposed by TERA, and the GaToroid gantry invented at CERN, which eliminates the need to rotate the structure mechanically by using a toroidal magnet (see figure “GaToroid”). Finally, new high-current synchrotron designs will be developed to reduce the cost and footprint of facilities while shortening the treatment time compared to present European ion-therapy centres: these will include a superconducting and a room-temperature option, and advanced features such as multi-turn injection for 10¹⁰ particles per pulse, fast and slow extraction, and multiple-ion operation. Through NIMMS, CERN is contributing to the efforts of a flourishing European community, and a number of collaborations have already been established.

Another recent example of frontier radiotherapy research is CERN’s collaboration with Switzerland’s Lausanne University Hospital (CHUV) to build a new cancer therapy facility that would deliver high doses of radiation from very-high-energy electrons (VHEE) in milliseconds instead of minutes. The goal here is to exploit the so-called FLASH effect, wherein radiation doses administered over very short time periods appear to cause less damage to healthy tissue while remaining as effective against tumours, potentially minimising harmful side-effects. This pioneering installation will be based on the high-gradient accelerator technology developed for the proposed CLIC electron–positron collider. Several research teams have carried out biomedical studies related to VHEE and FLASH at the CERN Linear Electron Accelerator for Research (CLEAR), one of the few facilities available for characterising VHEE beams.
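The difference between "milliseconds" and "minutes" is easiest to appreciate as an average dose rate. The sketch below compares the same hypothetical 10 Gy delivered in 100 ms and in 5 minutes against a threshold of 40 Gy/s, an order of magnitude often quoted in the FLASH literature and treated here as an assumption. Whether the FLASH effect actually appears is thought to depend on more than the mean rate (pulse structure and total dose, for example), so this is only a first check.

```python
FLASH_THRESHOLD_GY_PER_S = 40.0  # often-quoted order of magnitude; an assumption here


def mean_dose_rate(dose_gy, duration_s):
    """Average dose rate over the whole delivery, in Gy/s."""
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    return dose_gy / duration_s


def is_ultra_high_dose_rate(dose_gy, duration_s, threshold=FLASH_THRESHOLD_GY_PER_S):
    """True if the mean dose rate reaches the assumed FLASH threshold."""
    return mean_dose_rate(dose_gy, duration_s) >= threshold


# Same hypothetical 10 Gy dose, delivered in 100 ms versus 5 minutes.
print(mean_dose_rate(10.0, 0.1), is_ultra_high_dose_rate(10.0, 0.1))
print(mean_dose_rate(10.0, 300.0), is_ultra_high_dose_rate(10.0, 300.0))
```

The two deliveries differ in dose rate by more than three orders of magnitude, which is the regime that high-gradient electron linacs are intended to reach.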

Radioisotopes

CERN’s accelerator technology is also deployed in a completely different way to produce innovative radioisotopes for medical research. In nuclear medicine, radioisotopes are used both for internal radiotherapy and for diagnosis of cancer and other diseases, and progress has always been connected to the availability of novel radioisotopes. Here, CERN has capitalised on the experience of its ISOLDE facility, which during the past 30 years has used the proton beam from the CERN PS Booster to produce 1300 different isotopes from 73 chemical elements for research ranging from nuclear physics to the life sciences. A new facility, called ISOLDE-MEDICIS, is entirely dedicated to the production of unconventional radioisotopes with the right properties to enhance the precision of both patient imaging and treatment. In operation since late 2017, MEDICIS will expand the range of radioisotopes available for medical research, some of which can be produced only at CERN, and send them to partner hospitals and research centres for further studies. During its 2019 and 2020 harvesting campaigns, for example, MEDICIS demonstrated the capability of purifying isotopes such as ¹⁶⁹Er or ¹⁵³Sm to new purity grades, making them suitable for innovative treatments such as targeted radioimmunotherapy.
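Sending isotopes to partner hospitals means racing radioactive decay, so the activity that must be dispatched depends on the half-life and the transit time. The sketch below uses approximate physical half-lives for the two isotopes named above (about 9.4 days for ¹⁶⁹Er and about 46.3 hours for ¹⁵³Sm); the 48-hour transit time and the 100 MBq required on arrival are hypothetical example values.

```python
# Approximate physical half-lives, in hours.
HALF_LIFE_H = {
    "Er-169": 9.4 * 24.0,  # about 9.4 days
    "Sm-153": 46.3,        # about 1.9 days
}


def remaining_fraction(isotope, elapsed_h):
    """Fraction of the initial activity left after elapsed_h hours."""
    return 0.5 ** (elapsed_h / HALF_LIFE_H[isotope])


def activity_to_ship(isotope, needed_mbq, transit_h):
    """Activity to dispatch so that needed_mbq is still present on arrival."""
    return needed_mbq / remaining_fraction(isotope, transit_h)


for iso in HALF_LIFE_H:
    print(iso, round(remaining_fraction(iso, 48.0), 3),
          round(activity_to_ship(iso, 100.0, 48.0), 1))
```

For the shorter-lived ¹⁵³Sm, a two-day journey already costs about half the activity, which illustrates why production, purification and delivery have to be planned together.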

Data handling and simulations

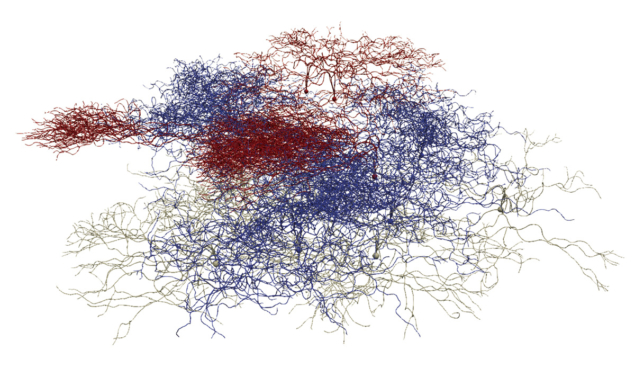

The expertise of particle physicists in data handling and simulation tools is also increasingly finding applications in the biomedical field. The FLUKA and Geant4 simulation toolkits, for example, are being used in several applications, from detector modelling to treatment planning. Recently, CERN contributed its know-how in large-scale computing to the BioDynaMo collaboration, initiated by CERN openlab together with Newcastle University, which initially aimed to provide a standardised, high-performance and open-source platform to support complex biological simulations (see figure “Computational neuroscience”). By hiding its computational complexity, BioDynaMo allows researchers to easily create, run and visualise 3D agent-based simulations. It is already used by academia and industry to simulate cancer growth, accelerate drug discoveries and simulate how the SARS-CoV-2 virus spreads through the population, among other applications, and is now being extended beyond biological simulations to visualise the collective behaviour of groups in society.
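To make "agent-based" concrete, here is a toy cell-growth model in plain Python: every cell is an agent with its own position and size, and at each time step it applies a local rule (grow, and divide once large enough). This is a minimal sketch of the modelling style only; it does not use or reproduce BioDynaMo's C++ API, and the growth rate, division size and placement rule are hypothetical. Real platforms add mechanical forces between cells, diffusing substances and spatial indexing so that millions of agents can be simulated.

```python
import random
from dataclasses import dataclass


@dataclass
class Cell:
    x: float
    y: float
    diameter: float = 10.0


def step(cells, rng, growth=1.0, divide_at=20.0):
    """One time step: every cell grows; cells reaching divide_at split in two."""
    new_cells = []
    for c in cells:
        c.diameter += growth
        if c.diameter >= divide_at:
            # Each daughter gets half the volume, so its diameter shrinks by 2**(1/3).
            d = c.diameter / 2 ** (1 / 3)
            dx, dy = rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)
            new_cells.append(Cell(c.x + dx, c.y + dy, d))
            new_cells.append(Cell(c.x - dx, c.y - dy, d))
        else:
            new_cells.append(c)
    return new_cells


def simulate(n_steps, seed=0):
    """Grow a population from a single cell; the seed makes runs reproducible."""
    rng = random.Random(seed)
    cells = [Cell(0.0, 0.0)]
    for _ in range(n_steps):
        cells = step(cells, rng)
    return cells


print(len(simulate(20)))  # population size after 20 steps
```

Each agent only looks at its own state here, so the loop is trivially parallel; the computational difficulty that platforms such as BioDynaMo are designed to hide comes from interactions between neighbouring agents.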

Many more projects related to medical applications are in their initial phases. The breadth of knowledge and skills available at CERN was also evident during the COVID-19 pandemic when the laboratory contributed to the efforts of the particle-physics community in fields ranging from innovative ventilators to masks and shields, from data management tools to open-data repositories, and from a platform to model the concentration of viruses in enclosed spaces to epidemiologic studies and proximity-sensing devices, such as those developed by Terabee.

Fundamental research has a priceless goal: knowledge for the sake of knowledge. The theories of relativity and quantum mechanics were considered abstract and esoteric when they were developed; a century later, we owe to them the remarkable precision of GPS systems and the transistors that are the foundation of the electronics-based world we live in. Particle-physics research acts as a trailblazer for disruptive technologies in the fields of accelerators, detectors and computing. Even though their impact is often difficult to track as it is indirect and diffused over time, these technologies have already greatly contributed to the advances of modern medicine and will continue to do so.

Michael Benedikt (left) completed his PhD on medical accelerators as a member of the CERN Proton-Ion Medical Machine Study group. He joined CERN’s accelerator operation group in 1997, where he headed different sections before becoming deputy group leader from 2006 to 2013. From 2008 to 2013, he was project leader for the accelerator complex for the MedAustron hadron therapy in Austria, and since 2013 he has led the Future Circular Collider Study at CERN.
