Image credit: CERN.

Interest in CERN has evolved over the years. At its inception, the Organization’s founding member states clearly saw the new institution’s potential as a centre of excellence for basic research, a driver of innovation, a provider of first-class education and a catalyst for peace. After several decades of business as usual, CERN is again on the radar of its member-state governments. This is spurred on partly by the public interest that has made CERN something of a household name. But whether in the public spotlight or not, it is incumbent on CERN to spell out to all of its stakeholders why it represents such good value for money today, just as it did 60 years ago. Even though the reasons may be familiar to those working at CERN, they are not always so clear to government officials and policy makers, and it’s worth setting them out in detail.

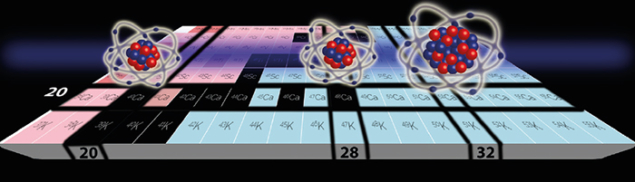

First and foremost, CERN has made major contributions to how we understand the world that we live in. The discovery and detailed study of weak vector bosons in the 1980s and 1990s, and the recent discovery of the Higgs boson, messenger of the Brout–Englert–Higgs mechanism, have contributed much to our understanding of nature at the level of the fundamental particles and their interactions: now rightfully called the Standard Model of particle physics. This on its own is a major cultural achievement, and it has taught us much about how we have arrived at this point in history: right from the moment it all began, 13.8 billion years ago. Appreciation of this cultural contribution has never been higher than today. More than 100,000 people visit CERN every year, including hundreds of journalists reaching millions of people. None leave CERN unimpressed, and all are, without a doubt, culturally enriched by their experience.

Educating and innovating

CERN’s second major area of impact is education: the Organization has educated many generations of top-level physicists, engineers and technicians. Some have remained at CERN, while others have gone on to pursue careers in basic research at universities and institutes elsewhere, thereby contributing to top-level education and multiplying the effect of their own experience at CERN. Many more, however, have made their way into industry, fulfilling an important mission for CERN – that of providing skilled people to advance the economies of our member states and collaborating nations. More than 500 doctoral degrees are awarded annually on the basis of work carried out at CERN experiments and accelerators. In 2015, more than 400 doctoral, technical and administrative students were welcomed by CERN, usually staying for between several months and a year. The CERN summer-student and teacher programmes, which provide short stints of intensive education, also welcome hundreds of students and high-school teachers every year.

A third important contribution of CERN is the innovation that results from research that requires technology at levels and in areas where no one has gone before. The best-known example of CERN technology is the World Wide Web, which has profoundly changed the way that our society works worldwide. But the web is just the tip of the iceberg. Advances in fields such as magnet technology, cryogenics, electronics, detector technology and statistical methods have also made their way into society in ways that are equally impactful, although less evident. While the societal benefits of techniques such as advanced photon and lepton detection may not seem immediately relevant beyond the realms of research, the impact they have had in medical imaging, for instance, is profound.

Often not very visible, but no less effective in contributing to our prosperity and well-being, developments such as these are a vital part of the research cycle. CERN is increasingly taking a proactive approach towards transferring its innovation, knowledge and skills to those who can make these count for society as a whole, and this is generally well appreciated. Recent initiatives include public–private partnerships such as OpenLab, Medipix and IdeaSquare, which provide low-entry-threshold mechanisms for companies to engage with CERN technology. In return, CERN benefits through stimulating the kind of industrial innovation that enables next-generation accelerators and detectors.

The recent Viewpoint by CERN Director-General Fabiola Gianotti (CERN Courier March 2016 p5) gives a superb outline of the opportunities and challenges for particle physics during the coming years. Clearly it will require great dexterity to juggle the continuation of a state-of-the-art research programme at the LHC and a diverse range of other facilities with greater engagement in important activities beyond CERN, such as the US neutrino programme, while at the same time preparing for future accelerators and detectors. This will stretch CERN’s capabilities to the limit. But it is precisely this challenge that will motivate the Organization to do better and innovate in all areas, with inevitable benefits for society. Scientific culture and societal impacts advancing hand-in-hand through cutting-edge research: it is this that makes CERN worthy of the support it receives from governments worldwide.