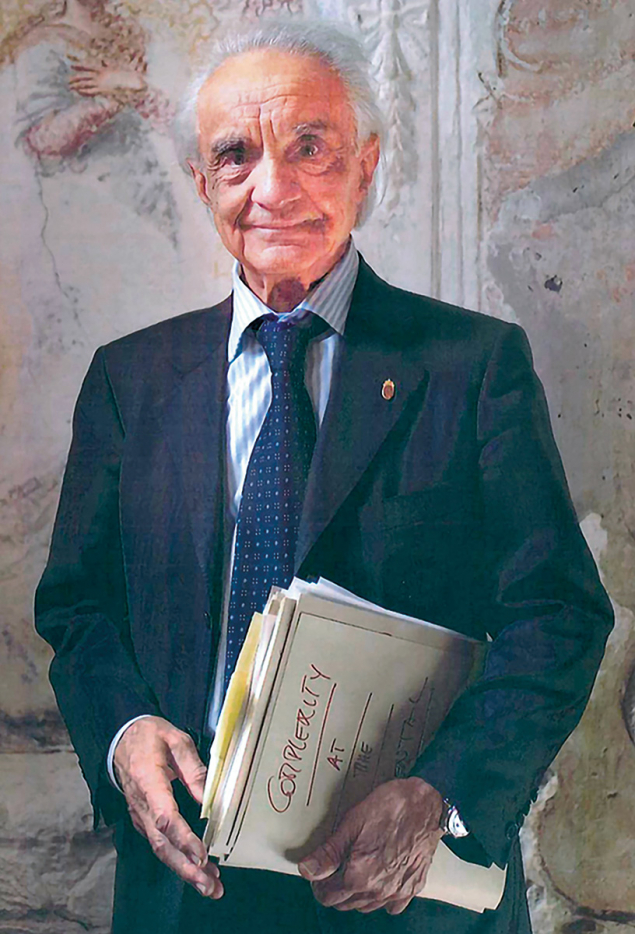

Antonino Zichichi, one of the most influential figures in high-energy physics and a towering presence in Italian scientific culture, passed away in Rome on 9 February 2026, at the age of 96.

Born in Trapani, Sicily, in 1929, into an ancient family from Erice, Zichichi graduated from the University of Palermo in the early 1950s. In 1955 he joined CERN, at the dawn of its experimental programme, and in 1965 he led the experiment at the Proton Synchrotron that culminated in the discovery of the antideuteron. This antinucleus, composed of an antiproton and an antineutron, provided decisive confirmation of the existence of nuclear antimatter.

A professor of physics at the University of Bologna since 1960, he led the Bologna–CERN–Frascati collaboration, which carried out the first search for the tau lepton and established the experimental method through which its discovery would later be achieved at SLAC National Accelerator Laboratory. Beyond these early milestones, his results and discoveries were numerous and fundamental, including significant limits on free quark production in strong and weak interactions, the discovery of the effective energy in QCD and evidence for the first beauty baryon.

A master of invention

Equally important were his early inventions, among them the electronic circuit for time-of-flight measurements, the preshower for calorimetry and a new technology for high-precision polynomial magnetic fields. Later, by securing Italian funding for the LAA project at CERN, he launched an extensive R&D programme on innovative detection technologies. Notably, this programme enabled the development of microelectronics which, together with the design of silicon strip and pixel detectors, would become crucial for the LHC experiments. It also produced the Multigap Resistive Plate Chamber (MRPC), a detector with record time resolution. The first large-scale implementation of MRPC technology was the ALICE experiment's Time-of-Flight (TOF) system, which Zichichi led for over two decades.

His scientific legacy cannot be separated from his profound and lasting contribution to the Italian National Institute for Nuclear Physics (INFN). Serving as its president from 1977 to 1982, he played a decisive role in strengthening the institute at a crucial stage of its development, consolidating its international standing and reinforcing Italy’s participation in the great global enterprises of particle physics. Under his leadership, INFN expanded its experimental commitments at CERN and in the US, while investing strategically in detector development and advanced technologies.

Zichichi was instrumental in establishing major research facilities, and many large projects are tied to his name: from the LEP and LHC projects at CERN to the HERA project at DESY and INFN's Gran Sasso National Laboratories, which he conceived and strategically designed with their experimental halls pointing towards CERN. Today the Gran Sasso National Laboratories, recognised as the world's foremost underground facility for astroparticle physics and attracting thousands of scientists from leading institutions across the globe, stand as a monumental testament to Zichichi's foresight. The idea that an international research centre such as Gran Sasso can serve as a crossroads for scientists of different backgrounds, cultures and institutions, collaborating in fundamental research, reflects the vision that Zichichi consistently pursued: science as a means of diplomacy, enabling dialogue among nations around a common goal.

In 1963, strongly convinced that scientific cooperation could be a concrete tool for diplomacy and peacebuilding, Zichichi founded the Ettore Majorana Foundation and Center for Scientific Culture in Erice, Sicily. It became a hub for international scientific collaboration and a forum for discussion among researchers from around the world. From there, in 1982, he promoted the Erice Statement for Peace, an urgent appeal to the international scientific community to place its work in the service of peace rather than war, at a time of heightened risk of global nuclear conflict.

That same conviction informed his engagement in European and international scientific governance. Zichichi was among the founders of the European Physical Society (serving as its president from 1978 to 1980), chaired the NATO Committee on Disarmament Technologies and represented the European Economic Community on the scientific committee of the International Science and Technology Center in Moscow. From 1986 onwards, as president of the World Lab and the World Federation of Scientists, he supported scientific development in emerging countries and focused attention on planetary emergencies.

He built bridges not only between scientists but also between science, culture and society. A highly skilled communicator and educator, he published widely read books and essays aimed at the broader public and appeared frequently in the Italian media, inspiring young people across Italy and conveying to them his passion for scientific research and his belief in its importance. He helped shape scientific culture in Italy in the latter half of the 20th century, insisting that fundamental research is not merely a technical endeavour but a cornerstone of human progress.

Multiple honours

Over the course of his long career, Zichichi received more than 60 awards and honours in Italy and abroad, including the Knight Grand Cross of the Order of Merit of the Italian Republic and the Enrico Fermi Prize of the Italian Physical Society. He was also president of the Enrico Fermi Historical Museum and Research Centre, a role that further testified to his dedication to preserving and promoting Italy's scientific heritage.

With his death, the global scientific community loses a visionary researcher, a formidable architect of international scientific collaborations, and a tireless advocate for science as a vehicle of dialogue and peace. What always struck those who shared with him the demanding and inspiring journey of research was his unfailing enthusiasm and deep passion for science, which he cultivated until his final days. That same passion lives on not only in his discoveries and in the institutions he helped to create, but also in the generations of scientists who continue to build bridges across borders in the name of knowledge.