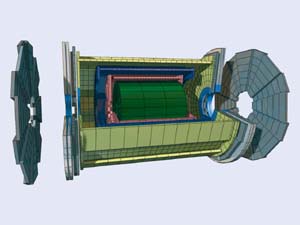

By replacing its Large Electron Positron collider with a proton-proton collider, CERN will be able to generate much higher energy collisions for physicists to examine. The amount of energy lost to synchrotron radiation by particles on curved paths decreases with the mass of the particles, and is therefore much less for protons than for electrons. Synchrotron radiation nevertheless poses a problem for designers of high-intensity proton accelerators, since although the energy loss is less, the number of photons emitted can actually be higher and their energy increases with the cube of the beam energy. These photons can lead to a number of undesirable phenomena, including heating and gas desorption from the vacuum chamber walls. Perhaps the most difficult to deal with, however, is photoemission of electrons from the vacuum chamber, which at 7 TeV in the Large Hadron Collider (LHC) is the dominant mechanism of electron generation and can lead to the establishment of an electron cloud that can cause beam deterioration.
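The scalings described above can be made explicit with the standard textbook expressions for synchrotron radiation. The symbols are not from the article and are introduced here for illustration: $U_0$ is the energy lost per turn by a particle of charge $e$, mass $m$ and energy $E = \gamma m c^2$ on an orbit of bending radius $\rho$, and $\varepsilon_c$ is the critical (characteristic) photon energy.

```latex
U_0 = \frac{e^2}{3\varepsilon_0}\,\frac{\beta^3\gamma^4}{\rho}
\;\propto\; \frac{E^4}{m^4\rho},
\qquad
\varepsilon_c = \frac{3}{2}\,\frac{\hbar c\,\gamma^3}{\rho}
\;\propto\; \frac{E^3}{m^3\rho}
```

At the same energy and bending radius, a proton therefore radiates less energy per turn than an electron by a factor of $(m_p/m_e)^4 \approx 10^{13}$, while for a given machine the photon energy still rises as the cube of the beam energy.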

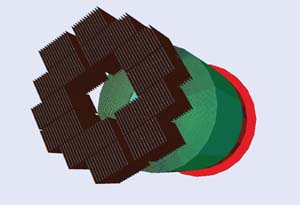

Electron-cloud phenomena have been observed at many accelerators around the world, including CERN, where LHC-type beams in the Proton Synchrotron (PS) and Super Proton Synchrotron (SPS) have generated clouds. In the LHC bending arcs at full energy, the process begins with synchrotron radiation photons emitted in a narrow band striking the outside wall of the accelerator’s vacuum chamber. Most of these photons liberate electrons, which are turned back by the dipole magnetic field and reabsorbed. Some photons, however, are reflected and go on to liberate electrons from the top or bottom of the vacuum chamber. These electrons are accelerated by the electric field of a passing bunch of positively charged particles and can go on to free further low-energy electrons from the opposite wall of the chamber. If a sufficiently large fraction of low-energy electrons survives long enough, successive passing bunches lead to a runaway effect known as multipacting, which generates the electron cloud.
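To give a feel for how strongly a passing bunch accelerates an electron, the sketch below uses the common impulse ("kick") approximation, in which a short bunch gives a nearly stationary electron at distance r from the beam a momentum of about 2·N·r_e·m_e·c/r. This is a rough estimate under stated assumptions, not the calculation used in any of the simulation codes. The function name and the example values (a bunch of 1.15e11 protons and an electron 2 cm from the beam) are illustrative and do not come from the article.

```python
# Rough estimate of the energy an electron gains from one passing bunch.
# Assumptions (not from the article): the bunch is short enough to act as
# an instantaneous kick, the electron starts at rest, and the motion stays
# non-relativistic (kick momentum much smaller than m_e * c).

R_E = 2.8179403e-15   # classical electron radius, in metres
ME_C2_EV = 510998.95  # electron rest energy, in eV


def kick_energy_ev(bunch_population: float, radius_m: float) -> float:
    """Kinetic energy (eV) gained by an electron at radius_m from the beam."""
    # Momentum kick in units of m_e * c.
    dp_over_mc = 2.0 * bunch_population * R_E / radius_m
    # Non-relativistic kinetic energy: p^2 / (2 m).
    return 0.5 * ME_C2_EV * dp_over_mc ** 2


if __name__ == "__main__":
    # Illustrative values only: 1.15e11 protons per bunch, electron 2 cm away.
    print(f"{kick_energy_ev(1.15e11, 0.02):.0f} eV")
```

With these inputs the energy gain is of the order of a few hundred electronvolts, enough to release secondary electrons when the electron strikes the opposite wall. The gain scales as the square of the bunch population and inversely as the square of the distance from the beam.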

A copper-coated beam screen will be installed within the vacuum chamber of the LHC. The screen serves to carry away heat, and also controls the electron cloud in the dipole magnets by limiting the number of reflected photons. The pressure increase caused by the electron cloud, its impact on beam diagnostics and, for the LHC, the heat load on the beam screen and cold bore are further major concerns. Surface conditioning by electron bombardment will rapidly lower gas desorption and secondary electron yield of the beam screen surface. When electron multiplication is sufficiently reduced, it will no longer compensate for electrons lost between two successive bunches, and there will be little or no build-up of the electron cloud. This principle has recently been demonstrated at the SPS.
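The threshold behaviour described above can be illustrated with a deliberately simple toy model, which is far cruder than the simulation codes discussed at the workshop. It assumes that each bunch passage multiplies the surviving electron population by a single effective gain (secondary yield times the fraction of electrons surviving the gap between bunches) and adds a fixed number of fresh photoelectrons. The function name, the gain values and the unit seed are hypothetical, chosen only to show the two regimes.

```python
# Toy model of electron-cloud build-up over a train of bunches.
# Assumption (not from the article): one effective gain per bunch passage
# lumps together secondary emission and losses between bunches, and
# space-charge saturation of the cloud is ignored.

def cloud_after_bunches(gain: float, seed: float = 1.0, n_bunches: int = 50) -> list[float]:
    """Return the electron population (arbitrary units) after each bunch."""
    population = 0.0
    history = []
    for _ in range(n_bunches):
        # Survivors are multiplied by the gain; new photoelectrons are added.
        population = population * gain + seed
        history.append(population)
    return history


if __name__ == "__main__":
    conditioned = cloud_after_bunches(gain=0.8)    # gain below 1: levels off
    unconditioned = cloud_after_bunches(gain=1.3)  # gain above 1: runaway
    print(f"gain 0.8 -> {conditioned[-1]:.2f}")
    print(f"gain 1.3 -> {unconditioned[-1]:.3g}")
```

For a gain below one the population settles at seed / (1 - gain), so there is no build-up beyond the primary photoelectrons. For a gain above one it grows geometrically from bunch to bunch. In a real machine this growth is eventually limited by the cloud's own space charge, which the toy model leaves out.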

Future machines

The CERN workshop brought together some 60 participants from 17 institutes to discuss electron-cloud simulations for proton and positron beams. Simulations for future linear colliders and intense proton drivers suggest that in these machines, electrons in the vacuum chamber may reach densities some 10 to 100 times higher than in existing machines. Workshop participants reviewed a number of simulation codes that have been developed using different approximations and including different physics. Key aims of the meeting were to review current analytical, simulation and modelling approaches to the electron-cloud problem, determine the important outstanding questions, and develop a strategy for future studies. Reports on the current status of experimental observations worldwide served as a motivation and benchmark for the simulation studies.

Experimental work carried out at many different laboratories in Europe, Japan and the US was reported in the two opening sessions of the workshop. Results from laboratory measurements of secondary electron emission and electron energy spectra – an invaluable input for the electron-cloud modelling – were also discussed. Presentations on simulations of electron-cloud build-up and associated beam instabilities included the physics models that form the basis of existing simulation codes, simulation results and comparisons of simulations and observations. Two sessions concentrated on future studies, including plasma physics approaches, and on possible remedies to electron-cloud problems.

Summarizing the workshop, Weiren Chou of Fermilab highlighted the need to strengthen international collaboration on electron-cloud effects. A tangible result of the workshop was the establishment of a few key contact people who have agreed to coordinate future worldwide activities related to laboratory measurements, theoretical approaches and simulation-code comparisons.