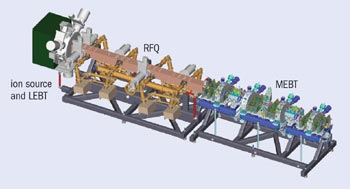

The US Spallation Neutron Source (SNS) project involves no fewer than six US national laboratories. Its accelerator systems consist of the front end built at Lawrence Berkeley National Laboratory (LBNL), a linear accelerator (linac) being built by Los Alamos National Laboratory (LANL) with superconducting radiofrequency (RF) cavities supplied by Jefferson Laboratory, and an accumulator ring and associated transfer lines being built by Brookhaven National Laboratory (BNL). The target system and conventional facilities are the responsibility of Oak Ridge National Laboratory (ORNL) in Tennessee, and the initial complement of experimental stations is to be supplied by Argonne National Laboratory (ANL) and ORNL. The front end creates an intense negative hydrogen-ion beam, chops it into “minipulses”, and accelerates it to 2.5 MeV. The linac then brings the beam to its full energy of 1 GeV, and the accumulator ring compresses the macropulses into sub-microsecond packets to be delivered to the spallation target.

The SNS front end represents a prototypical injector for the kind of so-called proton-driver accelerators that are under construction or being planned worldwide. Accelerators that include an accumulator ring, such as the SNS, typically use negative hydrogen-ion beams, because the ions can be stripped to protons at injection and many turns stacked in the ring; the design approach, however, lends itself to proton beams as well.

Beamline elements

The two-chamber ion source was developed from an earlier model built for the Superconducting Super Collider. A magnetic dipole filter reflects energetic electrons from the main plasma and allows only low-energy electrons to pass into the second chamber, thus favouring the creation of negative hydrogen ions. The discharge is sustained by a 2 MHz RF system and requires up to 45 kW of pulsed power at a 6% duty factor (1 ms pulses at 60 Hz). The main RF power, as well as low-amplitude 13.56 MHz power used to facilitate ignition at the beginning of every discharge pulse, is delivered through a porcelain-coated antenna immersed in the plasma. A newly developed coating technology brings the uninterrupted running time between services within reach of the desired 3 weeks; in fact, a single antenna was used over a period of 2 months during the final commissioning phase. The creation of negative hydrogen ions is enhanced by a minute amount of caesium dispensed on the inside of the secondary discharge chamber surrounding the outlet aperture. When negative ions are extracted from a plasma, copious numbers of electrons are extracted as well; a second dipole magnet configuration deflects most of them onto a dumping electrode inserted in the main extraction gap, thus keeping the power carried by the removed electrons at manageable levels.
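The duty factor quoted above follows directly from the pulse length and repetition rate. The short sketch below reproduces that arithmetic and, assuming the source draws the full 45 kW for the whole 1 ms pulse (an upper bound, since the text says "up to"), estimates the corresponding average RF power.

```python
# Duty factor and average RF power for the ion-source discharge.
# Assumption: peak RF power is drawn for the entire pulse, so the
# average power computed here is an upper bound.

PULSE_LENGTH_S = 1e-3    # 1 ms pulse
REP_RATE_HZ = 60.0       # 60 Hz repetition rate
PEAK_RF_POWER_W = 45e3   # up to 45 kW pulsed


def duty_factor(pulse_length_s: float, rep_rate_hz: float) -> float:
    """Fraction of time the pulse is on: pulse length times repetition rate."""
    return pulse_length_s * rep_rate_hz


def average_power(peak_power_w: float, duty: float) -> float:
    """Average power of a rectangular pulse train."""
    return peak_power_w * duty


if __name__ == "__main__":
    d = duty_factor(PULSE_LENGTH_S, REP_RATE_HZ)
    p_avg = average_power(PEAK_RF_POWER_W, d)
    print(f"duty factor = {d:.1%}")                      # duty factor = 6.0%
    print(f"average RF power <= {p_avg / 1e3:.1f} kW")   # average RF power <= 2.7 kW
```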

The low-energy beam-transport (LEBT) system makes use of purely electrostatic focusing by two einzel lenses (ring-shaped electrodes that first slow the beam down and make it expand, and then, upon reaccelerating it, squeeze it into a converging envelope). The second of these lenses is split into four quadrants to provide DC beam-steering as well as pre-chopping capabilities, dividing the 1 ms macropulses into 645 ns packets separated by 300 ns gaps. The rise and fall times of these minipulses were measured to be less than 25 ns, and the beam-in-gap current is reduced to 0.1-1% of the pulse amplitude. The electrostatic focusing principle allows for a very short LEBT length of 120 mm, and avoids the time variations in the degree of space-charge compensation generally encountered with pulsed beams in magnetic focusing structures. It also provides for a short transition length between the LEBT and the subsequent RF quadrupole (RFQ) accelerator.
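The pre-chopping pattern is easy to check numerically. The sketch below assumes a strictly periodic pattern beginning at the head of the macropulse and computes the minipulse period, the number of complete on+gap periods in a 1 ms macropulse, and the fraction of the macropulse during which beam passes. The resulting 945 ns period is presumably chosen to match the revolution time of the accumulator ring so that the gaps stay aligned turn after turn; that link is an assumption here, not a statement from the text.

```python
import math

MACROPULSE_S = 1e-3    # 1 ms macropulse
MINIPULSE_S = 645e-9   # beam-on packet (minipulse) length
GAP_S = 300e-9         # chopped gap length


def chopping_summary(macropulse_s: float, on_s: float, gap_s: float):
    """Return (period, complete on+gap periods per macropulse, beam-on fraction).

    Assumes a strictly periodic pattern starting at the macropulse head;
    a trailing partial period is not counted.
    """
    period = on_s + gap_s
    n_periods = math.floor(macropulse_s / period)
    on_fraction = on_s / period
    return period, n_periods, on_fraction


if __name__ == "__main__":
    period, n, frac = chopping_summary(MACROPULSE_S, MINIPULSE_S, GAP_S)
    # Prints: period = 945 ns, minipulses = 1058, beam-on fraction = 68.3%
    print(f"period = {period * 1e9:.0f} ns, minipulses = {n}, beam-on fraction = {frac:.1%}")
```

With these values only about two-thirds of each macropulse carries beam, so the average current delivered downstream is correspondingly lower than the peak current.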

The RFQ is the main accelerator of the front end, boosting the beam energy from 65 keV to 2.5 MeV. The RFQ fields are applied to four modulated vanes, and parasitic dipole modes are eliminated by π-mode stabilizers (straight bars running across the RFQ that shift the resonant frequency of the dipole modes and eliminate steering forces on the beam). The four RFQ cavities are built as hybrid structures, with high-conductivity copper on the inside brazed to a stiff outer shell. Dynamic tuning is achieved by regulating the temperature difference between the cavity walls and the vane tips, and the RFQ can be operated at full power (about 750 kW pulsed) within 2 minutes of a cold start. The 402.5 MHz klystron system used for front-end commissioning at LBNL was provided by LANL, and will be replaced by a more modern system from the series procured by LANL for the first part of the linac.
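Because the RFQ accelerates the ions from 65 keV to 2.5 MeV, their velocity grows by a factor of about six, and the vane modulation, whose period in an RFQ scales with βλ/2, must lengthen accordingly along the structure. The sketch below computes β and βλ at the RFQ entrance and exit for the 402.5 MHz operating frequency. The H⁻ rest energy of 939.294 MeV (proton plus two electrons) is an assumed input, not a value from the text.

```python
import math

C_M_PER_S = 299_792_458.0    # speed of light in vacuum
H_MINUS_REST_MEV = 939.294   # approximate H- rest energy (assumed input)
RF_FREQ_HZ = 402.5e6         # RFQ operating frequency from the text


def beta(kinetic_mev: float, rest_mev: float = H_MINUS_REST_MEV) -> float:
    """Velocity v/c from kinetic energy, using gamma = 1 + T / (m c^2)."""
    gamma = 1.0 + kinetic_mev / rest_mev
    return math.sqrt(1.0 - 1.0 / gamma**2)


def beta_lambda(kinetic_mev: float, freq_hz: float = RF_FREQ_HZ) -> float:
    """Distance travelled per RF period, beta * lambda, in metres."""
    return beta(kinetic_mev) * C_M_PER_S / freq_hz


if __name__ == "__main__":
    for label, t_mev in [("RFQ input", 0.065), ("RFQ output", 2.5)]:
        print(f"{label}: beta = {beta(t_mev):.4f}, "
              f"beta*lambda = {beta_lambda(t_mev) * 1e3:.1f} mm")
```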

After the RFQ, the medium-energy beam-transport (MEBT) system receives the beam and hands it over to the subsequent drift-tube linac (DTL). The MEBT includes the main travelling-wave chopper system, designed to give the minipulses sharp flanks with 10 ns rise and fall times and to reduce the ratio of beam current in the gaps to that in the minipulses to the nominal value of 0.01%. The active deflector plates and power switches of the MEBT chopping system were supplied by LANL and have not yet been commissioned. A water-cooled molybdenum target installed at the centre of the MEBT absorbs the chopped beam fraction, and a so-called “anti-chopper” returns to the beam axis those particles that were only partially deflected during the chopper ramps and therefore missed the target. Fourteen quadrupole magnets provide transverse matching, and four rebuncher cavities control the bunch length.
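Two numbers help put the MEBT chopper requirements in perspective. The first is the spacing of the 402.5 MHz bunches relative to the 10 ns flanks, which indicates how many bunches are only partly deflected on each ramp (the particles the anti-chopper has to deal with). The second is the extra suppression the MEBT chopper must supply on top of the LEBT pre-chopper. The sketch assumes one bunch per RF period and that the suppression factors of the two stages simply multiply; both are simplifying assumptions, not statements from the text.

```python
# Rough timing and extinction budget for the two-stage chopping scheme.
# Assumptions: one bunch per 402.5 MHz RF period, and the beam-in-gap
# suppression factors of the LEBT and MEBT choppers multiply.

RF_FREQ_HZ = 402.5e6
FLANK_S = 10e-9        # MEBT chopper rise/fall time from the text
TARGET_RATIO = 1e-4    # nominal gap/minipulse current ratio (0.01%)


def bunches_in_flank(flank_s: float, freq_hz: float) -> float:
    """Number of bunch periods that fit inside one chopper rise or fall."""
    return flank_s * freq_hz


def required_mebt_extinction(lebt_residual: float, target: float = TARGET_RATIO) -> float:
    """Suppression the MEBT chopper must add on top of the LEBT pre-chopper."""
    return target / lebt_residual


if __name__ == "__main__":
    print(f"bunch spacing: {1e9 / RF_FREQ_HZ:.2f} ns")
    print(f"bunches per flank: {bunches_in_flank(FLANK_S, RF_FREQ_HZ):.1f}")
    # LEBT residuals spanning the 0.1-1% range quoted for the pre-chopper
    for lebt in (1e-2, 1e-3):
        print(f"LEBT residual {lebt:.0e} -> MEBT factor {required_mebt_extinction(lebt):.0e}")
```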

Beam diagnostics were built and commissioned by LBNL, ORNL, LANL, and BNL members of the SNS Diagnostics Collaboration; they include two current monitors, six beam-position monitors that provide input for six steerer pairs, and five wire scanners to measure horizontal and vertical beam profiles. For the commissioning activities at LBNL, an external slit/harp emittance device was added at the end of the MEBT to assess the transverse beam quality. This type of emittance scanner uses a movable entrance slit to select various locations across the beam and a 32-wire detector system to measure the local beam divergence at each of these positions. The external scanner will be used during the recommissioning period at ORNL, and might later be replaced by an in-line device. The front-end beam was also used to test a laser-based profile-monitor prototype, and the results are promising for the possible use of this type of monitor in the superconducting linac sections. It is planned to eventually install beam-scrapers in the MEBT that can be used to clip beam halo and reduce beam spill in the high-energy part of the linac. Five units of a newly designed low-level RF system were built by LBNL, and supported the phase-synchronized operation of the RFQ klystron and the MEBT rebuncher cavities. The EPICS control system was supplied by the LBNL members of the SNS Global Controls group, and even allowed remote read-out of operational parameters from ORNL; remote operation would have been possible, but was not exercised in this period.
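The slit/harp scanner yields, for each slit position x, a 32-point distribution over divergence x'. A common way to reduce such data to a single figure of merit is the statistical (rms) emittance, ε = sqrt(<x²><x'²> - <xx'>²), computed from intensity-weighted moments about the beam centroid. The sketch below assumes a hypothetical flat list of (x, x', intensity) samples and omits the background subtraction and thresholding a real analysis needs; it is an illustration, not the analysis code used at LBNL.

```python
import math
from typing import Iterable, Tuple

# Hypothetical sample layout: (position x [mm], divergence x' [mrad], signal)
Sample = Tuple[float, float, float]


def rms_emittance(samples: Iterable[Sample]) -> float:
    """Return the rms emittance sqrt(<x^2><x'^2> - <x x'>^2) in mm*mrad.

    Moments are intensity-weighted and taken about the beam centroid.
    """
    data = list(samples)
    total = sum(w for _, _, w in data)
    if total <= 0:
        raise ValueError("total intensity must be positive")
    x0 = sum(x * w for x, _, w in data) / total
    xp0 = sum(xp * w for _, xp, w in data) / total
    sxx = sum((x - x0) ** 2 * w for x, _, w in data) / total
    spp = sum((xp - xp0) ** 2 * w for _, xp, w in data) / total
    sxp = sum((x - x0) * (xp - xp0) * w for x, xp, w in data) / total
    # Clamp tiny negative values caused by rounding for fully correlated data
    return math.sqrt(max(sxx * spp - sxp ** 2, 0.0))


if __name__ == "__main__":
    # Synthetic, uncorrelated 3x3 grid with equal weights (illustrative only)
    grid = [(x, xp, 1.0) for x in (-1.0, 0.0, 1.0) for xp in (-2.0, 0.0, 2.0)]
    print(f"rms emittance = {rms_emittance(grid):.3f} mm mrad")  # 1.333
```

To compare the result with a normalized emittance specification, multiply it by βγ of the beam (about 0.073 at 2.5 MeV).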

As a result of the front-end commissioning effort, several facts were established. The ion source reliably produces beams at the nominal duty factor of 6% with intensities exceeding 50 mA, and uninterrupted periods of operation are expected to reach 2 weeks or more. Electrostatic focusing works well with high-intensity beams in the LEBT. The RFQ transmission exceeds 90%, and the RFQ clips most of the low-intensity emittance wings generated by LEBT aberrations. All MEBT subsystems function as designed (only the main chopper system was not tested); about 99% transmission was achieved without using any steerer, and the sensitivities to quadrupole and rebuncher tuning closely mirror simulation results. The transverse MEBT output emittances are only slightly above the nominal value and could be reduced further by the planned halo scrapers. The most spectacular result is the 50 mA pulsed beam current measured at the end of the MEBT, more than 30% above the design goal of 38 mA.

Starting on 31 May, the SNS front end was partially disassembled and shipped to ORNL by 15 July. It is now fully installed at the SNS site, and recommissioning is planned to begin later this year.