When CERN was founded in 1954 the member states realized that they were making a long-term financial commitment to pay for a very large accelerator and for the operations that would go with it. A basic document, “CERN Gen 5”, had suggested that a large capital cost would be spread over the building period, followed by a much lower annual operating budget. The decisions by CERN Council to build the Synchrocyclotron and the Proton Synchrotron (PS), which had large, but not clearly defined, capital cost, were backed by early annual budget decisions to support continuing construction. Then, towards the end of the PS construction, Council fixed a three-year ceiling for the budgets for 1959-1961, which was intended to allow the PS to start operating, but with the hope still in some people’s minds that later budgets would be lower.

In 1961 it became clear that this ceiling was going to be breached by a significant amount, and that there was no hope for a future reduction. Council therefore set up a committee under the Dutch delegate Jan Bannier, with senior representatives from the major member states, to review the needs of CERN and make recommendations for future financial policy. CERN was represented by Sam Dakin, the director for administration, and myself, recently appointed as directorate member for applied physics.

Discussions inside CERN made it clear that more staff would be needed in all parts of the organization to build up the PS programme to “full exploitation”. Based on empirical data for “costs per man”, the total annual costs would rise in the following years, at a rate of 13% per annum. The shock this figure generated in the committee was somewhat damped when, following my request, the members produced forecasts for their own national science expenditures. Their figures, plotted logarithmically, showed large sums with steady exponential growth at rates of 20-25%. In comparison, CERN’s 13% line at the bottom of the page looked modest and reasonable.

Another important input came from the UK administration delegate, who proposed a rolling four-year budget procedure following internal practice in the UK Treasury. In this procedure, Council would vote in December on the details of the budget for the coming year, within a total that had been fixed a year previously; it would make a “firm estimate” for the budget total for the next year and “provisional estimates” for budget totals for the following two years, with all figures being given at constant prices. In accepting this farsighted proposal, the Bannier committee also recommended that the budget should increase by 13% for two years and by not less than 10% for two years thereafter. Bannier also added a warning that the organization should consider the possibility of a large new project at a later date. Despite its experience with CERN Gen 5 and the three-year ceiling, in 1962 Council approved the committee’s recommendations, showing how much CERN was blessed by the courage of its founding fathers.
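The arithmetic behind the Bannier recommendation can be sketched in a few lines of Python. This is only an illustration: the base index of 100 and the names `budget_totals` and `BANNIER_RATES` are assumptions made for the example, and the 10% figure is used as the recommended minimum, not as the rate actually voted. All values are at constant prices, as in the procedure described above.

```python
def budget_totals(base, growth_rates):
    """Return the budget total for the base year and each following year.

    base         -- budget total of the starting year (arbitrary index here)
    growth_rates -- fractional increase applied in each successive year
    """
    totals = [base]
    for rate in growth_rates:
        # Each year's total is the previous year's total plus its increase.
        totals.append(totals[-1] * (1 + rate))
    return totals


# Hypothetical illustration: 13% for two years, then the 10% minimum for two more.
BANNIER_RATES = [0.13, 0.13, 0.10, 0.10]

if __name__ == "__main__":
    totals = budget_totals(100.0, BANNIER_RATES)
    for year, total in enumerate(totals):
        print(f"year {year}: index {total:.1f}")
    # After four years the index stands at about 154.5, i.e. roughly 55% growth.
```

On these assumptions the four-year commitment amounts to an increase of a little over half in real terms, which gives a sense of what Council was being asked to accept.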

It was then clear that CERN needed to establish effective internal procedures to provide well justified and reliable proposals for the programme and budget for four to five years ahead. These would have to be agreeable to the physics community, who were the users of the CERN equipment and services, and acceptable to a majority of the member states, who paid the bill.

The director-general at the time was Viki Weisskopf, who had been appointed in mid-1961 just as the budget crisis was becoming apparent. At CERN the director-general is ultimately responsible to Council for all the work and finances of the laboratory. He therefore needs to have access to information and analysis on all the work in forms suitable for his policy decisions inside the laboratory, and for discussion with Council and its committees. Subject to pressure for resources from all sides, he also needs an independent source of information with high enough status to ensure good collaboration with all parties involved, and with access to their work. As a director with no division to manage and having worked with the Bannier committee I could fulfil such a role, despite my lack of qualifications, by thinking up a planning system to meet the needs of the Bannier budget procedure.

At that moment I was on my own and had to rely on the help of others to collect data. I was, however, accustomed to working alone, having been John Adams’ technical aide in the design of the PS machine. I could fit in well with the director-general’s policy of delegating work, by putting as much as possible into the hands of divisional planners, provided they followed agreed procedures. From 1963 on I had the good fortune to have Gabriel Minder, an engineer from ETH, Zurich, to work with me. Among other qualities he spoke eight languages, and the two of us did most of the thinking and detailed design work for planning procedures over the next few years. Minder eventually published this work as a CERN report (Minder 1970) and as a PhD thesis for ETH (Minder 1969).

The Functional Programme Presentation

I realized that I should minimize the disturbance to existing administrative systems – particularly the plan of accounts and the annual budget procedure under Georges Tièche, the head of the Finance Division – and not upset the detailed planning work inside the operating divisions. Thus the natural starting point was the existing plan of accounts. This had three main headings in each of the 12 divisions: personnel, operation and capital outlays. These headings were subdivided into some 2000 codes for different groups and sections inside divisions, and also for some detail on the kind of material or service concerned. This was very appropriate for financial control, but left personnel costs unallocated and gave little indication of the nature and type of work being done. By contrast, the top management needed information on how many people did or would do just what, for what purpose, how successfully and at what cost. This information would need to span several years both into the past and the future in an appropriate degree of detail for the questions in hand.

I decided that we needed to prepare figures not in terms of the divisions and groups working on parts of the programme, but in a functional form that could describe the state of identifiable activities of different types, covering perhaps several groups or sections, even across divisions, and that would be independent of current structures. Subactivities could be defined inside a division and could be combined to make divisional activities, and also CERN-wide activities where this would be coherent and useful.

A divisional (sub)activity would be an identifiable part of a division’s work, specified by a description with the numbers of people and man-years involved each year, the annual cost and some measure of the resulting output. It would be aimed at programme planning and decision making for CERN, not at local financial management.

To be able to prepare such a Functional Programme Presentation, the FPP, I brought in early on the divisional assistants, who were already accustomed to preparing the detailed annual budget and accounts and who understood the workings of their divisions. With them we could establish agreed sets of divisional activities and subactivities, with their costs and manpower at that time. This required two judgements on the part of the divisional assistants: to allocate percentages of the manpower data of a division between its different subactivities and to identify which official accounts code applied to each subactivity. In 80% of the cases the accounts codes matched well enough with the subactivities, but for the remainder judgements were made on percentage splits of the amounts in the accounts codes. These two sets of percentage keys would be revised once or twice a year as the work advanced.

At that point the central administration computer could be programmed to use these keys to convert standard divisional budget data into functional activity costs and manpower figures, without asking for extra work in the divisional offices.
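The conversion by percentage keys can be illustrated with a minimal Python sketch. It is not a reconstruction of the original program: the account codes, subactivity names, amounts and function names below are all hypothetical, chosen only to show how one set of keys splits account-code amounts and another splits a division's manpower between subactivities.

```python
import math
from collections import defaultdict

# Hypothetical cost keys: accounts code -> share of its amount per subactivity.
# Most codes map to a single subactivity; a few need a percentage split.
COST_KEYS = {
    "TC-OPS-101": {"bubble-chamber operations": 1.0},
    "TC-CAP-205": {"bubble-chamber development": 0.6, "BEBC project": 0.4},
}

# Hypothetical manpower keys: share of a division's staff per subactivity.
MANPOWER_KEYS = {
    "bubble-chamber operations": 0.5,
    "bubble-chamber development": 0.3,
    "BEBC project": 0.2,
}


def check_shares(shares):
    """Raise ValueError unless the shares of one key add up to 100%."""
    if not math.isclose(sum(shares.values()), 1.0):
        raise ValueError(f"shares do not sum to 1: {shares}")


def functional_costs(budget_by_code, cost_keys):
    """Convert amounts per accounts code into costs per subactivity."""
    costs = defaultdict(float)
    for code, amount in budget_by_code.items():
        shares = cost_keys[code]
        check_shares(shares)
        for subactivity, share in shares.items():
            costs[subactivity] += amount * share
    return dict(costs)


def functional_manpower(division_staff, manpower_keys):
    """Split a division's staff number between its subactivities."""
    check_shares(manpower_keys)
    return {sub: division_staff * share for sub, share in manpower_keys.items()}


if __name__ == "__main__":
    budget = {"TC-OPS-101": 200.0, "TC-CAP-205": 100.0}  # hypothetical amounts
    print(functional_costs(budget, COST_KEYS))
    print(functional_manpower(40, MANPOWER_KEYS))
```

Because every key sums to 100%, the functional totals always add up to the divisional totals, so the two presentations remain consistent with each other by construction.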

The subactivity descriptions and measures of output could not be given simply as numbers, as staff and money could. They inevitably implied written work descriptions and statements of aims and of output, often with a subjective component. Nevertheless, by inspecting data for past years, simple numerical work indices could quite often be found, such as data on hours of operation and failure rates for equipment, installed kilowatts of power supplies, and square metres of floor space built or cleaned, which could be agreed by all parties to be relevant for evaluating aims and results, at least in the short term.

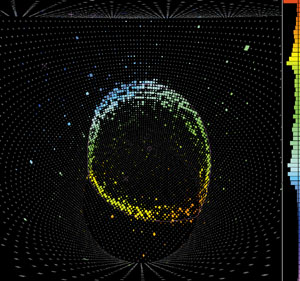

As an example, the Track Chamber Division (TC) programme in 1969 covered the research and development work described by 12 activities: bubble-chamber operations and development, particle-beam operations and development, picture-evaluation operations and development, low-temperature operations and development, the Big European Bubble Chamber (BEBC) project, additional laboratory staff, fellows and visitors, and divisional direction.

Although the activities were quite specific to the work in hand, they fitted into a few general classes, which formed the top level of the CERN FPP. They were R for research and operations, E for equipment and development, I and Z for improvements and major long-term projects, S for general services, and B for buildings and site equipment. Most of the TC programme fell into either the R or E classes, and BEBC into the I class. S and B covered the general administrative and technical services for the whole laboratory and the corresponding infrastructure, with similar subactivity structures at divisional and CERN levels.

An important point to note here is the clear separation between operations and development in all parts of a programme. These imply two different types of work, staff, expenditure and timetable, which should be kept clear in everybody’s minds and in the forecasting.

The whole picture

The overall result of these steps of coding and combination was that about 2000 accounting codes could be summarized into some 150 understandable divisional activities and then into 30 CERN activities. These gave a picture of the whole CERN programme, which was suitable for discussions of general policy before the finer details of any part of a programme were examined.

I should stress that this structure was built bottom-up by the divisional staff, who knew what they were doing and saying and only had very broad guidelines from me as the planning officer. This, I believe, helped to make the whole system well accepted throughout CERN.

In 1965 I sent Minder to Washington, DC, to the president’s Office of the Budget and to NASA, to compare our FPP with the US Administration’s new Planning-Programming-Budgeting System (PPBS), which the Department of Defense had set up in 1961 and many other federal and local agencies had adopted by 1965. However, the PPBS had not proven usable by US research laboratories, due to certain features related to the nature of basic research. We were pleasantly surprised to find those problems had been solved within the FPP. Minder also collected data at OECD in Paris for our long-term models, and we found that their figures could be inserted into our projections for expected users, e.g. the numbers of European graduate and post-graduate physicists.

By 1966 the FPP was beginning to come together, presenting the state of the laboratory over an eight-year period: the past four years, the current year and the three years ahead, where total budgets were known with varying degrees of certainty. At the CERN level it offered in particular a long-term view of two topics of importance for both the management and Council. They were the size and distribution of personnel and the balance between operation and investment.

To be an effective laboratory 10-15 years later, CERN had to maintain high investment in technical development and new equipment and infrastructures, in parallel with satisfying growing demands for resources from the short-term research and operational activities that came from the large and growing community of users. To make this investment visible I stressed the importance to divisional staff of clearly separating R-type work and costs, which were needed to run and maintain facilities, and E-type work aimed at improving facilities or extending their scale and future performance.

The FPP also clearly brought out staff numbers in different areas of activity, and made it clear if staff levels to operate new equipment were being properly planned. Staff numbers have always been a sensitive item for funding bodies, as they are a direct method of controlling expenditure, and staff can represent a long-term commitment that is more difficult to undo than cancellation of orders for materials.

What did the FPP give to CERN?

The FPP, with its planning cycle, gave CERN a tool that allowed people at all levels to work together each year to build up a rolling four-year forward plan that took account of all parties’ needs and that could be accepted by the member states with very little modification. It could accommodate the addition of major improvement projects and the building and operation of the Intersecting Storage Rings as a new CERN programme. For instance, the Swiss federal administrators of education and research, who wondered why they should support several CERN programmes, agreed to do so after discussing these in the FPP format.

The FPP was used from 1963 through to 1975, when the 300 GeV Super Proton Synchrotron was integrated into the CERN basic programme. At that point the new management changed the philosophy to planning in terms of projects rather than activities.

I am not aware of how well this new scheme worked in practice, as by then I was out of the management structure and working largely on my own. I can, however, see dangers in such a change. For example, accelerator operations are an activity requiring continuity over many years, without the end date and total cost that are suggested by the term “project”. There is also the risk of not properly separating operations and investment. The top management could be faced with plans containing a large number of small-project proposals, which should really be decided at (inter)divisional level within the broader limits of agreed activities. I insisted that I did not want to have details of such divisional work formally reported to me, to avoid the temptation of micro-management by central staff. This policy, I believe, made the FPP well accepted by the divisions and helpful to them in clarifying their planning.

The intrinsic uncertainties of basic research have led to the idea that it cannot be planned, and this is certainly true at the level of its aims, which must depend on what nature offers us and not on what we would like it to tell us. However, the necessary continuity in exploring a fruitful line of enquiry for a certain period means that the effort and the cost can usefully be planned over that period. In some fields this time can be quite long, a decade or more, particularly where large equipment is involved. In elementary particle physics today this useful life is around 20 to 30 years, from the conception of a large facility to the time at which it may usefully be replaced or altered in a major way, with the cost of something completely new.

A four-year planning period, in which much of the work and cost has to be foreseen with some certainty, fits theoretically very well into the conduct of this type of research. The FPP is an existence proof that such a planning system can actually be implemented without disturbing the life of the laboratory, and without calling for a large central administration. This helps to avoid the directorate being tempted to become involved in micro-management, which is better left to lower levels in the organization. This is not, alas, always true in practice.

Mervyn Hine 1920-2004

Mervyn Hine, pioneer of the construction of CERN’s Proton Synchrotron, and of computing and networking at CERN, passed away on 26 April following a serious accident in his home. He had recently completed this article for the CERN Courier in the series “50 years of CERN”, which we publish here as a tribute to an important figure and personality in the history of CERN. An obituary will appear in the next issue.