When the LHC operates at peak luminosity, about 1000 million interactions will be produced and detected each second at the heart of the CMS experiment. However, only a tiny fraction of these events will be of major importance. As in many particle-physics experiments, a trigger system selects the most interesting physics in real time so that data from just a few of the collisions are recorded. The remaining events – the vast majority – are discarded and cannot be recovered later. The trigger system therefore in effect determines the physics potential of the experiment forever.

The traditional trigger system in a hadron-collider experiment has three tiers. Level 1 (L1) is mostly hardware and low-level firmware that selects about 100,000 interactions from the 1000 million or so produced each second. Level 2 (L2), which is typically a combination of custom-built hardware and software, then reduces these to a few thousand per second, which are passed on to the next level. Level 3 (L3), in turn, runs higher-level algorithms to select the couple of hundred events per second that require detailed study.

At the LHC, proton bunches cross at the centre of each experiment up to 40 million times a second, with up to 20 or so interactions per crossing. At CMS, each crossing can produce around 1 MB of data. The aim of the trigger system is to reduce the data rate to about 100 MB/s, which is the speed at which the data-acquisition system can record data. With events of around 1 MB, this implies reducing the event rate to around 100 Hz.
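The numbers in this paragraph can be checked with a few lines of arithmetic: the data rate is simply the event rate multiplied by the event size. This is a back-of-the-envelope sketch using the round figures quoted above (40 MHz crossing rate, about 1 MB per crossing, about 100 Hz recorded); the function name is hypothetical.

```python
# Back-of-the-envelope trigger arithmetic using the round numbers quoted in
# the text. Function and variable names are illustrative only.

def data_rate_mb_per_s(event_rate_hz: float, event_size_mb: float) -> float:
    """Data rate in MB/s produced by events of a given size at a given rate."""
    return event_rate_hz * event_size_mb

crossing_rate_hz = 40e6   # bunch crossings per second (up to 40 MHz)
event_size_mb = 1.0       # roughly 1 MB of data per crossing
recorded_rate_hz = 100.0  # target event rate written to storage

raw = data_rate_mb_per_s(crossing_rate_hz, event_size_mb)        # if every crossing were kept
recorded = data_rate_mb_per_s(recorded_rate_hz, event_size_mb)   # what is actually recorded
rejection = crossing_rate_hz / recorded_rate_hz                  # overall reduction needed

print(f"raw: {raw / 1e6:.0f} TB/s")           # raw: 40 TB/s
print(f"recorded: {recorded:.0f} MB/s")       # recorded: 100 MB/s
print(f"overall rejection: {rejection:.0f}")  # overall rejection: 400000
```

The overall factor of roughly 400,000 is what the trigger chain as a whole has to deliver between the bunch crossings and the storage system.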

The novelty of the CMS trigger system is that the traditional L2 and L3 components are merged into a single system – the high-level trigger (HLT). This is a farm of commercial PCs that receives all of the events accepted by L1 and selects the best 200–300 events each second. At CMS the reduction in data rate is therefore carried out in two steps. The L1 trigger, based on custom-built electronics, first reduces the number of events by a factor of around 400, while the HLT provides a further factor of about 1000.
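The two-step reduction described above is a simple cascade: each stage's output rate is its input rate divided by that stage's rejection factor, so the overall rejection is the product of the individual factors (400 × 1000 = 400,000). A minimal sketch, in which the `cascade` helper is hypothetical and the factors are the approximate ones quoted in the text:

```python
# Sketch of a multi-stage trigger cascade: each stage divides the incoming
# rate by its rejection factor. The helper name is hypothetical; the stage
# factors are the approximate values quoted in the text.

def cascade(input_rate_hz: float, stages: list[tuple[str, float]]) -> list[tuple[str, float]]:
    """Return (stage name, output rate in Hz) after each successive stage."""
    rates = []
    rate = input_rate_hz
    for name, rejection in stages:
        rate /= rejection          # this stage keeps 1 event in `rejection`
        rates.append((name, rate))
    return rates

cms_two_tier = [("L1", 400.0), ("HLT", 1000.0)]
for name, rate in cascade(40e6, cms_two_tier):
    print(f"after {name}: {rate:,.0f} Hz")
# after L1: 100,000 Hz
# after HLT: 100 Hz
```

A traditional three-tier system would just add a third entry to the list; the arithmetic is unchanged, and what differs is at which stage the expensive algorithms get to see the events.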

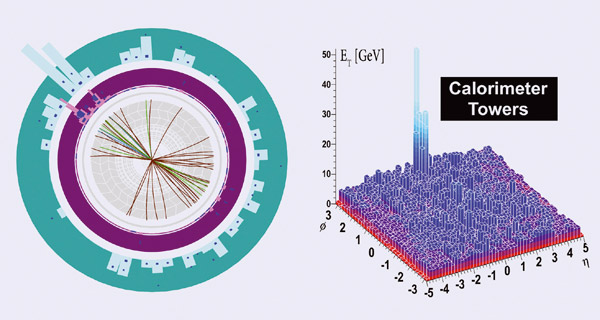

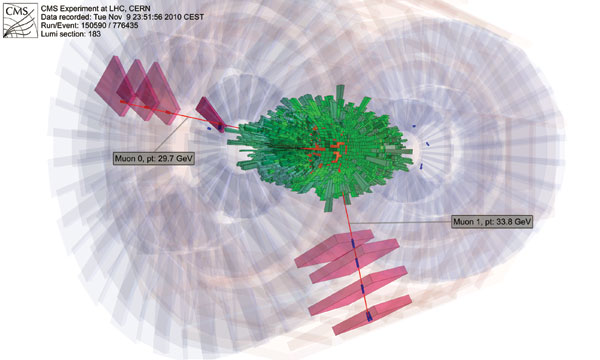

The data from the collisions are initially stored in buffers, but the L1 electronics still has less than 3 μs to make a decision and pass the selected data on to the HLT. Given this short time frame, the L1 trigger uses only coarse-granularity information from the muon detectors and the calorimeters to identify important objects, such as muons and jets. By contrast, the HLT runs a modified version of the CMS offline event-reconstruction software, with full granularity for all of the sub-detectors, including the central tracker. To save time, usually only the regions identified by the L1 trigger are read out and reconstructed, a process known as “regional reconstruction”.
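The idea behind regional reconstruction can be illustrated with a small geometric filter, using the pseudorapidity η and azimuthal angle φ conventionally used at hadron colliders: keep only the detector hits that lie within a cone of radius ΔR = √(Δη² + Δφ²) around one of the coarse L1 objects. This is a minimal sketch under stated assumptions. The `Seed` and `Hit` records, the `regional_hits` helper and the cone size of 0.3 are all hypothetical, and a real implementation would more likely select whole groups of read-out channels than individual hits.

```python
import math
from dataclasses import dataclass

@dataclass
class Seed:
    """Coarse L1 object (e.g. a muon or jet candidate): direction only. Hypothetical."""
    eta: float
    phi: float

@dataclass
class Hit:
    """Full-granularity detector hit (hypothetical minimal record)."""
    eta: float
    phi: float
    energy: float

def delta_phi(a: float, b: float) -> float:
    """Azimuthal difference wrapped into [-pi, pi), so phi = +pi and -pi are neighbours."""
    return (a - b + math.pi) % (2 * math.pi) - math.pi

def delta_r(eta1: float, phi1: float, eta2: float, phi2: float) -> float:
    """Angular distance in the (eta, phi) plane."""
    return math.hypot(eta1 - eta2, delta_phi(phi1, phi2))

def regional_hits(hits: list[Hit], seeds: list[Seed], cone: float = 0.3) -> list[Hit]:
    """Keep only the hits within `cone` of at least one L1 seed; the rest are never reconstructed."""
    return [h for h in hits
            if any(delta_r(h.eta, h.phi, s.eta, s.phi) < cone for s in seeds)]

# Usage: one L1 seed near phi = +pi, three hits elsewhere in the detector.
seeds = [Seed(eta=0.5, phi=3.1)]
hits = [Hit(0.5, -3.1, 10.0),   # across the phi = +/-pi boundary, but close to the seed
        Hit(0.6, 3.0, 5.0),     # close to the seed
        Hit(-1.0, 0.0, 20.0)]   # far away: skipped
kept = regional_hits(hits, seeds)
print([h.energy for h in kept])  # [10.0, 5.0]
```

The wrap-around in `delta_phi` matters: without it, the first hit would appear to be more than 6 radians from the seed and would wrongly be dropped.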

Such a system has never before operated at a particle collider. The advantage that this design buys is additional flexibility in the online selection system: the CMS experiment can run the more sophisticated L3 algorithms on a larger fraction of the collisions. In a three-tier system, experiments do this only on events that have been filtered through the second stage. With a two-tier trigger, CMS can do the more sophisticated filtering earlier in the game, so the experiment can look for more exotic events that might not have been recorded in a traditional trigger system. The price that CMS pays for this flexibility is a higher-capacity network switch and a larger “filter farm” of around 5000 CPUs.

Events à la carte

Running the trigger for a large experiment is a complex process because there are typically many conflicting needs coming from different detector and physics groups within the collaboration. As far as possible, everyone’s needs have to be covered – but this is no easy task. The CMS experiment is sophisticated and can study a very wide range of physics, but it all comes down to whether or not the events have been selected by the trigger. There is a constant struggle to make sure that the collaboration can maximize the physics potential of the experiment as a whole, while at the same time catering to the assorted tastes of the various groups.

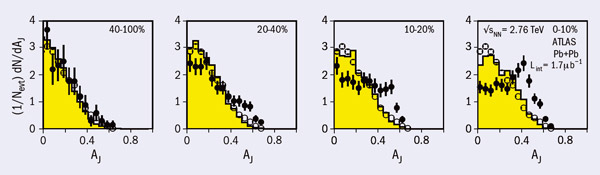

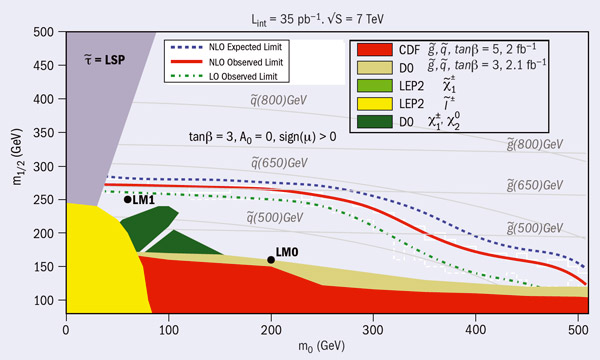

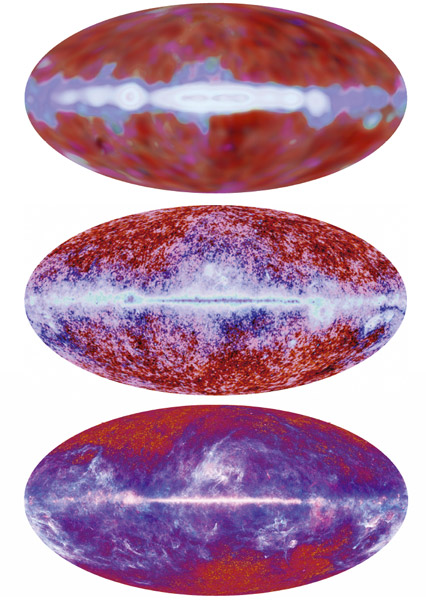

The trigger “menu” can be thought of as a selection of triggers to suit all tastes. Some groups order just the entrée of established Standard Model physics, while others look to tuck into the main course of Higgs particles, supersymmetry (SUSY), heavy-ion physics, CP-violation and so on. Those with a sweet tooth come with their minds set predominantly on the dessert of exotica – all of the new physics that is not related to the main course.

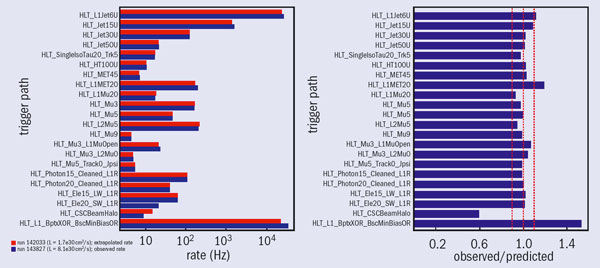

At a practical level, the menu consists of various paths, each of which falls into one of three categories. First, inclusive trigger paths look at overall event properties, such as total energy or missing transverse energy, which are particularly important for detector studies. Second, single-object paths identify individual objects, such as an electron or a jet. These are valuable for physics studies, particularly of Standard Model processes. Third, multi-object paths require a combination of single objects. The trigger menu pulls the various paths together, and the filter farm executes the HLT algorithms in parallel as far as possible – the HLT has less than 100 ms to make a decision for an L1 rate of about 50 kHz. Figure 1 shows rates for several HLT paths at an instantaneous luminosity of 8 × 10³¹ cm⁻² s⁻¹.
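The structure of a menu can be pictured as a set of named predicates, one per path, with an event kept if at least one path accepts it. The sketch below shows one toy path from each of the three categories. The `Event` fields, the path names and all thresholds are hypothetical, chosen only to illustrate the idea; they are not the CMS data format or the actual CMS menu.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Event:
    """Toy reconstructed event; fields are illustrative, not the CMS data format."""
    missing_et: float                                         # GeV
    electron_pts: list[float] = field(default_factory=list)   # GeV
    jet_pts: list[float] = field(default_factory=list)        # GeV

Path = Callable[[Event], bool]

# Hypothetical paths and thresholds, one per category described in the text.
MENU: dict[str, Path] = {
    # inclusive: an overall event property
    "HLT_MET100": lambda e: e.missing_et > 100.0,
    # single-object: one identified object above threshold
    "HLT_Ele30": lambda e: any(pt > 30.0 for pt in e.electron_pts),
    # multi-object: a combination of single objects (two jets above 50 GeV)
    "HLT_DoubleJet50": lambda e: sum(pt > 50.0 for pt in e.jet_pts) >= 2,
}

def run_menu(event: Event, menu: dict[str, Path] = MENU) -> list[str]:
    """Return the names of all paths that accept the event.

    Each path is evaluated independently; the event is kept if the
    returned list is non-empty.
    """
    return [name for name, path in menu.items() if path(event)]

ev = Event(missing_et=20.0, electron_pts=[35.0], jet_pts=[60.0, 55.0, 10.0])
fired = run_menu(ev)
print(fired)        # ['HLT_Ele30', 'HLT_DoubleJet50']
print(bool(fired))  # True: the event is kept
```

Recording which paths fired, rather than just a yes/no decision, is what allows the rate of each path to be monitored separately, as in figure 1.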

The menu has to cover a range of physics: it must be as inclusive as possible, not only to accommodate more physics needs but also to leave room for ideas that had not been considered while the data were being taken. For example, theorists might come up with a new idea only after CMS has finished collecting data, but the experiment may already have captured what is needed if it has run with an “inclusive” trigger.

As the luminosity of the LHC increases, so does the collision rate, which means that tighter selection criteria need to be applied and the menu must constantly evolve. At CMS, physics groups – as well as detector groups – regularly submit proposals for triggers that they would like to have implemented. Requests are merged whenever possible into common triggers to simplify the menu, which makes it easier to maintain and to spot and fix mistakes. Sharing a trigger also makes better use of the bandwidth: instead of two groups each receiving 2 Hz from separate triggers, they could devote 4 Hz to a common, more economical trigger, giving each group access to the full 4 Hz.
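The bandwidth argument rests on the fact that the menu's total output rate counts each recorded event once, however many triggers fire on it. A minimal sketch, assuming that each trigger's accepted events over a fixed period are represented as a set of event numbers (the `menu_rate_hz` helper and the event numbers are hypothetical):

```python
# The output rate of a menu is the size of the UNION of the events accepted
# by its triggers, divided by the time period. Event numbers are made up.

def menu_rate_hz(accepted: dict[str, set[int]], duration_s: float) -> float:
    """Total output rate: each event counts once, however many triggers fire."""
    all_events = set().union(*accepted.values())
    return len(all_events) / duration_s

duration = 10.0  # seconds of hypothetical data taking

separate = {"A": set(range(0, 20)), "B": set(range(20, 40))}  # 2 Hz each, no overlap
common = {"AB": set(range(0, 40))}                             # one shared 4 Hz trigger

print(menu_rate_hz(separate, duration))  # 4.0
print(menu_rate_hz(common, duration))    # 4.0
# Same 4 Hz of bandwidth, but with the common trigger each group can use
# all 40 events instead of 20.

overlapping = {"A": set(range(0, 20)), "B": set(range(10, 30))}
print(menu_rate_hz(overlapping, duration))  # 3.0: shared events are counted once
```

The last case shows why overlaps matter when a menu is assembled: simply adding the individual trigger rates (2 Hz + 2 Hz) would overestimate the bandwidth actually used.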

Once the proposals have been made, the Trigger Menu Development and HLT Code Integration Groups produce a menu prototype, rather like a “tasting menu”. This takes all of the proposals into account and tries to combine them into a coherent trigger menu that respects the rate and timing budgets while satisfying all appetites. Although every attempt is made to accommodate as many triggers as possible, when different groups have conflicting needs, Physics and Trigger co-ordination has the final word.

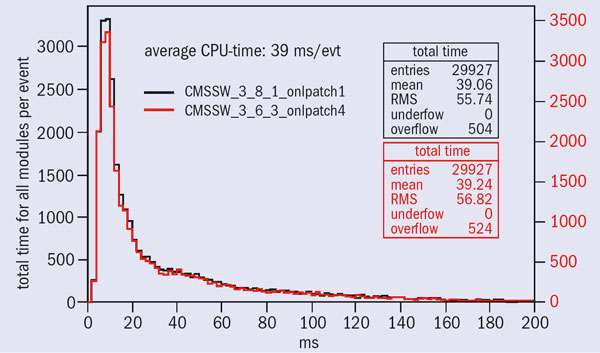

The Trigger Performance Group then runs the prototype on “signal” events, using either real or simulated data from all of the physics groups, to test whether the menu picks out what it is supposed to select. If problems are found – and they often are – the teams go back and fix them to produce the next prototype. Eventually, the prototype is judged good enough to be deployed by the Trigger Menu Integration Group, which puts the menu online to test it and make sure that everything functions as expected. One important aspect of this validation is to verify that the full menu can run at the HLT within the budgeted time (figure 2).
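The time budget follows from a simple capacity argument. In a simplified model where each CPU in the filter farm processes one event at a time, the farm keeps up only if the L1 rate multiplied by the mean processing time per event does not exceed the number of CPUs. With the figures quoted in this article, about 5000 CPUs and an L1 rate of about 50 kHz, this gives the 100 ms budget. The helper names below are hypothetical, and the model ignores buffering, queueing and the tail of slow events.

```python
from statistics import mean

def time_budget_s(n_cpus: int, l1_rate_hz: float) -> float:
    """Largest mean HLT time per event that n_cpus can sustain at the given L1 rate.

    Assumes each CPU handles one event at a time, so the farm keeps up only if
    l1_rate_hz * mean_time <= n_cpus.
    """
    return n_cpus / l1_rate_hz

def menu_fits(per_event_times_s: list[float], n_cpus: int, l1_rate_hz: float) -> bool:
    """True if the measured mean processing time is within the budget."""
    return mean(per_event_times_s) <= time_budget_s(n_cpus, l1_rate_hz)

budget = time_budget_s(n_cpus=5000, l1_rate_hz=50e3)
print(f"{budget * 1e3:.0f} ms per event")  # 100 ms per event

# Hypothetical timing measurements (seconds per event) for two menu versions.
print(menu_fits([0.05, 0.08, 0.12], 5000, 50e3))  # True: mean is about 83 ms
print(menu_fits([0.05, 0.08, 0.20], 5000, 50e3))  # False: mean is about 110 ms
```

In this model the budget applies to the average: an individual slow event is tolerable as long as the menu as a whole stays within it.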

Ever-changing ingredients

The CMS experiment has evolved since the early running period, when it was in commissioning mode, so that by the end of 2010 the collaboration could maximize its physics output. The trigger system has adjusted in parallel to reflect this changing reality. During the 2010 proton run, the Trigger Studies Group produced more than a dozen menus of L1 and HLT “dishes”, which successfully filtered CMS physics data over five orders of magnitude in luminosity, from 1 × 10²⁷ to 2 × 10³² cm⁻² s⁻¹.

Most of the triggers for the LHC start-up in March 2010 covered what was needed to understand the detector, such as calibration, alignment, noise studies and commissioning in general. Since then, these triggers have been gradually reduced to a minimum. The menu is now dominated by physics triggers, including a whole suite of new SUSY triggers that were deployed last September.

As mentioned above, the complexity of the trigger menu increases with luminosity. Because the early running was at low luminosity, it was possible to be inclusive and record as many events as possible. As the luminosity has increased, however, certain triggers have had to be sacrificed. Triggers for Standard Model physics have been the first to be cut back because the priority is to discover new physics. However, a fraction of the trigger bandwidth always goes to Standard Model physics, which is used as a reference.
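To first approximation, a trigger's rate is its effective cross-section multiplied by the instantaneous luminosity, so a path that fits its allocation at one luminosity can overshoot it at a higher one. The article does not say how a trigger's rate is cut back; one widely used technique, assumed here, is prescaling, in which only every Nth event accepted by a path is kept. A minimal sketch with hypothetical helper names, a made-up 5 Hz trigger and a made-up 2 Hz allocation:

```python
import math

def scaled_rate_hz(rate_hz: float, lumi_ref: float, lumi_new: float) -> float:
    """Extrapolate a trigger rate assuming it scales linearly with luminosity
    (R = sigma * L). Pile-up can make real rates deviate from this."""
    return rate_hz * lumi_new / lumi_ref

def prescale_for(rate_hz: float, allocated_hz: float) -> int:
    """Smallest integer prescale N such that rate / N fits the allocation."""
    return max(1, math.ceil(rate_hz / allocated_hz))

class Prescaler:
    """Keep every N-th event accepted by a path (hypothetical helper)."""

    def __init__(self, n: int):
        self.n = n
        self.count = 0

    def accept(self) -> bool:
        self.count += 1
        return self.count % self.n == 0

# A hypothetical 5 Hz trigger measured at 8e31 cm^-2 s^-1, moved to 2e32.
rate = scaled_rate_hz(5.0, 8e31, 2e32)    # ~12.5 Hz
n = prescale_for(rate, allocated_hz=2.0)  # 7, giving about 1.8 Hz
p = Prescaler(n)
kept = sum(p.accept() for _ in range(700))
print(n, kept)  # 7 100
```

Prescaling keeps a path alive at reduced rate rather than removing it, which is one way of reserving a small but steady share of bandwidth for reference samples.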

Sometimes, triggers are removed because they are no longer needed or they have been replaced by more advanced versions. At other times, there is an overlap period to understand what the new trigger does compared with the old one.

The incredible performance of the LHC, which reached its 2010 proton–proton luminosity target of 10³² cm⁻² s⁻¹ several weeks earlier than expected, has kept the trigger-system team on its toes. Over the next few years, the continuing rise in luminosity will require the trigger “chefs” to produce creative menus to cope with the ever-changing range of ingredients on offer.