Since the revolutionary discovery of the J/ψ meson, quarkonia – bound states of heavy quark–antiquark pairs – have played a crucial role in understanding fundamental interactions. Being the hadronic-physics equivalent of positronium, they allow detailed study of some of the basic properties of quantum chromodynamics (QCD), the theory of strong interactions. Yet, despite the apparent simplicity of these states, the mechanism behind their production remains a mystery after decades of experimental and theoretical effort (Brambilla et al. 2011). In particular, the angular decay distributions of the quarkonium states produced in hadron collisions – which should provide detailed information on their formation and quantum properties – remain challenging and present a seemingly irreconcilable disagreement between the measurements and the QCD predictions.

Given the success of the Standard Model, why has this intriguing situation not attracted more attention in the high-energy-physics community? The reason may be that this problem belongs to the notoriously obscure and computationally cumbersome “non-perturbative” side of the Standard Model. While the failure to reproduce an experimental observable that is perturbatively calculable in the electroweak or strong sector would be interpreted as a sign of new physics, phenomena requiring a non-perturbative treatment – such as those related to the long-distance regime of the strong force – are less likely to trigger an immediate reaction.

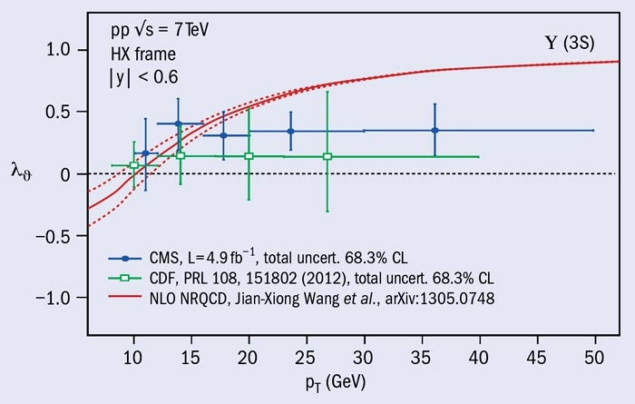

It can also be argued that, until recently, doubts existed regarding the reliability of the experimental data, given some contradictions among results and the incompleteness of the analysis strategies (Faccioli et al. 2010). Similar doubts also existed about the usefulness of the data as a test of theory, given their limited extension into the “interesting” region of high transverse-momentum (pT). The recently published, precise and exhaustive polarization measurements of Υ from the CDF and CMS experiments (CDF collaboration 2012 and CMS collaboration 2013a), which extend to pT of around 40 GeV, have significantly changed this picture, building a robust and unambiguous set of results to challenge the theoretical predictions.

Quarkonium production has been the subject of ambitious theoretical efforts aimed at fully and systematically calculating how an intrinsically non-perturbative system (the cc̄ or bb̄ state) is produced in high-energy collisions and – potentially – at providing Standard Model references for fully fledged precision studies. The nonrelativistic QCD (NRQCD) framework consistently fuses perturbative and non-perturbative aspects of the quarkonium production process, exploiting the notion that the heavy quark and antiquark move relatively slowly when bound as a quarkonium state (Bodwin et al. 1995). This approach introduces into the calculations a mathematical expansion in the quark's velocity squared, v², supplementing the usual expansion in the strong coupling constant αs of the hard-scattering processes.

The non-perturbative ingredients in these calculations are the long-distance matrix elements (LDMEs) that describe the transitions from point-like quark–antiquark pairs, which can also be coloured (“colour-octet” states), to the colourless observable quarkonia. In principle these could be calculated using non-perturbative models, but the current approach leaves them as free parameters of a global fit to some kinematic spectra of quarkonium production. This approach successfully reproduces the differential pT cross-sections, which has been interpreted as a plausible indication that the underlying assumptions are correct.
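In the notation commonly used in the NRQCD literature (the symbols below are conventional and are not taken from this article), the separation between short-distance and long-distance physics is usually written schematically as a sum over intermediate quark–antiquark states n, each with definite colour, spin and orbital angular momentum:

```latex
\sigma(pp \to H + X) \;=\; \sum_{n}
  \hat{\sigma}\bigl(pp \to Q\bar{Q}[n] + X\bigr)\,
  \bigl\langle \mathcal{O}^{H}[n] \bigr\rangle ,
\qquad n = {}^{2S+1}L_{J}^{[1]} \ \text{(singlet)} \ \text{or} \ {}^{2S+1}L_{J}^{[8]} \ \text{(octet)}
```

Here the short-distance coefficients σ̂ are computed as a series in αs, while the LDMEs ⟨O^H[n]⟩ are ordered by powers of the heavy-quark velocity v and, in the current approach, are fitted to data.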

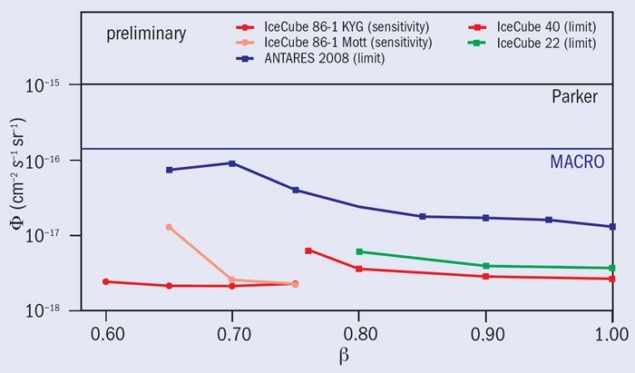

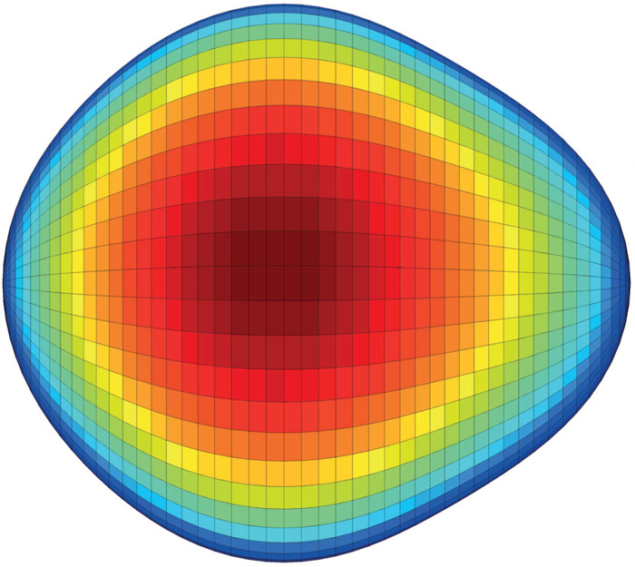

The next step in the validation of the NRQCD framework is to make other predictions without changing the previously fitted matrix elements and compare them with independent measurements. The framework clearly predicts that S-wave quarkonia (J/ψ, ψ(2S) and the Υ(nS) states) directly produced in parton–parton scattering at pT much higher than their mass are transversely polarized – that is, their angular momentum vectors are aligned like the spin of a real photon. Specifically, considering their decay into μ⁺μ⁻, this means that the decay leptons are preferentially emitted in the meson’s direction of motion. The measurements made by CDF and CMS contradict this picture dramatically: the Υ states always decay almost isotropically, meaning that they are produced with no preferred orientation of their angular momentum vectors.
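The difference between "transverse" and "isotropic" can be made concrete with the standard polar-angle parametrization of a vector state decaying to a lepton pair, W(cos θ) ∝ 1 + λ cos²θ, where θ is the lepton emission angle relative to the chosen axis (here, the meson's direction of motion) and the distribution is integrated over the azimuthal angle. The following is a minimal sketch under those assumptions; the function names are illustrative and do not come from any analysis code.

```python
def polar_angle_pdf(cos_theta, lam):
    """Normalized W(cos theta) = 3 / (2 (3 + lam)) * (1 + lam * cos_theta^2) on [-1, 1].

    lam = +1: fully transverse, lam = 0: isotropic, lam = -1: fully longitudinal.
    """
    return 3.0 / (2.0 * (3.0 + lam)) * (1.0 + lam * cos_theta ** 2)


def along_vs_perpendicular(lam):
    """Ratio of the emission probability along the axis to that perpendicular to it."""
    return polar_angle_pdf(1.0, lam) / polar_angle_pdf(0.0, lam)


if __name__ == "__main__":
    for label, lam in [("transverse", 1.0), ("isotropic", 0.0), ("longitudinal", -1.0)]:
        print(f"{label:>12}: along/perpendicular = {along_vs_perpendicular(lam):.2f}")
```

For full transverse polarization the leptons are twice as likely to emerge along the axis as perpendicular to it, whereas an isotropic decay shows no preference.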

One aspect to keep in mind is that sizeable but not yet well measured fractions of the S-wave quarkonia (orbital angular momentum L=0) are produced from feed-down decays of P-wave states (L=1) leading to more complex polarization patterns. In particular, it is conceivable that the transverse polarization of the directly produced Υ(1S) mesons, say, is washed away by a suitable level of longitudinal polarization brought by the Υ(1S) mesons produced in χb decays. Such potential “conspiracies” illustrate how intertwined the studies of S- and P-wave states are, showing that a complete understanding of the underlying physics requires a global analysis of the whole family.
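How such a cancellation could arise can be sketched with the same 1 + λ cos²θ parametrization. If several production mechanisms contribute with fractions f_i and polarization parameters λ_i, all defined with respect to the same axis, adding their normalized distributions gives an observed parameter λ = Σ f_i λ_i/(3 + λ_i) / Σ f_i/(3 + λ_i). The fractions and polarizations used below are purely illustrative assumptions, not measured values.

```python
def mixed_lambda(components):
    """Effective polar anisotropy of a mixture of sub-samples.

    components: iterable of (fraction, lam) pairs, all referring to the same
    polarization axis. Each sub-sample follows W ~ (1 + lam * cos^2) / (3 + lam).
    """
    components = list(components)
    numerator = sum(f * lam / (3.0 + lam) for f, lam in components)
    denominator = sum(f / (3.0 + lam) for f, lam in components)
    return numerator / denominator


if __name__ == "__main__":
    # Hypothetical scenario: two thirds fully transverse direct production,
    # one third fully longitudinal feed-down.
    direct = (2.0 / 3.0, +1.0)
    feed_down = (1.0 / 3.0, -1.0)
    print(f"observed lambda = {mixed_lambda([direct, feed_down]):.3f}")
```

In this toy scenario the mixture is indistinguishable from unpolarized production in the polar-angle distribution, which is why the feed-down fractions and the χ polarizations need to be measured before the direct component can be isolated.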

Few measurements are so far available on the production and polarization of P-wave quarkonia (χc and χb), which are experimentally challenging because the main detection channels involve radiative decays producing low-energy photons. In this respect the Υ(3S) resonance, only affected by feed-down decays from the recently discovered χb(3P) state, a presumably small contribution, offers a clearer comparison between predictions and measurements: the verdict is that there is striking disagreement, as the left-hand figure above shows.

A more decisive assessment of the seriousness of the theory difficulties is provided by measurements of the polarization of high-pT charmonia. Such data probe a domain of high values of the ratio of pT to mass, where the NRQCD prediction is supposed to rest on firmer ground. Furthermore, the heavier charmonium state, ψ(2S), is free from feed-down decays and so its decay angular distribution exclusively reflects the polarization of S-wave quarkonia directly produced in parton–parton scattering, therefore representing a cleaner test of theory. The results for the ψ(2S) shown recently by the CMS collaboration at the Large Hadron Collider Physics Conference, reaching up to pT of 50 GeV, are in disagreement with the theoretically expected transverse polarization, as the right-hand figure indicates (CMS collaboration 2013b). This challenges the assumed hypothesis that long- and short-distance aspects of the strong force can be separated in calculations on these QCD phenomena. The ultimate “smoking-gun signal” will come from measurements of the polarization of directly produced J/ψ mesons. These probe higher pT/mass ratios and lower heavy-quark velocities than the studies of ψ(2S) but at additional cost in the necessary experimental discrimination of the J/ψ mesons from χc decays.

Definite judgements will have to wait for more thorough scrutiny of the theoretical ingredients. An explicit proof that perturbative and non-perturbative effects can be factorized – already existing for several hard-scattering processes in QCD – has yet to be formally provided for the case of quarkonium production. At the same time, the method to determine the colour-octet transition-matrix elements using measured pT spectra must be improved. For example, the existing NRQCD global fits use differential cross-sections measured with acceptance corrections that are evaluated assuming unpolarized production, ignoring the large uncertainty that the experiments assign to the lack of prior knowledge about quarkonium polarization (the acceptance determinations strongly depend on the shape of the dilepton decay distributions). Paradoxically, the fit results lead to the prediction of strong transverse polarization. Moreover, while the NRQCD predictions are considered robust only at sufficiently high pT, the fits assign equal weight to data collected at high pT and those collected at pT values that are similar to the quarkonium mass, which drive the results because of their higher precision. Finally, it could be that the higher-order corrections in the perturbative part of the calculations (currently performed at next-to-leading order in αs) are sizable and not yet well accounted for in current theoretical uncertainties, or that the LDME expansion in the heavy-quark velocity should be reconsidered.
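The sensitivity of acceptance corrections to the assumed polarization can be illustrated with a deliberately simplified toy. Real acceptances are determined by muon momentum and pseudorapidity requirements through detector simulation; here, as a stand-in assumption, the detector is taken to accept only decays with |cos θ| below a hypothetical cut-off `cos_max`, and the decay follows W(cos θ) ∝ 1 + λ cos²θ.

```python
def toy_acceptance(lam, cos_max):
    """Fraction of decays with |cos theta| < cos_max for W ~ 1 + lam * cos^2(theta).

    Obtained by integrating the normalized distribution over [-cos_max, cos_max].
    """
    return (3.0 * cos_max + lam * cos_max ** 3) / (3.0 + lam)


def cross_section_bias(true_lam, assumed_lam, cos_max):
    """Ratio of the corrected to the true yield when the acceptance is computed
    with assumed_lam although the decays actually follow true_lam."""
    return toy_acceptance(true_lam, cos_max) / toy_acceptance(assumed_lam, cos_max)


if __name__ == "__main__":
    cos_max = 0.5  # hypothetical cut-off, chosen only for illustration
    for lam in (-1.0, 0.0, 1.0):
        print(f"lambda = {lam:+.0f}: acceptance = {toy_acceptance(lam, cos_max):.3f}")
    bias = cross_section_bias(true_lam=1.0, assumed_lam=0.0, cos_max=cos_max)
    print(f"bias if transverse data are corrected as unpolarized: {bias:.3f}")
```

Even in this crude picture the accepted fraction changes substantially between the longitudinal and transverse extremes, so a cross-section corrected under the unpolarized assumption would be biased if the true polarization were strong.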

The solution to the quarkonium-polarization problem remains unknown but it seems a safe bet that it will open new perspectives over a whole class of processes. It could unveil an improved Standard Model capable of providing testable predictions for high-momentum production of a large category of non-perturbative hadronic objects. In any case, it will surely stimulate profound rethinking of how such phenomena can be described and predicted.