Throughout history, our notion of space and time has undergone a number of dramatic transformations, thanks to figures ranging from Aristotle, Leibniz and Newton to Gauss, Poincaré and Einstein. In our present understanding of nature, space and time form a single 4D entity called space–time. This entity plays a key role throughout physics: either as a passive spectator, providing the arena in which physical processes take place, or, in the case of gravity as understood by Einstein’s general relativity, as an active participant.

Since the birth of special relativity in 1905 and the CPT theorem of Bell, Lüders and Pauli in the 1950s, we have come to appreciate both Lorentz and CPT symmetry as cornerstones of the underlying structure of space–time. The former states that physical laws are unchanged when transforming between two inertial frames, while the latter is the symmetry of physical laws under the simultaneous transformations of charge conjugation (C), parity inversion (P) and time reversal (T). These closely entwined symmetries guarantee that space–time provides a level playing field for all physical systems independent of their spatial orientation and velocity, or whether they are composed of matter or antimatter. Both have stood the test of time, but in the last quarter century these cornerstones have come under renewed scrutiny as to whether they are indeed exact symmetries of nature. Were physicists to find violations, it would lead to profound revisions in our understanding of space and time and force us to correct both general relativity and the Standard Model of particle physics.

Accessing the Planck scale

Several considerations have spurred significant enthusiasm for testing Lorentz and CPT invariance in recent years. One is the observed bias of nature towards matter – an imbalance that is difficult, although perhaps possible, to explain using standard physics. Another stems from the synthesis of two of the most successful physics concepts in history: unification and symmetry breaking. Many theoretical attempts to combine quantum theory with gravity into a theory of quantum gravity allow for tiny departures from Lorentz and CPT invariance. Surprisingly, even deviations that are suppressed by 20 orders of magnitude or more are experimentally accessible with present technology. Few, if any, other experimental approaches to finding new physics can provide such direct access to the Planck scale.
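To give a feel for where a suppression of "20 orders of magnitude" can come from, the short sketch below compares a few familiar energy scales with the Planck energy. It is only an order-of-magnitude illustration: the article does not say which ratio it has in mind, so the choice of reference energies (and the helper name `orders_below_planck`) is an assumption made here, and the constants are rounded.

```python
import math

# Rounded reference values in GeV (illustrative, not precision inputs).
PLANCK_ENERGY_GEV = 1.22e19      # approximate Planck energy
REFERENCE_ENERGIES_GEV = {
    "electron rest energy": 0.511e-3,
    "proton rest energy": 0.938,
    "electroweak scale": 246.0,
}

def orders_below_planck(energy_gev: float) -> float:
    """Number of decimal orders of magnitude by which an energy lies below the Planck energy."""
    return math.log10(PLANCK_ENERGY_GEV / energy_gev)

if __name__ == "__main__":
    for label, energy in REFERENCE_ENERGIES_GEV.items():
        print(f"{label}: about {orders_below_planck(energy):.1f} orders below the Planck scale")
```

With these rounded inputs the ratios span roughly 17 to 22 orders of magnitude, which is the regime the text describes as experimentally accessible.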

Unfortunately, current models of quantum gravity cannot accurately pinpoint experimental signatures for Lorentz and CPT violation. An essential milestone has therefore been the development of a general theoretical framework that incorporates Lorentz and CPT violation into both the Standard Model and general relativity: the Standard Model Extension (SME), as formulated by Alan Kostelecký of Indiana University in the US and coworkers beginning in the early 1990s. Due to its generality and independence of the underlying models, the SME achieves the ambitious goal of allowing the identification, analysis and interpretation of all feasible Lorentz and CPT tests (see panel below). Any putative quantum-gravity remnants associated with Lorentz breakdown enter the SME as a multitude of preferred directions criss-crossing space–time. As a result, the playing field for physical systems is no longer level: effects may depend slightly on spatial orientation, uniform velocity, or whether matter or antimatter is involved. These preferred directions are the coefficients of the SME framework; they parametrise the type and extent of Lorentz and CPT violation, offering specific experiments the opportunity to try to glimpse them.

The Standard Model Extension

At the core of attempts to detect violations in space–time symmetry is the Standard Model Extension (SME) – an effective field theory that contains not just the SM but also general relativity and all possible operators that break Lorentz symmetry. It can be expressed as a Lagrangian in which each Lorentz-violating term has a coefficient that leads to a testable prediction of the theory.
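As a schematic illustration of that structure, the SME Lagrangian can be written as the usual Standard Model and gravitational pieces plus Lorentz-violating corrections. The block below shows a few representative terms for a single Dirac fermion in the notation commonly used in the SME literature; it is a sketch of the general form rather than the full set of operators, and the choice of which terms to display is made here for illustration.

```latex
\mathcal{L}_{\mathrm{SME}}
  = \mathcal{L}_{\mathrm{SM}} + \mathcal{L}_{\mathrm{GR}} + \delta\mathcal{L},
\qquad
\delta\mathcal{L} \supset
  - a_\mu\, \bar\psi \gamma^\mu \psi
  - b_\mu\, \bar\psi \gamma_5 \gamma^\mu \psi
  + \tfrac{i}{2}\, c^{\mu\nu}\, \bar\psi \gamma_\mu
      \overleftrightarrow{\partial_\nu} \psi
  + \dots
```

Here $a_\mu$, $b_\mu$ and $c^{\mu\nu}$ are constant coefficients that act as preferred directions in space–time. The $a_\mu$ and $b_\mu$ terms are odd under CPT, whereas the $c^{\mu\nu}$ term is CPT-even, so a coefficient can break Lorentz symmetry without breaking CPT.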

Lorentz and CPT research is unique in the exceptionally wide range of experiments it offers. The SME makes predictions for symmetry-violating effects in systems involving neutrinos, gravity, meson oscillations, cosmic rays, atomic spectra, antimatter, Penning traps and collider physics, among others. In the case of free particles, Lorentz and CPT violation lead to a dependence of observables on the direction and magnitude of the particles’ momenta, on their spins, and on whether particles or antiparticles are studied. For a bound system such as atomic and nuclear states, the energy spectrum depends on its orientation and velocity and may differ from that of the corresponding antimatter system.
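The orientation dependence can be made concrete with a small sketch. Assume, purely for illustration, a constant background vector fixed in a non-rotating frame whose Z axis lies along the Earth's rotation axis. A laboratory on the rotating Earth then sees the components of that vector vary over a sidereal day, which is why many searches look for signals modulated at the sidereal frequency. The function name, the frame convention (lab x pointing south, y east, z vertically up) and the neglect of the Earth's orbital motion are assumptions of this sketch, not details taken from the article.

```python
import math

SIDEREAL_DAY_S = 86164.1  # approximate length of a sidereal day in seconds

def lab_components(b_fixed, colatitude_rad, t_s):
    """Rotate a constant vector from the non-rotating frame into the lab frame at time t_s.

    b_fixed: (bX, bY, bZ) in the non-rotating frame, Z along Earth's rotation axis.
    colatitude_rad: colatitude of the laboratory.
    Returns (bx, by, bz) with lab x south, y east, z up.
    """
    bX, bY, bZ = b_fixed
    wt = 2.0 * math.pi * t_s / SIDEREAL_DAY_S   # sidereal rotation phase
    c, s = math.cos(colatitude_rad), math.sin(colatitude_rad)
    cw, sw = math.cos(wt), math.sin(wt)
    bx = c * cw * bX + c * sw * bY - s * bZ
    by = -sw * bX + cw * bY
    bz = s * cw * bX + s * sw * bY + c * bZ
    return bx, by, bz

if __name__ == "__main__":
    b = (1.0, 0.0, 0.5)            # hypothetical coefficient values, arbitrary units
    chi = math.radians(45.0)       # hypothetical laboratory colatitude
    for hour in range(0, 24, 6):
        print(hour, lab_components(b, chi, hour * 3600.0))
```

In this picture the vertical component is a constant offset, proportional to bZ, plus a part that oscillates once per sidereal day; an experiment sensitive to that component would look for exactly this modulation.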

The vast spectrum of experiments and latest results in this field were the subject of the triennial CPT conference held at Indiana University in June this year (see panel below), highlights from which form the basis of this article.

The seventh triennial CPT conference

A host of experimental efforts to probe space–time symmetries, summarised in the main text of this article, were the focus of the week-long Seventh Meeting on CPT and Lorentz Symmetry (CPT’16), held at Indiana University, Bloomington, US, on 20–24 June. With around 120 experts from five continents discussing the most recent developments in the subject, it was the largest meeting so far in this one-of-a-kind triennial conference series. Many of the sessions included presentations involving experiments at CERN, and the discussions covered a number of key results from experiments at the Antiproton Decelerator and future improvements expected from the commissioning of ELENA. The common thread weaving through all of these talks heralds an exciting emergent era of low-energy Planck-reach fundamental physics with antimatter.

CERN matters

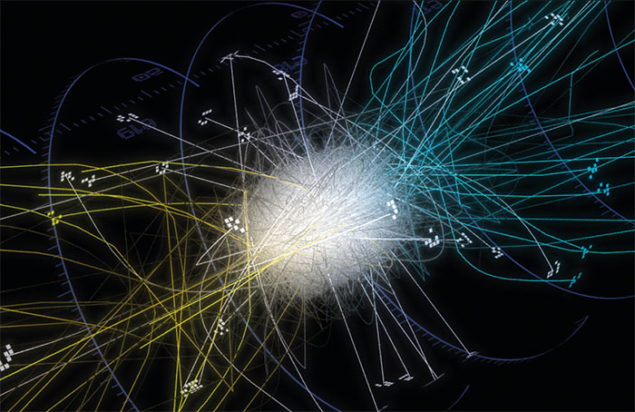

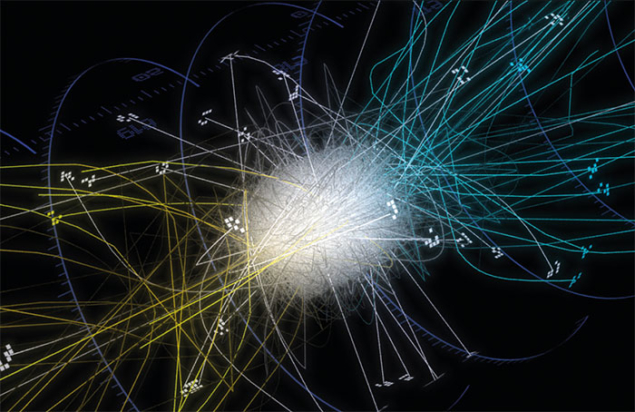

As host to the world’s only cold-antiproton source for precision antimatter physics (the Antiproton Decelerator, AD) and the highest-energy particle accelerator (the Large Hadron Collider, LHC), CERN is in a unique position to investigate the microscopic structure of space–time. The corresponding breadth of measurements at these extreme ends of the energy regime guarantees complementary experimental approaches to Lorentz and CPT symmetry at a single laboratory. Furthermore, the commissioning of the new ELENA facility at CERN is opening up brand-new tests of Lorentz and CPT symmetry in the antimatter sector (see panel below).

Cold antiprotons offer powerful tests of CPT symmetry

CPT – the combination of charge conjugation (C), parity inversion (P) and time reversal (T) – represents a discrete symmetry between matter and antimatter. As the standard CPT test framework, the Standard Model Extension (SME) possesses a feature that might perhaps seem curious at first: CPT violation always comes with a breakdown of Lorentz invariance. However, an extraordinary insight gleaned from the celebrated CPT theorem of the 1950s is that Lorentz symmetry already contains CPT invariance under “mild smoothness” assumptions: since CPT is essentially a special Lorentz transformation with a complex-valued velocity, the symmetry holds whenever the equations of physics are smooth enough to allow continuation into the complex plane. Unsurprisingly, then, the loss of CPT invariance requires Lorentz breakdown, an argument made rigorous in 2002. Lorentz violation, on the other hand, does not imply CPT breaking.

That CPT breaking comes with Lorentz violation has the profound experimental implication that CPT tests do not necessarily have to involve both matter and antimatter: hypothetical CPT violation might also be detectable via the concomitant Lorentz breaking in matter alone. But this feature comes at a cost: the corresponding Lorentz tests typically cannot disentangle CPT-even and CPT-odd signals and, worse, they may even be blind to the effect altogether. Antimatter experiments decisively brush aside these concerns, and the availability at CERN of cold antiprotons has thus opened an unparalleled avenue for CPT tests. In fact, all six fundamental-physics experiments that use CERN’s antiprotons have the potential to place independent limits on distinct regions of the SME’s coefficient space. The upcoming Extra Low ENergy Antiproton (ELENA) ring at CERN (see “CERN soups up its antiproton source”) will provide substantially upgraded access to antiprotons for these experiments.

One exciting type of CPT test that will be conducted independently by the ALPHA, ATRAP and ASACUSA experiments is to produce antihydrogen, an atom made up of an antiproton and a positron, and compare its spectrum to that of ordinary hydrogen. While the production of cold antihydrogen has already been achieved by these experiments, present efforts are directed at precision spectroscopy, which promises clean and competitive constraints on various CPT-breaking SME coefficients for the proton and electron.

At present, the gravitational interaction of antimatter remains virtually untested. The AEgIS and GBAR experiments will tackle this issue by dropping antihydrogen atoms in the Earth’s gravity field. These experiments differ in their detailed set-up, but both are projected to permit initial measurements of the gravitational acceleration, g, for antihydrogen at the per cent level. The results will provide limits on SME coefficients for the couplings between antimatter and gravity that are inaccessible with other experiments.
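To see what a per-cent-level determination of g demands, consider an idealised drop from rest through a known height, where h = g t²/2. The sketch below is textbook kinematics with simple uncorrelated error propagation; it is not a description of how AEgIS or GBAR actually extract g, and the function names and numerical inputs are hypothetical.

```python
import math

def g_from_drop(height_m: float, fall_time_s: float) -> float:
    """Gravitational acceleration inferred from an ideal drop from rest: g = 2h / t^2."""
    return 2.0 * height_m / fall_time_s ** 2

def fractional_g_uncertainty(rel_height_err: float, rel_time_err: float) -> float:
    """Relative uncertainty on g for uncorrelated relative errors on h and t.

    Because g scales as h / t^2, the timing error enters with a factor of two.
    """
    return math.hypot(rel_height_err, 2.0 * rel_time_err)

if __name__ == "__main__":
    h = 0.10                                   # hypothetical drop height in metres
    t = math.sqrt(2.0 * h / 9.81)              # fall time if antihydrogen fell like matter
    print(f"fall time: {t * 1e3:.1f} ms, inferred g: {g_from_drop(h, t):.2f} m/s^2")
    print(f"relative error on g: {fractional_g_uncertainty(0.0, 0.005):.3f}")
```

In this idealisation, a 1% measurement of g requires the fall time to be known to about 0.5% if the height is known exactly.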

A third fascinating type of CPT test is based on the equality of the physical properties of a particle and its antiparticle, as guaranteed by CPT invariance. The ATRAP and BASE experiments have been advocating such a comparison between protons and antiprotons confined in a cryogenic Penning trap. Impressive results for the charge-to-mass ratios and g factors have already been obtained at CERN and are poised for substantial future improvements. These measurements permit clean bounds on SME coefficients of the proton with record sensitivities.
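The link between a Penning-trap measurement and the charge-to-mass ratio can be sketched with the free cyclotron frequency, ν_c = |q|B/(2πm): in the same magnetic field, the ratio of two particles' cyclotron frequencies equals the ratio of their charge-to-mass ratios. The code below is an idealised illustration under that assumption only. The field value and the function names are hypothetical, and real experiments involve additional trap frequencies and systematic corrections that are not modelled here.

```python
import math

ELEMENTARY_CHARGE_C = 1.602176634e-19   # exact SI value
PROTON_MASS_KG = 1.67262192369e-27      # CODATA 2018 recommended value

def cyclotron_frequency_hz(charge_c: float, mass_kg: float, field_t: float) -> float:
    """Free cyclotron frequency |q| B / (2 pi m) of a charged particle in a uniform field."""
    return abs(charge_c) * field_t / (2.0 * math.pi * mass_kg)

def charge_to_mass_deviation(freq_a_hz: float, freq_b_hz: float) -> float:
    """Fractional difference of |q|/m between two particles measured in the same field."""
    return freq_a_hz / freq_b_hz - 1.0

if __name__ == "__main__":
    field = 2.0  # hypothetical trap field in tesla
    nu_p = cyclotron_frequency_hz(ELEMENTARY_CHARGE_C, PROTON_MASS_KG, field)
    # If CPT holds, an antiproton has the same mass and opposite charge,
    # so its cyclotron frequency in the same field is identical.
    nu_pbar = cyclotron_frequency_hz(-ELEMENTARY_CHARGE_C, PROTON_MASS_KG, field)
    print(f"proton cyclotron frequency: {nu_p / 1e6:.3f} MHz")
    print(f"fractional q/m difference: {charge_to_mass_deviation(nu_pbar, nu_p):.1e}")
```

A non-zero fractional difference between the measured frequencies would signal a difference in the charge-to-mass ratios, which is the quantity bounded in such comparisons.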

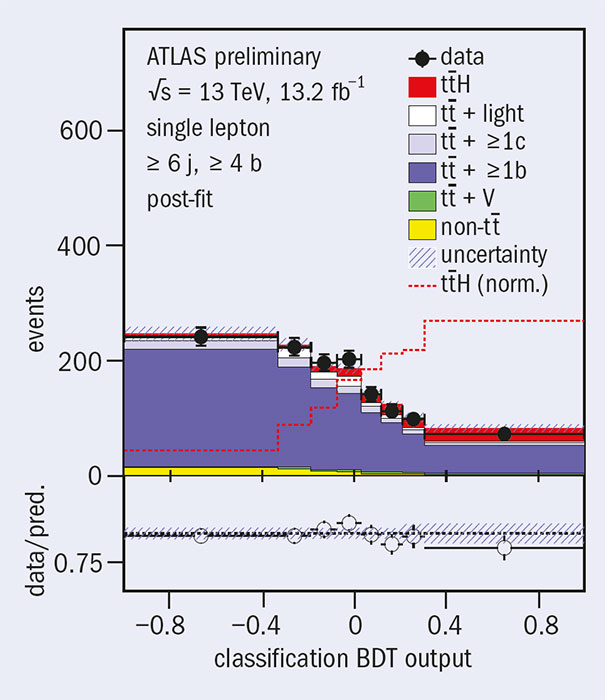

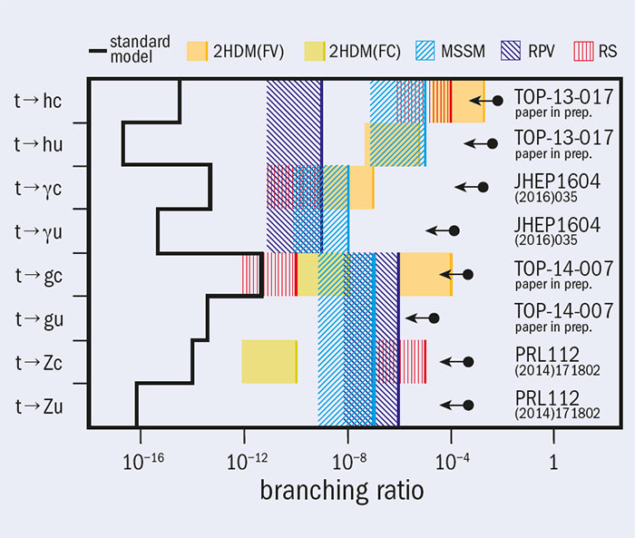

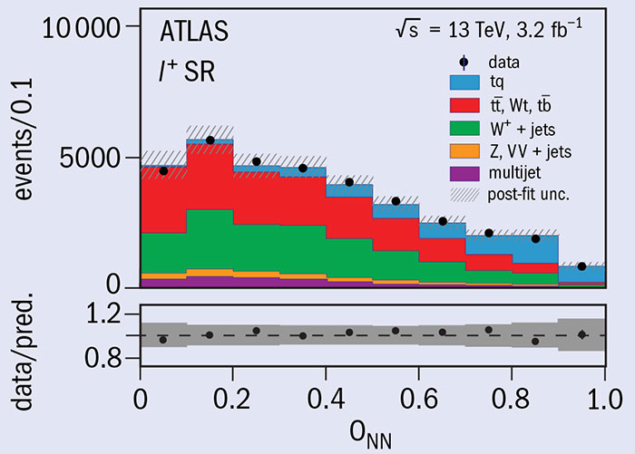

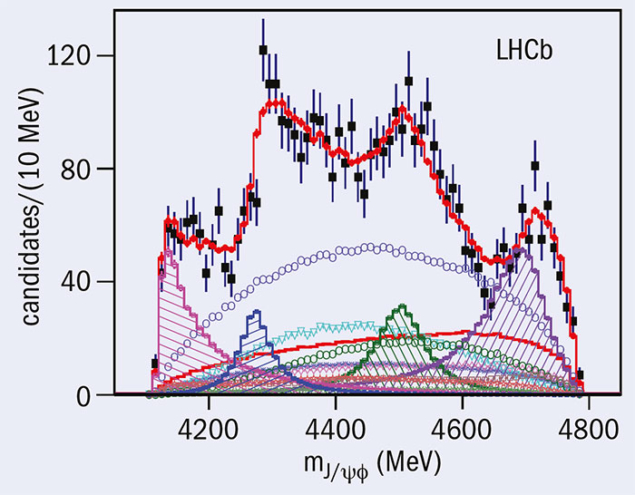

Regarding the LHC, the latest Lorentz- and CPT-violation physics comes from the LHCb collaboration, which studies particles made up of b quarks. The experiment’s first measurements of SME coefficients in the Bd and Bs systems, published in June this year, have improved existing results by up to two orders of magnitude. LHCb also has competition from other major neutral-meson experiments. These involve studies of the Bs system at the Tevatron’s DØ experiment, recent searches for Lorentz and CPT violation with entangled kaons at KLOE and the upcoming KLOE-2 at DAΦNE in Italy, as well as results on CPT-symmetry tests in Bd mixing and decays from the BaBar experiment at SLAC. The LHC’s general-purpose ATLAS and CMS experiments, meanwhile, hold promise for heavy-quark studies. Data on single-top production at these experiments would allow the world’s first CPT test for the top quark, while the measurement of top–antitop production can sharpen by a factor of 10 the earlier measurements of CPT-even Lorentz violation at DØ.

Other possibilities for accelerator tests of Lorentz and CPT invariance include deep inelastic scattering and polarised electron–electron scattering. The first ever analysis of the former offers a way to access previously unconstrained SME coefficients in QCD employing data from, for example, the HERA collider at DESY. Polarised electron–electron scattering, on the other hand, allows constraints to be placed on currently unmeasured Lorentz violations in the Z boson, which are also parameterised by the SME and have relevance for SLAC’s E158 data and the proposed MOLLER experiment at JLab. Lorentz-symmetry breaking would also throw the muon spin precession in a storage ring very slightly out of sync, an effect accessible to muon g−2 measurements at J-PARC and Fermilab.

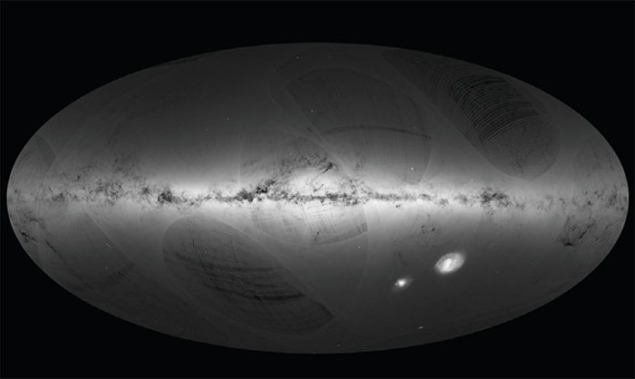

Historically, electromagnetism is perhaps most closely associated with Lorentz tests, and this idea continues to exert a sustained influence on the field. Modern versions of the classical Michelson–Morley experiment have been realised with tabletop resonant cavities as well as with the multi-kilometre LIGO interferometer, with upcoming improvements promising unparalleled measurements of the SME’s photon sector. Another approach for testing Lorentz and CPT symmetry is to study the energy- and direction-dependent dispersion of photons as predicted by the SME. Recent observations by the space-based Fermi Large Area Telescope severely constrain this effect, placing tight limits on 25 individual non-minimal SME coefficients for the photon.

AMO techniques

Experiments in atomic, molecular and optical (AMO) physics are also providing powerful probes of Lorentz and CPT invariance and these are complementary to accelerator-based tests. AMO techniques excel at testing Lorentz-violating effects that do not grow with energy, but they are typically confined to normal-matter particles and cannot directly access the SME coefficients of the Higgs or the top quark. Recently, advances in this field have allowed researchers to carry out interferometry using systems other than light, and an intriguing idea is to use entangled wave functions to create a Michelson–Morley interferometer within a single Yb+ ion. The strongly enhanced SME effects in this system, which arise due to the ion’s particular energy-level structure, could improve existing limits by five orders of magnitude.

Other AMO systems, such as atomic clocks, have long been recognised as a backbone of Lorentz tests. The bright SME prospects arising from the latest trend toward optical clocks, which are several orders of magnitude more precise than traditional varieties based on microwave transitions, are being examined by researchers at NIST and elsewhere. Also, measurements on the more exotic muonium atom at J-PARC and at PSI can place limits on the SME’s muon coefficients, a topic of significant interest in light of several current puzzles involving the muon.

From neutrinos to gravity

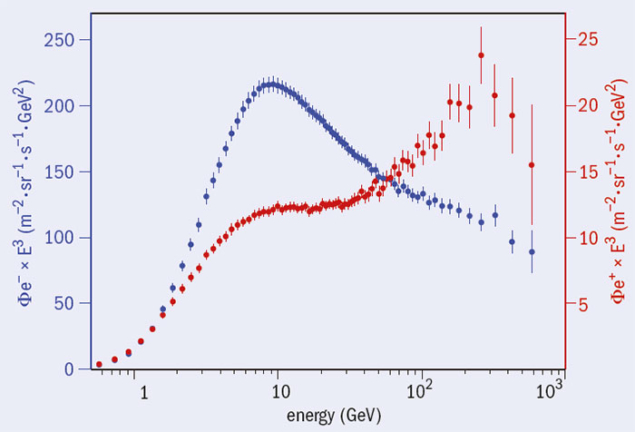

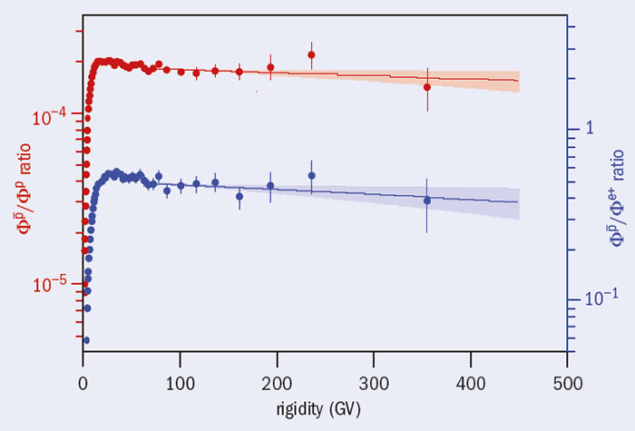

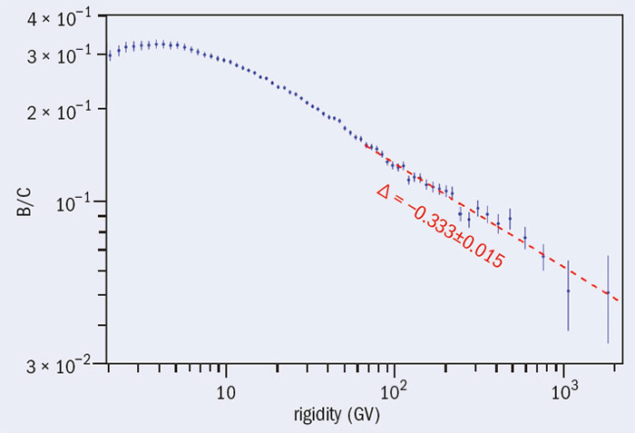

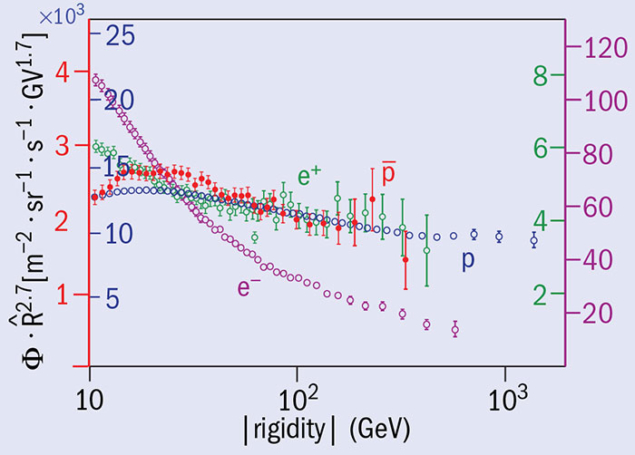

Unknown neutrino properties, such as their mass, and tension between various neutrino measurements have stimulated a wealth of recent research including a number of SME analyses. The breakdown of Lorentz and CPT symmetry would cause the ordinary neutrino–neutrino and antineutrino–antineutrino oscillations to exhibit unusual direction, energy and flavour dependence, and would also induce unconventional neutrino–antineutrino mixing and kinematic effects – the latter leading to modified velocities and dispersion, as measured in time-of-flight experiments. Existing and planned neutrino experiments offer many opportunities to examine such effects. For example: upcoming results from the Daya Bay experiment should yield improved limits on Lorentz violation from antineutrino–antineutrino mixing; EXO has obtained the first direct experimental bound on a difficult-to-access “counter-shaded” coefficient extracted from the electron spectrum of double beta decay; T2K has announced new constraints on the a and c coefficients, tightened by a factor of two, using muon neutrinos; and IceCube promises extreme sensitivities to “non-minimal” effects with kinematical studies of astrophysical neutrinos, such as Cherenkov effects of various kinds.

The feebleness of gravity makes the corresponding Lorentz and CPT tests in this SME sector particularly challenging. This has led researchers from HUST in China and from Indiana University to use an ingenious tabletop experiment to seek Lorentz breaking in the short-range behaviour of the gravitational force. The idea is to bring gravitationally interacting test masses to within submillimetre ranges of one another and observe their mechanical resonance behaviour, which is sensitive to deviations from Lorentz symmetry in the gravitational field. Other groups are carrying out related cutting-edge measurements of SME gravity coefficients with laser ranging of the Moon and other solar-system objects, while analysis of the gravitational-wave data recently obtained by LIGO has already yielded many first constraints on SME coefficients in the gravity sector, with the promise of more to come.

After a quarter century of experimental and theoretical work, the modern approach to Lorentz and CPT tests remains as active as ever. As the theoretical understanding of Lorentz and CPT violation continues to evolve at a rapid pace, it is remarkable that experimental studies continue to follow closely behind and now stretch across most subfields of physics. The range of physical systems involved is truly stunning, and the growing number of different efforts displays the liveliness and exciting prospects for a research field that could help to unlock the deepest mysteries of the universe.