What, in a nutshell, did you uncover in your famous 1997 work, which became the most cited in high-energy physics?

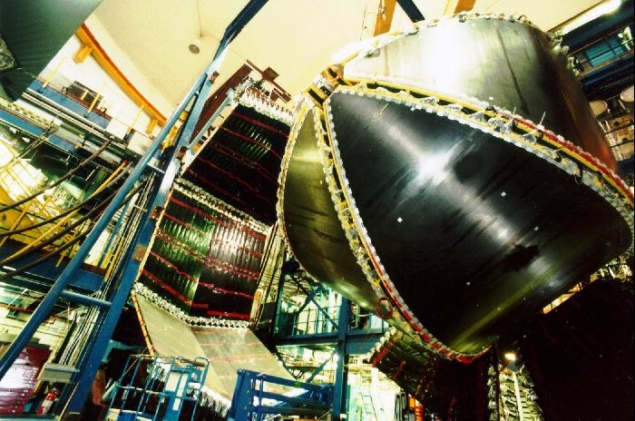

The paper conjectured a relation between certain quantum field theories and gravity theories. The idea was that a strongly coupled quantum system can generate complex quantum states that have an equivalent description in terms of a gravity theory (or a string theory) in a higher dimensional space. The paper considered special theories that have lots of symmetries, including scale invariance, conformal invariance and supersymmetry, and the fact that those symmetries were present on both sides of the relationship was one of the pieces of evidence for the conjecture. The main argument relating the two descriptions involved objects that appear in string theory called D-branes, which are a type of soliton. Polchinski had previously given a very precise description of the dynamics of D-branes. At low energies a soliton can be described by its centre-of-mass position: if you have N solitons you will have N positions. With D-branes it is the same, except that when they coincide there is a non-Abelian SU(N) gauge symmetry that relates these positions. So this low-energy theory resembles quantum chromodynamics, except that it has N colours and special matter content.

On the other hand, these D-brane solitons also have a gravitational description, found earlier by Horowitz and Strominger, in which they look like “black branes” – objects similar to black holes but extended along certain spatial directions. The conjecture was simply that these two descriptions should be equivalent. The gravitational description becomes simple when N and the effective coupling are very large.
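For the best-studied example, N = 4 super-Yang–Mills theory and string theory on AdS5 × S5, the statement that the gravity side simplifies when N and the effective coupling are large can be made roughly quantitative. A sketch, assuming the common convention g_YM² = 4π g_s (other conventions differ by factors of 2), with R the curvature radius, ℓ_s the string length and G_N the five-dimensional Newton constant:

$$
\lambda \equiv g_{\mathrm{YM}}^2 N, \qquad \frac{R^4}{\ell_s^4} = \lambda, \qquad \frac{G_N}{R^3} \sim \frac{1}{N^2}
$$

Large λ makes the spacetime weakly curved in string units, so classical gravity can replace full string theory; large N makes Newton's constant small in units of R, suppressing quantum-gravity corrections.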

Did you stumble across the duality, or had you set out to find it?

It was based on previous work on the connection between D-branes and black holes. The first major result in this direction was the computation of Strominger and Vafa, who considered an extremal black hole and compared it to a collection of D-branes. By computing the number of states into which these D-branes can be arranged, they found that it matched the Bekenstein–Hawking black-hole entropy given in terms of the area of the horizon. Such black holes have zero temperature. By slightly exciting these black holes, some of us were attempting to extend such results to non-zero temperatures, which allowed us to probe the dynamics of those nearly extremal black holes. Some computations gave matching answers, sometimes exactly, sometimes only up to numerical coefficients. It was clear that there was a deep relation between the two, but it was unclear what the concrete relation was. The gravity–gauge (AdS/CFT) conjecture clarified the relationship.
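For reference, the Bekenstein–Hawking entropy mentioned here is a quarter of the horizon area A measured in Planck units:

$$
S_{\mathrm{BH}} = \frac{k_B c^3 A}{4 G \hbar} = \frac{k_B A}{4 \ell_P^2}, \qquad \ell_P^2 = \frac{G \hbar}{c^3}
$$

The Strominger–Vafa result was that the logarithm of the number of D-brane states, computed at large charges, reproduces this value for a class of extremal black holes.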

Are you surprised by its lasting impact?

Yes. At the time I thought that it was going to be interesting for people thinking about quantum gravity and black holes. But the applications that people found to other areas of physics continue to surprise me. It is important for understanding quantum aspects of black holes. It was also useful for understanding very strongly coupled quantum theories. Most of our intuition for quantum field theory is for weakly coupled theories, but interesting new phenomena can arise at strong coupling. These examples of strongly coupled theories can be viewed as useful calculable toy models. The art lies in extracting the right lessons from them. Some of the lessons include possible bounds on transport, a bound on chaos, etc. These applications involved a great deal of ingenuity, since one has to decide which features of the examples carry over to real-world systems.
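The bound on chaos referred to here is usually stated as a limit on the Lyapunov exponent λ_L that controls the exponential growth of out-of-time-order correlators in a thermal quantum system at temperature T:

$$
\lambda_L \le \frac{2 \pi k_B T}{\hbar}
$$

Black holes in the gravity description saturate this bound, which is the sense in which they are called maximally chaotic.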

What does the gravity–gauge duality tell us about nature, given that it relates two pictures (e.g. involving different dimensionalities of space) that have not yet been shown to correspond to the physical world?

It suggests that the quantum description of spacetime can be in terms of degrees of freedom that are not localised in space. It also says that black holes are consistent with quantum mechanics, when we look at them from the outside. More recently, it was understood that when we try to describe the black-hole interior, then we find surprises. What we encounter in the interior of a black hole seems to depend on what the black hole is entangled with. At first this looks inconsistent with quantum mechanics, since we cannot influence a system through entanglement. But it is not. Standard quantum mechanics applies to the black hole as seen from the outside. But to explore the interior you have to jump in, and you cannot tell the outside observer what you encountered inside.

One of the most interesting recent lessons is the important role that entanglement plays in constructing the geometry of spacetime. This is particularly important for the black-hole interior.

I suspect that with the advent of quantum computers, it will become increasingly possible to simulate these complex quantum systems that have some features similar to gravity. This will likely lead to more surprises.

In what sense does AdS/CFT allow us to discuss the interior of a black hole?

It gives us a direct view of a black hole from the outside, or more precisely a view of the black hole from very far away. In principle, from this description we should be able to understand what goes on in the interior. While there has been some progress on understanding some aspects of the interior, a full understanding is still lacking. It is important to understand that there are lots of weird possibilities for black-hole interiors. Those we get from gravitational collapse are relatively simple, but there are solutions, such as the full two-sided Schwarzschild solution, where the interior is shared between two black holes that are very far away. The full Schwarzschild solution can therefore be viewed as two entangled black holes in a particular state called the thermofield double, a suggestion made by Werner Israel in the 1970s. The idea is that by entangling two black holes we can create a geometric connection through their interiors: the black holes can be very far away, but the distance through the interior could be very short. However, the geometry is time-dependent and signals cannot go from one side to the other. The geometry inside is like a collapsing wormhole that closes off before a signal can go through. In fact, this is a necessary condition for the interpretation of these geometries as entangled states, since we cannot send signals using entanglement. Susskind and I have emphasised this connection via the "ER=EPR" slogan. This says that EPR correlations (or entanglement) should generally give rise to some sort of "geometric" connection, or Einstein–Rosen bridge, between the two systems. The Einstein–Rosen bridge is the geometric connection between two black holes present in the full Schwarzschild solution.
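A toy illustration of the thermofield double, assuming nothing about black holes themselves: for any finite spectrum E_n (the values used below are hypothetical), the state |TFD⟩ = Z^(-1/2) Σ_n exp(-βE_n/2) |n⟩_L |n⟩_R has Schmidt weights p_n = exp(-βE_n)/Z, so each copy on its own looks exactly thermal. The sketch checks numerically that the entanglement entropy between the two copies equals the thermal entropy of a single copy (units with k_B = 1).

```python
import math


def boltzmann_weights(energies, beta):
    """Return (weights, z) with energies shifted by their minimum.

    The shift multiplies every weight by the same factor, so normalized
    probabilities are unchanged; it only avoids overflow/underflow.
    """
    e0 = min(energies)
    weights = [math.exp(-beta * (e - e0)) for e in energies]
    return weights, sum(weights)


def tfd_probabilities(energies, beta):
    """Schmidt weights p_n = exp(-beta*E_n)/Z of the thermofield double.

    These are also the eigenvalues of the reduced density matrix of either copy.
    """
    weights, z = boltzmann_weights(energies, beta)
    return [w / z for w in weights]


def entanglement_entropy(probs):
    """Von Neumann entropy -sum p ln p of one copy's reduced density matrix."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def thermal_entropy(energies, beta):
    """Thermodynamic entropy S = beta*<E> + ln Z of a single copy.

    Computed with shifted energies; the shift cancels between beta*<E> and ln Z.
    """
    e0 = min(energies)
    weights, z = boltzmann_weights(energies, beta)
    mean_shifted = sum((e - e0) * w for e, w in zip(energies, weights)) / z
    return beta * mean_shifted + math.log(z)


if __name__ == "__main__":
    spectrum = [0.0, 1.0, 1.0, 2.5]  # hypothetical energy levels, arbitrary units
    beta = 0.7                       # hypothetical inverse temperature
    probs = tfd_probabilities(spectrum, beta)
    print(entanglement_entropy(probs), thermal_entropy(spectrum, beta))
```

The two printed numbers agree up to floating-point rounding. Nothing measured on one copy alone depends on what is done to the other; the correlation between them lives entirely in the entanglement.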

Are there potential implications of this relationship for intergalactic travel?

Gao, Jafferis and Wall have shown that an interesting new feature appears when one brings two entangled black holes close to each other. Now there can be a direct interaction between the two black holes and the thermofield double state can be close to the ground state of the combined system. In this case, the geometry changes and the wormhole becomes traversable.


In fact, as shown by Milekhin, Popov and myself, one can find solutions of the Standard Model plus gravity that look like two microscopic magnetically charged black holes joined by a wormhole. We could construct a controllable solution only for small black holes because we needed to approximate the fermions as being massless.

If one wanted a big macroscopic wormhole through which a human could travel, then it would be possible with suitable assumptions about the dark sector. We'd need a dark U(1) gauge field and a very large number of massless fermions charged under that U(1). In that case, a pair of magnetically charged black holes would enable one to travel between distant places. There is one catch: the time it would take to travel, as seen by somebody who stays outside the system, would be longer than the time it takes light to go between the two mouths of the wormhole. This is good, since we expect that causality should be respected. On the other hand, due to the large warping of the spacetime in the wormhole, the time the traveller experiences could be much shorter. So it seems similar to what would be experienced by an observer who accelerates to a very high velocity and then decelerates. Here, however, the force of gravity within the wormhole is doing the acceleration and deceleration. So, in theory, you can travel with no energy cost.

How does AdS/CFT relate to broader ideas in quantum information theory and holography?

Quantum information has been playing an important role in understanding how holography (or AdS/CFT) works. One important development is a formula, due to Ryu and Takayanagi, for the fine-grained entropy of gravitational systems, such as a black hole. It is well known that the area of the horizon gives the coarse-grained, or thermodynamic, entropy of a black hole. The fine-grained entropy, by contrast, is the actual entropy of the full quantum density matrix describing the system. Surprisingly, this entropy can also be computed in terms of the area of a surface. That surface is not the horizon, however; it is typically a surface of minimal area that lies in the interior.
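In its original form, the Ryu–Takayanagi prescription computes the entropy of a region A of the boundary field theory from the area of the minimal-area bulk surface γ_A that ends on the boundary of A and is homologous to A (units with c = k_B = 1):

$$
S_A = \frac{\operatorname{Area}(\gamma_A)}{4 G_N \hbar}
$$

Later refinements (quantum extremal surfaces) add the entropy of the quantum fields in the bulk and extremize rather than simply minimize; it is in this generalized form that the relevant surface can sit inside a black hole.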

If you could pick any experiment to be funded and built, what would it be?

Well, I would build a higher-energy collider, of, say, 100 TeV, to understand better the nature of the Higgs potential and look for hints of new physics. As for smaller-scale experiments, I am excited about the current prospects to manipulate quantum matter and create highly entangled states that would have some of the properties that black holes are supposed to have, such as being maximally chaotic and allowing the kind of traversable wormholes described earlier.

How close are we to a unified theory of nature’s interactions?

String theory gives us a framework that can describe all the known interactions. It does not give a unique prediction, and the accommodation of a small cosmological constant is possible thanks to the large number of configurations that the internal dimensions can acquire. This whole framework is based on Kaluza–Klein compactifications of 10D string theories. It is possible that a deeper understanding of quantum gravity for cosmological solutions will give rise to a probability measure on this large set of solutions that will allow us to make more concrete predictions.