The fourth biennial workshop on astroparticle physics in Germany took place at DESY, Zeuthen, in October 2005. It provided scientists in all branches of astroparticle physics, high-energy physics and astronomy with the opportunity to meet representatives of the German Ministry of Education and Research (BMBF) and discuss the status and future strategies of astroparticle physics.

Astroparticle physics in Germany is mainly pursued by the Helmholtz Association – at the Forschungszentrum Karlsruhe (FZK) and DESY – the Max Planck Society and several universities. It has rapidly achieved many successes in various topics, mainly through international collaborations. However, the next generation of experiments will surpass the funding available in Germany and it may be necessary to set priorities even though the advances in many areas are promising. As Johannes Blümer, chair of the Committee for Astroparticle Physics in Germany (KAT) – which is elected by the German astroparticle physics community – noted at the workshop: “Everything is possible, but not all at the same time”.

Cosmic ray success stories

The main success story in astroparticle physics concerns the observations of the highest energy photons by imaging air Cherenkov telescopes (IACTs). These telescopes register the Cherenkov light emitted by extensive air showers that are initiated by photons in the atmosphere. After a long and sometimes painful period of poking around in the dark, in 1989 the Crab nebula was discovered to be a source of photons of multi-tera-electron-volt energies – the first such astrophysical source. With the latest generation of IACTs – the Major Atmospheric Gamma Imaging Cherenkov (MAGIC) telescope on the island of La Palma and most notably the High Energy Stereoscopic System (HESS) telescope array in Namibia – a wealth of galactic and extragalactic sources has since shown up (figure 1). The German community has a major involvement in both HESS and MAGIC.

This research has opened up a new branch of observational astrophysics, as IACTs have detected mysterious galactic tera-electron-volt photon sources that have no counterpart at any other wavelength. Surely more surprises are to be expected.

In charged cosmic rays, the energy spectrum exhibits what is called a “knee” around 1-10 PeV and for years the solution to understanding the origin of cosmic rays in this energy region seemed to be just out of reach. A detailed multi-parameter analysis of the energy spectrum of groups of chemical elements by the Karlsruhe Shower Core and Array Detector (KASCADE) collaboration at FZK has resolved the knee into successively heavier elements. However, it has hit a wall due to the limited theoretical understanding of high-energy interactions in the atmosphere. Data from the Large Hadron Collider (LHC) at CERN may help to improve simulations of these interactions.

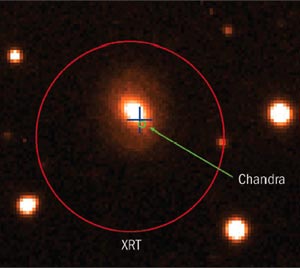

Registering cosmic rays at the highest energies, around 10²⁰ eV, the Pierre Auger Observatory (in which German universities and the FZK are major participants) is now moving quickly forward. “Hybrid” events, which were presented at the Zeuthen workshop (figure 2), made a strong impression. Although Auger is not fully operational yet, timescales are such that next-generation experiments need to be discussed now. These include, for example, the Extreme Universe Space Observatory, a space-borne experiment to observe fluorescence and Cherenkov light from huge air showers. It would provide a sensitive area about one order of magnitude larger than Auger and would be perfectly suited to events above the famous Greisen-Zatsepin-Kuzmin cut-off (if indeed there are any), as well as to neutrino astronomy beyond 5 × 10¹⁹ eV.

The measurement of the radio emission from air showers has witnessed its own breakthrough. At the KASCADE ground array, the LOPES collaboration records geosynchrotron radio emission from air showers (figure 3). A realistic modelling of the radio emission has been achieved, which in turn enables the derivation of air-shower parameters from the radio data. The radio technique will allow for potentially very large and cost-effective installations. In the near future, more details will be investigated in conjunction with the Pierre Auger Observatory. This workshop series had its share in this success, as the possibility of new radio measurements was presented at the first meeting in 1999. Financial support was quickly provided by the BMBF and LOPES came into being.

Following the proof of principle of cosmic-neutrino detection by the Antarctic Muon and Neutrino Detector Array at the South Pole and the array in Lake Baikal, the installation of the 1 km³ IceCube neutrino telescope is now under way at the South Pole, with major participation from DESY and several German universities. IceCube should reach a sensitivity sufficient to identify sources of cosmic neutrinos. Technology tests by the ANTARES collaboration for a similar neutrino telescope in the Mediterranean have been concluded successfully.

A direct measurement of neutrino mass is badly needed to set the absolute scale for the mass differences derived from neutrino flavour oscillations. The Karlsruhe Tritium Neutrino Experiment at FZK is likely to be the ultimate detector for a direct measurement of the neutrino mass from tritium decays. It aims for a mass sensitivity of 0.2 eV. The Germanium Detector Array, which is being pushed by German astroparticle physicists, is used to search for neutrinoless double beta decays and could reach a sensitivity of 0.1 eV for Majorana neutrinos.

Low-energy solar-neutrino spectroscopy is still required for a detailed understanding of the Sun and may prove the existence of matter effects in neutrino oscillations. The BOREXINO experiment, which has German participation, should start taking data in summer 2006. However, technology now allows us to aim for much larger future installations, such as the Low Energy Neutrino Astronomy project (with 50 kt of scintillator), up to 1 Mt water Cherenkov detectors or 100 kt liquid argon “bubble chambers”, as are being developed by the ICARUS collaboration. These new experiments would enable the measurement of “time resolved” neutrino spectroscopy in correlation with helioseismology. In addition, they could give access to relic and galactic supernova neutrinos and geoneutrinos, and could provide sensitive results on proton decay.

There are many convincing indirect arguments for the existence of dark matter in the universe. Theories predict weakly interacting massive particles (WIMPs) with masses above a few tens of giga-electron-volts as constituents of dark matter. Workshop participants heard of interesting evidence for galactic dark matter from archival data of the EGRET satellite. Meanwhile, three strategies are being followed in the hunt for WIMPs: they could be created at particle colliders (one of the prime targets for the LHC), while “natural” WIMPs are being searched for through their annihilation products, by satellites, IACTs and neutrino telescopes, or by elastic scattering processes in specialized detectors. A fourth line of attack is to try to detect axions, which may be created in non-thermal processes in the universe and hence provide cold dark-matter particles with masses in the region of 100 μeV.

There is already a good deal of indirect evidence for the existence of gravitational waves through the observation of energy losses in binary pulsar systems. However, the experimental systems of the Laser Interferometer Gravitational Wave Observatory (LIGO) in the US, the Virgo detector at Pisa in Italy and GEO600 near Hannover in Germany could directly detect gravitational waves, for example from the collision of two neutron stars. Unfortunately, the predicted event rates range from one in a few years to one in a few thousand years. The advanced LIGO instrument, to which the German Max Planck Society will contribute, will achieve a much higher sensitivity. It is scheduled to start data taking in 2013. The space mission LISA, a joint effort between ESA and NASA, will extend the observational window to much lower frequencies and may even make primordial gravitational waves accessible. The decisions in Europe and the US on LIGO and LISA show the high priority that is being given to this research field, although in Germany only the Max Planck Society is significantly involved so far.

A stimulating one-day multimessenger workshop after the meeting discussed methods to combine observations of photons from radio to tera-electron-volt energies with those of neutrinos, gravitational waves and charged cosmic rays. The aim would be to extend the classical multiwavelength approach of astronomy towards a true multimessenger strategy.

Organizational issues

The total funding of astroparticle physics in Germany for 2005 amounted to approximately €35 million, while the corresponding amount for Europe is about €130 million a year. This may be compared with the investments of roughly €30 million that will be necessary for a next-generation experiment for the direct identification of dark-matter constituents, of about €8 million for each telescope in a next-generation IACT system, or of €500 million for a 1 Mt water Cherenkov detector. It is evident that such installations will be realized only within international collaborations.
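To make the comparison concrete, the following minimal sketch expresses each quoted investment as a number of years of the quoted annual funding. It is illustrative only: it assumes, unrealistically, that an entire annual budget could be devoted to a single project, and it treats the 2005 figure for Germany as a typical yearly amount. The names `budget_years` and `cost_table` are hypothetical helpers introduced here, not part of any workshop material.

```python
# Figures quoted in the text, in millions of euros.
GERMANY_2005 = 35.0      # German funding for 2005 (assumed typical for a year)
EUROPE_PER_YEAR = 130.0  # European funding per year

PROJECT_COSTS = {
    "next-generation dark-matter experiment": 30.0,
    "one next-generation IACT telescope": 8.0,
    "1 Mt water Cherenkov detector": 500.0,
}


def budget_years(cost, annual_budget):
    """Return how many years of the given annual budget the cost represents."""
    return cost / annual_budget


def cost_table():
    """Return (project, years of German budget, years of European budget) rows."""
    return [
        (name, budget_years(cost, GERMANY_2005), budget_years(cost, EUROPE_PER_YEAR))
        for name, cost in PROJECT_COSTS.items()
    ]


if __name__ == "__main__":
    for name, years_de, years_eu in cost_table():
        print(f"{name}: {years_de:.1f} yr (Germany), {years_eu:.1f} yr (Europe)")
```

Under these assumptions, a 1 Mt water Cherenkov detector alone corresponds to more than a decade of the entire German budget, which is the point the comparison is making.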

An even greater challenge is to create the roadmaps necessary to guide the way into the future of astroparticle physics. Consequently, the scientific community is being asked to produce roadmaps on national, European and international levels. The most important European organizations in this respect are the Astroparticle Physics European Coordination (APPEC), of which the funding agencies of 10 European countries are currently members, and the European Strategy Forum on Research Infrastructures (ESFRI). The German KAT takes a central role in linking the roadmaps for the future of astroparticle physics in Europe and Germany.

Astroparticle physics has applied for EU support (€28 million in total) for four projects with important German participation:

- the Integrated Large Infrastructures for Astroparticle Science to concentrate on dark-matter searches, double beta decay and gravitational waves (granted);

- a High Energy Astroparticle Physics Network (not granted);

- KM3NeT to develop a deep-sea facility in the Mediterranean for neutrino astronomy (granted);

- the Astroparticle ERAnet to further improve coordination within Europe, giving a solid operational basis to APPEC (granted).

In Germany the Helmholtz Association has taken the initiative to improve networking between universities and research institutes in the country by funding three “virtual institutes”: VIKHOS (high-energy radiation from the cosmos), VIDMAN (dark matter and neutrino physics) and VIPAC (particle cosmology).

In conclusion, astroparticle physics is making good progress in Germany and elsewhere. While tera-electron-volt astronomy is firmly established, several other fields are now just reaching the sensitivities for making breakthroughs. Further progress is imaginable only in the context of international collaborations following well-accepted roadmaps. The required organizational structures are being put into place and the next decade will decide on the future of many areas of astroparticle physics.

Luckily, imagination, fascination and fantasy remain unbroken. Astroparticle physicists continue to dream of utilizing the Moon as an ultimate target for observing the interactions of the highest energy cosmic rays, or of listening to the feeble sound emitted in neutrino interactions in water and ice.