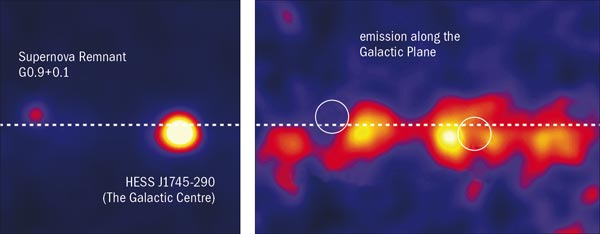

When the LHC starts up, heavy-ion physics will enter an era in which high transverse-momentum (pT) processes contribute significantly to the nucleus–nucleus cross-section. The LHC will produce very hard, strongly interacting probes – the attenuation of which can be used to study the properties of the quark–gluon plasma (QGP) – at rates high enough for detailed measurements. For the first time, these high rates are expected at energies where jets can be fully reconstructed above the large background from the underlying nucleus–nucleus event.

Image credit: Jan Rak.

To prepare for the new high pT and jet analysis challenges, the physics department at the University of Jyväskylä, Finland, organized the five-day Workshop on High pT Heavy-Ion Physics at the LHC. More than 60 participants attended the workshop, ranging from senior experts in heavy-ion physics to doctoral students. It brought together physicists from operating facilities – mainly RHIC at the Brookhaven National Laboratory (BNL) – as well as from future LHC experiments (ALICE, ATLAS and CMS), and included valuable contributions from theorists. Jyväskylä in early spring, coupled with reindeer-meat dinners and animated student lectures in the evening, created a superb atmosphere for many discussions of physics, even outside of the official programme.

Mike Tannenbaum of BNL gave an opening colloquium that looked back to the 1970s, listing old results that raised the same questions that are the focus of today's discussions. Many current questions in high-pT physics can be traced back to proton–proton (pp) collisions at CERN's ISR in the 1970s, followed in the early 1980s by proton–antiproton collisions at the SPS. This was when jet physics was born and the first methods of jet analysis were developed. It was reassuring to learn that many CERN results remain valid and that recent thinking is built on those early insights. On the other hand, many ideas remain immature: only the high-luminosity experiments at RHIC and the LHC are, or will be, able to investigate certain phenomena and measure their effects more precisely. These pp data are therefore important in their own right, not merely as a baseline for understanding results in heavy-ion collisions.

Striking gold at RHIC

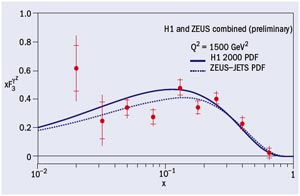

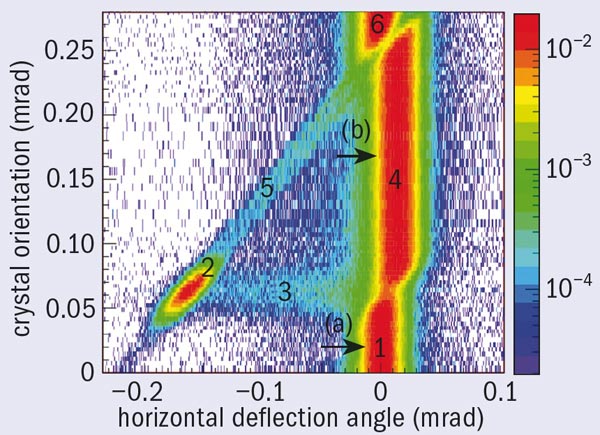

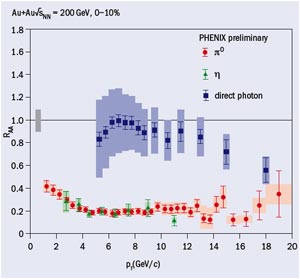

Several presentations at the workshop reviewed RHIC results on single-particle spectra and two-particle correlations at high pT. Striking effects have been observed in central gold–gold collisions, most prominently the suppression of high-pT particles and of back-to-back correlations. These results show that jet structure is strongly modified in dense matter, consistent with perturbative QCD calculations of partonic energy loss via induced gluon radiation.
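The suppression of high-pT particles is conventionally quantified by the nuclear modification factor R_AA, which compares the yield measured in nucleus–nucleus collisions with the pp yield scaled by the average number of binary nucleon–nucleon collisions, ⟨N_coll⟩. A standard form of the definition is given here for orientation; the symbols are not taken from the talks themselves:

$$
R_{AA}(p_T) = \frac{1}{\langle N_{\mathrm{coll}} \rangle}\,
\frac{\mathrm{d}^2 N_{AA}/\mathrm{d}p_T\,\mathrm{d}\eta}{\mathrm{d}^2 N_{pp}/\mathrm{d}p_T\,\mathrm{d}\eta}
$$

A value R_AA = 1 means that, at a given pT, the nucleus–nucleus collision behaves like an incoherent superposition of pp collisions; values well below 1, as seen at high pT in central gold–gold collisions at RHIC, signal suppression.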

Data on heavy flavours from the RHIC experiments have also raised puzzles. The measured suppression of heavy-flavour pT spectra, which is close to that of light flavours, cannot be explained by radiative energy loss alone and requires a contribution from elastic scattering. Further issues can be addressed by analysing dijet topology or by using two- or multi-particle correlation techniques. Several experimental and theoretical presentations examined the possibility of using multi-particle and photon–hadron correlations to study partonic pT distributions, fragmentation functions, jet shapes and other parton properties that are sensitive to the details of parton interactions with excited nuclear matter.

Another series of talks investigated the features of parton coalescence, which is supported by a large body of experimental data on particle spectra and azimuthally anisotropic flow. On the other hand, jet-oriented analyses of other data (for example, Ω⁻–charged-hadron correlations) do not show the behaviour expected from quark coalescence. Further work is therefore needed to understand this puzzling situation before the new experiments begin at the LHC.

Towards the LHC

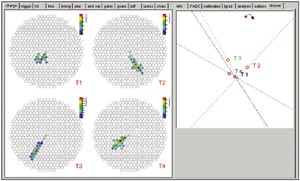

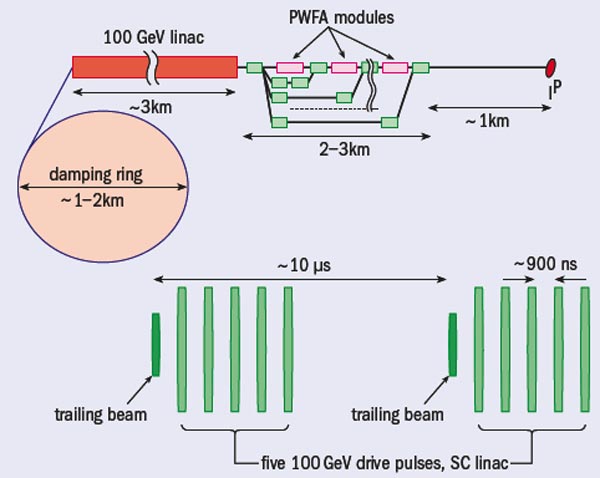

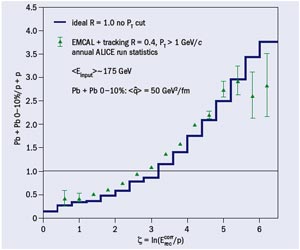

Among the four large LHC experiments, ALICE is the one optimized for heavy-ion physics, but the CMS and ATLAS collaborations have also established heavy-ion programmes, which will certainly strengthen the field. The workshop heard about the capabilities of the three experiments for jet reconstruction and for analysis of jet structure. The large background from the underlying event is a challenge for all of them and requires the development of new techniques for background subtraction. The strength of ATLAS and CMS is their full calorimetric coverage and hence large measured jet rates, which will allow them to measure jets in central lead–lead collisions up to 350 GeV and to perform Z⁰–jet correlation studies. ALICE will measure jets by combining its central tracking system with an electromagnetic calorimeter; the detector's smaller acceptance will limit the energy range to about 200 GeV. The strength of ALICE lies in its low-pT and particle-identification capabilities, which allow it to measure fragmentation functions down to small momentum fractions and to determine the particle composition of jets (figure 2).
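To give a feel for what background subtraction involves, here is a minimal sketch of one simple, widely used idea: estimate the underlying-event pT density ρ as the median of pT per unit area over many patches of the event, then subtract ρ times the jet area from each jet's pT. This is an illustrative assumption, not necessarily the method the ALICE, ATLAS or CMS groups presented at the workshop, and the function names and numbers are hypothetical.

```python
import statistics


def median_background_density(patches):
    """Estimate the underlying-event density rho (GeV per unit eta-phi area).

    `patches` is a hypothetical list of (pt, area) pairs, e.g. jets or
    eta-phi cells covering the event. The median of pt/area is used
    because it is robust against the few patches that contain a hard jet.
    """
    densities = [pt / area for pt, area in patches if area > 0]
    return statistics.median(densities)


def subtract_background(jet_pt, jet_area, rho):
    """Remove the estimated background rho * area from a jet's pT.

    The result is clipped at zero, since a negative corrected pT
    has no physical meaning.
    """
    return max(jet_pt - rho * jet_area, 0.0)
```

With three background-like patches, (10, 0.5), (12, 0.5) and (11, 0.5), plus one patch containing a jet, (60, 0.5), the median ignores the outlier and gives ρ = 23 GeV per unit area, so a 100 GeV jet of area 0.5 is corrected to 88.5 GeV. The median is preferred over the mean here precisely because the hard jets themselves would otherwise bias the background estimate upwards.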

A consistent theoretical description of jet measurements in heavy-ion collisions can only be obtained through detailed Monte Carlo studies of jet production and in-medium modification. Such studies are also needed to optimize the data analysis and to discriminate between different models. Several new event generators adapted to the challenges of LHC physics (PyQuench, HydJet, HIJING-2) were also discussed at the meeting.

The workshop also examined the recent interest in understanding strongly interacting particles using conjectures from string theory and higher-dimensional physics. Stan Brodsky of SLAC summarized his understanding of the many QCD effects that appear in kinematic regions not accessible to perturbative QCD, where models based on the anti-de Sitter/conformal field theory (AdS/CFT) correspondence could come into play. In this duality picture, due to Juan Maldacena, the strongly interacting quark and gluon fields produced in heavy-ion collisions can be treated as a projection onto a black-hole horizon in a higher-dimensional space. The equations of motion on the black-hole horizon could become analytically solvable, in contrast to the computationally demanding numerical (lattice) approach to non-perturbative QCD. The new experiments at LHC energies may shed more light on the role of extra dimensions in curved space and could initiate a revolution in the description of strongly interacting matter.

The next workshop on this topic will be held in Budapest in March 2008 and will offer the opportunity to present the latest theoretical results before the LHC starts running with pp collisions at 14 TeV.