The Sun, a typical middle-aged star, is the most important astronomical body for life on Earth, and since ancient times its phenomena have had a key role in revealing new physics. Answering the question of why the Sun moves across the sky led to the heliocentric planetary model, replacing the ancient geocentric system and foreshadowing the laws of gravity. In 1783 a sun-like star led the Revd John Michell to the idea of the black hole, and in 1919 the bending of starlight by the Sun was a triumphant demonstration of general relativity. The Sun even provides a laboratory for subatomic physics. The understanding that it shines by nuclear fusion grew out of the nuclear physics of the 1930s; more recently the solution to the solar neutrino “deficit” problem has implied new physics.

Image credit: Rainer Arlt, Astrophysikalisches Institut Potsdam.

This progress in science, triggered by the seemingly pedestrian Sun, seems set to continue, as a variety of solar phenomena still defy theoretical understanding. It may be that one answer lies in astroparticle physics and the curious hypothetical particle known as the axion. Neutral, light, and very weakly interacting, this particle was proposed more than 25 years ago to explain the absence of charge-parity (CP) symmetry violation in the strong interaction.

So what are the problems with the Sun? These lie, perhaps surprisingly, with the more visible, outermost layers, which have been observed for hundreds, if not thousands, of years.

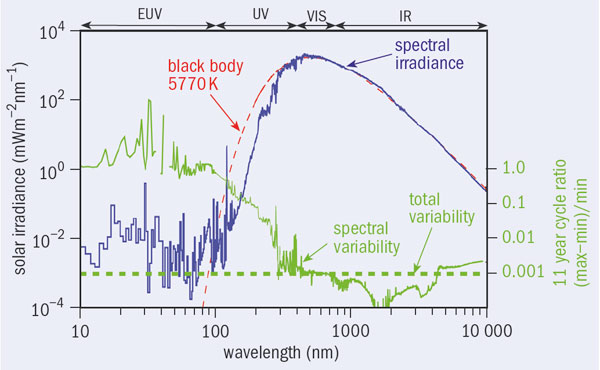

Image credit: Judith Lean/NRL Washington.

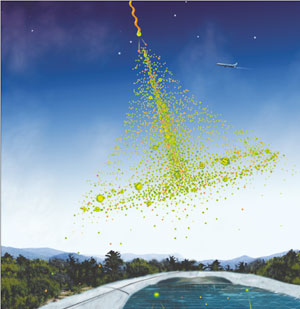

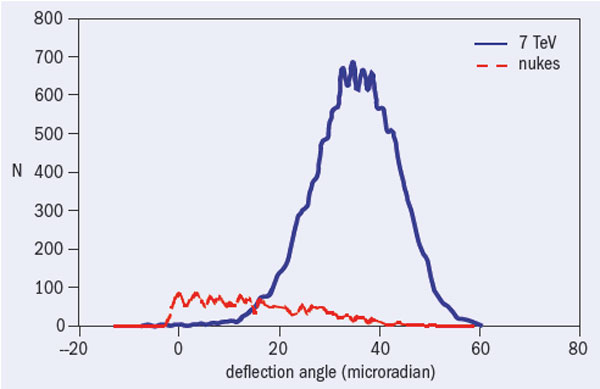

First, why is the corona – the Sun’s atmosphere with a density of only a few nanograms per cubic metre – so hot, with a temperature of millions of degrees? This question has challenged astronomers since Walter Grotrian, of the Astrophysikalisches Observatorium in Potsdam, discovered in the 1930s that the corona is this hot. Within a few hundred kilometres, the temperature rises to about 500 times that of the underlying chromosphere, instead of continuing to fall towards the temperature of empty space (2.7 K). While the flux of extreme ultraviolet photons and X-rays from the higher layers is some five orders of magnitude less than the flux from the photosphere (the visible surface), it is nevertheless surprisingly high and inconsistent with the spectrum from a black body with the temperature of the photosphere (figure 1). Thus, some unconventional physics must be at work, since heat cannot flow spontaneously from cooler to hotter places. In short, everything above the photosphere should not be there at all.
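The mismatch with a photospheric black body can be made concrete with a rough estimate. The sketch below evaluates the shape of the Planck spectrum at a photon energy of 1 keV for two temperatures. The photospheric temperature of 5772 K and the coronal temperature of 1.5 million K are assumed illustrative values, not figures from this article, and the function name is hypothetical. The result compares spectral shapes per unit emitting area only; it says nothing about the total flux of the tenuous corona.

```python
import math

K_B_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def log10_planck_shape(energy_ev, temperature_k):
    """log10 of E^3 / (exp(E/kT) - 1), the Planck spectral shape in arbitrary units.

    Works in logarithms so that the enormous exponent for cool sources
    does not overflow a float.
    """
    x = energy_ev / (K_B_EV_PER_K * temperature_k)
    # For large x, ln(exp(x) - 1) is equal to x to machine precision.
    ln_denominator = x if x > 50 else math.log(math.expm1(x))
    return (3 * math.log(energy_ev) - ln_denominator) / math.log(10)


T_PHOTOSPHERE_K = 5772.0  # assumed effective temperature of the photosphere
T_CORONA_K = 1.5e6        # assumed representative coronal temperature

photosphere = log10_planck_shape(1000.0, T_PHOTOSPHERE_K)
corona = log10_planck_shape(1000.0, T_CORONA_K)
print(f"log10 shape at 1 keV, photosphere: {photosphere:.0f}")
print(f"log10 shape at 1 keV, corona:      {corona:.1f}")
```

Under these assumptions a black body at photospheric temperature emits essentially nothing at 1 keV, many hundreds of orders of magnitude below a source at coronal temperature, which is why any measurable X-ray flux points to a much hotter emitter.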

Another question is how the corona continuously accelerates the solar wind of some million tonnes of gas per second to speeds as high as 800 km/s. The same puzzle holds for the transient but dramatic coronal mass ejections (CMEs). How and where is the required energy stored, and how are the ejections triggered? This question is probably related to the mystery of coronal heating. And what is it that triggers solar flares, which heat the solar atmosphere locally up to about 10 to 30 million degrees, similar to the high temperature of the core, some 700,000 km beneath? These unpredictable events appear to be like violent “explosions” occurring near sunspots in the lower corona. This suggests magnetic energy as their main energy source, but how is the energy stored and how is it released so rapidly and efficiently within seconds? Even though many details are known, new observations call into question the 40-year-old standard model for solar flares, which 150 years after their discovery still remain a major enigma.

Image credit: Markus Gann/Dreamstime.com.

On the Sun’s surface, what is it that causes the 11-year solar cycle of sunspots and solar activity? This seems to be the biggest of all solar mysteries, since it involves the oscillation of the huge “magnets” of a few kilogauss on the face of the Sun, ranging from 300 to 100,000 km in size. The origin of sunspots has been one of the great puzzles of astrophysics since Galileo Galilei first observed them in the early 1600s. Their rhythmic comings and goings, first measured by the apothecary Samuel Heinrich Schwabe in 1826, could be the key to understanding the unpredictable Sun, since everything in the solar atmosphere varies in step with this magnetic cycle.

Beneath the Sun’s surface, the contradiction between solar spectroscopy and the refined solar interior models provided by helioseismology has revived the question about the heavy-element composition of the Sun, with new abundances some 25 to 35% lower than before. Abundances vary from place to place and from time to time in the Sun, and are enhanced near flares, showing an intriguing dependence on the square of the magnetic intensity in these regions. The so-called “solar oxygen crisis” or “solar model problem” is thus pointing at some non-standard physical process or processes that occur only in the solar atmosphere, and with some built-in magnetic sensor.

These are just some of the most striking solar mysteries, each crying out for an explanation. So can astroparticle physics help? The answer could be “yes”, using a scenario in which axions, or particles like axions, are created and converted to photons in regions of high magnetic fields or by their spontaneous decay.

The expectation from particle physics is that axions should couple to electromagnetic fields, just as neutral pions do in the Primakoff effect known since 1951, which regards the production of pions by high-energy photons as the reverse of the decay into two photons. Interestingly, axions could even couple coherently to macroscopic magnetic fields, giving rise to axion–photon oscillation, as the axions produce photons and vice versa. The process is further enhanced in a suitably dense plasma, which can increase the coherence length. This means that the huge solar magnetic fields could provide regions for efficient axion–photon mutation, leading to the sudden appearance of photons from axions streaming out from the Sun’s interior. The photosphere and solar atmosphere near sunspots are the most likely magnetic regions for this process to become “visible”, as the material above is transparent to emerging photons.

According to this scenario, the Sun should be emitting axions, or axion-like particles, with energies reflecting the temperature of the source. Thus one or more extended sources of new low-energy particles (below around 1 keV), and the ubiquitous solar magnetic fields of strengths varying from around 0.5 T, as measured at the surface, up to 100 T or much more in the interior, might together give rise to the apparently enigmatic behaviour of a star like the Sun.
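As a rough guide to what "energies reflecting the temperature of the source" means, the characteristic thermal energy is of order kT. The sketch below converts a few solar temperatures to keV. The flare range of 10 to 30 million degrees is taken from the text above; the coronal value of 1 to 2 million K and the function name are assumptions made for illustration.

```python
K_B_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def thermal_energy_kev(temperature_k):
    """Characteristic thermal energy kT, in keV, for a source at the given temperature."""
    return K_B_EV_PER_K * temperature_k / 1000.0


sources_k = {
    "corona, 1 million K (assumed)": 1.0e6,
    "corona, 2 million K (assumed)": 2.0e6,
    "flare, 10 million K": 1.0e7,
    "flare, 30 million K": 3.0e7,
}

for label, temperature in sources_k.items():
    print(f"{label}: kT = {thermal_energy_kev(temperature):.3f} keV")
```

On this estimate, sources at coronal temperatures correspond to energies of around a tenth of a keV, and only the hottest flares reach the keV range.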

Conventional solar axion models, inspired by QCD, have one small source of particles in the solar core, with an energy spectrum that peaks at 4 to 5 keV. They therefore exclude the low energies where the solar mysteries predominantly occur. This immediately suggests an extended axion “horizon”. Experiments to detect solar axions – axion helioscopes such as the CERN Solar Axion Telescope (CAST) – should widen their dynamic range towards lower energies, in order to enter this new territory.

The revised solar axion scenario must also accommodate two components of photon emission, namely, a continuous inward emission together, occasionally, with an outward radiation pressure. Massive and light axion-like particles, both of which have been proposed, can provide these thermodynamically unexpected inward and outward photons respectively. They offer an exotic but still simple solution, given the Sun’s complexity.

The emerging picture is that the transition region (TR) between the chromosphere and the corona (which is only about 100 km thick and only some 2000 km above the solar surface) is the manifestation of a space and time dependent balance between the two photon emissions. However, the almost equally probable disappearance of photons into axion-like particles in a magnetic environment must also be taken into account in understanding the solar puzzles. The TR could be the most spectacular place in the Sun, since it is where the mysterious temperature inversion appears, while flares, CMEs and other violent phenomena originate near the TR.

Astrophysicists generally consider the ubiquitous solar magnetism to be the key to understanding the Sun. The magnetic field appears to play a crucial role in heating up the corona, but the process by which it is converted into heat and other forms of energy remains an unsolved problem. In the new scenario, the generally accepted properties of the radiative decay of particles like axions and their coupling to magnetic fields are the device to resolve the problem – in effect, a real “από μηχανής θεός” (the deus ex machina of Greek tragedy). The magnetic field is no longer the energy source, but is just the catalyst for the axions to become photons, and vice versa.

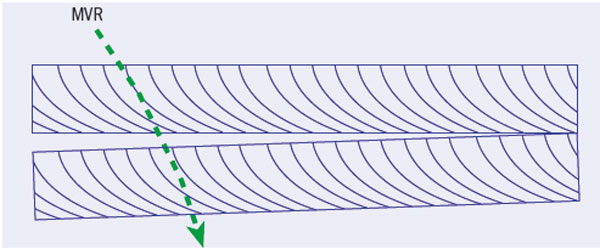

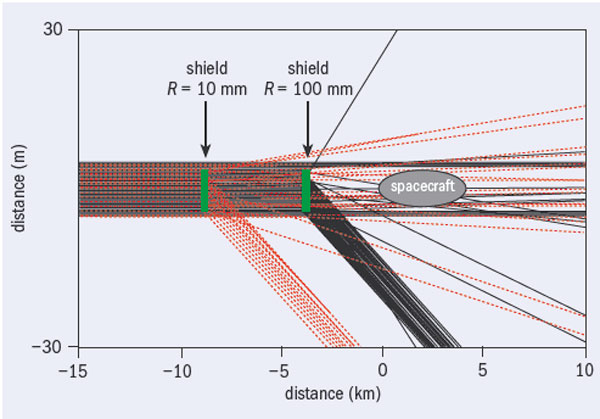

The precise mechanism for enhancing axion–photon mutation in the Sun that this picture requires remains elusive and challenging. One aim is to reproduce it in axion experiments. CAST, for example, seeks to detect photons created by the conversion of solar axions in the 9 T field of a prototype superconducting LHC dipole. However, the process depends on the unknown mass of the axion. Every day the CAST experiment changes the density of the gas inside the two tubes in the magnet in an attempt to match the velocity of the solar axion with that of the emerging photon propagating in the refractive gas.
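The role of the gas can be illustrated with the standard expression for coherent axion-to-photon conversion in a transverse magnetic field, P = (gBL/2)² [sin(qL/2)/(qL/2)]², where q = |m_a² − m_γ²|/(2E) is the momentum mismatch and m_γ is the effective photon mass in the gas. The sketch below is a minimal estimate, not the experiment's analysis. Only the 9 T field comes from the text; the magnet length of 9.26 m, the coupling of 10⁻¹⁰ GeV⁻¹, the axion energy of 4.2 keV and the trial masses are assumed values, and photon absorption in the gas is neglected.

```python
import math

TESLA_TO_EV2 = 195.35      # 1 T in natural units (eV^2, hbar = c = 1)
METRE_TO_INV_EV = 5.0677e6  # 1 m in natural units (eV^-1)


def conversion_probability(g_per_gev, b_tesla, length_m,
                           m_axion_ev, m_photon_ev, energy_ev):
    """Axion-to-photon conversion probability in a uniform transverse field.

    m_photon_ev is the effective photon mass in the buffer gas (0 for vacuum).
    Absorption of the photon in the gas is neglected in this sketch.
    """
    g = g_per_gev * 1e-9                 # GeV^-1 -> eV^-1
    b = b_tesla * TESLA_TO_EV2           # field in eV^2
    length = length_m * METRE_TO_INV_EV  # length in eV^-1
    q = abs(m_axion_ev**2 - m_photon_ev**2) / (2.0 * energy_ev)
    phase = q * length / 2.0
    coherence = 1.0 if phase == 0.0 else (math.sin(phase) / phase) ** 2
    return (g * b * length / 2.0) ** 2 * coherence


# Assumed illustrative parameters (only the 9 T field is from the article).
G, B, L, E = 1e-10, 9.0, 9.26, 4200.0

p_massless = conversion_probability(G, B, L, 0.0, 0.0, E)
p_mismatch = conversion_probability(G, B, L, 0.1, 0.0, E)  # 0.1 eV axion, vacuum
p_matched = conversion_probability(G, B, L, 0.1, 0.1, E)   # gas tuned to 0.1 eV

print(f"massless axion, vacuum: {p_massless:.2e}")
print(f"0.1 eV axion, vacuum:   {p_mismatch:.2e}")
print(f"0.1 eV axion, matched:  {p_matched:.2e}")
```

With these numbers, a 0.1 eV axion in vacuum loses coherence along the magnet and its conversion probability drops by orders of magnitude, while choosing a gas density whose effective photon mass equals the axion mass restores the full coherent value. This is the matching that the daily change of gas density scans for.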

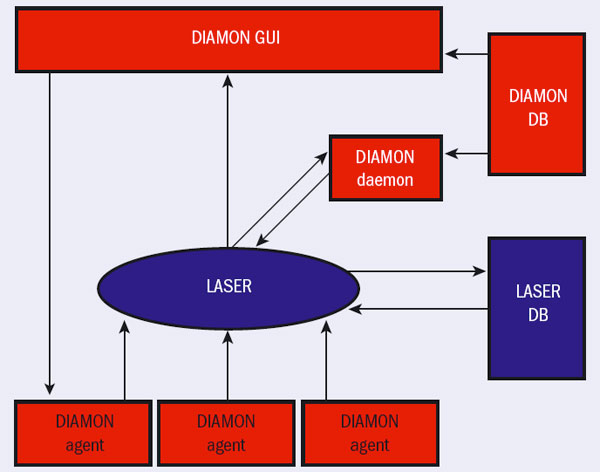

Image credit: V Anastassopoulos and M Tsagri/University of Patras.

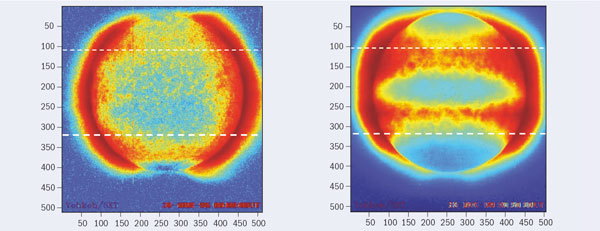

It is reasonable to assume that fine tuning of this kind in relation to the axion mass might also occur in the restless magnetic Sun. If the energy corresponding to the plasma frequency equals the axion rest mass, the axion-to-photon coherent interaction will increase steeply, with the square of the product of the coherence length and the transverse magnetic field strength. Since solar plasma densities and/or magnetic fields change continuously, such a “resonance crossing” could result in an otherwise unexpected photon excess or deficit, manifesting itself in a variety of ways, for example, locally as a hot or cold plasma. Only a quantum electrodynamics that incorporates an axion-like field can accommodate such transient brightening as well as dimming (among many other unexpected observations).
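The resonance condition can be turned into a density scale. In a plasma the photon acquires an effective mass equal to ħω_p, with ω_p = √(n_e e²/(ε₀ m_e)), so each axion mass corresponds to one resonant electron density. The sketch below computes both directions of that relation; the function names and the trial axion masses are hypothetical choices for illustration, and no claim is made here about where in the Sun such densities occur.

```python
import math

E_CHARGE_C = 1.602176634e-19    # elementary charge, C
EPSILON_0 = 8.8541878128e-12    # vacuum permittivity, F/m
M_ELECTRON_KG = 9.1093837015e-31  # electron mass, kg
HBAR_EV_S = 6.582119569e-16     # reduced Planck constant, eV s


def plasma_energy_ev(n_e_per_m3):
    """Energy hbar * omega_p (eV) for a plasma with electron density n_e (m^-3)."""
    omega_p = math.sqrt(n_e_per_m3 * E_CHARGE_C**2 / (EPSILON_0 * M_ELECTRON_KG))
    return HBAR_EV_S * omega_p


def resonant_density_per_m3(m_axion_ev):
    """Electron density (m^-3) at which hbar * omega_p equals the axion rest mass."""
    omega = m_axion_ev / HBAR_EV_S
    return omega**2 * EPSILON_0 * M_ELECTRON_KG / E_CHARGE_C**2


for m_axion_ev in (1e-3, 1e-2, 1e-1):  # hypothetical trial masses
    n_e = resonant_density_per_m3(m_axion_ev)
    print(f"m_a = {m_axion_ev:g} eV -> resonant n_e = {n_e:.2e} m^-3")
```

Because the resonant density scales as the square of the axion mass, a plasma whose density changes in space or time sweeps through the resonance for a whole range of masses, which is the "resonance crossing" described above.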

These ideas also have implications for the better tuning not only of CAST, but also of orbiting telescopes such as the Japanese satellite Hinode (formerly Solar B), NASA’s Reuven Ramaty High Energy Solar Spectroscopic Imager and the NASA–ESA Solar and Heliospheric Observatory, which have recently been turned into promising axion helioscopes, following suggestions by CERN’s Luigi Di Lella among others. The joint Japan–US–UK mission Yohkoh, although it ceased operation in 2001, has also joined the axion hunt by making its data freely available.

The revised axion scenario therefore seems to fit as an explanation for most (if not all) solar mysteries. Such effects can provide signatures for new physics as direct and as significant as those from laboratory experiments, even though they are generally considered as indirect; the history of solar neutrinos is the best example of this kind.

If these ideas hold, along with others on millicharged particles, paraphotons or any other weakly interacting sub-electron-volt particles, then axion-like exotica will mean that the Sun’s visible surface – and probably not its core – holds the key to its secrets. As in neutrino physics, the multifaceted Sun, from its deep interior to the outer corona and the solar wind, could be the best laboratory for axion physics and the like. The Sun, the most powerful accelerator in the solar system, whose working principle is not yet understood, has not been as active as it is now for some 11,000 years. Is this an opportunity not to be missed?