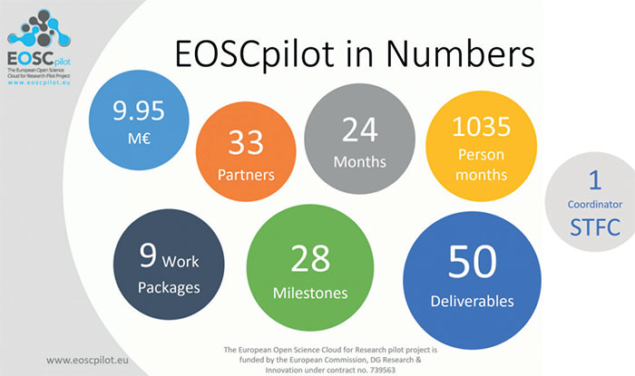

A European scheme to make publicly funded scientific data openly available has entered its first phase of development, with CERN one of several organisations poised to test the new technology. Launched in January and led by the UK’s Science and Technology Facilities Council, a €10 million two-year pilot project funded by the European Commission marks the first step towards the ambitious European Open Science Cloud (EOSC) project. With more than 30 organisations involved, the aim of the EOSC is to establish a Europe-wide data environment to allow scientists across the continent to exchange and analyse data. As well as providing the basis for better scientific research and making more efficient use of data resources, the open-data ethos promises to address societal challenges such as public-health or environmental emergencies, where easy access to reliable research data may improve response times.

The pilot phase of the EOSC aims to establish a governance framework and build the trust and skills required. Specifically, the pilot will encourage selected communities to develop demonstrators to showcase EOSC’s potential across various research areas including life sciences, energy, climate science, materials science and the humanities. Given the intense computing requirements of high-energy physics, CERN is playing an important role in the pilot project.

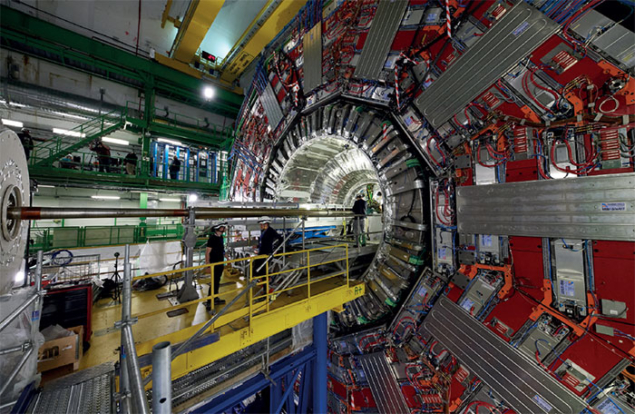

The CERN demonstrator aims to show that the basic requirements for the capture and long-term preservation of particle-physics data, documentation, software and the environment in which it runs can be satisfied by the EOSC pilot. “The purpose of CERN’s involvement in the pilot is not to demonstrate that the EOSC can handle the complex and demanding requirements of LHC data-taking, reconstruction, distribution, re-processing and analysis,” explains Jamie Shiers of CERN’s IT department. “The motivation for long-term data preservation is for reuse and sharing.”

CERN proposed a model for a European science cloud some years ago, propelled by the growing IT needs of the LHC and by experience gained from deploying scientific workloads on commercial cloud services, explains Bob Jones of CERN’s IT department. In 2015 this model was expanded and endorsed by members of EIROforum. “The rapid expansion in the quantities of open data being produced by science is stretching the underlying IT services,” says Jones. “The Helix Nebula Science Cloud, led by CERN, is already working with leading commercial cloud service providers to support this growing need for a wide range of scientific use cases.”

The challenging EOSC project, which raises issues such as service integration, intellectual property, legal responsibility and service quality, complements the work of the Research Data Alliance and builds on the European Strategy Forum on Research Infrastructures (ESFRI) roadmap. “Our goal is to make science more efficient and productive and let millions of researchers share and analyse research data in a trusted environment across technologies, disciplines and borders,” says Carlos Moedas, EC commissioner for research, science and innovation.