By Steven Weinberg

The Belknap Press of Harvard University Press

When Nobel laureates offer their point of view, people are generally curious to listen. Self-described rationalist, realist, reductionist and devoutly secular, Steven Weinberg has published a new book reflecting on current affairs in science and beyond. In Third Thoughts, he addresses themes that are of interest to both laypeople and researchers, such as the public funding of science.

Weinberg shared the Nobel Prize in Physics in 1979 for unifying the weak interaction and electromagnetism into the electroweak theory, the core of the Standard Model, and has made many other significant contributions to physics. At the same time, Weinberg has been and remains a keen science populariser. Probably his most famous work is the popular-science book The First Three Minutes, where he recounts the evolution of the universe immediately following the Big Bang.

Third Thoughts is his third collection of essays for non-specialist readers, following Lake Views (2009) and Facing Up (2001). In it are 25 essays divided into four themes: science history, physics and cosmology, public matters, and personal matters. Some are the texts of speeches, some were published previously in The New York Review of Books, and others are released for the first time.

The essays span subjects from quantum mechanics to climate change, from broken symmetry to cemeteries in Texas, and are pleasantly interspersed with his personal life stories. As in his previous collections, Weinberg deals with topics that are dear to him: the history of science, science spending, and the big questions about the future of science and humanity.

The author defines himself as an enthusiastic amateur in the history of science, albeit a “Whig interpreter” (meaning that he evaluates past scientific discoveries by comparing them to the current advancements – a method that irks some historians). Beyond that, his taste for controversy encourages him to cogitate over Einstein’s lapses, Hawking’s views, the weaknesses of quantum mechanics and the US government’s financing choices, among others.

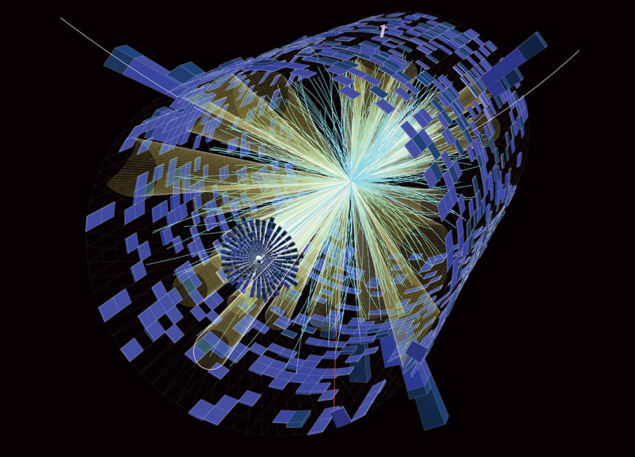

Readers who are interested in US politics will find the section “Public matters” very thought-provoking. In particular, the essay “The crisis of big science” is based on a talk he gave at the World Science Festival in 2011, which was later published in The New York Review of Books. He explains the need for big scientific projects, and describes how both cosmology and particle physics are struggling for governmental support. Though still disappointed by the cancellation of the Superconducting Super Collider (SSC) in the early 1990s, he is excited by the new endeavours at CERN. He reiterates his frank opinions against manned space flight, and emphasises how some scientific obstacles are intertwined with their historical context. In this way, Weinberg sets the cancellation of the SSC in a wider, troubled context, where education, healthcare, transportation and law enforcement are also under threat.

The author condenses the essence of what physicists have learnt so far about the laws of nature and why science is important. This is a book about asking the right questions when the time is ripe to look for the answers. He explains that the question “What is the world made of?” needed to wait for advances in chemistry at the end of the 18th century, and “What is the structure of the electron?” needed to wait for quantum mechanics, while “What is an elementary particle?” is still waiting for an answer.

The essays vary in difficulty, and some concepts and views are repeated in several essays, so each of them can be read independently. While most are digestible for readers without any background knowledge in particle physics, a general understanding of the Standard Model would help with grasping the content of some of the paragraphs. That said, the general reader can still follow the big picture and the logically argued thoughts.

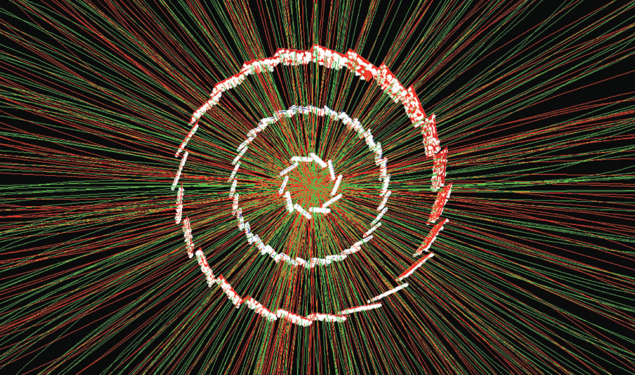

Several essays talk about CERN. More specifically, the article “The Higgs, and beyond” was written in 2011, before the announcement of the Higgs boson discovery, and briefly presents the possibility of technicolour forces. The following essay, “Why the Higgs?”, was commissioned just after the announcement in 2012 to explain “what all the fuss is about”.

One of the most curious essays to explore is number 24. In Weinberg’s words: “Essay 24 has not been published until now because everyone who read it disagreed with it, but I am fond of it so bring it out here.” There, he draws parallels between his job as a theoretical physicist and that of creative artists.

Not all scientists are able to write in such an unconstrained and accessible way. Despair, sorrow, frustration, doubt, uneasiness and wishes all emerge page after page, offering the reader the privilege of coming closer to one of the sharpest scientific minds of our era.