Vacuum technology for particle accelerators has been pioneered by CERN since its early days. The Intersecting Storage Rings (ISR) brought the most important breakthroughs. Half a century ago, this technological marvel, the world’s first hadron collider, required proton beams of unprecedented intensity and extremely low pressures in the interaction areas (below 10⁻¹¹ mbar). Addressing the former challenge led to innovative surface treatments such as glow-discharge cleaning, while the low-pressure requirement drove the development of materials and their treatments. It also led to novel high-performance cryogenic pumps and vacuum gauges that are still in use today, and CERN’s record for the lowest pressure ever achieved at room temperature (2 × 10⁻¹⁴ mbar) still stands.

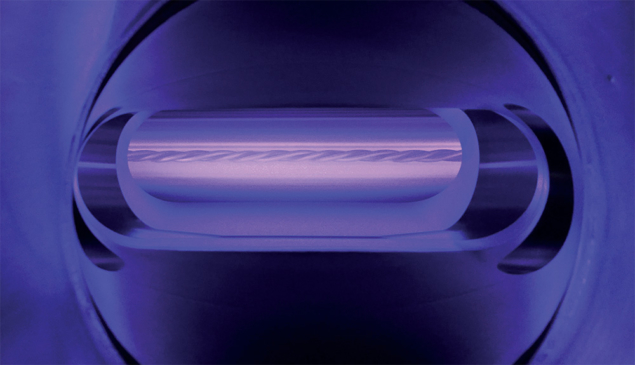

The Large Electron–Positron (LEP) collider opened a new chapter in CERN’s vacuum story. Even though LEP’s requirements on residual gas density and beam current were less demanding than those of the ISR, its exceptional length and intense synchrotron-light power called for unconventional solutions at reasonable cost. Responding to this challenge, the LEP vacuum team developed extruded aluminium vacuum chambers and introduced, for the first time, linear pumping by non-evaporable getter (NEG) strips. In parallel, LEP project leader Emilio Picasso launched another fruitful development that led to the production of the first superconducting radio-frequency (RF) cavities based on niobium thin-film coating on copper substrates. It was a great success, and the present accelerating RF cavities of the LHC and HIE-ISOLDE are essentially based on the expertise acquired for LEP.

The coexistence at CERN of both NEG and thin-film expertise was the seed for another breakthrough in vacuum technology: NEG thin-film coatings, driven by the requirements of the LHC and its project leader Lyn Evans. The NEG material, a micron-thick coating made of a mixture of titanium, zirconium and vanadium, is deposited onto the inner wall of vacuum chambers and, after activation by heating in the accelerator, provides pumping for most of the gas species present in accelerators. The Low Energy Ion Ring was the first CERN accelerator to implement extensive NEG coating in around 2006. For the LHC, one of the technology’s key benefits is its low secondary-electron emission, which suppresses the growth of electron clouds in the room-temperature part of the machine.

New concepts

Synchrotron-radiation-induced desorption and electron clouds at temperatures of around 4.3 K had to be studied in depth for the LHC, leading CERN’s vacuum experts to develop new concepts for vacuum systems at cryogenic temperatures, in particular the beam screen. The more intense beams of the high-luminosity LHC (HL-LHC) upgrade, presently under way, will amplify the effect of electron clouds on both the beam stability and the thermal load on the cryogenic systems. Since NEG coatings are limited to room-temperature beam pipes, an alternative strategy had to be found for the parts of the accelerators that cannot be heated, such as those in the HL-LHC’s inner triplet magnets.

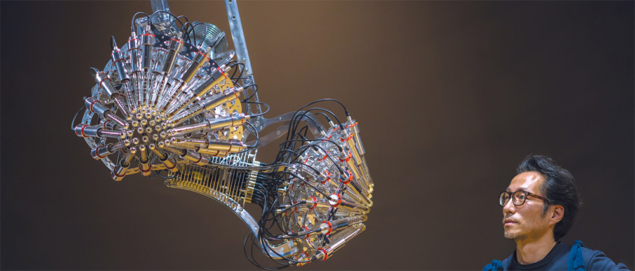

Following an idea that originated at CERN in 2006, thin-film coatings made from carbon offer a solution, and the material has already been deposited on tens of SPS vacuum chambers within the LHC Injectors Upgrade project. Another idea to fight electron clouds for the HL-LHC involves laser-treating surfaces to make them rougher, so that secondary electrons are intercepted by the surrounding surfaces. In collaboration with UK researchers and GE Inspection Robotics, CERN’s vacuum team has recently developed a miniature robot that can direct the laser onto the LHC beam screen (see image above). The possibility of in situ surface treatments by lasers opens new perspectives for vacuum technology in the coming decades, including studies for future circular colliders. Another benefit of this study is the development of small robots for the in situ inspection of long ultra-high vacuum beam pipes, such as those of the LHC’s arcs.

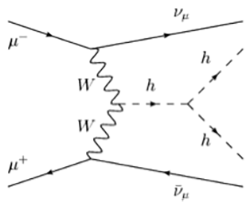

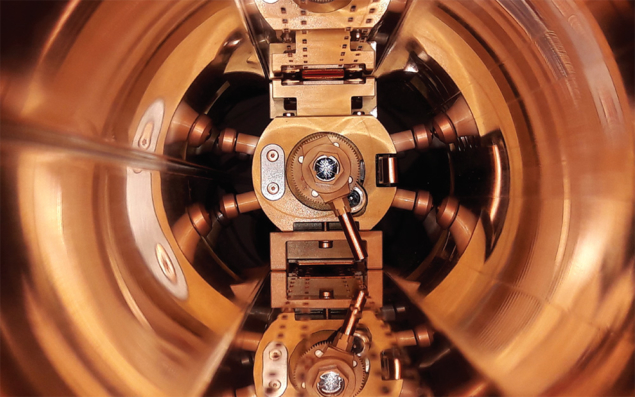

The Compact Linear Collider (CLIC) project, which envisages a high-energy linear electron–positron collider at CERN, demands quadrupole magnets with a very small-diameter beam pipe (about 8 mm) supporting pressures in the ultra-high vacuum range. This can be obtained by NEG-coating the vacuum vessel, but the coating process in such a high aspect-ratio geometry is not easy due to the very small space available for the material source and the plasma needed for its sputtering. This troublesome issue has been solved by a complete change of the production process in which the NEG material is no longer directly coated on the wall of the tiny pipe, but instead is coated on the external wall of a sacrificial mandrel made of high-purity aluminium.

Next-generation synchrotron-light sources share CLIC’s need for very compact magnets with magnetic poles as close as possible to the beam, so as to reduce costs and improve beam performance. CERN’s vacuum group collaborates closely with vacuum experts at light sources, with MAX IV in Sweden and PSI in Switzerland being prominent examples, to develop the required very-small-diameter vacuum pipes. Further technology transfer has come from the sophisticated simulations necessary for the HL-LHC and the Future Circular Collider study, which have also found applications beyond the accelerator field, from the coating of electronic devices to space simulation.

Relations with industry are key to the operation of CERN’s accelerators, especially those in the LHC chain. The vacuum industry across CERN’s member countries provides us with state-of-the-art components, valves, pumps, gauges and control equipment that have contributed to the high reliability of the lab’s vacuum systems. In return, the LHC gives high visibility to industrial products. Indeed, the variety of projects and activities performed at CERN provides us with a continuous stimulus to improve and extend our competences in vacuum technology. In addition to future colliders, these include: antimatter physics, which requires very low gas density; radioactive-beam physics, which imposes severe controls on contamination and gas exhaust; and gravitational-wave physics, for which the tradeoff between cost and performance of vacuum systems is essential for the approval of next-generation observatories.

An orthogonal driver of innovation in vacuum technology is the reduction of costs and operational downtime of CERN’s accelerators. Achieving ultra-high vacuum in a matter of a few hours at a reduced cost would also have an impact well beyond the high-energy physics community. This and other challenges involved in fundamental exploration are guaranteed to drive further advances in vacuum technology.