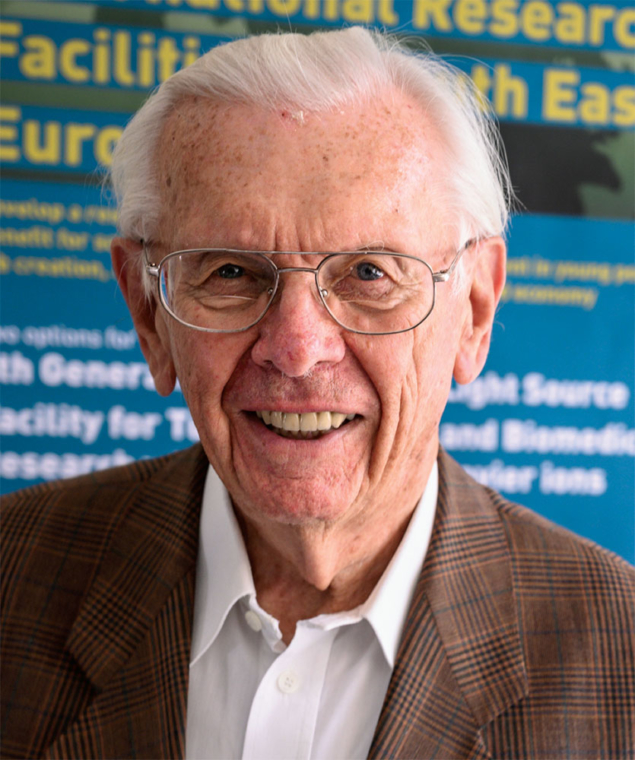

Murray Gell-Mann and I were born a few days apart, in September 1929. Being born on almost the same date as a genius does not help much, except that, being the same age, there was a non-zero probability that we would meet. And indeed we did; moreover, we and our families became friends.

Murray’s family was heavily affected by the economic crash of October 1929, and his father had to change jobs completely. Had this not happened, Murray might well have become a successful businessman instead of a brilliant physicist. Everybody knows that Murray was immensely cultured and had many interests. To name a few at random: penguins, other birds (tichodromes, for instance), Swahili, Creole, Franco-Provençal (and more generally the history of languages), pre-Columbian and American Indian art, gastronomy (including French wines and medieval food), the history of religions, climate change and its consequences, energy resources, environmental protection, complexity, cosmology and the quantum theory of measurement. However, it is in theoretical particle physics that he made his most creative and important contributions. For these, up until 24 May 2019, I personally considered him to be the best living particle-physics theoretician.

Bright beginnings

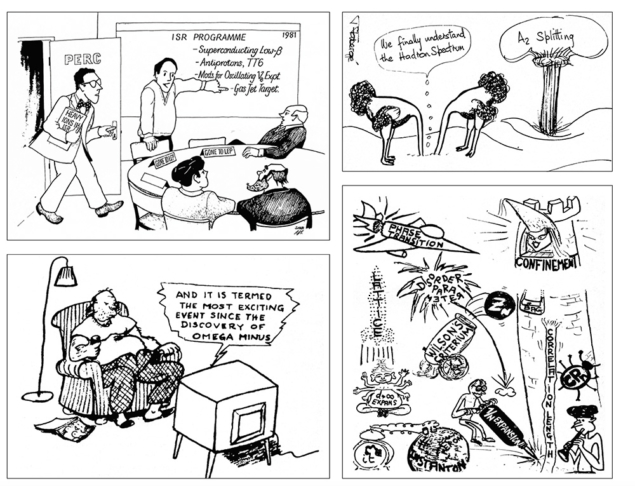

I met Murray for the first time at Les Houches in 1952, one year after Cecile Morette-DeWitt founded the school. It was immediately obvious that he was extremely bright. Although I never had the occasion to collaborate with Murray, there was a time when his advice was very precious to me. Most of my own research in theoretical physics was highly rigorous, but for a time around 1980 I became a phenomenologist: I proposed a naïve potential model to calculate the energy levels of quarkonium, i.e. the bb̄ and cc̄ systems. My colleagues were divided about this; my Russian friends, in particular, were very critical. When I gave a talk about it in Aspen, Murray said: “We don’t understand why it works, but it works and you should continue.” I followed his advice, even treating strange quarks as “heavy”. Again it worked, and my colleague Jean Marc Richard adapted the model to baryons. It allowed the mass of the Ω⁻ baryon to be calculated with great accuracy, and it also correctly predicted the mass of the bs̄ meson, which was discovered years later by ALEPH. This is typical of Murray’s philosophy: if something works, go ahead; “non approfondire” (“don’t dig too deep”), as the Italian writer Alberto Moravia says.

In 1955 I attended my first physics conference, in Pisa. After a breakfast with Erwin Schrödinger, I took the tram and met Murray. That afternoon, at the University of Pisa, he gave the first public presentation of the strangeness scheme. The auditorium was packed. I was completely dazzled by this extraordinary achievement and its incredible predictive power (which was very soon confirmed), including for the KK̄ system. I had already left Ecole Normale-Orsay for CERN when he and Maurice Levy wrote their famous paper featuring, for the first time, what was later called the “Cabibbo angle”.

I then had the good fortune to be sent to the La Jolla conference in 1961. There I met, for the first time, Nick Khuri, who also became a close friend, and I heard Murray present “the eightfold way” – i.e. the SU(3) octet model. Also attending were Marcel Froissart, who derived the “Froissart bound”, and Geoff Chew, who presented his version of the S-matrix programme. Both were a great inspiration for my later work (sadly, both have also passed away recently). What I did not realise at the time was that the Chew programme had been largely anticipated by Murray, who had first worked on dispersion relations and then noticed, in 1956, that the combination of analyticity, unitarity and crossing symmetry could lead to field theory on the mass shell, with some interesting consequences.

In 1962, during the Geneva “Rochester” conference, I was again present when Murray, after a review of hadron spectroscopy by George Snow, stood up and pointed out that the sequence of particles Δ, Σ*, Ξ* could be completed by a particle, which he called Ω⁻, to form a decuplet in the SU(3) scheme. He predicted its mode of production, its decay (which would have to be weak) and its mass. This was followed by a period of deep scepticism among theoreticians, including some of the best. However, early in 1964, while I was in Princeton, Nick Samios and his group at Brookhaven announced that the Ω⁻ had been discovered, with the predicted mass to within a few MeV. Frank Yang, one of the sceptics, called it “the most important experiment in particle physics in recent years”. I missed the invention of quarks, being in Princeton, far from Caltech, where Murray was, and from CERN, where George Zweig was visiting. I met Bob Serber but was completely unaware of his catalytic role in that discovery.

Close friends

My next important meeting with Murray was in Yerevan, Armenia, in 1965, where Soviet physicists had invited a group of about eight Western physicists. This time Murray came with his whole family: his wife, Margaret – a British archaeology student whom he had met in Princeton – and his children, Lisa and Nick. During the following summer, which the Gell-Manns spent in Geneva, our families met several times. The Gell-Manns later spent another year at CERN, before Harold Fritzsch, Gell-Mann and Heinrich Leutwyler wrote the “Credo” of QCD.

Margaret and Murray came to Geneva again for the academic year 1979/1980. They lived in an apartment in the same group of buildings as we did. Schu, my wife, who died at the same age as Murray from a similar disease, became a close friend of Margaret, who was a typically British girl: very reserved, very intelligent and with a good sense of humour. An extraordinary friendship grew between them. When the Gell-Manns left Geneva for Pasadena, Margaret knew that something was wrong with her health. Back in the US she discovered that she had cancer. I have lost count of the transatlantic trips we made to help, sometimes both of us, sometimes Schu alone. In between, Schu and Margaret kept up an extensive correspondence. It is fitting that Murray’s ashes will rest close to Margaret’s.

After Margaret’s death, we all kept in touch. We met in many places: Paris, Pasadena, Beirut, Geneva and Bern. Of these, two occasions stand out. In 2004 Murray attended a meeting of linguists at the University of Geneva and offered to give a talk at CERN on the origin and evolution of languages. Luis Alvarez-Gaumé and I accepted. It was absolutely fantastic. I regret that we don’t have a written version, but at least we have recordings. The second occasion was in Bern. Every year the Swiss Confederation awards the Einstein Medal to someone proposed by the University of Bern. The 2005 medal was a special one because it marked 100 years since Einstein’s trilogy of fundamental discoveries. The recipient had to be of exceptional stature, and Murray was chosen. At the ceremony in Bern, Schu decided to wear a brooch that Murray had given her to thank her for what she did for Margaret. The brooch represented Hopi “skinwalkers”. During lunch, however, the brooch disappeared; it had either been lost or stolen. We were extremely unhappy and could not help telling Murray about it. Some time later, Schu received a parcel containing a new brooch: an illustration of Murray’s faithfulness in friendship.

- This article is an updated version of a tribute published on the occasion of Gell-Mann’s 80th birthday (CERN Courier April 2010 p27).