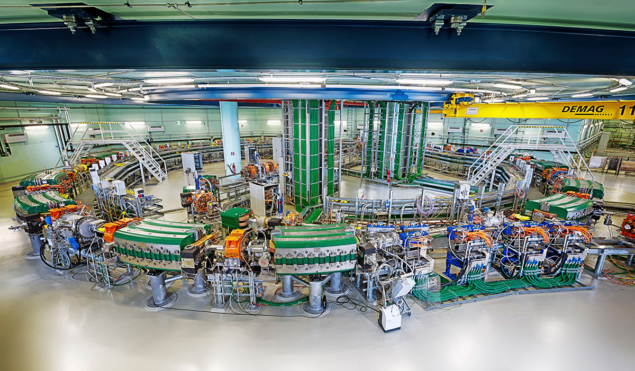

Researchers in the US have demonstrated an advanced accelerator dipole magnet with a field of 14.1 T – the highest ever achieved for such a device at an operational temperature of 4.5 K. The milestone is the work of the US Magnet Development Program (MDP), which includes Fermilab, Lawrence Berkeley National Laboratory (LBNL), the National High Magnetic Field Laboratory and Brookhaven National Laboratory. The MDP’s “cos-theta 1” (MDPCT1) dipole, made from Nb3Sn superconductor, beats the 13.8 T at 4.5 K achieved by LBNL magnet “HD2” a decade ago, and follows the 14.6 T at 1.9 K (13.9 T at 4.5 K) reached by “FRESCA 2” at CERN in 2018, which was built as a superconducting-cable test station. Together with other recent advances in accelerator magnets in Europe and elsewhere, the result sends a positive signal for the feasibility of next-generation hadron colliders.

The MDP was established in 2016 by the US Department of Energy to develop magnets that operate as closely as possible to the fundamental limits of superconducting materials while minimising the need for magnet training. The programme aims to integrate domestic accelerator-magnet R&D and position the US in the technology development for future high-energy proton-proton colliders, including a possible 100 km-circumference facility at CERN under study by the Future Circular Collider (FCC) collaboration. In addition to the baseline design of MDPCT1, other design options for such a machine have been studied and will be tested in the coming years.

“The goal for this first magnet test was to limit the coil mechanical pre-load to a safe level, sufficient to produce a 14 T field in the magnet aperture,” explains MDPCT1 project leader Alexander Zlobin of Fermilab. “This goal was achieved after a short magnet training at 1.9 K: in the last quench at 4.5 K the magnet reached 14.1 T. Following this successful test the magnet pre-stress will be increased to reach its design limit of 15 T.”

The development of high-field superconducting accelerator magnets has received a strong boost from high-energy physics in the past decades. The current state of the art is represented by the LHC dipole magnets, which operate at 1.9 K to produce a field of around 8 T, enabling proton-proton collisions at an energy of 13 TeV. Exploring higher energies, up to 100 TeV at a possible future circular collider, requires higher magnetic fields to steer the more energetic beams. The goal is to double the field strength compared to the LHC dipole magnets, reaching up to 16 T, which calls for innovative magnet design and a different superconductor from the Nb-Ti used in the LHC. Currently, Nb3Sn (niobium tin) is being explored as a viable candidate for reaching this goal. High-temperature superconductors, such as REBCO, MgB2 and iron-based materials, are also being studied.
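The link between dipole field and beam energy can be illustrated with the standard magnetic-rigidity relation for a singly charged, ultra-relativistic particle, p [GeV/c] ≈ 0.2998 × B [T] × ρ [m], where ρ is the bending radius. The short Python sketch below is only a rough illustration under that approximation: the function names are hypothetical, the per-beam energies are taken as half the collision energies quoted above, and the fields are the rounded values from the text rather than exact machine parameters.

```python
import math

# Magnetic rigidity for a singly charged particle:
#   p [GeV/c] = 0.299792458 * B [T] * rho [m]
# For multi-TeV protons, momentum and energy are numerically almost equal.
GEV_PER_TESLA_METRE = 0.299792458


def bending_radius_m(beam_energy_gev, dipole_field_t):
    """Bending radius (m) needed to steer a beam of the given energy."""
    return beam_energy_gev / (GEV_PER_TESLA_METRE * dipole_field_t)


def dipole_path_km(beam_energy_gev, dipole_field_t):
    """Total length of dipole field (km) needed for one full 360-degree turn."""
    return 2 * math.pi * bending_radius_m(beam_energy_gev, dipole_field_t) / 1000


if __name__ == "__main__":
    # Illustrative inputs: (label, energy per beam in GeV, dipole field in T)
    cases = [
        ("LHC-like, 6.5 TeV per beam at 8 T", 6500, 8),
        ("100 TeV collider, 50 TeV per beam at 16 T", 50000, 16),
    ]
    for label, energy_gev, field_t in cases:
        radius = bending_radius_m(energy_gev, field_t)
        path = dipole_path_km(energy_gev, field_t)
        print(f"{label}: radius ~{radius:.0f} m, dipole path ~{path:.1f} km")
```

Under these assumptions, a 50 TeV beam in 16 T dipoles needs a bending radius of roughly 10 km, or about 65 km of dipole field per turn. That is compatible with a ring of around 100 km once straight sections and other equipment are allowed for. At the LHC's field of around 8 T, the same beam would need about twice that length.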

HL-LHC first

The first accelerator magnets to use Nb3Sn technology are the 11 T dipole magnets and the final-focusing magnets under development for the high-luminosity LHC (HL-LHC), the latter of which will be installed around the interaction points. But the FCC would require more than 5000 superconducting dipoles grouped for powering in series and operating continuously over long time periods. A number of critical aspects underlie the design, cost-effective manufacturing and reliable operation of 16 T dipole magnets in future colliders. Among the targets for the Nb3Sn conductor is a critical current density of 1500 A/mm² at 16 T and 4.2 K – almost a 50% increase compared to the current state of the art. In addition to the conductor, developing an industry-adapted design for 16 T dipoles and other accelerator magnets with higher performance presents a major challenge.

The FCC collaboration has launched a rigorous R&D programme towards 16 T magnets. Key components are the global Nb3Sn conductor development programme, featuring a network of academic institutes and industrial partners, and the 16 T magnet-design work package supported by the EU-funded EuroCirCol project. This is now being followed by a 16 T short-model programme that aims to construct model magnets with several partners worldwide, such as the US MDP. Unit lengths of Nb3Sn wires with performance at least comparable to that of the HL-LHC conductor have already been produced by industry and cabled at CERN, while, at Fermilab, multi-filamentary wire produced with an internal oxidation process has already exceeded the critical current density target for the FCC – just two examples of many recent advances in this area. EuroCirCol, which officially wound up this year (see “Study comes full EuroCirCol”), has also enabled a design and cost model for the magnets of the FCC, demonstrating the feasibility of Nb3Sn technology.

“The enthusiasm of the worldwide superconductor community and the achievements are impressive,” says Amalia Ballarino, leader of the conductor activity at CERN. “The FCC conductor development targets are very challenging. The demonstration of a 14 T field in a dipole accelerator magnet and the possibility of reaching the target critical current density in R&D wires are milestones in the history of Nb3Sn conductor and a reassuring achievement for the FCC magnet development programme.”