Alvin Tollestrup, who passed away on 9 February at the age of 95, was a visionary. When I joined his group at Caltech in the summer of 1960, experiments in particle physics at universities were performed at accelerators located on campus. Alvin had helped build Caltech’s electron synchrotron, the highest-energy photon-producing accelerator at the time. But he thought more exciting physics could be performed elsewhere, and managed to get approval to run an experiment at Berkeley Lab’s Bevatron to measure a rare decay mode of the K+ meson. This was the first time an outsider was allowed to access Berkeley’s machine, much to the consternation of Luis Alvarez and other university faculty.

When I joined Alvin’s group he asked a postdoc, Ricardo Gomez, and me to design, build and test a new type of particle detector called a spark chamber. He gave us a paper by two Japanese authors, “A new type of particle detector: the discharge chamber”; it was not what he wanted, but it was a place to start. In retrospect, it was remarkable that Alvin was willing to risk the success of his experiment on the creation of new technology. Alvin also asked me to design a transport system of magnetic lenses that would capture as many K mesons as possible at the “thin window” of the accelerator and guide them to our “hut” on the accelerator floor, where K decays would be observed. I did my calculations on an IBM 709 at UCLA; Alvin checked them by tracing rays at his drafting table. When the beam design was completed and the chain of magnets was in place on the accelerator floor, Alvin threaded a single wire through them from the thin window to our hut.

I had no idea what he was doing, or why. Around Alvin the Zen master, I didn’t say much or ask many questions. When he turned the magnets on and ran current through the wire, the wire snapped to attention, tracing the path a K would follow from where it left the accelerator to where its decays would be observed. The wire floated through the magnet centres far from their walls, tracing an unobstructed path. Calculation (in this case, of how much current the wire required) followed by testing was Alvin’s modus operandi.

A couple of months later, in 1962, it was time to run. All the equipment for the experiment had been built and tested over a two-year period at Caltech, shipped in a moving van to the Bevatron, and assembled in our hut. We had 21 half-days to make our measurements. The proton beam inside the accelerator was steered into a tungsten target behind the thin window through which the Ks would pass. Inside the hut we waited for the scintillation counters to start clicking wildly, but there was hardly a click. In complete silence, Alvin set out to find out what had happened to the beam, slowly moving a scintillation counter from one magnet to the next until he reached the thin window. Finding that hardly any Ks were coming through it, Alvin asked the operator in the control room to shut the machine down and remove the thin window to expose the target: an unprecedented request that meant losing the vacuum the proton beam required. There was a long silence while the operator mentally processed the request. Several phone calls later, the operator complied. With a pair of long tongs, Alvin pressed a small square of dental film against the radioactive target. When developed, it showed a faintly illuminated edge at the top of the target. The Bevatron surveyors had placed the target one inch below its proper position, a big mistake. But there was no panic or finger pointing, just measurement and appropriate action. That was Alvin’s style: always diplomatic with management, never asking for something without sufficient reason, and persistent. Unfortunately, we were unfairly charged a full day of running time, which Alvin chose not to contest. Not everyone at UC Berkeley was happy with outside users coming in to use “their machine”, and Alvin did not want to antagonize them.

Alvin was my first thesis advisor. When he taught me how to think about my measurements, he also taught me how to analyze and judge the measurements of others. This was essential in understanding which of the many “discoveries” of hadrons in the early 1960s were believable. Without his influence, I never would have discovered quarks (aces), whose existence was later definitively confirmed in deep inelastic scattering experiments.

Fermilab years

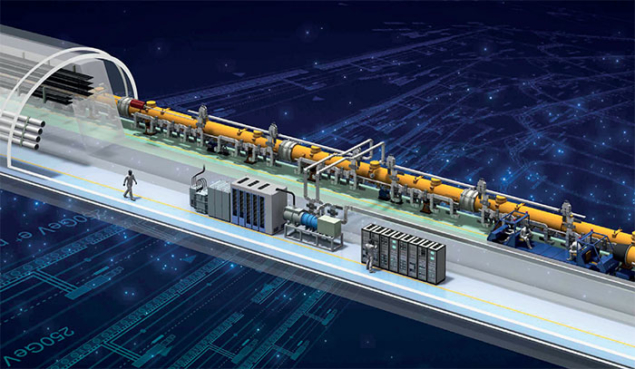

More than a dozen years later, true to his belief that users of accelerators should improve them, Alvin left Caltech for Fermilab, where he would create the first large-scale application of superconductivity. Physics at Fermilab at that time was limited by the energy of the protons it produced: 200 GeV, the design energy of the laboratory’s 6.3 km-circumference Main Ring. If superconducting magnets could be built, the Main Ring’s copper magnets could be replaced, energy costs could be significantly reduced, and the energy of protons could be doubled. Furthermore, protons and antiprotons could eventually be accelerated in the same ring, traveling in opposite directions and colliding at interaction points around the ring where experiments could be performed. All this without digging a new tunnel.

I went to visit Alvin shortly after he arrived at Fermilab and found him at a drafting table once more tracing rays, this time through superconducting magnets. Looking up, he told me of the electromagnetic forces trying to tear each magnet apart, and the enormous energy stored within each one (as much energy as a one-tonne vehicle traveling at more than 100 km/h), all within a bath of liquid helium bombarded by stray high-energy protons. If a superconducting magnet “quenched”, returning to its normal resistive state, this energy would suddenly be released and could cause serious damage. There was also the possibility of a domino effect, with one magnet quenching after another.

With a number of ingenious inventions, always experimenting but only making one change at a time, and combining the understanding that comes from physics with the practicalities necessary for engineering, Alvin made essential contributions to the design, testing and commissioning of the superconducting magnets. When the “energy doubler”, later known as the Tevatron, was completed in 1983, Alvin worked on converting it to a proton-antiproton collider. The collider began operation in 1987, and Alvin was the primary spokesperson for the CDF experimental collaboration from 1980 to 1992. The Tevatron was the world’s most powerful particle collider for 25 years until the LHC came along. The top quark and the tau neutrino were both discovered there. Alvin’s critical contributions to the design, construction and initial operation of the Tevatron were recognised in 1989 with a US National Medal of Technology and Innovation.

Deserved recognition

Designing robust superconducting magnets that could be mass produced was extremely difficult. Physicists at Brookhaven working on their next-generation accelerator, Isabelle, failed to do so, even though they received substantially more government support and funding. And ten days after the LHC was first switched on in 2008, an electrical fault in a connection between adjacent magnets caused a massive magnet quench and significant damage that closed the accelerator for more than a year.

Alvin once told me that the Bevatron’s director, Ed Lofgren, never got the recognition he deserved. The Bevatron was designed and built to find the antiproton, and sure enough Segrè and Chamberlain found it soon after the Bevatron was turned on. They were recognised for their discovery with a Nobel Prize, but the work Lofgren did to create the machine for them was of a higher order than that required to run their experiment. Alvin also didn’t get the recognition he deserved. His modesty only exacerbated the problem. The virtuosity required to create new accelerators sometimes exceeds what is necessary to run the resulting prizewinning experiments.

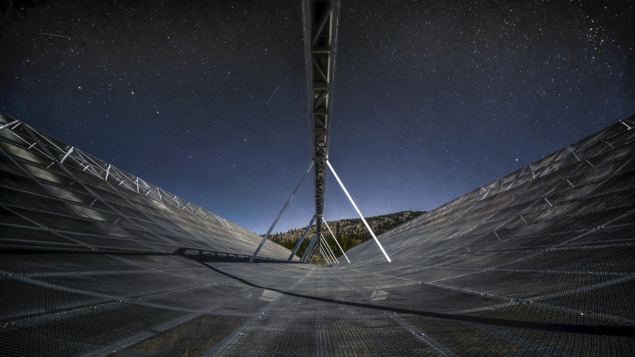

Alvin remained a visionary all his life. For many years Richard Feynman kept a question carefully written in the upper left-hand corner of his blackboard: “Why does the muon weigh?” To help answer this question, and to open a new frontier in high-energy physics, Alvin began work on a muon collider in the early 1990s, and interest in the collider has increased ever since.

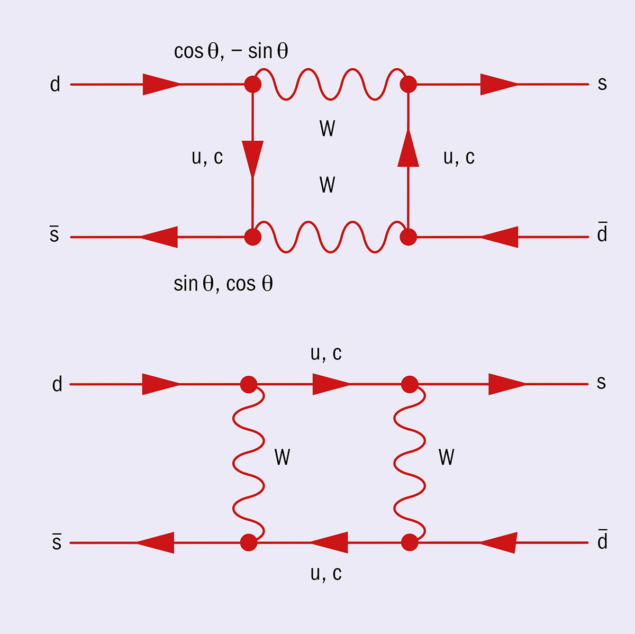

There were things that I was never able to learn from Alvin. His intuition for electronics was beyond my grasp, a gift from the gods. That intuition helped him make one of the most important measurements of the 1950s. Parity violation had been discovered, but how was it violated? There were competing theories, championed by giants. The V − A theory predicted the existence of the decay π⁻ → e⁻ν̄, but this decay was not seen in two independent experiments, by Jack Steinberger in 1955 and by Herb Anderson in 1957. The difficulty of this measurement is underlined by the fact that both Steinberger and Anderson were outstanding experimentalists and students of Fermi. Steinberger later shared the Nobel Prize for demonstrating that the electron and muon each have their own neutrinos. Alvin, with his knowledge of how photomultipliers worked, discovered a flaw in one of the experiments and, with collaborators at CERN, went on to find the decay at the predicted rate, validating the V − A theory of the weak interactions.

Alvin did not suffer fools gladly, but outside of work he created a community of collaborators, an extended family. He fed and entertained us. His pitchers of martinis and platters of whole hams are memorable. When I was a child, my parents took me to a traveling circus where we saw a tightrope performer, Karl Wallenda, who had an incredible high-wire act. Wallenda is quoted as saying, “Life is on the wire. The rest is waiting.” Alvin showed us how to have fun while waiting, and shared a long and phenomenal life with us, both off and, especially, on the high wire.