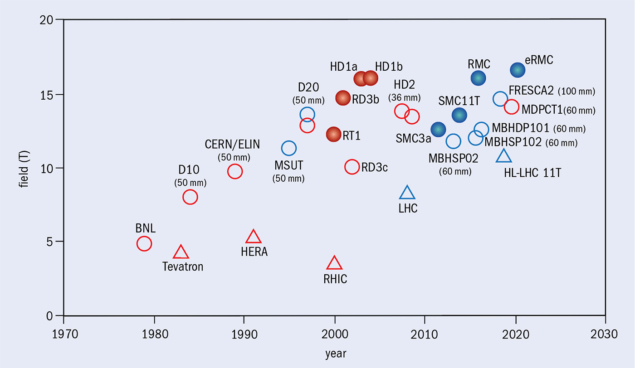

The steady increase in the energy of colliders during the past 40 years, which has fuelled some of the greatest discoveries in particle physics, was possible thanks to progress in superconducting materials and accelerator magnets. The highest particle energies have been reached by proton–proton colliders, where high-rigidity beams travelling on a piecewise circular trajectory require magnetic fields well in excess of those that can be produced using resistive electromagnets. Starting from the Tevatron in 1983, through HERA in 1991, RHIC in 2000 and finally the LHC in 2008, all large-scale hadron colliders were built using superconducting magnets.

Large superconducting magnets for detectors are just as important to high-energy physics experiments as beamline magnets are to particle accelerators. In fact, detector magnets are where superconductivity first took hold, right from the infancy of the technology in the 1960s, with major installations such as the large bubble-chamber solenoid at Argonne National Laboratory, followed by the giant BEBC solenoid at CERN, which held the record for the highest stored energy for many years. A long line of superconducting magnets has provided the magnetic fields for detectors of all large-scale high-energy physics colliders, with the most recent and largest realisation being the LHC experiments, CMS and ATLAS.

Optimisation

All past accelerator and detector magnets had one thing in common: they were built using composite Nb–Ti/Cu wires and cables. Nb–Ti is a ductile alloy with a critical field of 14.5 T and critical temperature of 9.2 K, made from almost equal parts of the two constituents. It was discovered to be superconducting in 1962 and its performance, quality and cost have been optimised over more than half a century of research, development and large-scale industrial production. Indeed, it is unlikely that the performance of the LHC dipole magnets, operated so far at 7.7 T and expected to reach nominal conditions at 8.33 T, can be surpassed using the same superconducting material, or any foreseeable improvement of this alloy.
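The link between the dipole fields quoted above and the beam energy can be made concrete with the standard magnetic-rigidity relation, p [GeV/c] ≈ 0.2998 · B [T] · ρ [m], for a singly charged particle. The sketch below is illustrative only: the bending radius of about 2804 m is an assumed, approximate value for the LHC arcs that does not appear in this article, and the function name is hypothetical.

```python
# Magnetic rigidity for a singly charged particle:
#   p [GeV/c] = 0.299792458 * B [T] * rho [m]
GEV_PER_TESLA_METRE = 0.299792458

# Assumption (not from the article): approximate LHC dipole bending radius.
LHC_BENDING_RADIUS_M = 2804.0


def beam_momentum_gev(b_tesla: float, rho_m: float) -> float:
    """Momentum (GeV/c) of a proton bent on radius rho_m by a dipole field b_tesla."""
    return GEV_PER_TESLA_METRE * b_tesla * rho_m


# Dipole fields quoted in the text: 7.7 T (operated so far) and 8.33 T (nominal).
p_operated = beam_momentum_gev(7.7, LHC_BENDING_RADIUS_M)
p_nominal = beam_momentum_gev(8.33, LHC_BENDING_RADIUS_M)

print(f"7.7 T  -> {p_operated / 1000:.2f} TeV per beam")
print(f"8.33 T -> {p_nominal / 1000:.2f} TeV per beam")
```

Under these assumptions the two fields correspond to roughly 6.5 TeV and 7 TeV per beam. In a tunnel of fixed radius the beam energy scales linearly with the dipole field, which is why a higher-energy machine needs either stronger magnets or a larger ring.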

And yet, approved projects and studies for future circular machines are all calling for the development of superconducting magnets that produce fields beyond those produced for the LHC. These include the High-Luminosity LHC (HL-LHC), which is currently taking shape, and the Future Circular Collider design study (FCC), both at CERN, together with studies and programmes outside Europe, such as the Super proton–proton Collider in China (SppC) or the past studies of a Very Large Hadron Collider at Fermilab and the US–DOE Muon Accelerator Program (see HL-LHC quadrupole successfully tested). This requires that we turn to other superconducting materials and novel magnet technology.

The HL-LHC springboard

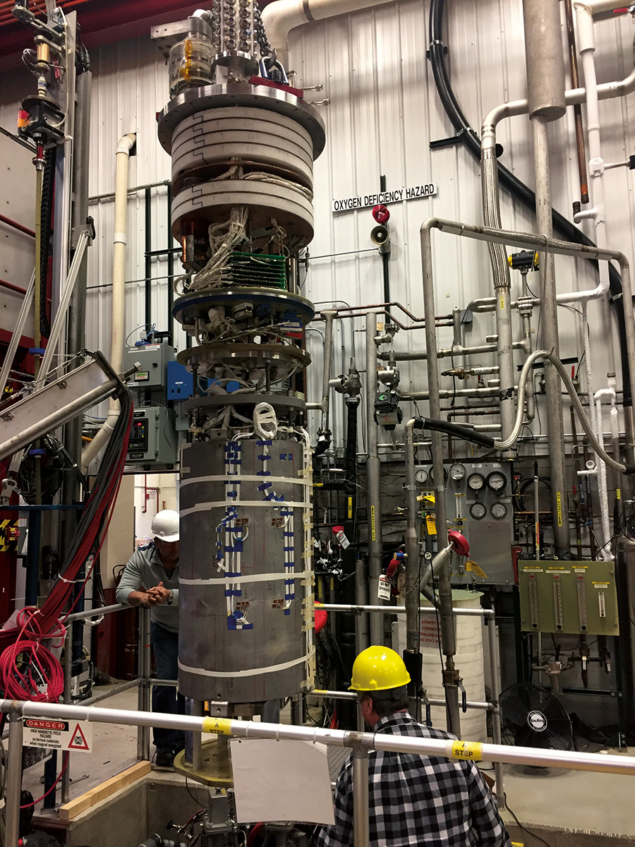

To reach its main objective, to increase the levelled LHC luminosity at ATLAS and CMS, and the integrated luminosity by a factor of 10, the HL-LHC requires very large-aperture quadrupoles, with field levels at the coil in the range of 12 T in the interaction regions. These quadrupoles, currently being built and tested at CERN and Fermilab (see HL-LHC quadrupole successfully tested), are the main fruit of the 10-year US–DOE LHC Accelerator Research Program (US–LARP) – a joint venture between CERN, Brookhaven National Laboratory, Fermilab and Lawrence Berkeley National Laboratory. In addition, the increased beam intensity calls for collimators to be inserted in locations within the LHC “dispersion suppressor”, the portion of the accelerator where the regular magnet lattice is modified to ensure that off-momentum particles are centred in the interaction points. To gain the required space, standard arc dipoles will be substituted by dipoles of shorter length and higher field, approximately 11 T. As described earlier, such fields require the use of new materials. For the HL-LHC, the material of choice is the intermetallic compound of niobium and tin, Nb3Sn, which was discovered in 1954. Nb3Sn has a critical field of about 30 T and a critical temperature of about 18 K, outperforming Nb–Ti by a factor of two. Though discovered before Nb–Ti, and exhibiting better performance, Nb3Sn has not been used for accelerator magnets so far because in its final form it is brittle and cannot withstand large stress and strain without special precautions.

In fact, Nb3Sn was one of the candidate materials considered for the LHC in the late 1980s and mid 1990s. Already at that time it was demonstrated that accelerator magnets could be built with Nb3Sn, but it was also clear that the technology was complex, with a number of critical steps, and not ripe for large-scale production. A good 20 years of progress in basic material performance, cable development, magnet engineering and industrial process control was necessary to reach the present state, during which time the success of the production of Nb3Sn for the ITER fusion experiment has given confidence in the credibility of this material for large-scale applications. As a result, magnet experts are now convinced that Nb3Sn technology is sufficiently mature to satisfy the challenging field levels required by the HL-LHC.

A difficult recipe

The present manufacturing recipe for Nb3Sn accelerator magnets consists of winding the magnet coil with glass-fibre insulated cables made of multi-filamentary wires that contain Nb and Sn precursors in a Cu matrix. In this form the cables can be handled and plastically deformed without breakage. The coils then undergo heat treatment, typically at a temperature of around 650 °C, during which the precursor elements react chemically and form the desired Nb3Sn superconducting phase. At this stage, the reacted coil is extremely fragile and needs to be protected from any mechanical action. This is done by injecting a polymer, which fills the interstitial spaces among cables, and is subsequently cured to become a matrix of hardened plastic providing cohesion and support to the cables.

The above process, though conceptually simple, has a number of technical difficulties that call for top-of-the-line engineering and production control. To give some examples, the texture of the electrical insulation, consisting of a few tenths of a millimetre of glass fibre, needs to be able to withstand the high-temperature heat-treatment step, but also retain dielectric and mechanical properties at liquid-helium temperatures 1000 °C lower. The superconducting wire also changes its dimensions by a few percent, which is orders of magnitude larger than the dimensional accuracy requested for field quality and therefore must be predicted and accommodated by appropriate magnet and tooling design. The finished coil, even if it is made solid by the polymer cast, still remains stress and strain sensitive. The level of stress that can be tolerated without breakage can be up to 150 MPa, to be compared to the electromagnetic stress of optimised magnets operating at 12 T that can reach levels in the range of 100 MPa. This does not leave much headroom for engineering margins and manufacturing tolerances. Finally, protecting high-field magnets from quenches, with their large stored energy, requires that the protection system has a very fast reaction – three times faster than at the LHC – and excellent noise rejection to avoid false trips related to flux jumps in the large Nb3Sn filaments.

The next jump

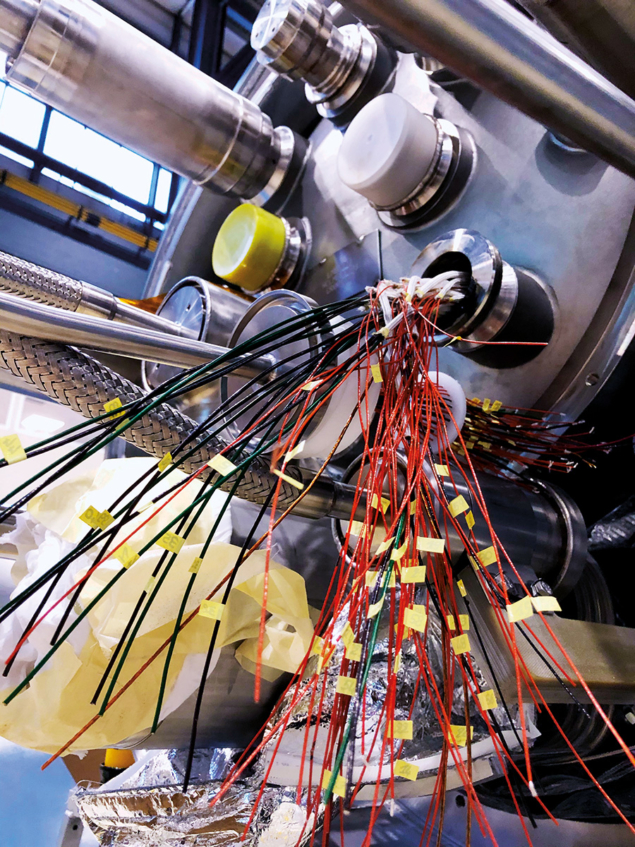

The CERN magnet group, in collaboration with the US–DOE laboratories participating in the LHC Accelerator Upgrade Project, is in the process of addressing these and other challenges, finding solutions suitable for magnet production on the scale required for the HL-LHC. A total of six 11 T dipoles (each about 6 m long) and 20 inner triplet quadrupoles (up to 7.5 m long) are in production at CERN and in the US, and the first magnets have been tested (see “Power couple” image). And yet, it is clear that we are not ready to extrapolate such production to a much larger scale, i.e. to the thousands of magnets required for a possible future hadron collider such as FCC-hh. This is exactly why the HL-LHC is so critical to the development of high-field magnets for future accelerators: not only will it be the first demonstration of Nb3Sn magnets in operation, steering and colliding beams, but by building it on a scale that can be managed at the laboratory level we have a unique opportunity to identify all the areas of necessary development, and the open technology issues, to allow the next jump. Beyond its prime physics objective, the HL-LHC is therefore the springboard to the future of high-field accelerator magnets.

Climb to higher peak fields

For future circular colliders, the target dipole field has been set at 16 T for FCC-hh, allowing proton–proton collisions at an energy of 100 TeV, while China’s proposed pp collider (SppC) aims at a 12 T dipole field, to be followed by a 20 T dipole. Are these field levels realistic? And based on which technology?

Looking at the dipole fields produced by Nb3Sn development magnets during the past 40 years (figure 1), fields up to 16 T have been achieved in R&D demonstrators, suggesting that the FCC target can be reached. In 2018 “FRESCA2” – a large-aperture (100 mm) dipole developed over the past decade through a collaboration between CERN and CEA-Saclay in the framework of the European Union project EuCARD – attained a record field of 14.6 T at 1.9 K (13.9 T at 4.5 K). Another very recent result, obtained in June 2019, is the successful test at Fermilab by the US Magnet Development Programme (MDP) of a “cos-theta” dipole with an aperture of 60 mm called MDPCT1 (see “Cos-theta 1” image), which reached a field of 14.1 T at 4.5 K (CERN Courier September/October 2019 p7). In February this year, the CERN magnet group set a new Nb3Sn record with an enhanced racetrack model coil (eRMC), developed in the framework of the FCC study. The setup, which consists of two racetrack coils assembled without mid-plane gap (see “Racetrack demo” image), produced a 16.36 T central field at 1.9 K and a 16.5 T peak field on the coil, which is the highest ever reached for a magnet of this configuration. The magnet was also tested at 4.5 K and reached a field of about 16.3 T (see HL-LHC quadrupole successfully tested). These results send a positive signal for the feasibility of next-generation hadron colliders.

A field of 16 T seems to be the upper limit that can be reached with a Nb3Sn accelerator magnet. Indeed, though the conductor performance can still be improved, as demonstrated by recent results obtained at the National High Magnetic Field Laboratory (NHMFL), Ohio State University and Fermilab within the scope of the US-MDP, this is the point at which the material itself will run out of steam. As for any other superconductor, the critical current density drops as the field grows, requiring an increasing amount of material to carry a given current. The effect becomes dramatic when approaching a significant fraction of the critical field. Akin to Nb–Ti in the region of 8 T, a further field increase with Nb3Sn beyond 16 T would require an exceedingly large coil and an impractical amount of conductor. Reaching the ultimate performance of Nb3Sn, which will be situated between the present 12 T and the expected maximum of 16 T, still requires much work. The technology issues identified by the ongoing work on the HL-LHC magnets are exacerbated by the increase in field, electromagnetic force and stored energy. Innovative industrial solutions will be needed, and the conductor itself brought to a level of maturity comparable to Nb–Ti in terms of performance, quality and cost. This work is the core of the ongoing FCC magnet-development programme that CERN is pursuing in collaboration with laboratories, universities and industries worldwide.
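The fall-off of critical current density with field described above can be illustrated with a Kramer-type scaling, a form commonly used for Nb3Sn in which J_c is proportional to (1 − b)² / √b, with b the applied field divided by the upper critical field. The sketch below is a rough illustration, not a conductor model: it takes the critical field of about 30 T quoted earlier in this article at face value, ignores temperature and strain, and uses a hypothetical function name. Only ratios within one material are meaningful, since the material-dependent prefactor is omitted.

```python
import math


def kramer_relative_jc(b_tesla: float, bc2_tesla: float) -> float:
    """Relative critical current density from a Kramer-type scaling.

    Returns (1 - b)^2 / sqrt(b) with b = B / Bc2. The material-dependent
    prefactor is omitted, so only ratios at different fields are meaningful.
    """
    b = b_tesla / bc2_tesla
    if not 0.0 < b < 1.0:
        raise ValueError("field must lie strictly between 0 and the critical field")
    return (1.0 - b) ** 2 / math.sqrt(b)


# Assumption: critical field of about 30 T for Nb3Sn, as quoted in the article
# (temperature and strain dependence are ignored in this sketch).
NB3SN_BC2_T = 30.0

jc_12 = kramer_relative_jc(12.0, NB3SN_BC2_T)
jc_16 = kramer_relative_jc(16.0, NB3SN_BC2_T)

# Conductor cross-section needed to carry a given current scales as 1 / J_c.
print(f"J_c(16 T) / J_c(12 T) = {jc_16 / jc_12:.2f}")
print(f"relative conductor area at 16 T vs 12 T = {jc_12 / jc_16:.2f}")
```

Even in this simplified picture, going from 12 T to 16 T roughly halves the current density, so about twice as much superconductor is needed to carry the same current, and the penalty grows steeply at higher fields.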

As the limit of Nb3Sn comes into view, we see history repeating itself: the only way to push beyond it to higher fields will be to resort to new materials. Since Nb3Sn is technically the low-temperature superconductor (LTS) with the highest performance, this will require a shift to high-temperature superconductors.

High-temperature superconductivity (HTS), discovered in 1986, is of great relevance in the quest for high fields. When operated at low temperature (the same liquid-helium range as LTS), HTS materials have exceedingly large critical fields in the range of 100 T and above. And yet, only recently have material and magnet engineering reached the point where HTS materials can generate magnetic fields in excess of LTS ones. The first user applications coming to fruition are ultra-high-field NMR magnets, as recently delivered by Bruker Biospin, and the intense magnetic fields required by materials science, for example the 32 T all-superconducting user facility built at NHMFL.

As for their application in accelerator magnets, the potential of HTS to make a quantum leap is enormous. But it is also clear that the tough challenges that needed to be solved for Nb3Sn will escalate to a formidable level in HTS accelerator magnets. The magnetic force scales with the square of the field produced by the magnet, and for HTS the problem will no longer be whether the material can carry the super-currents, but rather how to manage stresses approaching structural material limits. Stored energy has the same square-dependence on the field, and quench detection and protection in large HTS magnets are still a spectacular challenge. In fact, HTS magnet engineering will probably differ so much from the LTS paradigm that it is fair to say that we do not yet know whether we have identified all the issues that need to be solved. HTS is the most exciting class of material to work with; the new world for brave explorers. But it is still too early to count on practical applications, not least because the production cost for this rather complex class of ceramic materials is about two orders of magnitude higher than that of good old Nb–Ti.
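The square dependence on field can be made tangible with the magnetic pressure, B² / (2μ₀), which is also the energy stored per unit volume of field. The sketch below is an order-of-magnitude illustration only: the actual stress in a coil depends on its geometry and on how forces accumulate across the winding, so these numbers are not the coil stresses quoted elsewhere in this article. The function name is hypothetical.

```python
import math

MU_0 = 4.0e-7 * math.pi  # vacuum permeability in T·m/A


def magnetic_pressure_mpa(b_tesla: float) -> float:
    """Magnetic pressure B^2 / (2 mu_0) in MPa.

    The same expression gives the stored energy density, since
    1 MPa is equivalent to 1 MJ per cubic metre.
    """
    return b_tesla ** 2 / (2.0 * MU_0) / 1.0e6


# Field levels mentioned in the article: LHC nominal, HL-LHC range,
# FCC-hh target and the 20 T dipole envisaged as a later SppC stage.
for field in (8.33, 12.0, 16.0, 20.0):
    print(f"{field:5.2f} T -> {magnetic_pressure_mpa(field):6.1f} MPa")
```

This gives roughly 28 MPa at 8.33 T, 57 MPa at 12 T and 102 MPa at 16 T. Doubling the field from the LHC level therefore nearly quadruples both the pressure the structure must contain and the energy that the protection system must handle in a quench.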

It is thus logical to expect the near future to be based mainly on Nb3Sn. With the first demonstration to come imminently in the LHC, we need to consolidate the technology and bring it to the maturity necessary for large-scale production. This will likely take place in steps – exploring 12 T territory first, while seeking the solutions to the challenges of ultimate Nb3Sn performance towards 16 T – and could take as long as a decade. For China’s SppC, iron-based HTS has been suggested as a route to 20 T dipoles. This technology study is interesting from the point of view of the material, but the magnet technology for iron-based superconductors is still rather far away.

Meanwhile, nurtured by novel ideas and innovative solutions, HTS could grow from the present state of a material of great potential to its first applications. The LHC already uses HTS tapes (based on Bi-2223) for the superconducting part of the current leads. The HL-LHC will go further, by pioneering the use of MgB2 to transport the large currents required to power the new magnets over considerable distances (thereby shielding power converters and making maintenance much easier). The grand challenges posed by HTS will likely require a revolution rather than an evolution of magnet technology, and significant technology advancement leading to large-scale application in accelerators can only be imagined on the 25-year horizon.

Road to the future

There are two important messages to retain from this rather simplified perspective on high-field magnets for accelerators. Firstly, given the long lead times of this technology, and even in times of uncertainty, it is important to maintain a healthy and ambitious programme so that the next step in technology is at hand when critical decisions on the accelerators of the future are due. The second message is that with such long development cycles and very specific technology, it is not realistic to rely on the private sector to advance and sustain the specific demands of HEP. In fact, the business model of high-energy physics is very peculiar, involving long investment times followed by short production bursts, and not sustainable by present industry standards. So, without taking the place of industry, it is crucial to secure critical know-how and infrastructure within the field to meet development needs and ensure the long-term future of our accelerators, present and to come.

- This is an updated version of an article published recently in a special supplement about magnet technology: preview-courier.web.cern.ch/p/in-focus/magnet-technology.