In Greek mythology, Atlas was a Titan who had to hold up the heavens with his hands as a punishment for having taken part in a revolt against the Olympians. For LHC, the ATLAS detector will also have an onerous physics burden to bear, but this is seen as a golden opportunity rather than a punishment.

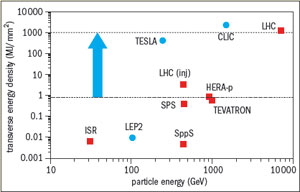

The major physics goal of CERN’s LHC proton–proton collider is the quest for the long-awaited “Higgs” mechanism, which drives the spontaneous symmetry breaking of the electroweak Standard Model picture. The large ATLAS collaboration proposes a general-purpose detector to exploit the full discovery potential of LHC’s proton collisions. LHC will provide proton–proton collision luminosities at the awe-inspiring level of 10³⁴ cm⁻² s⁻¹, with initial running at 10³³ cm⁻² s⁻¹. The ATLAS philosophy is to handle as many signatures as possible at all luminosity levels, with the initial running providing more complex possibilities.
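To give a feel for what these luminosities mean, the short sketch below converts luminosity into an interaction rate using rate = σ × L. The inelastic proton–proton cross-section of about 70 mb is an assumed, approximate value that does not come from the article, and the per-crossing estimate pretends that every 25 ns bunch slot is filled. The function names are illustrative only.

```python
# Rough interaction-rate estimate from luminosity: rate = sigma * L.
# ASSUMPTION: an inelastic pp cross-section of about 70 mb at LHC energies;
# this figure is illustrative and is not taken from the article.

MB_TO_CM2 = 1e-27  # 1 millibarn = 1e-27 cm^2


def interaction_rate_hz(luminosity_cm2_s: float, sigma_mb: float) -> float:
    """Mean number of interactions per second for a given luminosity."""
    return sigma_mb * MB_TO_CM2 * luminosity_cm2_s


def mean_interactions_per_crossing(rate_hz: float, crossing_rate_hz: float = 40e6) -> float:
    """Average interactions per bunch crossing, assuming every 25 ns slot is filled."""
    return rate_hz / crossing_rate_hz


SIGMA_INEL_MB = 70.0  # assumed, illustrative value

for lumi in (1e33, 1e34):
    rate = interaction_rate_hz(lumi, SIGMA_INEL_MB)
    pileup = mean_interactions_per_crossing(rate)
    print(f"L = {lumi:.0e} cm^-2 s^-1: {rate:.1e} interactions/s, ~{pileup:.1f} per crossing")
```

Under these assumptions, going from initial to full luminosity means moving from a couple of overlapping interactions per crossing to well over a dozen, which is why handling signatures "at all luminosity levels" is a real design constraint.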

The ATLAS concept was first presented as a letter of intent to the LHC Committee in November 1992. Following initial presentations at the Evian meeting in March of that year, two ideas for general-purpose detectors, the ASCOT and EAGLE schemes, merged, with Friedrich Dydak (MPI Munich) and Peter Jenni (CERN) as ATLAS co-spokesmen.

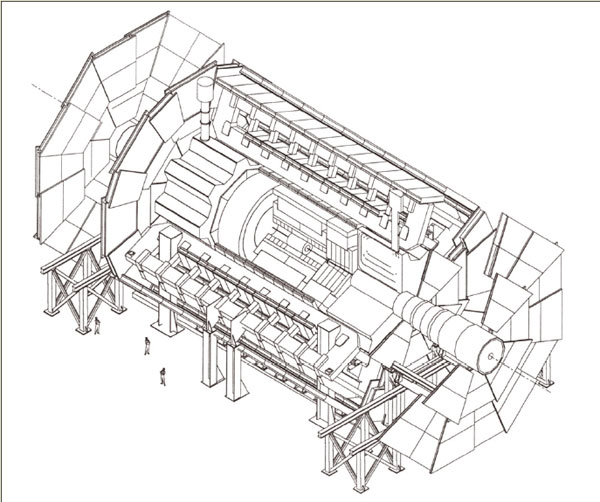

Since the initial letter of intent presentation, the ATLAS design has been optimized and developed, guided by physics performance studies and the LHC-oriented detector R&D programme. The overall detector concept is characterized by an inner superconducting solenoid (for inner tracking) and large superconducting air-core toroids outside the calorimetry. This solution avoids constraining the calorimetry while providing a high-resolution, large-acceptance and robust detector.

The outer magnet will extend over a length of 26 m with an outer diameter of almost 20 m. The total weight of the detector will be 7000 tonnes; fitted with its endcap toroids, the outer magnet alone will weigh 1400 tonnes.

Designs on calorimetry

To achieve its basic aims, the ATLAS design has opted for very good electromagnetic calorimetry for electron and photon identification and measurements, complemented by complete (hermetic) jet and missing-energy calorimetry; efficient tracking at high luminosity for lepton momentum measurements, heavy-quark tagging and good electron and photon identification, as well as heavy-flavour vertexing and reconstruction; and precision muon-momentum measurements up to the highest luminosities, with triggering on very low transverse-momentum muons at lower luminosities. Other overall design aims include large angular coverage, together with triggering and particle-momentum measurement capabilities at low transverse momenta.

The inner detector is contained in a cylinder 6.8 m long and 2.3 m in diameter, immersed in a 2 T magnetic field from a solenoid 5.3 m long. The coil is being designed by Japan’s KEK Laboratory. Reflecting LHC’s bold physics aims and the pace of detector R&D, this inner detector is packed with tracking technology that is innovative compared with existing major detectors: high-resolution pixel and strip detectors close to the beam, and straw tubes with transition-radiation capability farther away from the beam pipe. The finest granularity will be provided by semiconductor pixel detectors immediately around the beam pipe, with about a hundred million pixels in all. Because this technology is moving rapidly, the final solution will benefit from ongoing R&D work.
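As a rough illustration of why the 2 T field matters for momentum measurement, the sketch below uses the standard relation p_T [GeV/c] ≈ 0.3 B [T] R [m] to get the radius of curvature of a charged track, then the sagitta of its arc across the tracker. Taking the full 1.15 m cylinder radius as the lever arm is an assumption (an upper bound; the real active tracking region is smaller), and the helper names are hypothetical.

```python
import math

# Transverse momentum vs radius of curvature in a solenoidal field:
#   p_T [GeV/c] = 0.2998 * B [T] * R [m]
C_GEV_PER_T_M = 0.299792458


def curvature_radius_m(pt_gev: float, b_tesla: float) -> float:
    """Radius of the track circle in the plane transverse to the field."""
    return pt_gev / (C_GEV_PER_T_M * b_tesla)


def sagitta_m(chord_m: float, radius_m: float) -> float:
    """Exact sagitta of a circular arc spanning a chord of the given length."""
    half = chord_m / 2.0
    return radius_m - math.sqrt(radius_m**2 - half**2)


B_FIELD_T = 2.0      # solenoid field quoted in the article
LEVER_ARM_M = 1.15   # ASSUMPTION: half the 2.3 m cylinder diameter (an upper bound)

for pt in (1.0, 10.0, 100.0):
    r = curvature_radius_m(pt, B_FIELD_T)
    s = sagitta_m(LEVER_ARM_M, r)
    print(f"pT = {pt:6.1f} GeV/c: R = {r:8.2f} m, sagitta = {s * 1e3:7.3f} mm")
```

The sagitta falls roughly as 1/p_T (it is close to L²/8R), so a stiff 100 GeV/c track bends by only about a millimetre over the tracker, which is why high-resolution pixel and strip detectors are needed.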

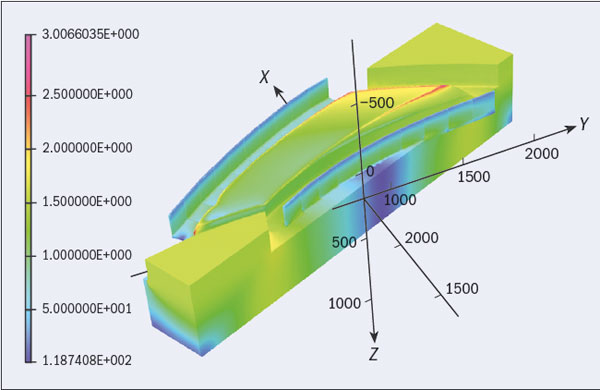

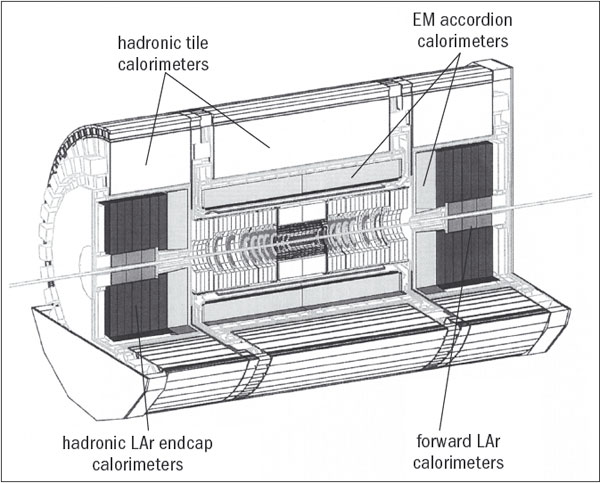

Surrounding the tracking region will be highly granular electromagnetic sampling calorimetry, probably based on liquid argon (studies of an alternative liquid-krypton scheme are still in progress), contained in an “accordion” absorber structure in a cylinder 7 m long and 4.5 m across, plus two endcaps. The inner solenoid coil is integrated into the vacuum vessel of the calorimeter cryogenics, reducing the amount of material that emerging particles have to cross.

Liquid argon is used for both electromagnetic and hadronic calorimetry in the endcaps of the calorimeter, the former arranged in a “Spanish fan” geometry to cover all azimuthal angles without cracks, the latter in a wheel-like structure using copper absorber. Integrated into the endcaps is the forward calorimetry based on an array of rods and tubes embedded in a tungsten absorber some 5 m from the interaction point.

The bulk of the hadronic calorimetry is provided by three large barrels of a novel tile calorimeter, with plastic scintillator plates embedded in iron absorber and read out by wavelength-shifting fibres. The tiles, laid perpendicular to the beam direction, are staggered in depth to simplify construction and fibre routing. The total weight of the calorimetry system is 4000 tonnes; by comparison, the entire UA1 detector, which ran at CERN’s proton–antiproton collider for a decade and was considered big in its time, weighed 2000 tonnes.

The air-core toroid magnet, with its long barrel and inserted endcaps, generates a substantial field over a large volume but with a light and open structure that minimizes troublesome multiple scattering. The toroidal geometry was chosen because its magnetic field is perpendicular to the trajectories of particles emerging from the interaction point, and because it avoids large volumes of iron for the flux return. The French Saclay Laboratory is responsible for the barrel and the British Rutherford Appleton Laboratory for the endcaps.
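In an idealized toroid the field lines are circles around the beam axis. The sketch below, which ignores the discrete coils and all field non-uniformities, compares how much of the field is perpendicular to a straight track from the interaction point for such a toroid and for a solenoid whose field runs along the beam axis. The function names are illustrative, not taken from any ATLAS software.

```python
import math


def track_direction(theta, phi):
    """Unit vector of a straight track leaving the interaction point."""
    return (math.sin(theta) * math.cos(phi),
            math.sin(theta) * math.sin(phi),
            math.cos(theta))


def toroid_field_direction(phi):
    """Idealized toroidal (azimuthal) field direction at azimuth phi."""
    return (-math.sin(phi), math.cos(phi), 0.0)


SOLENOID_FIELD_DIRECTION = (0.0, 0.0, 1.0)  # along the beam axis


def perpendicular_fraction(track, field):
    """|sin(angle)| between two unit vectors: the share of the field that bends the track."""
    dot = sum(t * f for t, f in zip(track, field))
    return math.sqrt(max(0.0, 1.0 - dot * dot))


for theta_deg in (90, 45, 10):
    theta = math.radians(theta_deg)
    phi = 0.7  # any azimuth gives the same result
    t = track_direction(theta, phi)
    tor = perpendicular_fraction(t, toroid_field_direction(phi))
    sol = perpendicular_fraction(t, SOLENOID_FIELD_DIRECTION)
    print(f"theta = {theta_deg:3d} deg: toroid {tor:.3f}, solenoid {sol:.3f}")
```

For the ideal toroid the perpendicular fraction is 1 at every polar angle, whereas for the solenoid it falls as sin θ, so a solenoid alone bends forward-going tracks only weakly.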

Interleaved with the main air-core toroid magnet will be the muon chambers, the last outposts of ATLAS. These chambers, arranged in projective towers in the barrel region, are 22 m apart across the diameter; the central muon barrel extends 26 m along the beam direction, and the forward muon chambers are 42 m apart. Cathode-strip chambers will be used in the highest-rate environment close to the beam direction, supplemented farther out by “monitored” drift tubes – pressurized thin-wall tubes arranged in several layers.

Overall, ATLAS so far involves some 1500 scientists and engineers representing 140 institutions in 31 countries (including 17 CERN member states). The participation of non-member state groups is still subject to the satisfactory establishment of bilateral agreements between CERN and the appropriate funding agencies. However, their potential involvement in ATLAS is already woven deeply into the fabric of the collaboration.

For example, semiconductor strips for the inner detector could involve teams from institutes in Australia, Canada, the Czech Republic, Finland, Germany, Japan, Norway, Poland, Russia, Sweden, Switzerland, the UK and the US, while the scintillator tiles could involve Armenia, Brazil, the Czech Republic, France, Italy, Portugal, Romania, Russia, Spain, Sweden, CERN and the US.

In addition to the 7000 tonnes of ATLAS hardware, a major effort is also required for software and data acquisition. To handle ATLAS data, the first-level trigger, which must unambiguously identify the bunch crossing responsible for each event, operates at the full bunch-crossing rate of 40 MHz (one crossing every 25 ns). It takes about 2 μs for the first-level trigger decision to take shape and be distributed. During this level-1 processing time, all data are held in pipelines; accepted events are then output at up to 100 kHz for further processing at level 2. Level-2 processing takes about 10 ms, during which the level-2 processors look at subsets of detector data before passing events on, at about 1 kHz, to level 3, where complete event reconstruction becomes possible. Trigger processors at all three levels will be programmable.
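The rates and times quoted above fix the basic trigger arithmetic: how many crossings the level-1 pipelines must hold, how much each level rejects, and how many events level 2 has in hand at once. The sketch below works these out and adds a deliberately simple, hypothetical pipeline model (a FIFO read out only on a level-1 accept); it is an illustration, not a description of the actual ATLAS front-end electronics, and the 1-in-400 decision rule is invented purely to mimic the 40 MHz to 100 kHz reduction.

```python
from collections import deque

# Rates and times quoted in the article (10 ms is read here as the
# typical level-2 processing time per event).
BUNCH_CROSSING_RATE_HZ = 40e6   # one crossing every 25 ns
LEVEL1_LATENCY_S = 2e-6         # time to form and distribute the level-1 decision
LEVEL1_OUTPUT_HZ = 100e3        # level-1 accept rate
LEVEL2_TIME_S = 10e-3           # level-2 processing time
LEVEL2_OUTPUT_HZ = 1e3          # rate passed on to level 3

# Number of crossings that must be buffered while level 1 decides.
pipeline_depth = round(LEVEL1_LATENCY_S * BUNCH_CROSSING_RATE_HZ)
level1_rejection = BUNCH_CROSSING_RATE_HZ / LEVEL1_OUTPUT_HZ
level2_rejection = LEVEL1_OUTPUT_HZ / LEVEL2_OUTPUT_HZ
# Little's law: events in flight = arrival rate * time spent in the system.
events_in_level2 = LEVEL1_OUTPUT_HZ * LEVEL2_TIME_S


class Level1Pipeline:
    """HYPOTHETICAL toy model: every crossing enters a fixed-length FIFO and is
    read out at the far end only if level 1 accepted it."""

    def __init__(self, depth):
        self.depth = depth
        self.slots = deque()

    def clock(self, crossing_id, data, level1_accepts):
        """Advance one bunch crossing; return (id, data) for an accepted crossing, else None."""
        self.slots.append((crossing_id, data))
        if len(self.slots) > self.depth:
            oldest_id, oldest_data = self.slots.popleft()
            if level1_accepts(oldest_id):
                return oldest_id, oldest_data
        return None


def keep_one_in_400(crossing_id):
    """Hypothetical level-1 decision mimicking a 40 MHz -> 100 kHz reduction."""
    return crossing_id % 400 == 0


pipe = Level1Pipeline(pipeline_depth)
accepted = []
for bc in range(1000):
    out = pipe.clock(bc, f"raw data for crossing {bc}", keep_one_in_400)
    if out is not None:
        accepted.append(out[0])

print(f"pipeline depth: {pipeline_depth} crossings")
print(f"rejection: level 1 x{level1_rejection:.0f}, level 2 x{level2_rejection:.0f}")
print(f"events in level 2 at any time: ~{events_in_level2:.0f}")
print(f"accepted crossings: {accepted}")
```

In this model the decision for crossing 0 emerges only after 80 further crossings have been pushed, which is the 2 μs latency expressed in 25 ns ticks.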

• June 1995 p9 (abridged).

Tiles and accordions

Design work and prototyping are well under way for the modules that will make up the ATLAS detector. The design stresses very good electromagnetic calorimetry for electron and photon identification and measurements, complemented by accurate measurements of hadronic jets and missing energy.

Arranged as a conventional central barrel with two endcaps, the calorimeter uses the highly radiation-resistant liquid-argon technique for electromagnetic measurements in its inner part (including the endcaps), contained in a 13 m-long cylinder with an outer radius of 2.25 m. This is surrounded by less expensive iron–scintillator tile sampling calorimetry for the hadronic part, extending to a radius of 4.25 m.

In the inner part of the endcaps, liquid argon is also used for the hadronic calorimeter. The forward calorimeter around the beam pipe, about 5 m from the collision point, has special requirements; fully integrated with the endcaps, it again uses liquid argon as the sampling medium.

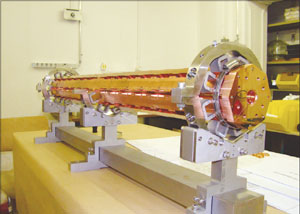

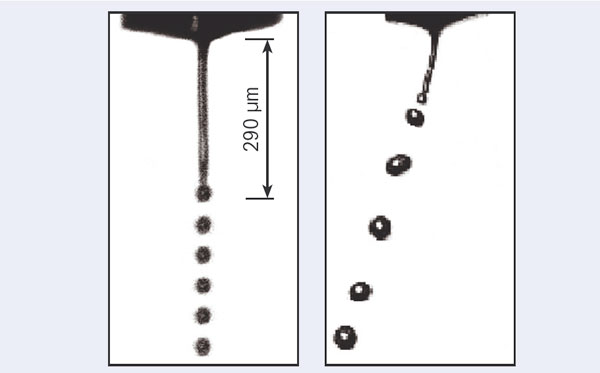

For the electromagnetic liquid-argon part, the 1024 lead–stainless steel converters of the sampling calorimeter are arranged in a novel corrugated “accordion” structure, with plates following the direction of the emerging secondary particles.
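Because the plates follow the direction of particles coming from the interaction point, they fan out radially, and the gap between neighbouring converters grows with radius. The sketch below assumes the 1024 converters are evenly spaced around the full azimuth and evaluates the spacing at two hypothetical radii chosen only for illustration; the actual inner and outer radii of the accordion are not given in the article.

```python
import math

N_ABSORBERS = 1024  # converters around the full azimuth (from the article)


def azimuthal_pitch_rad(n):
    """Angular spacing between neighbouring converters, assuming uniform spacing in phi."""
    return 2 * math.pi / n


def absorber_spacing_mm(radius_m, n):
    """Arc length between neighbouring converters at a given radius, in mm."""
    return radius_m * azimuthal_pitch_rad(n) * 1e3


pitch = azimuthal_pitch_rad(N_ABSORBERS)
print(f"azimuthal pitch: {pitch * 1e3:.2f} mrad")
for r in (1.5, 2.0):  # HYPOTHETICAL radii, for illustration only
    print(f"r = {r} m: {absorber_spacing_mm(r, N_ABSORBERS):.1f} mm between converters")
```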

The barrel hadronic calorimetry is provided by an active medium of 3 mm-thick scintillator tiles, interleaved with absorber in the form of 14 mm steel sheets, and fashioned as a large 2500-tonne cylinder to surround the liquid argon barrel and endcaps. Full-scale prototypes under test show promising energy resolution.
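The tile thicknesses quoted above fix the basic geometry of the sampling structure. The sketch below treats it as a simple repeating steel/scintillator period, ignoring gaps, wrapping and fibres (a simplifying assumption), and computes the active volume fraction. Note that the fraction of shower energy actually deposited in the scintillator is much smaller than this volume fraction, because steel is far denser than plastic.

```python
SCINT_MM = 3.0    # scintillator tile thickness (from the article)
STEEL_MM = 14.0   # steel absorber thickness (from the article)

# One repeating period of the sampling structure (simplified: no gaps or wrapping).
period_mm = SCINT_MM + STEEL_MM
active_volume_fraction = SCINT_MM / period_mm
steel_to_scint_ratio = STEEL_MM / SCINT_MM
periods_per_metre = 1000.0 / period_mm

print(f"period: {period_mm:.0f} mm")
print(f"active (scintillator) volume fraction: {active_volume_fraction:.1%}")
print(f"steel-to-scintillator thickness ratio: {steel_to_scint_ratio:.2f}")
print(f"periods per metre: {periods_per_metre:.1f}")
```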

• April 1997 pp5–6 (abridged).