The quality of a charged particle beam is characterized by the product of its radius and divergence – the emittance – and by the momentum spread. Together they define the part of the phase space that is occupied by the particles in an accelerator. In 1966 Gersh Budker proposed a method that would allow the compression, or “cooling”, of the occupied phase space in stored proton beams. His idea of electron cooling was based on the interaction of a monochromatic and well directed electron beam with the heavier protons circulating over a certain distance in a section of a storage ring. The electrons are produced continuously in an electron gun, accelerated electrostatically to a velocity equal to the average velocity of the circulating beam and then inflected into the beam. Both beams overlap for a distance, over which the cooling takes place, and then the electrons are separated from the ion beam and directed onto a collector.
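The velocity-matching condition can be made concrete with a short Python sketch. Equal velocity means an equal Lorentz factor, so the electron kinetic energy is simply the ion kinetic energy per nucleon scaled by the ratio of the rest masses. The function name is illustrative, and the proton rest energy is used as the default; for heavier ions one would pass the rest energy per nucleon (roughly the atomic mass unit, 931.5 MeV), which is an approximation.

```python
"""Velocity matching in an electron cooler: a minimal sketch.

The electrons must travel at the same velocity as the circulating ions,
i.e. have the same Lorentz factor gamma. Their kinetic energy is then
T_e = (gamma - 1) * m_e c^2.
"""

# Rest energies in MeV (CODATA values, rounded)
ELECTRON_REST_MEV = 0.51099895
PROTON_REST_MEV = 938.27209


def matched_electron_energy_kev(ion_kinetic_mev_per_u: float,
                                ion_rest_mev_per_u: float = PROTON_REST_MEV) -> float:
    """Return the electron kinetic energy (keV) whose velocity equals that of
    an ion with the given kinetic energy per nucleon (MeV/u)."""
    gamma = 1.0 + ion_kinetic_mev_per_u / ion_rest_mev_per_u
    return (gamma - 1.0) * ELECTRON_REST_MEV * 1000.0


if __name__ == "__main__":
    # 50 MeV protons (the first LEAR cooling tests) need electrons of about 27 keV
    print(f"{matched_electron_energy_kev(50.0):.2f} keV")
```

Because the electron is about 1836 times lighter than a proton, a few tens of keV of electron energy suffice to cool protons or antiprotons in the tens-of-MeV range.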

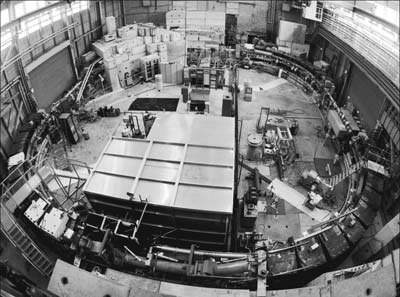

The first successful demonstration of electron cooling took place in 1974 at the proton storage ring NAP-M at what is now the Budker Institute of Nuclear Physics (BINP) in Novosibirsk. A few years later CERN and Fermilab built dedicated facilities to study the cooling process, which was a prerequisite for the accumulation of antiprotons for the proposed conversion of proton accelerators to proton–antiproton colliders. The Initial Cooling Experiment (ICE) at CERN became operational in 1977 with the goal of determining which of two cooling methods would be more appropriate for high-energy antiprotons: electron cooling or the technique proposed by Simon van der Meer at CERN, namely, stochastic cooling. The tests on electron cooling took place in 1979 (see box).

From ICE to LEAR

As is well known, CERN chose stochastic cooling for the Antiproton Accumulator that was used to feed the SPS when operating as a proton–antiproton collider. However, the request by physicists for a programme with low-energy antiprotons allowed the ICE electron cooler to continue, with a new lease of life. Thirty years and two reincarnations later, essentially the same device is now used routinely to cool and deliver low-energy antiprotons to experiments on CERN’s Antiproton Decelerator (AD).

In its first reincarnation, the ICE cooler was used on the Low Energy Antiproton Ring (LEAR). This decelerator ring was built to deliver intensities of a few thousand million (10⁹) antiprotons in an ultra-slow extraction mode to up to three experiments simultaneously over many hours. Operation in LEAR required a static vacuum pressure below 10⁻¹¹ torr, which meant that the cooler needed a major upgrade of its vacuum system. The high gas load coming from the cathode and collector regions of the cooler had made its operation on ICE very problematic, and the best obtainable vacuum was of the order of 10⁻¹⁰ torr. Higher pumping speeds and a careful choice of materials were therefore needed to achieve any significant improvement in the vacuum.

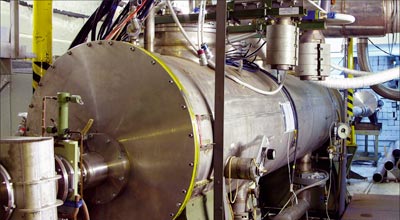

A team from CERN and Karlsruhe carried out an extensive study of various vacuum techniques between 1981 and 1984, resulting in a new design for the complete vacuum envelope, which was built using high-quality AISI 316LN stainless steel. In addition, the whole system was designed to be bakeable at 300 °C in situ for 24 hours, requiring permanent jackets to provide the necessary thermal insulation. The use of non-evaporable getter (NEG) strips developed for the Large Electron–Positron Collider provided an increase in pumping speed and three such modules were initially installed on the cooler. The choice of NEGs was evident as space limitations excluded any other type of pumping system, such as cryopumps or sputter ion pumps.

With this hurdle overcome, preparations started for the integration of the cooling device with LEAR. To fit into one of the 8 m long straight sections of the machine, the interaction length of the cooler had to be halved. Luckily, the drift solenoid had been designed in two equal parts, so removing one of them was not a problem. The high voltage and the control systems of the device were also completely refurbished and a dedicated equipment building was erected close to the LEAR ring. The installation of the cooler took place during the summer of 1987, followed by the conditioning of the cathode and further tests to monitor the evolution of the LEAR vacuum in the presence of the electron beam. By the autumn of 1987 the cooler was ready to cool its first beam. The first cooling tests took place on a 50 MeV proton beam injected directly from Linac 1 and the initial results confirmed all expectations.

After protons, attention turned to antiprotons and to the use of electron cooling instead of stochastic cooling to improve the duty cycle of the deceleration in LEAR. To deliver high-quality antiproton beams to the different experiments in the South Hall, the operators applied stochastic cooling after injection at 609 MeV/c and then at various plateaus during the deceleration process. It would normally take around 20 minutes to obtain a “cold” beam at 100 MeV/c, the lowest momentum in LEAR. The use of electron cooling reduced this time to 5 minutes, as cooling was needed for only 10 seconds on each of the intermediate plateaus, compared with 5 minutes per plateau with stochastic cooling. The hardware modifications required to make the operation of the cooler as reliable and effective as possible included the replacement of the collector with one that had a better collection efficiency (>99.99%), a new control system to synchronize the power supplies for the cooler with the LEAR magnetic cycle, and the implementation of a transverse feedback system (or “damper”) to counteract the coherent instabilities observed with such dense particle beams.

Apart from being the first cooler to be used routinely for accelerator operations, this apparatus was also the first to demonstrate the cooling and stacking of ions. In 1989 a machine experiment was devoted to studies of O⁶⁺ and O⁸⁺ ions coming from Linac 1. Applying electron cooling during the longitudinal stacking process increased the intensity by a factor of 20. Later these ions were accelerated to an energy of 408 MeV/u and extracted to an experiment measuring the dose distribution as a function of depth in plastics equivalent to human tissue.

The years of operation on LEAR also allowed detailed studies of the cooling process. A full investigation into the influence of the machine’s optical parameters demonstrated that cooling was not effective over the whole radius of the electron beam and that a finite value of the dispersion function in the cooling section could enhance the process significantly. Before these studies, it was believed that a circulating ion beam with transverse dimensions comparable to the electron beam size would produce stronger cooling.

In a separate study the electron beam was neutralized by accumulating positively charged ions using electrostatic traps placed at either end of the cooling section. By neutralizing the space charge of the electron beam, the induced drift velocity of the electrons would become negligible and hence the equilibrium emittances of the ion beam would be reduced further. Even though a neutralization factor of more than 90% could readily be obtained, it proved to be very difficult to stabilize this very high level of neutralization. Secondary electrons produced in the collector would be accelerated out of the collector region and oscillate back and forth between the collector and the gun. At each passage through the cooling section they would excite the trapped ions causing an abrupt deneutralization.
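Why neutralization helps can be sketched numerically. A minimal model, assuming a uniform cylindrical electron beam in a uniform longitudinal magnetic field, gives a radial space-charge field that drives an E × B drift of the electrons; trapped ions cancel a fraction η of the electron charge and scale the field, and hence the drift, by (1 − η). The function and all parameter values below are hypothetical, chosen only for illustration, and are not the LEAR cooler's settings.

```python
import math

EPSILON_0 = 8.8541878128e-12  # vacuum permittivity, F/m
C_LIGHT = 299_792_458.0       # speed of light, m/s


def edge_drift_velocity(current_a, radius_m, beta, b_field_t, neutralization=0.0):
    """E x B drift speed (m/s) at the edge of a uniform electron beam.

    The radial space-charge field of a uniform cylindrical beam at its edge is
    E_r = lambda / (2 pi eps0 a), with line charge lambda = I / (beta c).
    Trapped ions cancel a fraction `neutralization` of that charge.
    """
    if not 0.0 <= neutralization <= 1.0:
        raise ValueError("neutralization must lie in [0, 1]")
    line_charge = current_a / (beta * C_LIGHT)  # C/m
    e_radial = ((1.0 - neutralization) * line_charge
                / (2.0 * math.pi * EPSILON_0 * radius_m))  # V/m
    return e_radial / b_field_t


# Hypothetical parameters, chosen only for illustration
params = dict(current_a=1.0, radius_m=0.025, beta=0.31, b_field_t=0.06)
for eta in (0.0, 0.9, 0.99):
    v = edge_drift_velocity(**params, neutralization=eta)
    print(f"neutralization {eta:4.2f}: drift {v:9.3e} m/s")
```

In this model the drift scales linearly with (1 − η), so a neutralization above 90% cuts the drift velocity by more than an order of magnitude, provided it can be held stable.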


Another important modification to the cooler was the development of a variable-current electron gun. The gun inherited from ICE was of the resonant type and offered little operational flexibility. The new gun was of the adiabatic type with the peculiarity that it had been designed to operate in a relatively low magnetic field – a prerequisite for its integration in LEAR. Online control of the electron beam intensity was possible by simply varying the voltage difference between the cathode and the “grid” electrode.

Towards the end of the antiproton programme on LEAR, the cooler was paving the way for the conversion of this ring to the Low Energy Ion Ring (LEIR), which would cool and accumulate lead ions for CERN’s new big accelerator, the LHC. A series of machine experiments using lead ions with various charge states (52+ to 55+) not only demonstrated the feasibility of the proposed scheme, but also brought to light an anomalously high recombination rate between the cooling electrons and the Pb⁵³⁺ ions (which had initially been the proposed charge state), leading to lifetimes that were too short for cooling and stacking in LEAR. It was decided to use Pb⁵⁴⁺ ions instead, as they are produced in equal quantities to the 53+ charge state.

On to the AD

After 10 years on LEAR, the cooler was moved to the AD in 1998, where it continues to provide cold antiprotons for the “trap” experiments in their quest to produce large quantities of antihydrogen. Recently the AD team attempted a novel deceleration technique using electron cooling. The idea is to ramp the cooler and the main magnetic field of the AD simultaneously to a lower-energy plateau. This allows the antiproton beam to be kept cold throughout the deceleration process, thereby avoiding the adiabatic blow-up that all beams experience when their energy is reduced. The first tests were very modest, decelerating 3.5 × 10⁷ antiprotons from 46.5 to 43.4 MeV, but future experiments will concentrate on decelerating the beam below 5.3 MeV.
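The size of the adiabatic blow-up that the ramped-cooling scheme avoids can be estimated with a short sketch. Assuming the normalized transverse emittance is conserved when no cooling is applied, the geometric emittance scales as 1/(βγ), so decelerating a beam enlarges it by the ratio of βγ before and after. The helper names are illustrative; the energies are the ones quoted above.

```python
import math

ANTIPROTON_REST_MEV = 938.27209  # same rest mass as the proton


def beta_gamma(kinetic_mev, rest_mev=ANTIPROTON_REST_MEV):
    """Relativistic beta*gamma, i.e. p/(m c), for a given kinetic energy."""
    momentum_mev = math.sqrt(kinetic_mev * (kinetic_mev + 2.0 * rest_mev))  # p c in MeV
    return momentum_mev / rest_mev


def adiabatic_blowup(t_initial_mev, t_final_mev):
    """Growth factor of the geometric transverse emittance when a beam is
    decelerated without cooling (normalized emittance conserved)."""
    return beta_gamma(t_initial_mev) / beta_gamma(t_final_mev)


if __name__ == "__main__":
    for t_final in (43.4, 5.3):
        print(f"46.5 -> {t_final} MeV: emittance x {adiabatic_blowup(46.5, t_final):.3f}")
```

For the 46.5 to 43.4 MeV test the growth is only a few per cent, whereas going down to 5.3 MeV roughly triples the geometric emittance, which is why keeping the beam cold throughout the ramp is attractive.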

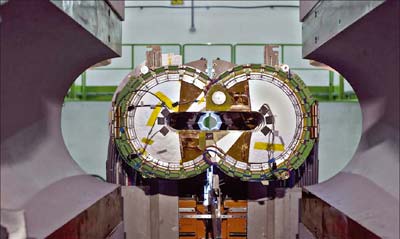

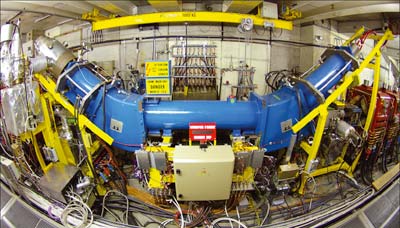

The experience gained with the upgraded ICE cooler on LEAR provided the stepping stones for the design of a new state-of-the-art cooler for the I-LHC project to provide ions for the LHC. This is the first of a new generation of coolers incorporating all of the recent developments in electron cooling technology (adiabatic expansion, electrostatic bend, variable density electron beam, high perveance and “pancake” solenoid structure) for the cooling and accumulation of heavy ion beams. High perveance, or intensity, is necessary to rapidly reduce the phase-space dimensions of a newly injected “hot” beam, while variable density helps to efficiently cool particles with large betatron oscillations and at the same time improve the lifetime of the cooled stack. Adiabatic expansion also enhances the cooling rate because it reduces the transverse temperature of the electron beam by a factor equal to the ratio of the longitudinal magnetic fields at the gun and in the cooling section.
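The effect of adiabatic expansion can be illustrated with a small sketch. It assumes the field changes slowly enough that the electrons' magnetic moment (T⊥/B) and the magnetic flux through the beam (B·a²) are both conserved; the function name and all numerical values are hypothetical and are not the LEIR cooler's actual parameters.

```python
import math


def adiabatic_expansion(t_perp_gun_ev, radius_gun_m, b_gun_t, b_cool_t):
    """Transverse temperature (eV) and radius (m) of the electron beam after it
    moves adiabatically from the gun field b_gun_t into the weaker field b_cool_t.

    The magnetic moment (T_perp / B) is conserved, so T_perp falls by
    b_gun / b_cool; the magnetic flux (B * a^2) is conserved, so the radius
    grows by sqrt(b_gun / b_cool).
    """
    if b_cool_t <= 0 or b_gun_t <= 0:
        raise ValueError("fields must be positive")
    ratio = b_gun_t / b_cool_t
    return t_perp_gun_ev / ratio, radius_gun_m * math.sqrt(ratio)


# Hypothetical values for illustration only (not the LEIR cooler settings)
t_perp, radius = adiabatic_expansion(t_perp_gun_ev=0.1, radius_gun_m=0.007,
                                     b_gun_t=0.6, b_cool_t=0.075)
print(f"T_perp = {t_perp * 1000:.1f} meV, radius = {radius * 1000:.1f} mm")
```

With a field ratio of 8 the transverse temperature falls eightfold while the radius grows by √8 ≈ 2.8: the expansion trades a larger, lower-density electron beam for a colder one.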

The new cooler, built in collaboration with BINP, was commissioned at the end of 2005 and has since been routinely used to provide high-brightness lead-ion beams required for the LHC. In parallel there have been studies to determine the influence of the cooler parameters (electron beam intensity, density distribution, size) on the lifetime and maximum accumulated current of the ions.

Electron cooling will certainly be around at CERN for quite a few more years. With the AD antiproton physics programme extended until 2016, the original ICE cooler will be nearly 40 years old when it finally retires. If the Extra Low ENergy Antiproton (ELENA) ring comes to life, it will require the design of a new cooler with an energy range of 50 to 300 eV to cool and ultimately decelerate antiprotons to only 100 keV. The possibility of polarized antiprotons at high energy in the AD will also require either an upgrade of the present cooler or the construction of a new one capable of generating a high-current electron beam at 300 keV. Of course, the LEIR electron cooler will continue to deliver lead ions for the LHC and, with a renewed interest in a fixed-target ion programme, other ion species could also find themselves being cooled and stacked in LEIR.