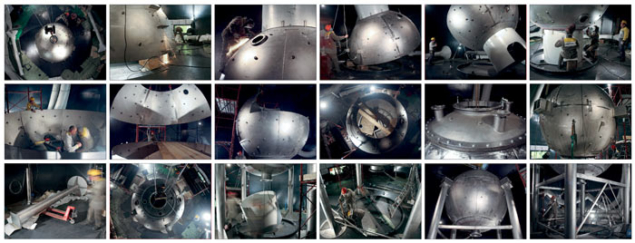

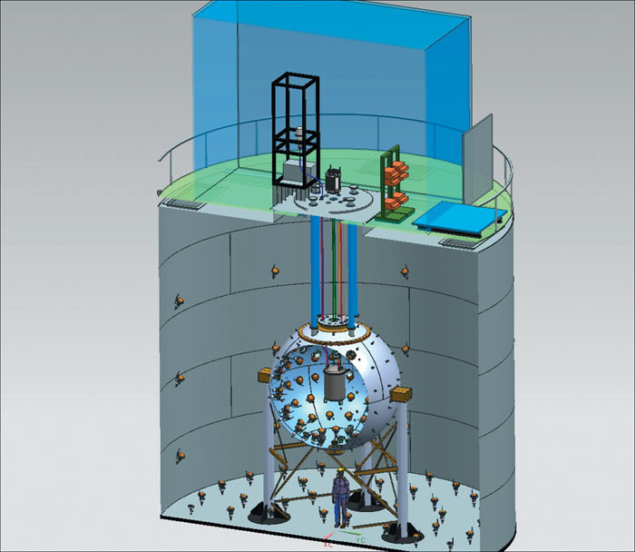

Image credit: Vinicio Tullio LNF/INFN.

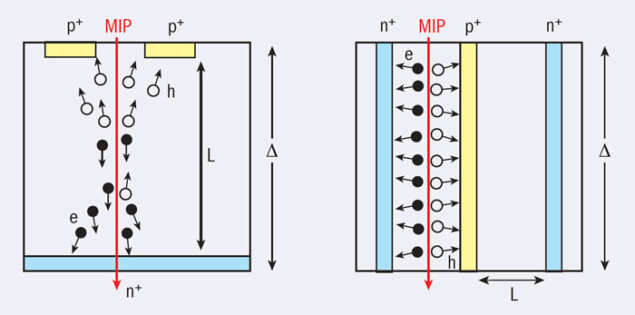

Electron clouds – abundantly generated in accelerator vacuum chambers by residual-gas ionization, photoemission and secondary emission – can affect the operation and performance of hadron and lepton accelerators in a variety of ways. They can induce increases in vacuum pressure, beam instabilities, beam losses, emittance growth, reductions in the beam lifetime or additional heat loads on a (cold) chamber wall. They have recently regained some prominence: since autumn 2010, all of these effects have been observed during beam commissioning of the LHC.

Electron clouds were recognized as a potential problem for the LHC in the mid-1990s and the first workshop to focus on the phenomenon was held at CERN in 2002. Ten years later, the fifth electron-cloud workshop has taken place, again in Europe. More than 60 physicists and engineers from around the world gathered at La Biodola, Elba, on 5–8 June to discuss the state of the art and review recent electron-cloud experience.

Valuable test beds

Many electron-cloud signatures have been recorded and a great deal of data accumulated, not only at the LHC but also at the CESR Damping Ring Test Accelerator (CesrTA) at Cornell, DAΦNE at Frascati, the Japan Proton Accelerator Research Complex (J-PARC) and PETRA III at DESY. These machines all serve as valuable test beds for simulations of electron-cloud build-up, instabilities and heat load, as well as for new diagnostic methods. The latter include measurements of synchronous phase-shift and cryoeffects at the LHC, as well as microwave transmission, coded-aperture images and time-resolved shielded pick-ups at CesrTA. The impressive resemblance between simulation and measurement suggests that the existing electron-cloud models correctly describe the phenomenon. The workshop also analysed the means of mitigating electron-cloud effects that are proposed for future projects, such as the High-Luminosity LHC, SuperKEKB in Japan, SuperB in Italy, Project-X in the US, the upgrade of the ISIS machine in the UK and the International Linear Collider (ILC).

An international advisory committee had assembled an exceptional programme for ECLOUD12. As a novel feature for the series, members of the spacecraft community participated, including the Val Space consortium based in Valencia, the French aerospace laboratory Onera, Massachusetts Institute of Technology, the Instituto de Ciencia de Materiales de Madrid and the École Polytechnique Fédérale de Lausanne (EPFL). Indeed, satellites in space suffer from problems that greatly resemble the electron cloud in accelerators; these problems can be modelled with similar tools and cured by similar countermeasures. They include the motion of the satellites through electron clouds in outer space, the relative charging of satellite components under the influence of sunlight and the loss of performance of high-power microwave devices on space satellites. Intriguingly, the “Furman formula” parameterizing the secondary emission yield, which was first introduced around 1996 to analyse electron-cloud build-up for the PEP-II B factory, then under construction at SLAC, is now widely used to describe secondary emission on the surface of space satellites. Common countermeasures for both accelerators and satellites include advanced coatings, and both communities use simulation codes such as BI-RME/ECLOUD and FEST3D. A second community newly involved in the workshop series was that of surface scientists, who at this meeting explained the chemistry and secrets of secondary emission, conditioning and photon reflections. Another important first appearance at ECLOUD12 was the use of Gabor lenses, e.g. at the University of Frankfurt, to study incoherent electron-cloud effects in a laboratory set-up.
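For readers unfamiliar with such parameterizations, the sketch below illustrates the general idea of a secondary-emission-yield curve. The article does not spell out the formula, so the functional form used here, δ(E) = δmax · s·x / (s − 1 + x^s) with x = E/εmax, is an assumption: it is one commonly quoted form for the true-secondary component only. The function names and the default values of `delta_max`, `e_max_ev` and `s` are purely illustrative and are not taken from the workshop.

```python
def secondary_yield(energy_ev, delta_max=1.7, e_max_ev=230.0, s=1.35):
    """True-secondary yield for a primary electron of the given energy (eV).

    Assumed form: delta_max * s*x / (s - 1 + x**s), with x = E / e_max_ev.
    The curve peaks at exactly delta_max when E equals e_max_ev (for s > 1).
    All default parameter values are illustrative assumptions.
    """
    if energy_ev <= 0:
        return 0.0
    x = energy_ev / e_max_ev
    return delta_max * s * x / (s - 1.0 + x ** s)


def multiplication_band(delta_max, e_max_ev=230.0, s=1.35, energies=range(1, 3001)):
    """Scan integer energies (eV) and return the (lowest, highest) energy at
    which the yield exceeds 1, i.e. where each impact releases on average
    more than one electron. Returns None if the yield never exceeds 1."""
    above = [e for e in energies
             if secondary_yield(e, delta_max, e_max_ev, s) > 1.0]
    return (above[0], above[-1]) if above else None


if __name__ == "__main__":
    for dmax in (1.7, 1.3, 0.9):
        print(dmax, multiplication_band(dmax))
```

In this simplified picture, lowering δmax (as surface conditioning is meant to do) narrows the energy band in which the electron population can multiply, and the band disappears once δmax falls below 1. The sketch ignores elastically reflected low-energy electrons, which a realistic build-up code would also have to model.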

Several powerful new simulation codes were presented for the first time at ECLOUD12. These novel codes include: SYNRAD3D from Cornell, for photon tracking, modelling surface properties and 3D geometries; OSMOSEE from Onera, to compute the secondary-emission yield, including at low primary energies; PyECLOUD from CERN, to perform improved and faster build-up simulations; the latest version of WARP-POSINST from Lawrence Berkeley National Laboratory, which allows for self-consistent simulations that combine build-up, instability and emittance growth, and is used to study beam-cloud behaviour over hundreds of turns through the Super Proton Synchrotron (SPS); and BI-RME/ECLOUD from a collaborative effort of EPFL and CERN, to study various aspects of the interaction of microwaves with an electron cloud. New codes also mean more work. For example, the advocated transition from ECLOUD to PyECLOUD implies that substantial code development done at Cornell and EPFL for ECLOUD may need to be redone.

Several open questions remain

ECLOUD12 could not solve all of the puzzles, and several open questions remain. Why, for example, does the betatron sideband signal – characterizing the electron-cloud related instability – at CesrTA differ from similar signals at KEKB and PETRA III? Why was the beam-size growth at PEP-II observed in the horizontal plane, while simulations had predicted it to be vertical? How can the complex nature of intricate incoherent effects be described fully? Which ingredients are missing for correctly modelling the electron-cloud behaviour for electron beams, e.g. the existence of a certain fraction of high-energy photoelectrons? How does the secondary-emission yield of the copper coating on the LHC beam-screen decrease as a function of incident electron dose and incident electron energy? Here the search is for the “correct” equation to describe how the primary energy at which the maximum yield is attained varies with that maximum yield, εmax(δmax), together with the concurrent evolution of the reflectivity of low-energy electrons, R. Does the conditioning of stainless steel differ from that of copper? If it is the same, then why should the SPS’s beam pipe be coated but not the LHC’s? Can the secondary-emission yield change over a timescale of seconds during the accelerator cycle (a suspicion based on evidence from the Main Injector at Fermilab)? Can the surface conditioning be accelerated by the controlled injection of carbon-monoxide gas?

As for the “electron-cloud safety” of future machines, ECLOUD12 concluded that the design mitigations for the ILC and for SuperKEKB appear to be adequate. The LHC and its upgrades (HL-LHC, HE-LHC) should also be safe with regard to electron cloud if the surface conditioning (“scrubbing”) of the chamber wall progresses as expected. The situations for Project-X, the upgrade for the Relativistic Heavy Ion Collider, J-PARC and SuperB are less finalized and perhaps more challenging.

ECLOUD12 was organized jointly and co-sponsored by INFN-Frascati, INFN-Pisa, CERN, EuCARD-AccNet and the Low Emittance Ring (LER) study at CERN. In addition, the SuperB project provided a workshop pen “Made in Italy”. The participants also enjoyed a one-hour football match (another novel feature) between experimental and theoretical electron-cloud experts – the latter clearly outnumbered – as well as post-dinner discussions until well past midnight. The next workshop of the series could be ECLOUD15, which would coincide with the 50th anniversary of the first observation of the electron-cloud phenomenon at a small proton storage-ring in Novosibirsk and its explanation by Gersh Budker.

• All of the presentations at ECLOUD12.

The ECLOUD12 workshop was dedicated to the memory of the late Francesco Ruggiero, former leader of the accelerator physics group at CERN, who launched an important remedial electron-cloud crash programme for the LHC in 1997.