Your co-Nobelists in the discovery of gravitational waves, Kip Thorne and Rainer Weiss, have both recognised your special skills in the management of the LIGO collaboration. When you landed in LIGO in 1994, what was the first thing you changed?

When I arrived in LIGO, there was a lot of dysfunction and people were going after each other. So, the first difficult problem was to make LIGO smaller, not bigger, by moving people out who weren’t going to be able to contribute constructively in the longer term. Then, I started to address what I felt were the technical and management weaknesses. Together with my colleague Gary Sanders, who had worked with me on one of the would-be detectors for the Superconducting Super Collider (SSC) before the project was cancelled, I started looking for the kind of people that were missing in technical areas.

For example, LIGO relies on very advanced lasers but I was convinced that the laser that was being planned for, a gas laser, was not the best choice because lasers were, and still are, a very fast-moving technology and solid-state lasers were more forward-looking. Coming from particle physics, I’m used to not seeing a beam with my own eyes. So I wasn’t disturbed that the most promising lasers at that time emitted light in the infrared, instead of green, and that technology had advanced to where they could be built in industry. People who worked with interferometers were used to “little optics” on lab benches where the lasers were all green and the alignment of mirrors etc was straightforward. I asked three of the most advanced groups in the world who worked on lasers of the type we needed (Hannover in Germany, Adelaide in Australia and Stanford in California) if they’d like to work together with us, and we brought these experts into LIGO to form the core of what we still have today as our laser group.

This story is mirrored in many of the different technical areas in LIGO. Physics expertise and expertise in the use of interferometer techniques were in good supply in LIGO, so the main challenge was to find expertise to develop the difficult forefront technologies that we were going to depend on to reach our ambitious sensitivity goals. We also needed to strengthen the engineering and project-management areas, but that just required recruiting very good people. Later, the collaboration grew a lot, but mostly on the data-analysis side, which today makes up much of our collaboration.

According to Gary Sanders of SLAC, “efficient management of large science facilities requires experience and skills not usually found in the repertoire of research scientists”. Are you a rare exception?

Gary Sanders was a student of Sam Ting, then he went to Los Alamos where he got a lot of good experience doing project work. For myself, I learned what was needed kind of organically as my own research grew into larger and larger projects. Maybe my personality matched the problem, but I also studied the subject. I know how engineers go about building a bridge, for example, and I could pass an exam in project management. But project management for forefront science experiments is very different, and it is hard for people to do it well. If you build a bridge, you have a boss, and he or she has three or four people who do tasks under his/her supervision, so generally the way a large project is structured is a big hierarchical organisation. Doing a physics research project is almost the opposite. For large engineering projects, once you’ve built the bridge, it’s a bridge, and you don’t change it. When you build a physics experiment, it usually doesn’t do what you want it to do. You begin with one plan and then you decide to change to another, or even while you’re building it you develop better approaches and technologies that will improve the instruments. To do research in physics, experience tells us that we need a flat, rather than vertical, organisational style. So, you can’t build a complicated, expensive, ever-evolving research project using just what’s taught in the project-management books, and you can’t do what’s needed to succeed in cost, schedule, performance, etc, in the style found in a typical physics-department research group. You have to employ some sort of hybrid. Whether it’s LIGO or an LHC experiment, you need to have enough discipline to make sure things are done on time, yet you also need the flexibility and encouragement to change things for the better. In LIGO, we judiciously adapted various project-management formalities, and used them in a way that interferes no more than necessary with what we do in a research environment. Then, the only problem – but admittedly a big one – is to get the researchers, who don’t like any structure, to buy into this approach.

How did your SSC experience help?

It helped with the political part, not the technical part, because I came to realise how difficult the politics and things outside of a project are. I think almost everything I worked on before was very hard, either because of what it was or because of some politics in doing it, but I didn’t have enormous problems that were totally outside my control, as we had in the SSC.

How did you convince the US government to keep funding LIGO, which has been described as the most costly project in the history of the NSF?

It’s a miracle, because not only was LIGO costly, but we didn’t have much to show in terms of science for more than 20 years. We were funded in 1994, and we made the first detection more than 20 years later. I think the miracle wasn’t me; rather, we were in a unique situation in the US. Our funding agency, the NSF, has a different mission than any other agency I know about. In the US, physical sciences are funded by three big agencies. One is the DOE, which has a division that does research in various areas with national labs that have their own structures and missions. The other big agency that does physical science is NASA, and they have the challenge of safety in space. The NSF gets less money than the other two agencies, but it has a mission that I would characterise by one word: science. LIGO has so far seen five different NSF directors, but all of them were prominent scientists. Having the director of the funding agency be someone who understood the potential importance of gravitational waves, maybe not in detail, helped make NSF decide both to take such a big risk on LIGO and then continue supporting it until it succeeded. The NSF leadership understands that risk-taking is integral to making big advancements in science.

What was your role in LIGO apart from management?

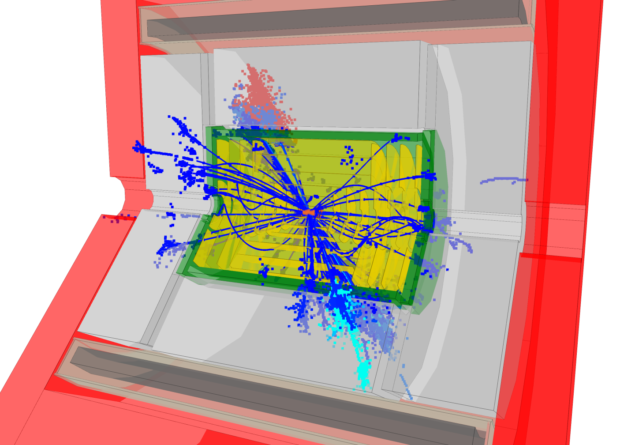

I concentrated more on the technical side in LIGO than on data analysis. In LIGO, the analysis challenges are more theoretical than they are in particle physics. What we have to do is compare general relativity with what happens in a real physical phenomenon that produces gravitational waves. That involves more of a mixed problem of developing numerical relativity, as well as sophisticated data-analysis pipelines. Another challenge is the huge amount of data because, unlike at CERN, there are no triggers. We just take data all the time, so sorting through it is the analysis problem. Nevertheless, I’ve always felt and still feel that the real challenge for LIGO is that we are limited by how sensitive we can make the detector, not by how well we can do the data analysis.

What are you doing now in LIGO?

Now that I can do anything I want, I am focusing on something I am interested in and that we don’t employ very much, which is artificial intelligence and machine learning (ML). In LIGO there are several problems that, with recent advances, could lend themselves very well to ML. So we built a small group of people, mostly much younger than me, to do ML in LIGO. I recently started teaching at the University of California Riverside, and have started working with young faculty in the university’s computer-science department on adapting some techniques in ML to problems in physics. In LIGO, we have a problem in the data that we call “glitches”, which arise when something that happens in the apparatus or the outside world shows up in the data. We need to get rid of glitches, and we use a lot of human effort to make the data clean. This is a problem that should lend itself very well to an ML analysis.

Now that gravitational waves have joined the era of multi-messenger astronomy, what’s the most exciting thing that can happen next?

For gravitational waves, knowing what discovery you are going to make is almost impossible because it is really a totally new probe of the universe. Nevertheless, there are some known sources that we should be able to see soon, maybe even in the present run. So far we’ve seen two types of source: a collision of two black holes and a collision of two neutron stars, but we haven’t yet seen a black hole with a neutron star going around it. Such systems are particularly interesting scientifically because they contain information about the nuclear physics of very compact objects, and because the two objects are very different in mass, which is very difficult to calculate using numerical relativity. So it’s not just checking off another source that we found, but opening new areas of gravitational-wave science. Another attractive possibility is to detect a spinning neutron star, a pulsar. This would be a continuous signal, another interesting source that we hope to detect before long. Actually, I’m more interested in seeing unanticipated sources where we have no idea what we’re going to see, perhaps phenomena that uniquely happen in gravity alone.

Will we ever see gravitons?

That’s a really good question because gravitons don’t exist in Einstein’s equations. But that’s not necessarily nature, that’s Einstein’s equations! The biggest problem we have in physics is that we have two fantastic theories. One describes almost anything you can imagine on a large scale, and that’s Einstein’s equations, and the other, which describes almost too well everything you find here at CERN, is the Standard Model, which is based on quantum field theory. Maybe black holes have the feature that they satisfy Einstein’s equations and at the same time conserve quantum numbers and all the things that happen in quantum physics. What we are missing is the experimental clue, whether it’s gravitons or something else that needs to be explained by both these theories. Because theory alone has not been able to bring them together, I think we need experimental information.

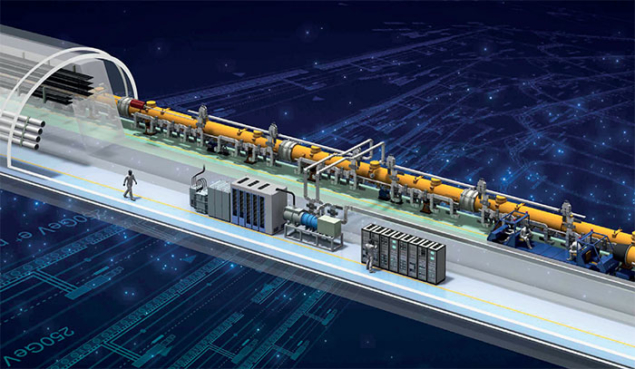

Do particle accelerators still have a role in this?

We never know because we don’t know the future, but our best way of understanding what limits our present understanding has been traditional particle accelerators because we have the most control over the particles we’re studying. The unique feature of particle accelerators is the ability to measure all the particle parameters that we want. We’ve found the Higgs boson and that’s wonderful, but now we know that the neutrinos also have mass and the Higgs boson possibly doesn’t describe that. We have three families of particles, and a whole set of other very fundamental questions that we have no handle on at all, despite the fact that we have this nice “standard” model. So is there a good reason to go to higher energy or a different kind of accelerator? Absolutely, though it’s a practical question whether it’s doable and affordable.

What’s the current status of gravitational-wave observatories?

We will continue to improve the sensitivity of LIGO and Virgo in incremental steps over the next few years, and LIGO will add a detector in India to give better global coverage. KAGRA in Japan is also expected to come online. But we can already see that next-generation interferometers will be needed to pursue the science in the future. A good design study, called the Einstein Telescope, has been developed in Europe. In the US we are also looking at next-generation detectors and have different ideas, which is healthy at this point. We are not limited by nature, but by our ability to develop the technologies to make more sensitive interferometers. The next generation of detectors will enable us to reach large red shifts and study gravitational-wave cosmology. We all look forward to exploiting this new area of physics, and I am sure important discoveries will emerge.