The annual “Strings” conference draws together a large number of active researchers in the field from all over the world. As the largest and most important event on string theory, it aims to review the recent developments for experts, rather than give a comprehensive overview of the field. CERN was an attractive venue for the conference this year, with the imminent start-up of the LHC together with the longer-term Theory Institutes on string phenomenology and black holes taking place just before and after the event. Organized by CERN’s Theory Unit, the universities of Geneva and Neuchâtel, and the ETH Zurich, Strings 2008 attracted more than 400 participants from 36 countries. It opened in the presence of CERN’s management and the rector of the University of Geneva, who also represented the state of Geneva. Appropriately, the first talk was by Gabriele Veneziano, formerly of CERN and one of the initiators of string theory through the famous formula that he proposed 40 years ago. There was a welcome reception at the United Nations in Geneva, and the conference banquet was held in the Unimail building at the university.

A framework for unified particle physics

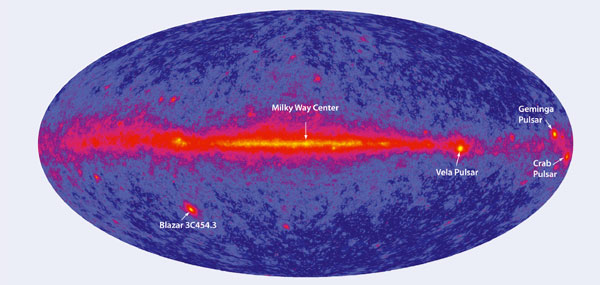

String theory can be seen as a framework for generalizing conventional particle quantum field theory, with applications stretching across a broad range of areas, such as quantum gravity, grand unification, gauge theories, heavy-ion physics, cosmology and black holes. It allows the systematic investigation of many of the important features of such theories by providing a coherent and consistent way of formulating the problems at hand. As Hirosi Ooguri from the California Institute of Technology so aptly said in his summary talk, string theory can be viewed, depending on the application, as a candidate, a model, a tool and/or a language.

The richness of string theory makes it a candidate for a consistent framework that truly unifies all of particle physics, including gravity. It also provides a stage for analysing complicated problems, such as quantum black holes and strongly coupled systems like the quark–gluon plasma, by means of idealized, often supersymmetric, models. Moreover, string theory has proved to be an invaluable tool for doing computations in particle physics in an extremely efficient manner. It also often provides a novel language that miraculously transforms seemingly hard problems into simple ones by reformulating them in a “dual” way. This includes certain hard problems in mathematics that become simple when translated into the language of string theory.

Image credit: E Gianolio.

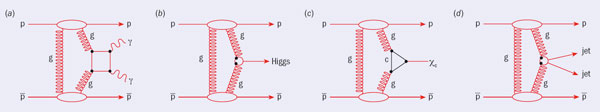

The talks effectively displayed all four of these facets of string theory. The conference focused on five key areas, roughly reflecting the fields of highest activity and progress during the past year. In addition, there were three talks on the LHC and its physics by the project leader, Lyn Evans; CERN’s chief scientific officer, Jos Engelen; and Oliver Buchmuller from CERN and the CMS experiment. These were intended to educate the string community in down-to-earth physics.

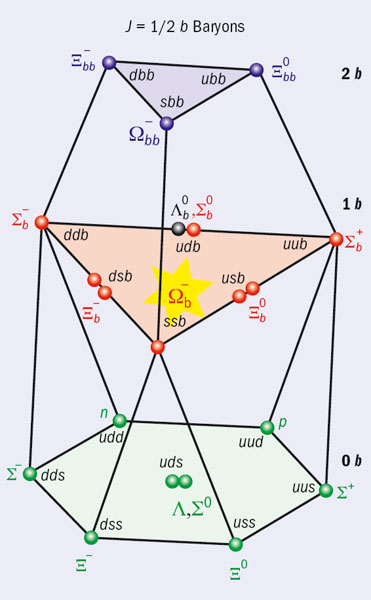

The first area covered was string phenomenology, which uses string theory as a model and candidate for the unification of all particles and forces. The various approaches to model building that were reviewed were mostly of a geometrical nature. That is, many properties of the Standard Model can be translated into geometrical properties of the compactification space that is used to make strings look four-dimensional at low energies. While this translation can be pushed a long way qualitatively, it seems exceedingly difficult technically to go much beyond this stage and obtain predictions that would be testable at the LHC. On the other hand, for the most optimistic case in which the string scale is low (namely of the order of the scale of the weak interactions), concrete predictions of string theory are fully possible, as reported in one of the talks.

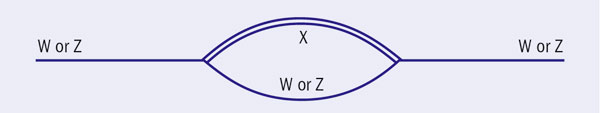

Another area, which has become highly visible during the past year, is the computation of certain scattering amplitudes, often in theories with extended supersymmetries and notably in N = 8 supergravity. Extensive computations based on string-inspired methods suggest that this theory may be finite, owing to unexpected cancellations between Feynman diagrams. However, some researchers have suggested that Feynman diagrams might not provide the most efficient way to perform calculations in quantum field theory; the results may instead point to the existence of a yet-to-be-discovered dual formulation of the theory that would be much simpler. Other related results concern theories with less supersymmetry, as well as amplitudes of phenomenological relevance, such as multi-gluon scattering amplitudes.

It is well known that string theory is a theory not only of strings but also of membranes and other extended objects. A hot topic of the past year has been the “M-brane mini-revolution”. This deals with a novel description of M-theory membranes and has created some controversy about the meaning of the results. Several talks duly reviewed this subject and it became apparent that the issues had not yet been completely settled.

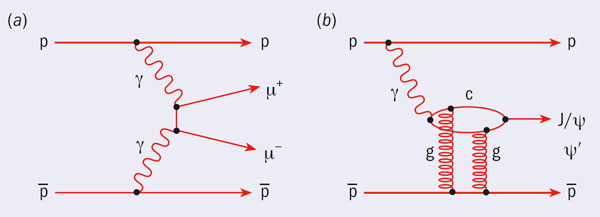

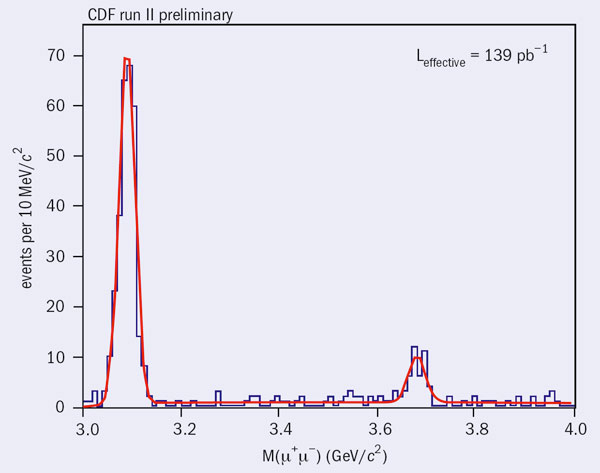

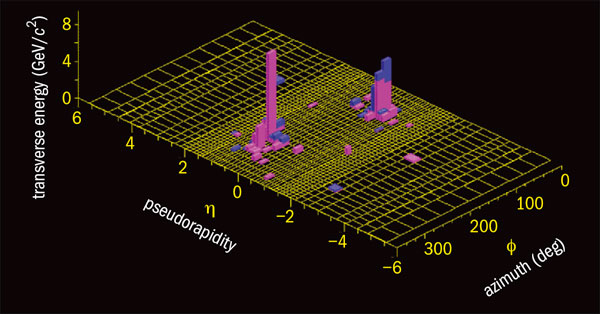

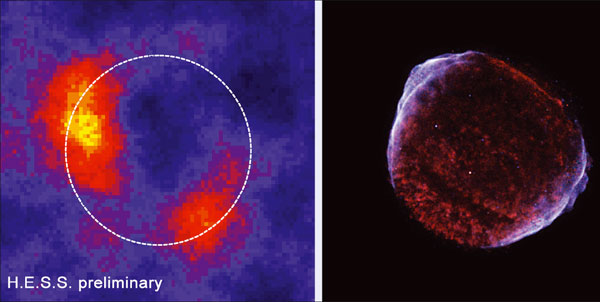

A key topic of every string conference within the last 10 years has been the gauge theory/gravity duality, which maps ordinary gauge theories to gravitational – i.e., string – theories. This year’s focus was mainly on the connection between systems that are strongly coupled – and in a sense hydrodynamical – and gravity. This leads to a stringy, dual interpretation of certain states in heavy-ion physics, such as the quark–gluon plasma. In particular, a link can be made between the decay of glueball states in QCD and the decay of black holes by Hawking radiation. While these ideas seem to work well on a qualitative level, quantitatively solid results are much harder to obtain because of the strongly coupled nature of the physics involved. The significance of this approach is the subject of ongoing debate and collaboration between heavy-ion physicists and string theorists.

A field of permanent activity and conceptual importance is that of black hole physics, to which string theory has made extremely important contributions during the past few years. As reviews at the conference showed, the identification and counting of microscopic quantum states in stringy toy models has been refined and made more precise, even to the level of quantum corrections. Moreover, fascinating connections between black holes and topological strings have been proposed, and testing those connections has been an important line of recent work. The results of topological string theory have also had a considerable impact on certain areas of mathematics, and have led to fruitful interactions with mathematicians.

Apart from these five focus areas, other subjects were reviewed at the conference. For example, there was a lecture on loop quantum gravity so that the string community could judge whether there might be connections to this seemingly different approach to quantum gravity.

Both during the conference and afterwards, many participants expressed the view that string theory continues to be a healthy, fascinating and important subject for theoretical work. This is despite the fact that the original main goal, namely to explain the Standard Model of particle physics, appears to be much harder to achieve (if, indeed, achievable at all) than initially hoped. In the final outlook talk, David Gross of the Kavli Institute for Theoretical Physics at the University of California, Santa Barbara, presented a picture of string theory as an umbrella that covers most of theoretical physics, similar to the way in which CERN has emerged as an umbrella for the worldwide community of particle physicists.