Since the first LHC collisions about two years ago, the ATLAS experiment has performed superbly – collecting quality data with high efficiency, processing the data in a timely way and preparing and publishing many new physics results. During this time the LHC has delivered more than 5 fb–1 of proton–proton collision data at √s=7 TeV. Using a good fraction of these data, the collaboration has carried out Higgs-boson searches, as well as searches beyond the Standard Model. While no new physics has been observed, ATLAS is setting stringent limits on the production cross-sections of new particles, including – but not limited to – the Higgs boson predicted by the Standard Model. Accurate measurements of Standard Model physics quantities have been performed covering many orders of magnitude in cross-section, with a precision often comparable to or exceeding that of the predictions.

These accomplishments have not been as easy as they may appear. Built on the tremendous success of the LHC, they are also the product of the strength and hard work of the 3000-member ATLAS collaboration. This article recounts the story of the first two years of the ATLAS physics programme, with an eye to some of the special occurrences and accomplishments along the way. However, it represents only the tip of the iceberg as far as the reach of the LHC physics programme is concerned. So far, ATLAS has collected just a small percentage of the total luminosity expected over the lifetime of the LHC.

Major progress

Before any physics analysis can be carried out, many members of the ATLAS collaboration work tirelessly to ready and tune the detectors, data acquisition, and trigger, to collect and reconstruct the data, and to check the quality of the data. Other members work to understand and characterize the various reconstructed objects seen in the detector. These are electrons, muons, τ leptons, photons, jets, missing transverse energy and identified heavy flavour. In two years ATLAS has gone from beginning to understand charged particles in the inner detector to using complex neural networks for flavour tagging. Algorithms have also progressed, for example from simple calorimeter-based definitions of missing transverse energy to more sophisticated definitions correcting for the calibrations and energies of the various objects.

The general complexity of the ATLAS results has followed a similar progression, from counting events for processes with large cross-sections to using advanced analysis techniques to extract small signals from large backgrounds. Some analyses have used complex unfolding techniques to compare measurements in the best way to parton-level predictions, and some have used modern statistical tools that allow the combination of many different channels into a single physics interpretation.

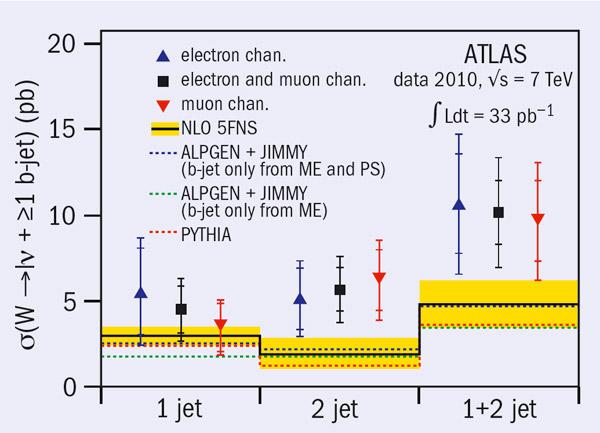

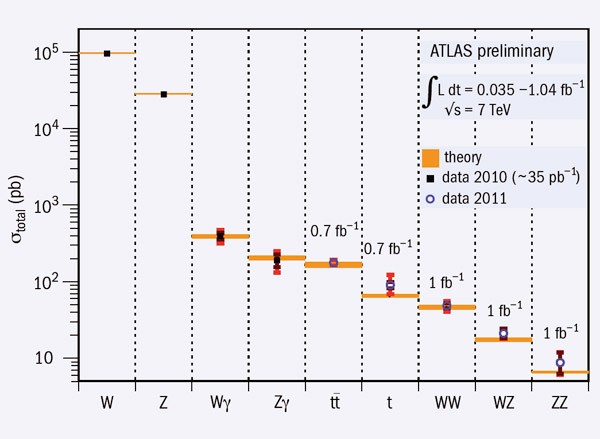

Figure 1 summarizes the production cross-sections for the main Standard Model processes and shows the luminosity used to measure these processes. The W and Z inclusive cross-sections and the Wγ and Zγ cross-sections were measured with the approximately 35 pb–1 from 2010. The tt̄ cross-section is based on a statistical combination of measurements using dilepton final states with 0.70 fb–1 of data and single-lepton final states with 35 pb–1. The other measurements were made with the 2011 dataset. After only two years of running ATLAS can now measure processes with cross-section times branching ratio down to around 10 pb.

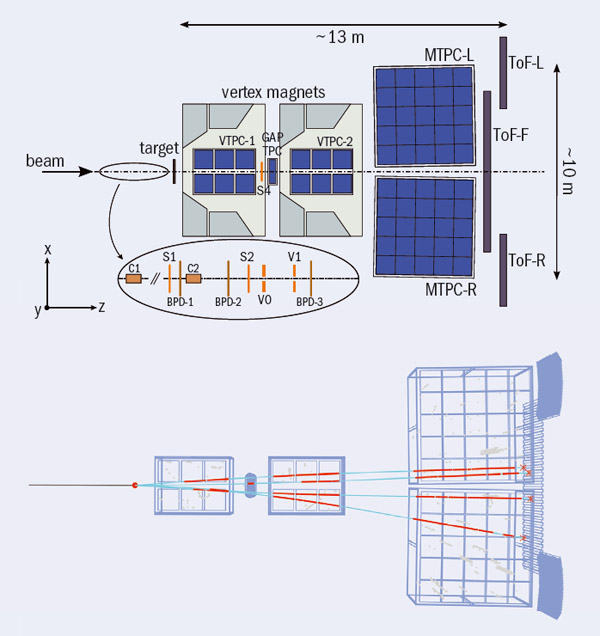

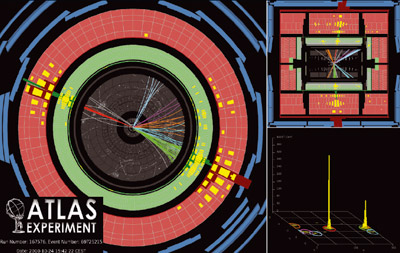

One of the by-products of the excellent LHC performance is another increase in complexity: the presence of multiple interactions within the same bunch crossing (pile-up). The 40 pb–1 of data recorded in 2010 had an average of 3 interactions per bunch crossing (<μ>), allowing good quality measurements in a relatively clean environment. The LHC run in 2011 was characterized by a rapid increase in instantaneous luminosity from the beginning of the machine operations in March, reaching <μ> of 10 in August. Figure 2 shows the complexity of an event with a Z→μμ candidate produced in a bunch crossing with 11 reconstructed proton–proton interaction vertices. The time for the integrated luminosity to double at the beginning of the run was less than one week. Since the end of May this year, the machine has regularly delivered more luminosity in one day than the total delivered in 2010.

First results

In the beginning, analyses focused mostly on understanding and measuring properties using single detectors. The first ATLAS publication on collisions reported the charged-particle multiplicities and distributions as a function of the transverse momentum pT and pseudo-rapidity η, analysing the data taken in December 2009 (√s=0.9 TeV). This allowed validation of the charged-particle reconstruction, providing important feedback on the modelling of the alignment of the detector as well as on the distribution of the material. These results, and those from a more detailed later study, were also used to tune the parameters of the Monte Carlo modelling of non-perturbative processes, which is now used to model the effect of the multiple interactions for the latest data.

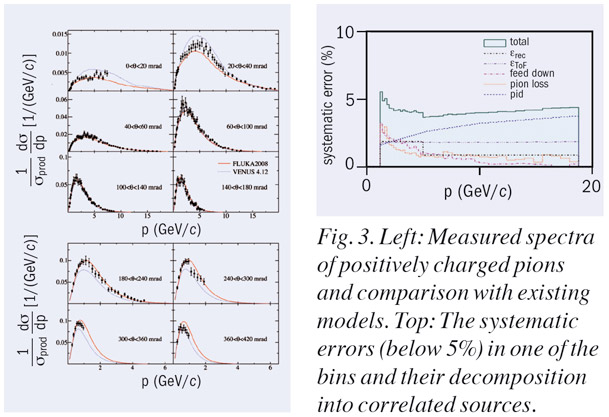

Using only 17 nb–1 of recorded data, the production cross-sections of inclusive jets and dijets have been measured over a range of jet transverse-momenta, up to 0.6 TeV. Figure 3 shows the event with the highest central dijet-mass recorded by ATLAS in 2010. These measurements allowed the first accurate tests of QCD at the LHC. The differential cross-sections showed remarkably good agreement with next-to-leading-order (NLO) perturbative QCD (pQCD) calculations, corrected for non-perturbative effects, in this unexplored kinematic regime. Given the good agreement of data and the Standard Model predictions, the study of dijet final states was used to set limits on the mass of new physics objects such as excited quarks Q*, excluding 0.30<mQ* <1.26 TeV at 95% confidence level (CL).

The first few hundred inverse nanobarns of data allowed early searches for new physics, looking for quark-contact interactions in dijet final-state events by studying the χ variable associated with a jet pair, where χ=exp|y*| and y* is the scattering rapidity evaluated in the centre-of-mass frame. The data were fully consistent with Standard Model expectations and allowed quark-contact interactions with a compositeness scale Λ<3.4 TeV to be excluded at 95% CL. Using about 100 times more data than the first jet results, ATLAS studied more complex multi-jet production, with up to six jets per event in the kinematic region of pTjet>60 GeV.

ATLAS was soon able to combine measurements from many detector components and reconstruct more of the Standard Model particles. A study of Standard Model production of gauge bosons (W± and Z0/γ*) was performed with the first 330 nb–1 of data, in the lν and ll final states (l=e or μ). Both the measurements of the inclusive production cross-section times branching fraction σW,Z × BR(W,Z→ lν, ll) and the ratio of the two agree well with next-to-next-to-leading-order (NNLO) calculations within experimental and theoretical uncertainties.

By combining measurements from the calorimeters and the central inner tracker, ATLAS was able to analyse the production of inclusive high-pT photons and perform extensive QCD studies. For this, additional complexity resides in the need to model and understand significant background contributions. These were estimated for this analysis from data, based on the observed distribution of the transverse isolation energy around the photon candidate. A comparison of results to predictions from NLO pQCD calculations again showed remarkable agreement.

An initial measurement of the production cross-section for top-quark pairs was already possible with just a small fraction of the 2010 data. Combining information from almost all detector systems, events were selected with either a single lepton produced together with at least four high-pT jets plus large transverse missing energy, or two leptons in association with at least two high-pT jets and large transverse missing energy. In this analysis ATLAS used b-jet identification algorithms for the first time – crucial for the rejection of the large backgrounds that do not contain b-quarks. A total of 37 single-lepton and 9 dilepton events were selected, in good agreement with Standard Model predictions.

The use of all of the 2010 data allowed ATLAS to study more complex quantities and distributions. For example, the W and Z differential cross-sections were measured as functions of the boson transverse-momentum, allowing more extensive tests of pQCD. Using the ratio of the difference between the number of the positive and the negative Ws to their sum, ATLAS measured the W charge-asymmetry as a function of the boson pseudo-rapidity. These results provided the first input from ATLAS on the fractions of u and d quark momentum of the proton.
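The charge asymmetry described above is a simple ratio of counts, and a minimal sketch may make the definition concrete. The function name and the event counts below are hypothetical and purely illustrative; they are not ATLAS numbers, and a real measurement would also correct for backgrounds and detector efficiencies.

```python
def charge_asymmetry(n_plus, n_minus):
    """Return (N+ - N-) / (N+ + N-) for one pseudo-rapidity bin."""
    total = n_plus + n_minus
    if total == 0:
        raise ValueError("no W candidates in this bin")
    return (n_plus - n_minus) / total


# Hypothetical W+ and W- candidate counts per pseudo-rapidity bin
# (illustrative values only, not measured data).
counts_per_bin = {
    "0.0-0.8": (1200, 800),
    "0.8-1.6": (1100, 700),
    "1.6-2.4": (900, 500),
}

for eta_bin, (n_plus, n_minus) in counts_per_bin.items():
    print(eta_bin, round(charge_asymmetry(n_plus, n_minus), 3))
```

Because the proton contains more u than d valence quarks, more W+ than W– are produced, so the ratio is positive; how it varies from bin to bin is what carries the information about the quark momentum fractions.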

At this stage, the analysis of W→τν and Z→ττ represented an important step in commissioning the selection of hadronic τ final states that are crucial in searches for new physics as well as for the Higgs boson. More accurate studies of top physics were also performed, from the inclusive cross-section for tt̄ pairs to a preliminary measurement of the top quark’s mass.

New experiences

The full 2010 dataset also offered the first concrete possibility to look for physics signals beyond the Standard Model over a wide spectrum of final states. Events with high-pT jets or leptons and with large missing transverse momentum were studied extensively to search for supersymmetric (SUSY) particles. Here, more complex variables were used, such as the “effective mass” (the sum of the transverse momentum of selected jets, leptons and missing transverse energy), which is sensitive to the production of new particles. No significant excess of events was found in the data and limits were set on the mass of squarks and gluinos, mq̃ and mg̃, assuming simplified SUSY models. If mq̃ = mg̃ and the mass of the lightest stable SUSY particle is mχ01=0, then the limit is about 850 GeV at 95% CL. Limits have been placed assuming other SUSY interpretations, such as minimal supergravity grand unification (MSUGRA) and the constrained minimal supersymmetric extension of the Standard Model (CMSSM).
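As an illustration of the “effective mass” variable, the sketch below simply adds up the quantities named above for a single event. The function name, the argument layout and the example values are assumptions made for this illustration; the actual analyses apply their own object selections (for instance, which jets count as “selected”) before forming the sum.

```python
def effective_mass(jet_pts, lepton_pts, missing_et):
    """Scalar sum (GeV) of selected jet pT, lepton pT and missing ET."""
    return sum(jet_pts) + sum(lepton_pts) + missing_et


# Hypothetical event: three selected jets, one lepton, plus missing
# transverse energy, all in GeV (illustrative values only).
event = {
    "jet_pts": [220.0, 140.0, 65.0],
    "lepton_pts": [45.0],
    "missing_et": 180.0,
}

print(effective_mass(**event))
```

Heavy new particles decaying to many energetic objects would populate the high tail of this distribution, which is why it is a useful discriminant against Standard Model backgrounds.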

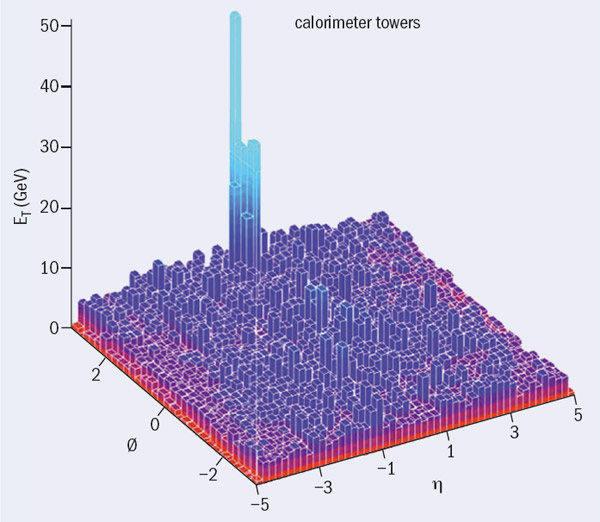

The 2010 run ended with a short period in November dedicated to lead-ion collisions with a centre-of-mass energy per nucleon √sNN = 2.76 TeV. This was certainly one of the most amazing experiences for ATLAS during the first two years of collisions at the LHC. As the online event display in the ATLAS control room brought up the first event images, the calorimeter plot showed many events where a narrow cluster of calorimeter cells with high-energy deposits (a jet) was poorly – or not at all – balanced in the transverse plane by equivalent activity in the back-to-back region (figure 4). The gut feeling was clear: this was the first direct observation of jet-quenching in heavy-ion collisions. A detailed analysis of the early lead-collision data studied the dijet asymmetry – defined as Aj = (ETj1–ETj2)/(ETj1+ETj2), where ETji is the transverse jet energy calibrated at the hadronic scale – as a function of the event “centrality”. This showed that the transverse energies of dijets in opposite hemispheres become systematically more unbalanced with increasing event “centrality”, leading to a large number of events that contain highly asymmetric dijets. Such an effect was not observed in proton–proton collisions, pointing to an interpretation in terms of strong jet-energy loss in a hot, dense medium.
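The dijet asymmetry defined above can be written down in a few lines. This is only a sketch: it assumes that j1 denotes the higher-energy jet of the pair, so that Aj runs from 0 (perfectly balanced) towards 1 (completely unbalanced), and the function name and example energies are hypothetical.

```python
def dijet_asymmetry(et_a, et_b):
    """Aj = (ET_j1 - ET_j2) / (ET_j1 + ET_j2), with j1 the leading jet."""
    leading, subleading = max(et_a, et_b), min(et_a, et_b)
    total = leading + subleading
    if total <= 0:
        raise ValueError("jet transverse energies must sum to a positive value")
    return (leading - subleading) / total


# Hypothetical jet pairs (GeV): one balanced, one strongly unbalanced,
# as might be seen when one jet loses energy in the medium.
print(dijet_asymmetry(110.0, 105.0))
print(dijet_asymmetry(120.0, 40.0))
```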

The early part of data-taking in 2011 was an extremely intense period. Already in June, ATLAS presented the first preliminary results on eight analyses using about 300 pb–1 of data, almost 10 times the integrated luminosity of 2010. This allowed more stringent limits to be placed on SUSY particles, heavy bosons W’ and Z’, new particles decaying to tt̄ pairs, and on the production cross-section of a Standard Model Higgs boson decaying to photon-pair final states. It also allowed the first limits on particles with masses above 1 TeV.

Using a similar dataset, ATLAS reported preliminary results on the production of single top at the LHC, looking in particular at the t-channel process, where a b-quark from the sea scatters with a valence quark. This process is particularly important, as any deviation from QCD predictions may indicate the presence of new physics beyond the Standard Model. The analysis is extremely difficult as the signal is hidden under a large irreducible background from W+jets. It requires complex methods that make use of the full kinematic information of the events, looking simultaneously at many different distributions.

Extending the search with 1 fb–1

While ATLAS was carrying out these analyses, the LHC completed delivery of the first inverse femtobarn, opening up a number of new physics channels. The collaboration quickly released results on a total of 35 physics analyses with these data, most of them having to deal with the increased level of pile-up.

Preliminary WW, WZ and ZZ diboson production cross-section measurements show an overall precision of about 15% (WW and WZ) or 30% (ZZ). These measurements, all consistent with the Standard Model predictions, represent an important foundation for searches for the Standard Model Higgs boson. Triple-gauge couplings have been studied and found to be in agreement with the Standard Model, allowing limits to be placed on the size of anomalous couplings of this kind.

The 2011 data allowed more sensitive searches for SUSY particles, using similar or more complex distributions than in 2010. Once more, the results are in good agreement with the Standard Model expectations and have again been interpreted in the MSUGRA/CMSSM models as well as in simplified models. These studies exclude squarks and gluinos in simplified models with masses less than about 1.08 TeV at 95% CL.

ATLAS has also performed searches for dijet, lepton–neutrino and lepton–lepton resonances. Figure 5 shows the invariant mass distribution for an electron and missing transverse-energy. These searches have placed limits on new heavy-quark masses, mQ* >1.92 TeV, and on the mass of W’ and Z’ predicted by a number of different models, mW’ > 2.15 TeV and mZ’ > 1.83 TeV, all at the 95% CL.

The 2011 data began to open up the search for the Standard Model Higgs boson. To cover the entire mass range, from about 110 GeV (the limit from the Large Electron Positron collider is 114.4 GeV at 95% CL) to the highest possible values (around 600 GeV), this exploration was conducted in several final states: H→ γγ; ττ; WW(*)→lνlν, lνqq; ZZ(*)→llll, llνν, llqq as well as H→bb produced in association with a W or Z.

In the analysis dedicated to the search for H→γγ processes, events with pairs of high-pT photons were selected and the photons combined to reconstruct the invariant mass. The accurate measurement of the direction of flight of the photons is crucial for obtaining high mass-resolution and hence a strong rejection of background processes, in particular, QCD diphoton production. This is possible in ATLAS thanks to the longitudinal segmentation of the electromagnetic calorimeter, which in addition allows a strong rejection of fake photons produced by QCD jets. This channel alone allowed exclusion at the level of about three times the cross-section predicted by the Standard Model.
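To see why the photon directions matter so much, it may help to write out the invariant mass of two massless particles in terms of transverse momentum, pseudo-rapidity η and azimuthal angle φ: m² = 2 pT1 pT2 (cosh Δη – cos Δφ). The sketch below evaluates this standard kinematic relation; the function name and the example photon kinematics are hypothetical and not taken from the ATLAS analysis.

```python
import math


def diphoton_mass(pt1, eta1, phi1, pt2, eta2, phi2):
    """Invariant mass (GeV) of two massless photons.

    Uses m^2 = 2 * pT1 * pT2 * (cosh(d_eta) - cos(d_phi)).
    """
    m_squared = 2.0 * pt1 * pt2 * (
        math.cosh(eta1 - eta2) - math.cos(phi1 - phi2)
    )
    # Guard against tiny negative values from floating-point rounding.
    return math.sqrt(max(m_squared, 0.0))


# Hypothetical photon pair: the same momenta reconstruct to different
# masses if the pseudo-rapidity of one photon is mismeasured.
nominal = diphoton_mass(60.0, 0.30, 0.0, 55.0, -0.40, 3.0)
shifted = diphoton_mass(60.0, 0.35, 0.0, 55.0, -0.40, 3.0)
print(nominal, shifted)
```

The Δη term is where the direction measurement enters: a small error in the pseudo-rapidity of either photon shifts the reconstructed mass, smearing out a narrow signal peak.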

The analysis dedicated to the search for H→WW*→ lνlν was based on the selection of high-pT lepton pairs, electrons and muons, produced in association with large transverse missing energy. Two independent final-state classes were considered, depending on whether 0 or 1 high-pT jets were reconstructed in the same event. The analysis revealed no excess events, excluding the production of a Standard Model Higgs boson with mass in the interval 154<mH<186 GeV at 95% CL and thereby enlarging the mass region already excluded by the Tevatron.

The golden channel H→ZZ(*)→4l is based on a conceptually simple analysis: the selection of events with isolated dimuon or di-electron pairs, associated with the same hard-scattering proton–proton vertex. ATLAS found the rate of 4-lepton events to be fully consistent with the expectations from background; the analysis excludes a Higgs boson produced with a cross-section close to that predicted by the Standard Model throughout nearly the entire mass interval from 200 to 400 GeV. No evidence for an excess of events has been found in any of the other analysed channels, allowing 95% CL exclusion limits to be placed for each of them.

Last, ATLAS used complex statistical methods to combine the information from all of these Higgs decay channels into a single limit. While the Standard Model does not predict the mass of the Higgs boson, it does predict the production cross-section and branching ratios once the mass is known. Figure 6 shows, as a function of the Higgs mass, the Higgs boson production cross-section excluded at 95% CL by ATLAS, in terms of the Standard Model cross-section. If the solid black line (the observed limit) dips below 1, then the data exclude the production of the Standard Model Higgs at 95% CL at that mass. If the solid black line is above 1, the production of a Standard Model Higgs cannot be excluded at that mass. As figure 6 shows, the data exclude the Standard Model Higgs boson in the mass range 146<mH<466 GeV at 95% CL, with the exception of the mass intervals 232<mH <256 GeV and 282<mH <296 GeV.
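The rule for reading such a limit plot, that a mass is excluded wherever the observed limit on the cross-section in units of the Standard Model prediction falls below 1, can be sketched as a simple scan. The function name, the mass points and the limit values below are invented for illustration and are not the ATLAS results; the sketch also ignores interpolation between scan points.

```python
def excluded_intervals(masses, observed_limits):
    """Group consecutive mass points whose observed limit is below 1.

    masses: ascending scan points (GeV).
    observed_limits: 95% CL limits on sigma / sigma_SM at each point.
    Returns a list of (low, high) tuples of excluded scan points.
    """
    intervals = []
    start = None
    previous = None
    for mass, limit in zip(masses, observed_limits):
        if limit < 1.0:
            if start is None:
                start = mass
            previous = mass
        elif start is not None:
            intervals.append((start, previous))
            start = None
    if start is not None:
        intervals.append((start, previous))
    return intervals


# Hypothetical scan (illustrative numbers only).
masses = [130, 140, 150, 160, 170, 180, 190]
limits = [2.1, 1.3, 0.9, 0.4, 0.5, 1.1, 0.8]
print(excluded_intervals(masses, limits))
```

Gaps in the excluded range, like the two intervals quoted above, appear wherever the observed line rises back above 1.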

This article has been able to present only a few of the ATLAS results using up to the first inverse femtobarn of data. In 2011 the experiment collected more than 5 fb–1, so the collaboration is working hard on the analysis of the new data in time for presentation of new results at the “winter” conferences early in 2012. The first results presented here represent only a few per cent of the total data ultimately expected from the LHC. We look forward to many more exciting and impressive years.

For information about all these results and more, see https://twiki.cern.ch/twiki/bin/view/AtlasPublic.