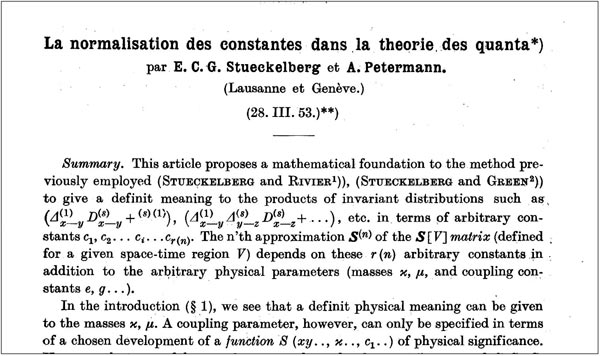

The origin of this conceptual revolution was the work in which these two theoretical physicists discovered that all quantities such as the gauge couplings (α_i) and the masses (m_j) must “run” with q², the invariant four-momentum of a process (Stueckelberg and Petermann 1951). It took many years to realize that this “running” not only allows the existence of a grand unification and opens the way to supersymmetry, but also finally produces the need for a non-point-like description of physics processes – the relativistic quantum-string theory – that should produce the much-needed quantization of gravity.

It is interesting to recall why this paper attracted so much attention. The radiative corrections to any electromagnetic process had been found to be logarithmically divergent. Fortunately, all divergences could be grouped into two classes: one had the property of a mass; the other had the property of an electric charge. If these divergent integrals were substituted with the experimentally measured mass and charge of the electron, then all theoretical predictions could be made “finite”. This procedure was called “mass” and “charge” renormalization.

Stueckelberg and Petermann discovered that if the mass and the charge are made finite, then they must run with energy. However, the freedom remains to choose the renormalization subtraction points. Stueckelberg and Petermann proposed that this freedom had to obey the rules of an invariance group, which they called the “renormalization group” (Stueckelberg and Petermann 1953). This is the origin of what we now call the renormalization group equations, which – as mentioned – imply that all gauge couplings and masses must run with energy. It was remarkable many years later to find that the three gauge couplings could converge, even if not well, towards the same value. This means that all gauge forces could have the same origin; in other words, grand unification. A difficulty in the unification was the new supersymmetry that my old friend Bruno Zumino was proposing with Julius Wess. Bruno told me that he was working with a young fellow, Sergio Ferrara, to construct non-Abelian Lagrangian theories simultaneously invariant under supergauge transformations, without destroying asymptotic freedom. During a nighttime discussion with André in 1977, in the experimental hall where the search for quarks was under way at the Intersecting Storage Rings, I told him that two gifts were in front of us: asymptotic freedom and supersymmetry. The first was essential for the experiment being implemented; the second was needed to make the convergence of the gauge couplings “perfect” for our work on the unification. We will see later that this was the first time that we realized how to make the unification “perfect”.

The muon g-2

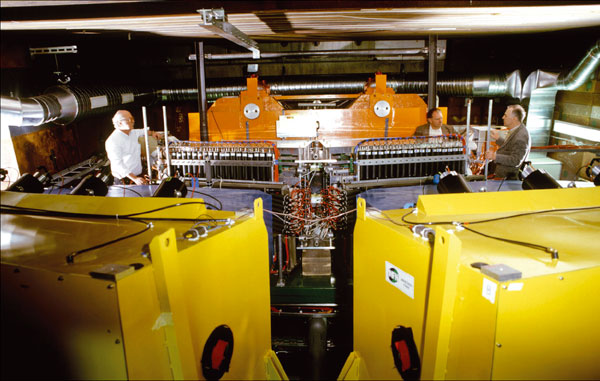

The second occasion for me to know about André came in 1960, when I was engaged in measuring the anomalous magnetic moment (g-2) of the muon. He had made the most accurate theoretical prediction, but there was no high-precision measurement of this quantity because technical problems remained to be solved. For example, a magnet had to be built that could produce a set of high-precision polynomial magnetic fields throughout as long a path as possible. This is how the biggest (6-m long) “flat magnet” came to be built at CERN with the invention of a new technology now in use the world over. André worked only at night and, because he was interested in the experimental difficulties, he spent nights with me working in the SC-Experimental Hall. It was a great help for me to interact with the theorist who had made the most accurate theoretical prediction for the anomalous magnetic moment of a particle 200 times heavier than the electron. The muon must surely reveal a difference in a fundamental property like its g-value. Otherwise, why is its mass 200 times greater than that of the electron? (Even now, five decades later, no one knows why.)

When the experiment at CERN proved that, at the level of 2.5 parts in a million for the g-value, the muon behaves as a perfect electromagnetic object, the focus of the problem shifted to a new question: why are there so many muons around? The answer lay in the incredible value of the mass difference between the muon and its parent, the π. Could another “heavy electron” – a “third lepton” – exist with a mass in the range of giga-electron-volts? Had a search ever been done for this third “lepton”? The answer was no. Only strongly interacting particles had been studied. This is how the search for a new heavy lepton, called HL, was implemented at CERN, with the Proton AntiProton into LEpton Pairs (PAPLEP) project, where the production process was proton–antiproton annihilation. André and I discussed these topics in the CERN Experimental Hall during the night shifts he spent with me.

The results of the PAPLEP experiment gave an unexpected and extremely strong value for the (time-like) electromagnetic form-factor of the proton, whose consequence was a factor 500 below the point-like cross-section for PAPLEP. This is how, during another series of night discussions with André, we decided that the “ideal” production process for a third “lepton” was (e+e–) annihilation. However, there was no such collider at CERN. The only one being built was at Frascati, by Bruno Touschek, who was a good friend of Bruno Ferretti and was another physicist who preferred to work at night. I had the great privilege of knowing Touschek when I was in Rome. He also became a strong supporter of the search for a “third lepton” with the new e+e– collider, ADONE. Unfortunately, the top energy of ADONE was 3 GeV and the only result that we could achieve was a limit of 1 GeV for the mass of the much desired “third lepton”.

Towards supersymmetry

Another topic that I discussed with André has its roots in the famous work with Stueckelberg – the running with energy of the fundamental couplings of the three interactions: electromagnetic, weak and strong. The crucial moments here were the European Physical Society (EPS) conferences in York (1978) and Geneva (1979). In my closing lecture at EPS-Geneva, I said: “Unification of all forces needs first a supersymmetry. This can be broken later, thus generating the sequence of the various forces of nature as we observe them.” This statement was based on work with André where in 1977 we studied – as mentioned before – the renormalization-group running of the couplings and introduced a new degree of freedom: supersymmetry. The result was that the convergence of the three couplings improved a great deal. This work was not published, but was known to a few, and it led to the Erice Schools Superworld I, Superworld II and Superworld III.
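As a rough illustration of the running referred to above, the one-loop renormalization-group evolution of the three inverse gauge couplings can be sketched as follows. This is a textbook form, not the unpublished 1977 calculation: the symbols μ (energy scale), M_Z (reference scale) and b_i, together with the coefficient values quoted (hypercharge in the usual SU(5) normalization), are assumptions taken from the standard literature rather than from this article.

```latex
% One-loop running of the inverse gauge couplings, i = 1, 2, 3
\[
  \alpha_i^{-1}(\mu) \;=\; \alpha_i^{-1}(M_Z) \;-\; \frac{b_i}{2\pi}\,\ln\frac{\mu}{M_Z}
\]
% Assumed textbook coefficients (SU(5)-normalized hypercharge):
% without supersymmetry (SM) and with minimal supersymmetry (MSSM)
\[
  (b_1, b_2, b_3)_{\mathrm{SM}} = \left(\tfrac{41}{10},\; -\tfrac{19}{6},\; -7\right),
  \qquad
  (b_1, b_2, b_3)_{\mathrm{MSSM}} = \left(\tfrac{33}{5},\; 1,\; -3\right)
\]
```

Plotted against ln μ, each inverse coupling is a straight line. With the second set of coefficients the three lines come much closer to meeting at a single point than with the first, which is the kind of improved convergence described above.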

This is how we arrived at 1991, when it was announced that the search for supersymmetry had to wait until the multi-tera-electron-volt energy threshold became available. At the time, a group of 50 young physicists was engaged with me on the search for the lightest supersymmetric particle in the L3 experiment at CERN’s Large Electron Positron (LEP) collider. If the new theoretical “predictions” were true, then there was no point in spending so much effort in looking for supersymmetry-breaking in the LEP energy region. Reading the relevant papers, André and I realized that no one had ever considered the evolution of the gaugino mass (EGM). During many nights of work we improved the unpublished result of 1977 mentioned above: the effect of the EGM was to bring down the energy threshold for supersymmetry-breaking by nearly three orders of magnitude. Thanks to this series of works I could assure my collaborators that the “theoretical” predictions on the energy level where supersymmetry-breaking could occur were perfectly compatible with LEP energies (and now with LHC energies).

Finally, in the field of scientific culture, I would like to pay tribute to André Petermann for having been a strong supporter of the establishment of the Ettore Majorana Centre for Scientific Culture in Erice. In the old days, before anyone knew of Ettore Majorana, André was one of the few people who knew about Majorana neutrinos and that relativistic invariance does not give any privilege to spin-½ particles, such as the privilege of having antiparticles, all spin values having the same privilege. In all of my projects André was of great help, encouraging me to go on no matter what arguments the opposition presented, arguments that he often found to be far from rigorous.