Long, long ago, in a far, far different universe, there were equal amounts of matter and antimatter. At least, this is the most popular conception. Why only matter remains has been a nagging question for decades.

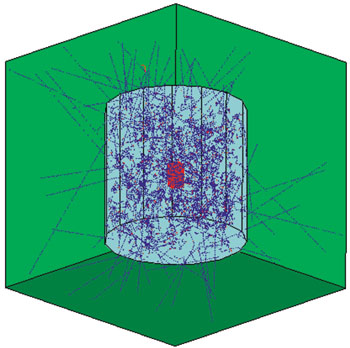

We normally think of antimatter as a sort of inverted matter, behaving the same way as matter does but with reversed properties, such as electric charge. How nature could choose matter over antimatter is puzzling. A seemingly obscure violation of a symmetry principle, called CP, may hold part of the key. As we approach the close of the millennium, laboratories around the world are poised to enter a new era by studying this phenomenon in a new sector: B mesons, particles containing the fifth quark, variously denoted as “beauty”, “bottom” or simply “b”.

Symmetry principles

A major theme in particle physics for the last half-century has been symmetry relations. These came to the fore in the mid-1950s when “parity” violation was discovered. Parity conservation is the apparently innocuous proposition that the laws of physics are the same, or symmetric, when spatially inverted (the parity operation, P), as in a mirror-image world.

Prompted by the realization of T D Lee and C N Yang that there was no experimental evidence that weak interactions conserved parity, C S Wu and collaborators discovered in 1957 that weak interactions do not conserve parity in the radioactive decay of cobalt-60. A stunning development was that weak interactions depend on the specific “handedness” of particles. In modern terms, this is because the charged W carrier particle only couples left-handedly.

The realization soon followed that another symmetry, charge conjugation (C), was violated too. This is the operation of switching particles to their antiparticles, and vice versa. However, C violation occurred in such a way that the combined operation of charge conjugation and parity (CP) restored the symmetry. Thus the decays of mirror-inverted cobalt-60 antinuclei, for example, should behave the same way as those of cobalt-60.
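The way P and C each fail while CP survives can be illustrated with a toy model. The sketch below assumes an idealized rule, not spelled out in the text above, that the W couples only to left-handed particles and right-handed antiparticles; the names `State`, `parity`, `charge_conjugation` and `couples_to_w` are hypothetical and chosen only for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class State:
    """Toy description of a particle: matter or antimatter, plus handedness."""
    antiparticle: bool
    handedness: str  # "L" or "R"


def parity(s):
    # P: mirror inversion flips the handedness and nothing else.
    return State(s.antiparticle, "R" if s.handedness == "L" else "L")


def charge_conjugation(s):
    # C: swap particle and antiparticle, leaving the handedness alone.
    return State(not s.antiparticle, s.handedness)


def couples_to_w(s):
    # Assumed toy rule: left-handed particles and right-handed
    # antiparticles take part in charged weak interactions.
    return s.handedness == ("R" if s.antiparticle else "L")


start = State(antiparticle=False, handedness="L")
images = {
    "P": parity(start),
    "C": charge_conjugation(start),
    "CP": charge_conjugation(parity(start)),
}
for name, image in images.items():
    print(name, "image couples to W:", couples_to_w(image))
```

In this toy picture the P image and the C image of a weakly interacting state do not interact, while the CP image does, which is why CP looked like a good symmetry once P and C had fallen.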

Although P and C were not always good symmetries, the combined CP operation appeared to be respected by nature. CP was a consolation prize for physicists. At least it seemed so until 1964. Less than a decade after the fall of parity symmetry, physicists were jolted again when CP invariance also fell by the wayside. A landmark experiment, led by James Cronin and Val Fitch, saw a rare neutral K meson decay that should be prohibited if CP is a perfect symmetry. The effect is small: about 1 in 500 decays.

Parity violation could be attributed to an intrinsic feature of weak interactions, but CP violation was a mystery. The effect was very small and hard to study. Was it a feature of weak interactions alone, a sign of a new type of interaction or something completely different? While the origin of CP violation remained unexplained, within a few years the renowned Soviet physicist Andrei Sakharov realized that CP violation was a necessary ingredient for an eventual explanation of how an initially matter-antimatter symmetric universe could evolve into a matter-dominated one.

It took some time for Sakharov’s suggestion to be appreciated, but, in the end, CP violation went from being an unpleasant wart on the face of weak interactions to a critical component of an explanation of why we exist.

Quark mixing?

Of the many ideas offered to explain CP violation, one remarkably bold proposition was based on quark mixing. In this hypothesis, which was proposed by N Cabibbo in 1963, the quantum states of quarks with definite mass are mixtures of the states that the weak interaction “sees”.

With only four quarks, the rotation matrix that transforms one set of quark states into the other is restricted to real numbers and cannot accommodate CP violation. In 1972, eight years after the discovery of CP violation, M Kobayashi and T Maskawa proposed that quark mixing be generalized to cover three generations of quark pairs. With six quarks, the rotation matrix, now known as the Cabibbo-Kobayashi-Maskawa (CKM) matrix, can contain a complex phase that cannot be removed by redefining the quark states, and this could account for the CP violation observed in neutral K mesons.
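The step from four quarks to six can be checked with a standard parameter count. This is a minimal sketch that assumes the mixing matrix is an N x N unitary matrix for N generations, and that 2N - 1 relative phases can be absorbed by redefining the phases of the 2N quark states. The function name `mixing_parameters` is hypothetical.

```python
def mixing_parameters(generations):
    """Return (rotation angles, physical phases) for N quark generations."""
    n = generations
    total = n * n              # real parameters of an N x N unitary matrix
    angles = n * (n - 1) // 2  # parameters of a purely real rotation matrix
    phases = total - angles    # everything else is a phase: N(N+1)/2
    removable = 2 * n - 1      # phases absorbed into the 2N quark states
    return angles, phases - removable


for n in (2, 3):
    angles, phases = mixing_parameters(n)
    print(f"{n} generations: {angles} angle(s), {phases} physical phase(s)")
```

For two generations the count gives one angle and no phase, so the matrix can be made entirely real. For three generations it gives three angles and one phase, which is the single complex phase available to carry CP violation.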

The bold proposal did not attract much attention. After all, only three quarks were known at the time. There was speculation about a fourth quark, but even the quark model itself was regarded with some lingering suspicion. Kobayashi and Maskawa were advocating not one new quark but three.

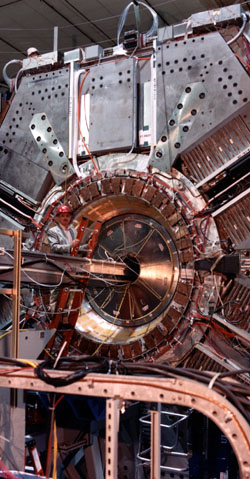

The picture began to change quickly in 1974 when the J/psi was discovered and the second quark generation completed. In a surprisingly short time, Kobayashi and Maskawa’s third generation was also exposed: the tau lepton appeared in 1975, and then the b quark surfaced with the upsilon discovery in 1977. The wait was long, however, before its partner, the top quark, definitively showed itself in 1995.

Quark mixing became an integral part of the Standard Model of particle physics, and the hypothesis of Kobayashi and Maskawa became a leading candidate to describe CP violation in the only place it has so far been observed, neutral K mesons.