Rare processes and the violation of CP symmetry provided the focus for the recent Theory Workshop at the DESY Laboratory in Hamburg. As emphasized by Chris Quigg (Fermilab) in the opening lecture, discrete symmetries (box 1) and their violation, in particular that of CP symmetry, play an important role in a deeper understanding of nature at both very small and very large distances. Studying these violations in rare processes may give hints of what lies beyond the Standard Model of particle physics.

In the Standard Model, CP violation is attributed to quark transitions, which are described by the three-dimensional Cabibbo-Kobayashi-Maskawa (CKM) matrix. The classic effect in the decays of neutral kaons into two pions, which was first seen in 1964, is attributed to “indirect” CP violation through the mixing of the neutral kaon and its antiparticle (box 2). This type of violation is usually characterized by a small parameter, ε, which is measured to be roughly 2.3 × 10⁻³. However, the Standard Model also allows “direct” CP violation, governed by quark mechanisms involving the exchange of the sixth “top” quark.

The theoretical status of these effects was summarized by Matthias Jamin (Heidelberg). Refined gluon corrections and a more accurate top quark mass (174 ± 5 GeV) from the CDF and D0 collaborations at Fermilab have both considerably improved the evaluation of CP violation parameters. While indirect CP violation in the Standard Model is consistent with experimental data, large uncertainties currently preclude a precise prediction of the ratio of direct to indirect CP violation.

Despite intensive efforts by theorists, estimates of this ratio (which is known in the trade as ε′/ε) by various groups range between 5 × 10⁻⁴ and 30 × 10⁻⁴.

As discussed by Guido Martinelli (Rome), Laurent Lellouch (Marseille) and Amarjit Soni (Brookhaven), advanced numerical lattice calculations could considerably improve the estimates in the coming years. However, as stressed by Jamin, it is important to develop further the existing analytical tools in order to confront the lattice results.

Difficult measurements

The experimental situation for ε′/ε, described by Martin Holder (Siegen), has improved considerably in the past two years thanks to measurements by the KTeV collaboration at Fermilab, (28 ± 4) × 10⁻⁴, and the NA48 collaboration at CERN, (14 ± 4) × 10⁻⁴. These measurements confirm the previous result of the NA31 collaboration at CERN, (23 ± 7) × 10⁻⁴, that ε′/ε is not zero, confidently ruling out certain hypotheses.

Also taking into account the older inconclusive measurement of the E731 collaboration, (7 ± 5) × 10⁻⁴, one arrives at the world average of (19 ± 3) × 10⁻⁴. In view of the spread in values obtained by various experimental groups, the resulting small error should be treated with caution.

Within the next few years the experimental situation should improve considerably through the new data from KTeV and NA48, and in particular from the KLOE experiment at DAFNE in Frascati.

New channels

While the theoretical estimates of e¢/e in the Standard Model are compatible with the experimental data within the theoretical and experimental uncertainties, there is still a lot of room for new physics. As discussed by Luca Silvestrini (Rome), important new contributions are still possible within supersymmetric (box 3) extensions of the Standard Model.

The present bounds on CP violation in various processes already give very important limits for the masses and weak couplings of supersymmetric particles. In particular, the pattern of the masses of squarks – the supersymmetric partners of quarks – is severely restricted. However, in spite of these constraints, large supersymmetric effects in CP-violating processes are possible.

It is important to study CP-violating decays and CP-conserving rare decays that are theoretically far cleaner than those traditionally studied. As stressed by Gino Isidori (Frascati), a “gold-plated” decay in this respect is that of the long-lived kaon into a neutral pion, neutrino and antineutrino, proceeding almost exclusively through direct CP violation. The small predicted branching ratio within the Standard Model (3 × 10⁻¹¹) and the presence of neutrinos and the neutral pion make the measurement of this decay a formidable challenge.

On the other hand, in certain supersymmetric models the branching ratio could be one order of magnitude higher. Most important is that the newly approved KOPIO experiment at Brookhaven should be able to measure this decay in the first half of this decade even if the branching ratio is at the predicted level. There are also plans to measure this decay at Fermilab and at KEK in Japan. The optimism in measuring this branching ratio is strengthened by the observation, dating from 1997, of one event in the CP-conserving decay of the charged kaon into a charged pion, neutrino and antineutrino by the E787 experiment at Brookhaven.

The branching ratio for this rare decay as of the end of 2000 is around 1.5 × 10⁻¹⁰ – slightly higher but fully compatible with expectations. As this decay is also theoretically very clean, the improved measurements of its branching ratio expected in the coming years at Brookhaven, and later at Fermilab, will provide powerful constraints on the elements of the CKM quark transition matrix and the parameters of new physics.

Beauty quark

In the coming years, some of the most promising tests of the Standard Model and its extensions will come from studying the decays of B-mesons (containing the fifth – “beauty” – quark) into strange hadrons and either a photon or a lepton-antilepton pair. As reviewed by Christoph Greub (Bern), refined calculations in recent years of gluon corrections and new physics contributions, in particular in supersymmetric models, will allow for stringent tests of the theory once the experimental branching ratios become precise.

The channel with the final photon was observed in 1993 by the CLEO collaboration at Cornell, and these data have been improved considerably since by CLEO and by the ALEPH collaboration at CERN. Recently the efforts to measure this channel precisely have been joined by the new B-factories at SLAC (Stanford) and KEK (Japan), so that in a few years a rather precise branching ratio should become available.

However, as emphasized by Greub, the available data, while being consistent with current expectations, already put powerful constraints on supersymmetric extensions. The second channel, with a muon-antimuon pair in the final state, should be observed this year at the B-factories and at Fermilab. For new physics, this is even more interesting than the photon-yielding decay.

Larger effects

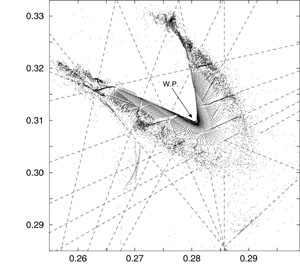

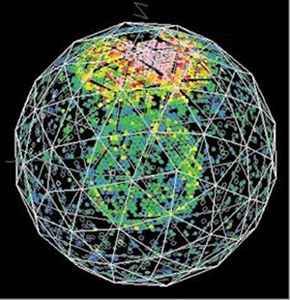

While CP violation has been observed so far only in kaon decays, where the effects are rather small, much larger effects are predicted for B-mesons. As stressed by Roy Aleksan (Saclay), Yosef Nir (Weizmann) and Robert Fleischer (DESY), there are several decays where measurements should fix CP violation with almost no hadronic uncertainties. Here the central role is played by the “gold-plated” decay of the Bd-mesons (bound states of a b-antiquark and a down quark) into a short-lived kaon and a J/ψ (charm quark-antiquark bound state). The corresponding CP-violating asymmetry is parametrized by an angle, β, in the so-called unitarity triangle, which is related to the CKM matrix.

The most recent data from the BaBar and Belle experiments at SLAC and KEK respectively, which were reviewed by Aleksan, give values of sin 2β somewhat lower than expected and lower than earlier measurements by the CDF collaboration at Fermilab. The three experiments taken together give sin 2β = 0.42 ± 0.24, compared with the Standard Model expectation of sin 2β = 0.7 ± 0.15, as discussed by Nir and Ali (DESY). Clearly, within the experimental uncertainties, the measured value is consistent with the expectation, which relies on, among other inputs, the observed CP violation in kaon decays and is therefore subject to theoretical uncertainties.
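How consistent the two numbers are can be estimated with a one-line calculation. The sketch below assumes that the measured and expected values are independent and that both errors are Gaussian, which is a simplification for the theoretical uncertainty in particular; the helper name `pull` is purely illustrative.

```python
import math


def pull(measured, sigma_measured, expected, sigma_expected):
    """Difference between two values in units of their combined error."""
    return (measured - expected) / math.hypot(sigma_measured, sigma_expected)


# Combined measurement and Standard Model expectation quoted in the text.
sin2beta_pull = pull(0.42, 0.24, 0.70, 0.15)
print(f"pull = {sin2beta_pull:.2f} standard deviations")
```

Under these assumptions the measurement lies about one standard deviation below the expectation, which is why the text can call the two consistent.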

On the other hand, as stressed by Ali, Nir and Silvestrini, improved measurements of sin 2β significantly below 0.5 would signal the presence of new physics contributions – in particular new CP-violating phases.

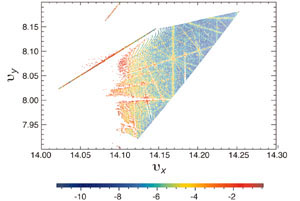

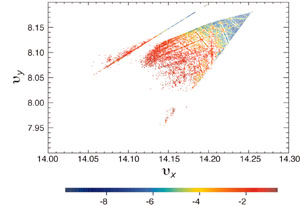

The large variety of CP-violating asymmetries in B-decays should allow for decisive tests of the Standard Model and its extensions. Other CP-violation parameters could be measured, initially by BaBar and Belle in the coming years, as reviewed by Aleksan. The decays of the heavier Bs meson, containing a strange quark, will open up more possibilities. These measurements can only be done by the dedicated experiments LHCb at CERN and BTeV at Fermilab around the year 2005. Several strategies were reviewed by Fleischer. However, he also emphasized that the two-body decays of Bd mesons into pions and kaons, measured first by CLEO at Cornell and now studied by BaBar and Belle, are likely to provide very valuable constraints despite some hadronic uncertainties.