More than 900 physicists participated in the 31st International Conference on High-Energy Physics (ICHEP2002), held in Amsterdam on 24-31 July. The Dutch National Institute for Nuclear Physics and High Energy Physics (NIKHEF) organized the conference, and almost all of its graduate students took part as assistants to the chairpersons, convenors or speakers. The participants stayed all over the city, and converged on the RAI conference centre each morning – easily distinguished by their bright orange conference bags. In the tradition of the Rochester conferences, the subjects were wide-ranging, with a mixture of new results and review talks providing a comprehensive overview of high-energy physics today and directions for the future. Social events were organized in several beautiful old and new buildings, including the conference dinner in the Amsterdam opera house, and a public lecture in the auditorium of the University of Amsterdam, delivered by the winner of the 1999 Nobel Prize for Physics, Professor Gerard ’t Hooft of Utrecht University.

Collider physics

The conference began with three days of parallel sessions. After a day off on Sunday, another three days of plenary sessions followed. Highlights were the results on CP violation in systems composed of bottom quarks, neutrino oscillations, and the anomalous magnetic moment of the muon.

The plenary sessions started off with reports from the first year of Run IIa operation at Fermilab’s Tevatron. The CDF and D0 detectors have undergone major renovations to take full advantage of the new data set, and the Tevatron is playing its part, with luminosity steadily climbing towards its design value. One exciting development for CDF has been the successful commissioning of a new impact parameter trigger, which uses the newly installed silicon vertex tracker to tag hadronic B-decays, with impressive online reconstruction. Franco Bedeschi of Pisa, reporting in the plenary session, demonstrated the effectiveness of this trigger, showing a signal peak of 33 B-candidates decaying to two charged hadrons. Boston University’s Meenakshi Narain presented the upgraded D0 detector, which has also replaced major system components and is operating with completely new trigger configurations. Both detectors were able to showcase first results at the new centre-of-mass energy of 1.96 TeV. The results on W- and Z-physics, B-physics, charm and jet physics show that everything is in working order, and we can look forward to exciting new data in the next few years. This includes looking for possible experimental signatures of physics beyond the Standard Model, as discussed by Robert McPherson from the University of Victoria, Canada. This talk drew together data from CERN’s Large Electron-Positron (LEP) collider, the Tevatron and DESY’s HERA collider to outline a roadmap towards possible signs of new physics. One new and unusual form this could take is that of the so-called “little Higgs” theories, as discussed by Martin Schmaltz of Boston. These theories aim to solve the famous hierarchy problem (whereby the forces of nature appear to operate at vastly different and seemingly arbitrary energy scales) and produce a consistent extension of the Standard Model valid up to the 10 TeV range. This new approach is gaining fans in the theoretical community, and is an exciting development to watch out for.

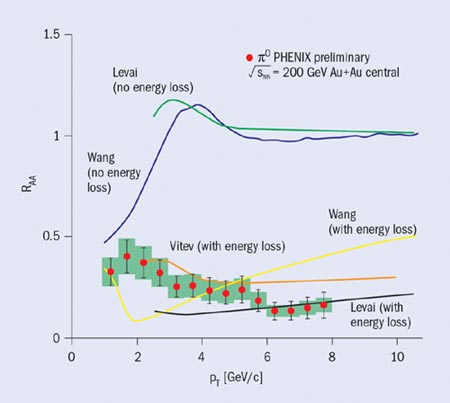

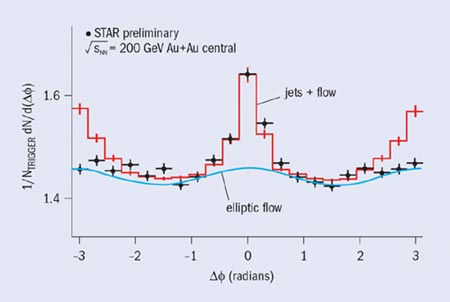

For the field of heavy-ion collisions, John Harris of Yale presented an overview of the various experimental signals for the quark-gluon plasma, while Glasgow’s Mark Alford discussed quark matter at high density and temperature, emphasizing the possible phases of the theory of strong interactions, quantum chromodynamics (QCD). He also reviewed the status of theoretical approaches such as lattice gauge calculations, and technical issues such as the necessary resummation of so-called hard thermal loops in finite temperature field theory. Several other technical issues, results of experiments at CERN, and results and plans for the RHIC accelerator at Brookhaven, as well as the outlook for experiments at CERN’s Large Hadron Collider (LHC) were discussed in parallel sessions convened by Paolo Giubellino of Turin and Raimond Snellings of NIKHEF.

Particle symmetries

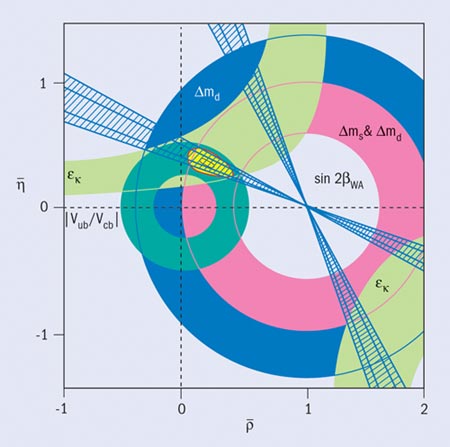

The parallel session on CP violation kicked off with a presentation of the result from the final analysis of data from the NA48 experiment at CERN, and recent results from Fermilab’s KTeV, before moving on to the latest results from the B-factory experiments BaBar at SLAC in the US and BELLE at KEK in Japan. This reflects the culmination of great achievements in the kaon sector of CP violation – marked by increasingly precise experimental results yet with interpretations blurred by strong interaction effects in the K-meson system – and the opening of the exciting beauty era, where many effects are large and have a cleaner interpretation.

The electron-positron colliders at SLAC and KEK have delivered between them around 180 million b quark-antiquark pairs. To put this in perspective, this is already more than a factor of 40 above the total seen at LEP – and the data are being put to good use. In the plenary session, Masanori Yamauchi of KEK presented the new results from BELLE, and Jean Karyotakis of LAPP in Annecy, France, showcased the work from BaBar. Both experiments have updated measurements of sin 2β (obtained from the asymmetry between neutral B and anti-B meson decay rates), and in addition are starting to explore the more challenging channels, such as B-decays into charged pion pairs, where the two experiments currently show intriguingly different asymmetries and new data are eagerly awaited.

Data on rare decay modes were also presented. As Yossi Nir of the Weizmann Institute in Rehovot, Israel, summarizing for the plenary session, pointed out, the study of CP violation is now firmly experiment driven. He placed the results in theoretical context, illustrating that the constraints on the CKM quark-mixing matrix from CP-violating experiments are more powerful than those from CP-conserving measurements. However, the Standard Model description of CP violation in the B and K sectors still looks healthy. Nir emphasized the importance of future measurements in processes where the contribution from new physics mechanisms is expected to be enhanced, such as the forthcoming electric dipole moment experiments.

In the parallel session on heavy quarks, convened by Sinéad Ryan of Dublin’s Trinity College and Elisabetta Barberio of the Southern Methodist University, the need for precision lattice calculations in heavy-quark physics was mentioned in many experimentalists’ talks. This is being addressed by the lattice community with calculations of a range of important quantities such as quark masses, unitarity triangle parameters, decays and meson-mixing parameters. New lattice calculations were discussed, and a new method for determining heavy-quark masses was reported. In spectroscopy, and for many other quantities, the largest uncertainty is now quenching (in the quenched approximation valence quarks are treated exactly, and the sea quarks are treated as a mean field). The first steps towards removing this approximation have already been taken, and the next few years will see remarkable progress in lattice calculations and a new era of precision B-physics from the lattice.

In the plenary sessions, the lattice calculations were summarized by Laurent Lellouch of Marseilles, who discussed the various approximations, treatment of heavy quarks and the use of chiral perturbation theory for extrapolations in the light-quark sector. Achille Stocchi of Orsay summarized experimental results on heavy-hadron physics, including spectroscopy, lifetimes and decay modes. He stressed the richness of charmed baryon spectroscopy, where Cornell’s CLEO experiment dominates and 22 charmed baryons have already been found. A further result from CLEO was the observation of the upsilon-1D state, which is the first new narrow b-anti-b state to be observed in 19 years. He also emphasized how the BaBar and BELLE results in the B-sector have led to a situation where one now has to look for precision effects.

Neutrinos

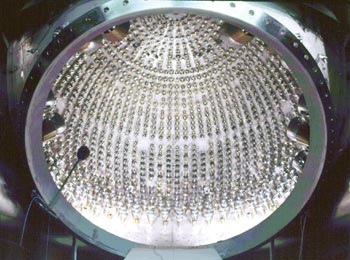

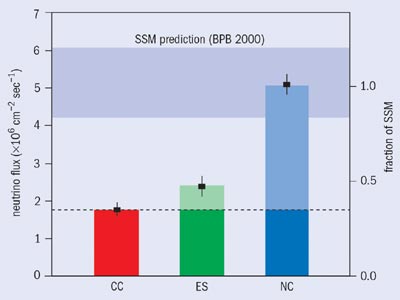

In the parallel session on neutrinos, as well as in the plenary talk by Sussex University’s David Wark, the Sudbury Neutrino Observatory (SNO) results were highlighted. The data show convincingly, and without reference to other experiments, evidence for solar neutrino oscillations, in particular those of electron neutrinos into other types. The data can be combined with new Superkamiokande results on spectral distortions and day-night asymmetries of elastic neutrino interactions to constrain the oscillation parameters and mass-squared difference between the neutrino types. The strongly favoured region lies close to maximal mixing, with maximal mixing itself disfavoured. The possibility of oscillation to a fourth (purely sterile) neutrino is excluded (at the 5 sigma level). SNO has entered a new phase of data-taking where two tonnes of salt have been added to the heavy water to increase the neutron capture efficiency. The precious heavy water will be purified in 2003 via a process of reverse osmosis, and SNO will enter a third phase of data-taking with discrete neutron counters. Many forthcoming experiments that will further probe this region were presented. These included KamLAND, designed to detect neutrinos originating from commercial nuclear reactors, and the forthcoming BOREXINO experiment.

Another oscillation regime is that of atmospheric muon neutrinos, probably oscillating into tau neutrinos. Results were presented from the KEK to Kamioka (K2K) long baseline experiment, which together with most other experiments currently points to a global picture with maximal mixing in the muon-to-tau neutrino oscillation sector. The outstanding issue of the discrepant results from the LSND experiment at Los Alamos will be investigated by MiniBooNE at Fermilab, which showed pictures from first data-taking. Speakers looked forward to future results from experiments such as MINOS, OPERA and Hyper-K in discussions of this very active field. The current status was reviewed in the talk of Concha Gonzalez-Garcia of Stony Brook, Valencia and CERN, who reminded us how much has changed since the assumptions of 10 years ago, when the solar neutrino solution was believed to be naturally a small mixing angle, and the atmospheric neutrino anomaly was seen as a possible experimental problem. She proceeded to summarize the beautiful advances since then in both experiment and theory, and also discussed the implications – the most direct and yet striking one being the existence of physics beyond the Standard Model. Among the possible scenarios is the idea that leptogenesis coming from CP violation in heavy-lepton decays in the early universe may be transformed into a baryon-antibaryon asymmetry via sphalerons (“lumps” in the field energy where matter and antimatter can be created) at the electroweak energy scale.
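For readers who want to see how a mixing angle and a mass-squared difference translate into an oscillation probability, the standard two-flavour vacuum formula can be sketched in a few lines. This is an illustration only, not the analysis used by any of the experiments: the function name is hypothetical, and the mass-squared difference and beam energy in the example call are assumed values chosen for illustration. Only the 250 km KEK-to-Kamioka baseline reflects the real experiment.

```python
import math


def oscillation_probability(sin2_2theta, dm2_ev2, baseline_km, energy_gev):
    """Two-flavour vacuum oscillation probability.

    P = sin^2(2*theta) * sin^2(1.267 * dm2 * L / E),
    with dm2 in eV^2, L in km and E in GeV.
    """
    phase = 1.267 * dm2_ev2 * baseline_km / energy_gev
    return sin2_2theta * math.sin(phase) ** 2


# Illustrative inputs (assumptions, not results reported at the conference):
# maximal mixing, dm2 = 2.5e-3 eV^2, a 250 km baseline and a 1.3 GeV beam.
p = oscillation_probability(1.0, 2.5e-3, 250.0, 1.3)
print(f"muon-neutrino disappearance probability: {p:.2f}")
```

With maximal mixing the probability reaches 1 whenever the phase equals an odd multiple of π/2, which is why the ratio of baseline to energy matters so much when designing a long baseline experiment.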

Strong interactions

Naomi Makins of Illinois summarized QCD at low momentum transfer (Q²), discussing current electron-nucleon deep-inelastic scattering experiments at DESY, including the spin programme and future programmes at CERN (with the COMPASS experiment) and Brookhaven. In very lively discussions in the soft QCD parallel sessions, many (mainly experimental) results were submitted on a variety of topics such as Bose-Einstein condensation, colour flow, deep-inelastic spin physics and diffraction in high-energy processes. These represent activities that investigate QCD dynamics at the confinement scale in many different ways.

Theoretical and experimental views of QCD at high energy were discussed by Stefano Frixione of CERN and Ken Long of Imperial College, London. From the theoretical side, considerable progress has been made in implementing higher-order computations in the analysis of jets and heavy-flavour production. The QCD analyses of the experiments are consistent, leading to an accurate determination of the strong coupling constant, αs.

Special parallel and plenary sessions were devoted to computational methods in quantum field theory. While Zvi Bern of UCLA emphasized new methods and developments for computational efforts, Matthias Kasemann of Fermilab discussed computing and data analysis for future high-energy experiments. Starting with the present situation at BaBar, BELLE, CDF and D0, he moved on to technological developments such as the Grid, which must form the basis of the LHC computing.

Martin Grünewald of University College, Dublin, presented the major electroweak developments such as the anomalous magnetic moment of the muon, the weak mixing angle from NuTeV, asymmetries at the Z-peak, triple gauge couplings and W-boson parameters. He also discussed the search for Higgs bosons, and the opportunity available for the Tevatron in this search. In the electroweak parallel session, convened by John Hobbs of Stony Brook and Dmitri Bardin of the Joint Institute for Nuclear Research, many precision results were presented by LEP collaborations, who are still carefully analysing data two years after the collider was shut down.

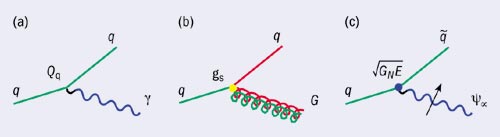

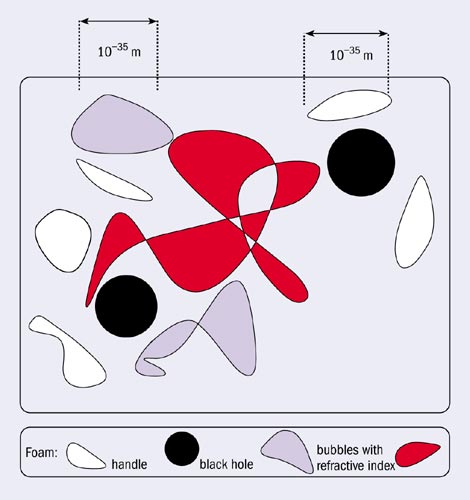

Paris Sphicas of CERN looked at the physics potential of the LHC covering Higgs searches, supersymmetry, other extensions of the Standard Model, extra dimensions and TeV-scale gravity effects such as black hole production.

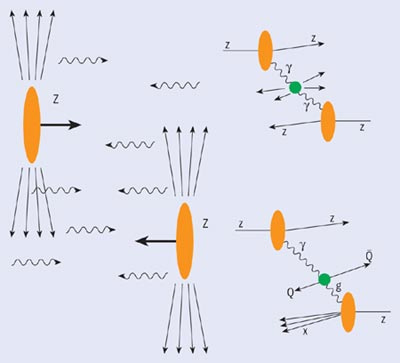

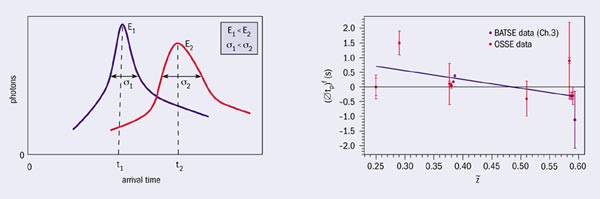

In the crowded astrophysics and cosmology parallel sessions, an important topic was ultra-high-energy cosmic rays, in particular those with energies above the Greisen-Zatsepin-Kuzmin (GZK) cut-off, which arises from collisions of protons with cosmic microwave background photons. Another topic was weakly interacting massive particles (WIMPs), for which new results from the Edelweiss experiment narrow down the allowed windows in the plot of cross-section versus mass. In his plenary talk, Thomas Gaisser of the Bartol Research Institute also discussed what he called multi-messenger astronomy provided by galactic protons, photons, neutrinos and gravitons. Marc Kamionkowski of Caltech discussed how astrophysical experiments now indicate that we have a flat universe with an energy density of which 70% is in the form of a negative pressure (cosmological constant), 25% is in the form of dark, as yet unknown, matter, and only 5% is the familiar luminous matter.

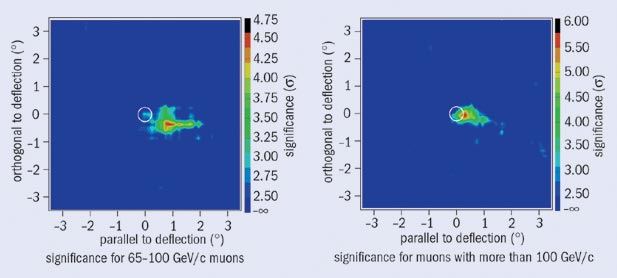

A highlight of the plenary session was a presentation from Yannis Semertzidis of Brookhaven of the new result for the muon’s anomalous magnetic moment (g = 2.0023318406 ± 0.0000000016). This incredibly precise number comes from a measurement of the decays of 4 billion positive muons delivered from Brookhaven’s Alternating Gradient Synchrotron. It represents a challenge to theory, which must calculate the expected value taking into account tiny electroweak corrections. The new measurement implies a 2-3 standard deviation discrepancy with theory and may open a window to new physics interpretations.
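The quoted g value is usually expressed as the anomaly a = (g − 2)/2, the fractional deviation from the Dirac value g = 2. The short sketch below does that arithmetic with the figures quoted above. It uses Python's `decimal` module so that the ten-digit precision is not blurred by binary floating point. The variable names are illustrative choices, not taken from the experiment.

```python
from decimal import Decimal

# Values as quoted in the text above.
g = Decimal("2.0023318406")
g_err = Decimal("0.0000000016")

# Anomaly a = (g - 2) / 2; the uncertainty is halved in the same way.
a_mu = (g - 2) / 2
a_mu_err = g_err / 2

# Express both in the customary units of 1e-10.
scale = Decimal(10) ** 10
print(f"a_mu = ({a_mu * scale:.0f} +/- {a_mu_err * scale:.0f}) x 1e-10")
```

In these units the anomaly is 11 659 203 with an uncertainty of 8, which makes it easier to see why theoretical corrections at the level of a few parts in 10¹⁰ matter for the comparison.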

Among other notable talks was that of Jan de Boer of Amsterdam University, who summarized developments in string theory and mathematical quantum field theory, with many attempts to get realistic models from string theory. Greg Loew of SLAC discussed future accelerators, emphasizing the recent R&D for linear collider technology, and the future milestones of the International Linear Collider Technical Review Committee. Paula Collins of CERN reviewed the developments in detector technology that will provide the foundation for experiments at future facilities, in particular the increasingly precise and robust silicon-based devices.

Frank Wilczek of MIT gave the conference summary. He emphasized the triumph of quantum field theory both in QCD and electroweak physics, the importance of precision measurements, and the possibilities of extending our knowledge beyond the Standard Model by using and completing the CP violation experiments, the neutrino oscillation experiments and astrophysical experiments.

Immediately after the close, conference chair Ger van Middelkoop was presented with the Dutch royal decoration of Officier in de orde van Oranje-Nassau for his numerous contributions to nuclear and particle physics, marking a fitting conclusion to both a stimulating conference and a long and distinguished scientific career.

All presentations are available at http://www.ichep02.nl/.

Proceedings to be published by Elsevier Science BV.