The town of Ericeira on the Atlantic coast faces Cabo da Roca, the western limit of the European continent. It proved an inspiring setting for Hard Probes 2004, the first International Conference on Hard and Electromagnetic Probes of High Energy Nuclear Collisions.

The conference grew out of a series of Hard Probe Café meetings, the first of which was held in 1994 at CERN. The idea then was to form a collaboration of theorists and experimentalists interested in the interface between hard perturbative quantum chromodynamics (QCD) and relativistic heavy-ion physics. CERN’s Super Proton Synchrotron (SPS), with a beam energy of up to 200 GeV/nucleon, was the highest-energy heavy-ion facility at the time and hard processes were rare. But it was becoming clear that the use of penetrating hard probes – for example, high-mass lepton pairs and high-momentum photons – held promise for understanding the strongly interacting hot medium formed in heavy-ion collisions.

Subsequent experimental results from the SPS, and the commissioning of the Relativistic Heavy Ion Collider (RHIC) at the Brookhaven National Laboratory, put hard processes in the focus of physicists’ attention. After meetings in Europe and the US, when the first published proceedings helped in planning experiments at RHIC and the Large Hadron Collider (LHC) at CERN, the Hard Probe Café could no longer accommodate all the enthusiasts. So Hard Probes 2004 was born, organized by Carlos Lourenço, Helmut Satz, João Seixas and Jorge Dias de Deus, and held on 3-10 November 2004 in the beautiful resort of Ericeira. The 120 or so participants did not have much free time to enjoy the sea breeze; the programme was intense as well as interesting, and local maritime advice underlined the importance of keeping the aim in mind (figure 1).

After a first day of lectures that were more pedagogically oriented, Krishna Rajagopal of MIT opened the conference by surveying what is known about the QCD phase diagram and its new states of matter, from quark-gluon plasma (QGP) to colour superconductors. Jochen Bartels of DESY recalled the parton formulation of high-energy interactions, addressing parton evolution and saturation. These aspects have led to major progress in the understanding of the initial conditions in heavy-ion collisions, forming a new approach to the physics of high-energy hadron and nuclear collisions: the colour glass condensate, reviewed by Edmond Iancu of Saclay and Raju Venugopalan of Brookhaven. Related percolation studies were presented by Carlos Pajares of Santiago de Compostela. It is becoming evident that QCD at high parton density can provide a common framework for describing different high-energy interactions, from deep inelastic scattering to relativistic nuclear collisions.

Probes with charm

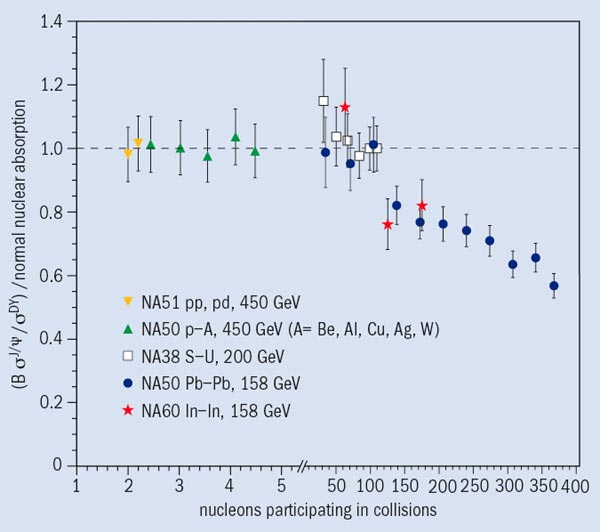

One of the main topics discussed at the Hard Probe Café was the fate of heavy quarkonia – bound states of heavy quarks and anti-quarks – in hot quark-gluon matter. Around 20 years ago, Tetsuo Matsui and Helmut Satz predicted that at sufficiently high temperatures Debye screening in the quark-gluon plasma would lead to the dissociation of quarkonia. At the conference, Frithjof Karsch of Bielefeld surveyed the status of theoretical quarkonium studies; our understanding of the topic has progressed significantly following recent lattice QCD calculations, which were discussed by Tetsuo Hatsuda of Tsukuba, Peter Petreczky of Brookhaven and others.

The different binding energies and bound-state radii of the various quarkonia lead to different dissociation temperatures; while the higher excited charmonium states melt near the deconfinement point, the J/ψ (the cc̄ ground state) can survive up to higher temperatures. Such behaviour had previously been obtained from potential-model studies, which showed that the in-medium dissociation pattern of quarkonia constitutes a very effective tool for the study of quark-gluon plasma. It can now provide a direct way to relate QCD calculations to data collected from heavy-ion collisions.

The use of heavy quarks for the diagnostics of QCD matter depends of course on reliable computations of their yields in perturbative QCD; the status of these calculations was reviewed by Stefano Frixione of Genova and Ramona Vogt of Lawrence Berkeley National Laboratory (LBNL). The increase of heavy-quark production at high energies could in fact even lead to enhanced quarkonium yields, as Ralf Rapp of Texas A&M and Bob Thews of Arizona showed for different recombination and coalescence models. A further issue to be resolved is the possibility of initial state quarkonium dissociation by parton percolation, which was reviewed by Marzia Nardi of Torino.

The suppression of charmonium production in nuclear collisions was indeed observed at the SPS (figure 2). Louis Kluberg of CERN and Laboratoire Leprince-Ringuet reviewed the 20-year evolution and the final results of the pioneering NA38 and NA50 experiments. Further studies are being pursued at CERN by NA60, with improved detector capabilities, and at RHIC by PHENIX, where the much lower integrated luminosities have so far limited the usefulness of the higher collider energies. The HERA-B collaboration presented recent results on χc production in proton-nucleus collisions at HERA, while Mike Leitch of Los Alamos reviewed several issues in quarkonium production. It is particularly puzzling that the ground-state resonances J/ψ and Υ show complete absence of polarization, contrary to the expectations of non-relativistic QCD, while the excited states Υ(2S) and Υ(3S) show maximum transverse polarization.

The meeting also discussed measurements of heavy-flavour production. The STAR collaboration reported on open-charm measurements made by reconstructing the D⁰ → K⁻π⁺ hadronic decay in d-Au collisions at RHIC. The reconstruction of such hadronic decay modes is difficult to perform in heavy-ion collisions, owing to the high particle multiplicities. The single-electron transverse momentum spectrum provides an alternative, albeit indirect, measurement of charm production at RHIC energies. The charm production cross-sections currently derived from the PHENIX and STAR data differ by a factor of two. Effects that might cause this discrepancy are being investigated and improved results should be available soon.

Another promising direction is the use of electromagnetic probes – leptons and photons; their production has for a long time been considered one of the basic pieces of evidence for the formation of a quark-gluon plasma. There is great interest in these probes because they escape from the medium almost without any interactions, and thus carry valuable information about the early stages in the evolution of dense matter. Moreover, their emission rates can be calculated in lattice QCD as well as in perturbation theory, as discussed by Jean-Paul Blaizot of Saclay, Charles Gale of McGill and others. Rolf Baier of Bielefeld showed that parton-saturation effects also play a crucial role here.

New experimental information on dilepton production was presented by the NA60 experiment at CERN, which took proton-nucleus and In-In data in 2002 and 2003, respectively, with better statistics and mass resolution than previous measurements. Such “second-generation” data should answer some of the questions raised by results previously obtained by CERES at the SPS and lower-energy experiments (such as DLS at LBNL’s BEVALAC and KEK’s E235), reviewed by Itzhak Tserruya of the Weizmann Institute. Currently, PHENIX at RHIC cannot explore the physics of the low-mass dilepton continuum, given the overwhelming combinatorial background levels. This should be solved by a “hadron blind detector”, based on a proximity focus Cherenkov detector, soon to be added to PHENIX.

Jets are another classic hard probe. Colliding beams of protons or heavy nuclei produce jets when partons from the incoming projectiles undergo hard scattering off each other and emerge from the reaction at large angles. In the early 1980s, James Bjorken proposed that jets would interact with the material generated by high-energy nuclear collisions in a way analogous to the more familiar interaction of charged particles in detector material. He suggested that this interaction would lead to energy loss in a quark-gluon plasma (jet quenching). Further theoretical analysis showed that gluon bremsstrahlung is an efficient way of dissipating jet energy to the medium, generating large and potentially observable differences between hot and cold strongly interacting matter.

Jets and RHIC

Jets are the hard probe par excellence at RHIC, where the collision energy is high enough to produce them in vast numbers. The first runs with gold beams at RHIC did indeed reveal strong modifications to jet structure, agreeing with the predictions of jet quenching in matter many times denser than cold nuclear matter. Heavy-ion physicists are now looking more deeply into jet-related measurements and interesting nuclear effects continue to emerge. The diversity and quality of the high-momentum-transfer data from the four RHIC experiments justified eight detailed talks. Data were presented from pp, d-Au and Au-Au collisions at the top RHIC centre-of-mass energy of 200 GeV, together with Au-Au measurements at 62.4 GeV, chosen to match the energy of CERN’s Intersecting Storage Rings (for which extensive pp collision data are available for comparison).

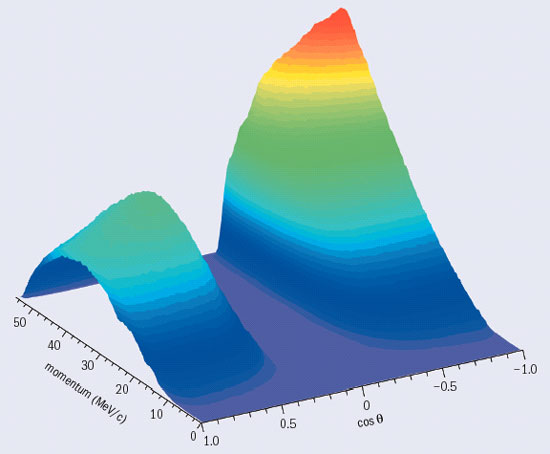

One of the key pieces of evidence for jet quenching is the strong suppression of high-momentum inclusive pion and charged particle production in the most central nuclear collisions, seen by all RHIC experiments and now provided by PHENIX for transverse momenta up to 14 GeV/c. It is crucial to crosscheck such measurements and theoretical calculations in simpler systems. Inclusive particle spectra at high transverse momentum in pp collisions are described well by perturbative QCD calculations, so that the reference spectra for measuring nuclear effects are well understood. Jets and hard photons at high momentum are generated by similar mechanisms, but direct photons should not lose energy in the nuclear medium, since they have no colour charge. Klaus Reygers of Münster showed that, at RHIC, direct photons are indeed produced at the rate expected from QCD calculations, while high-momentum pions are suppressed by a factor of five (figure 3).
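The comparison described above is usually expressed as a nuclear modification factor: the yield in nuclear collisions divided by the pp yield scaled by the number of binary nucleon-nucleon collisions. The sketch below is only an illustration of that ratio. The function name, the collision count and the yields are hypothetical placeholders, not measured values; they are chosen so that the pions come out suppressed by the factor of five quoted in the text while the photons are unmodified.

```python
def nuclear_modification_factor(yield_aa, yield_pp, n_coll):
    """Return the nuclear-collision yield divided by the pp yield
    scaled by the mean number of binary nucleon-nucleon collisions."""
    if yield_pp <= 0 or n_coll <= 0:
        raise ValueError("yield_pp and n_coll must be positive")
    return yield_aa / (n_coll * yield_pp)


# Illustrative inputs only (assumptions, not data from any experiment).
N_COLL = 1000.0    # hypothetical number of binary collisions
YIELD_PP = 1.0e-6  # hypothetical pp yield at some fixed transverse momentum

# Pions suppressed by a factor of five; direct photons unsuppressed.
pion_ratio = nuclear_modification_factor(0.2 * N_COLL * YIELD_PP, YIELD_PP, N_COLL)
photon_ratio = nuclear_modification_factor(1.0 * N_COLL * YIELD_PP, YIELD_PP, N_COLL)

print(f"pions:   {pion_ratio:.2f}")
print(f"photons: {photon_ratio:.2f}")
```

A ratio near one means the nuclear collision behaves like an incoherent sum of nucleon-nucleon collisions; a suppression by a factor of five corresponds to a ratio of about 0.2.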

On the theory side, Xin-Nian Wang of LBNL and Urs Wiedemann of CERN discussed partonic energy loss in matter, and showed that perturbative QCD calculations incorporating medium-induced bremsstrahlung can describe the main jet-related measurements. A key test is the variation of energy loss with collision energies. Jet quenching generates strong effects at the top RHIC energy of 200 GeV, but does it diminish at lower collision energy? Recently analysed Au-Au data from RHIC at 62.4 GeV found a hadron suppression similar to that at 200 GeV. Model calculations of jet quenching had predicted this, as the result of smaller overall energy loss convoluted with a softer underlying initial partonic spectrum.

It is therefore natural to look at the extensive data amassed by the fixed-target experiments at the SPS, with centre-of-mass energies of 17-20 GeV. Though jet production at SPS energies is rare, high-statistics data sets can probe the lower reaches of the hard-scattering regime. Until recently it was thought that in Pb-Pb collisions at the SPS, production of hadrons with high transverse momentum was enhanced, not suppressed. David D’Enterria of Columbia has re-examined the pp reference data used to measure the hadron production at the SPS, and concludes that its uncertainties were previously underestimated and that signs of jet quenching may indeed also be present at the SPS. This has spurred the SPS heavy-ion collaborations to re-analyse their old data, with more news to be expected by the summer of 2005.
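The SPS centre-of-mass energies of 17-20 GeV quoted above follow from standard fixed-target kinematics. The sketch below is a minimal illustration under stated assumptions: the beam energy is treated as the total energy per nucleon, the target nucleon is at rest, and a single approximate nucleon mass of 0.938 GeV is used. The function name is hypothetical, and the 158 GeV/nucleon value is the usual SPS lead-beam energy rather than a number given in this report.

```python
import math

NUCLEON_MASS_GEV = 0.938  # approximate nucleon mass (assumption: same for p and n)


def sqrt_s_nn_fixed_target(beam_energy_gev):
    """Centre-of-mass energy per nucleon pair for a beam nucleon of total
    energy beam_energy_gev (in GeV) hitting a nucleon at rest:
    s = 2*m^2 + 2*m*E."""
    m = NUCLEON_MASS_GEV
    if beam_energy_gev < m:
        raise ValueError("total beam energy cannot be below the nucleon mass")
    return math.sqrt(2.0 * m * m + 2.0 * m * beam_energy_gev)


# 158 GeV/nucleon is assumed here for lead beams; 200 GeV/nucleon is the
# top SPS energy quoted earlier in the text.
for energy in (158.0, 200.0):
    print(f"{energy:6.1f} GeV/nucleon -> {sqrt_s_nn_fixed_target(energy):.1f} GeV")
```

With these assumptions the two beam energies give roughly 17.3 and 19.4 GeV, consistent with the range quoted for the SPS fixed-target experiments.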

Future prospects

Most of the Au-Au data from RHIC presented at the conference are from the 2002 run, with an integrated luminosity of 250 μb⁻¹. The RHIC collaborations are still analysing the 2004 data set, with a much higher integrated luminosity (3.7 nb⁻¹), and new results on jet physics and other rare probes are expected within a few months.

John Harris of Yale discussed the long-term future of RHIC, including upgrades to the major detectors and the addition of electron cooling to the accelerator, which will increase its luminosity for Au-Au by a factor of 10 (RHIC II). The theoretical interest in low-x forward physics was emphasized by Al Mueller of Columbia; this topic should also be high on RHIC’s agenda, as noted by Les Bland of Brookhaven. In 2008, the high-energy frontier in heavy-ion collisions will move to the LHC; Bolek Wyslouch of MIT, Andreas Morsch of CERN and Philippe Crochet of Clermont-Ferrand previewed the possibilities this will open up.

Further heavy-ion runs at the SPS could occur in parallel with operation of the LHC, as advocated by Hans Specht of Heidelberg. Such runs would profit from what seems to be an ideal collision energy for studying the transition to the QGP phase, combined with the high luminosities offered by fixed-target running. High-precision data from such runs could be available several years before the start of GSI’s Facility for Antiproton and Ion Research (FAIR), where heavy-ion collisions will be studied at up to 35 GeV/nucleon (data sets have been taken at the SPS at 20-200 GeV/nucleon).

The wealth of information presented at the meeting was summarized in three talks: on quarkonia and heavy flavours, by Enrico Scomparin of Torino; on jets and high-transverse-momentum physics, by Peter Jacobs of LBNL; and on electromagnetic probes, by Axel Drees of Stony Brook. Dmitri Kharzeev of Brookhaven summarized the theory presentations at the meeting, inspired by the venue’s history. When the Pope divided the unknown world between Portugal and Spain in the 1494 treaty of Tordesillas, he drew a line in what he thought was an empty ocean; 10 years later, South America had been discovered and was being explored. Similarly, the boundaries of 10 years ago, between the old hadronic and the new partonic worlds in the phase diagram of strongly interacting matter, are now more complex and less sharp, thanks to impressive recent progress.

The focused programme, good attendance, spectacular location and extracurricular activities (including a concert of 18th-century popular music in the majestic Convento de Mafra) made this a memorable and successful meeting – one in a new conference series. The second will be held in the spring of 2006 in the San Francisco Bay Area, convened by physicists from Berkeley and Brookhaven. A third is already on the horizon, as Santiago de Compostela in northern Spain would like to welcome a pilgrimage of hard probe physicists.