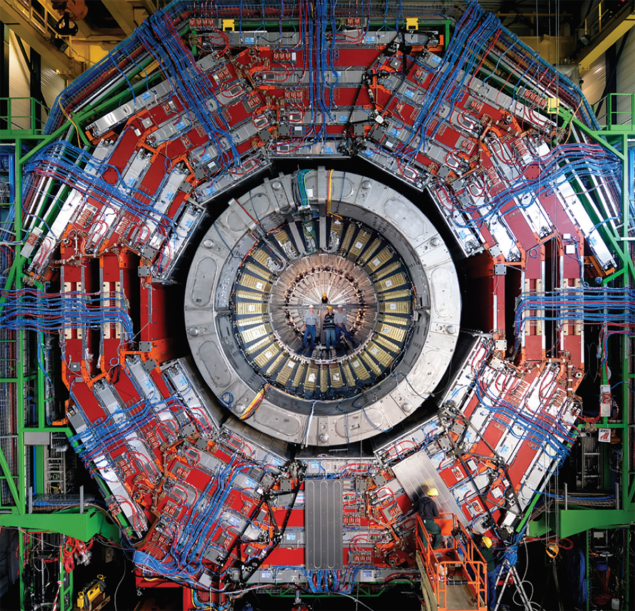

With the imminent start-up of the LHC, particle physics is about to enter a new regime, which should provide solutions to puzzles such as the origin of electroweak symmetry-breaking and the existence of supersymmetry. The LHC will produce head-on collisions between protons or heavy ions, but at the fundamental level these come down to interactions between partons, that is, quarks and gluons. For this reason, all the interesting new reactions will be initiated essentially by quantum chromodynamics (QCD) hard-scattering, and any claim for new physics will require a precise understanding of known Standard Model processes.

To prepare for the “precision challenge” at the LHC, the particle theory groups of ETH Zurich and Zurich University organized a workshop on High Precision for Hard Processes (HP2). The three-day workshop took place on the ETH campus in early September 2006 and involved about 65 participants. These included 15 diploma and doctoral students, indicating that precision calculations for the LHC are a lively field that attracts many young researchers.

HP2 addressed the precision challenge with reviews of results from Fermilab’s Tevatron, expectations at the LHC and measurements of parton distributions. A few benchmark reactions, such as single-inclusive jet-production and vector-boson production, will already be accessible with very low luminosity at the LHC. These can provide precise constraints on the proton structure, which is relevant to all hadron-collider reactions.

Likewise, precision studies, such as investigating the properties of the top quark, demand a better description of the full characteristics of an event. These will include improved jet-algorithms and a better understanding of soft physics in hard interactions. Searches will often involve multiparticle final states, calling for a precise description of high-multiplicity processes.

Searches for physics beyond the Standard Model will have to aim for a variety of different theoretical scenarios of electroweak symmetry-breaking. These include supersymmetry, higher-dimensional Higgs-less or composite Higgs models, and many other alternatives. Distinguishing models based on experimental observations could become very difficult, since signatures often look similar. The study of this variety of new models, therefore, calls for flexible tools that allow the prediction of all observable consequences of a new model simultaneously. With leading-order event-generator programmes now including generic new physics scenarios, we are clearly on the way to more systematic studies.

While these leading-order studies should give a first overview of the general features of signal and background processes, allowing the design of cuts and the optimization of search strategies, they will often be insufficient when precision is required. This will be the case either in discriminating among similar models or if signals are likely to be spread out over a continuous background.

Until now, next-to-leading order (NLO) calculations have been carried out on a case-by-case basis. Several speakers presented new results at the workshop, including Higgs-plus-2-jet production through QCD processes and vector-boson fusion; top quark plus 1 jet production; scalar-quark effects in Higgs production; and corrections to the decay of the Higgs boson into four fermions.

All of these calculations were major, time-consuming projects, and it is becoming increasingly clear that the large number of phenomenologically relevant reactions for LHC physics calls for automated techniques for NLO computations. While generating the appropriate real and virtual Feynman diagrams can be automated, the evaluation of the one-loop diagrams poses a major bottleneck, since standard one-loop methods applied to multi-particle processes result in over-complicated and numerically unstable results. The search for an efficient and automated method for NLO calculations was a major focus of the workshop.

Techniques proposed for the automated computation of one-loop virtual corrections to multi-particle processes range from purely numerical to fully analytical methods. In the purely numerical approaches, one searches for isolated, potentially singular contributions to the loop integrals at the level of the integrand, and subtracts them using universal subtraction factors. Semi-numerical techniques aim to perform a partial simplification of the one-loop integrals to a basis that is not necessarily minimal but ensures maximum numerical stability. The purely analytical methods aim for an expression in a minimal basis. The workshop heard of much progress in this direction.

A substantial number of talks addressed the application of twistor-space techniques. Originally proposed as a new method to better understand the mathematical structure of tree-level amplitudes, the twistor-space formulation has proven fruitful for simplifying loop amplitudes. The twistor-based coefficients are a crucial ingredient in reconstructing one-loop amplitudes from their cuts. In supersymmetric theories, this procedure yields the full one-loop amplitude for any process. In the Standard Model, however, the rational parts of the amplitudes escape the cut construction.

Fortunately, this is not a major difficulty. Exploiting the detailed analytical properties of the amplitudes or generalizing the unitarity-cut method from four dimensions to general dimensions, the rational parts can also be calculated in a systematic manner. Applications of these new tools range from the phenomenology of Higgs bosons and one-loop multi-parton amplitudes to loop corrections in supergravity amplitudes.
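
The role of the rational parts can be made concrete with the standard schematic decomposition of a one-loop amplitude into scalar integrals. This is a sketch in notation introduced here for illustration, not taken from the workshop talks, and it assumes massless internal propagators, so that only box, triangle and bubble integrals appear:

$$
A^{\text{1-loop}} = \sum_i d_i\, I_4^{(i)} + \sum_j c_j\, I_3^{(j)} + \sum_k b_k\, I_2^{(k)} + R
$$

Here $I_4$, $I_3$ and $I_2$ denote scalar box, triangle and bubble integrals, and $d_i$, $c_j$ and $b_k$ are rational functions of the external kinematics that four-dimensional unitarity cuts can fix. The remainder $R$ has no branch cuts in four dimensions, which is why it escapes the four-dimensional cut construction and has to be obtained by other means, such as cuts in general dimensions.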

For a number of benchmark processes, typically of low multiplicity, even NLO accuracy is not sufficient. The workshop heard progress reports on next-to-next-to-leading-order (NNLO) calculations of the Drell–Yan process and of the three-jet event-shapes in e+e– annihilation. New methods to perform NNLO calculations and to predict the singularity structure of QCD amplitudes at NNLO and beyond are paving the way for further progress in this area.

Very often, particular terms become large at any order in perturbation theory, necessitating an all-order resummation. For the leading logarithmic corrections to arbitrary processes, this can be performed using parton showers. Over the last few years we have seen major progress in this area, with NLO corrections being included into the parton showers on a process-by-process basis. Additionally, a number of important hard processes have been included recently in the MC@NLO event-generating program. There have also been suggestions for new implementations of parton showers that aim at more efficient systematic methods for the inclusion of the NLO corrections.

Sub-leading corrections need to be determined on a case-by-case basis, and speakers reported on new results for Higgs and W pair production. On the more formal side, these resummation approaches can be used to predict dominant terms at three loops, and to obtain an improved understanding of universal soft behaviour and of the high-energy limit of QCD. While resummation approaches have long been considered independent of higher-order calculations, the workshop clearly illustrated that both areas can have a fruitful interchange of ideas and methods.

In summarizing the highlights from the conference, Zvi Bern from the University of California at Los Angeles emphasized that theory is taking up the LHC’s precision challenge. Progress on many frontiers of high-precision calculations for hard processes will soon yield a variety of improved results for reactions at the LHC, providing experimental groups with the best possible tools for precision studies and new physics searches.

The next HP2 meeting will be in Buenos Aires, Argentina, in early 2009, when there should be plenty of discussion on the first data from the LHC.