By Pauline Gagnon

MultiMondes

Hardback: €29

E-book: €19

Also available at the CERN bookshop

Pauline Gagnon is well known in the LHC experimental community. In addition to her contribution to the ATLAS experiment, she was a member of the CERN communication group from 2011 to 2014, and on the Quantum Diaries blog she covered many recent events related to the laboratory's scientific activity.

The title of her book, written in French, "Qu'est-ce que le boson de Higgs mange en hiver" ("What does the Higgs boson eat in winter"), is somewhat misleading. The author's scope goes well beyond a description of the Brout-Englert-Higgs mechanism and the experimental discovery of the Higgs boson in 2012. The book offers an overview of the physics studied in the LHC experiments, of the accelerator complex and detectors built for this research, and of the statistical methods used to discover the Higgs boson. It also includes a chapter describing the original (and probably unique) organisation of the large international collaborations in high-energy physics, as well as a chapter on the transfer of technology and knowledge from our field to industry and the general public.

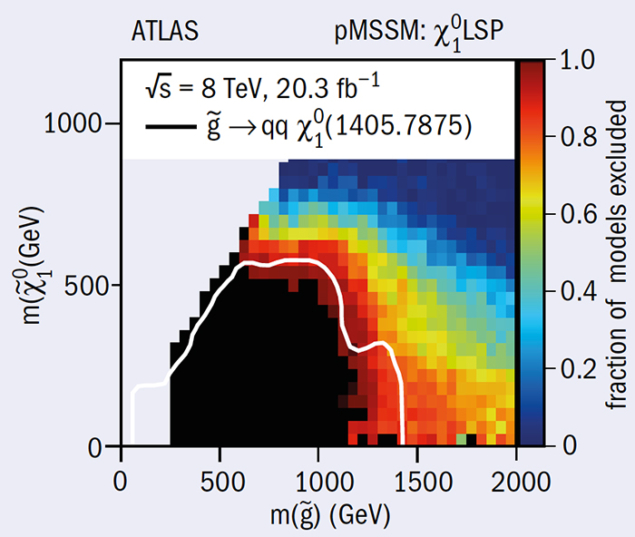

The book also describes the links between high-energy physics and astrophysics. One chapter is devoted to the experimental evidence that led to the hypothesis of dark matter and compares the discovery potential of accelerator-based and non-accelerator experiments. Another chapter covers supersymmetry, currently the most popular theory beyond the Standard Model for answering the questions the latter cannot resolve, and the challenges awaiting the LHC experiments in the coming years. The book ends with a discussion of a theme that is somewhat disconnected from the rest but dear to the author's heart: diversity (in particular the employment of women) in scientific research.

The book is not intended for specialists but for the general public. To this end, the author has banished all mathematical formulas and often uses analogies to introduce concepts. The more complex or detailed material is placed in separate boxes that the reader can skip. In the same spirit, each chapter ends with a summary of about one page, allowing a quick reading of the topic with the option of returning to it later. The style is simple and direct, often with a touch of humour. The discussion is nonetheless not superficial, and it seems to me that the book is best suited to readers with some basic scientific background, for example young students who want to understand the value and aims of research in particle physics.