By Herwig Schopper and Luigi Di Lella (eds.)

World Scientific

Also available at the CERN bookshop

This book is a treasure trove of particle physics, highly recommended for physics teachers, graduate students and professionals in the field. In 17 chapters, it offers a concise account of 60 years of particle physics at CERN from the point of view of the people in charge of the different experiments.

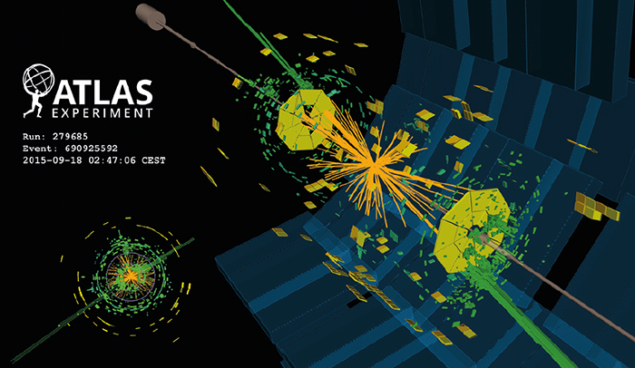

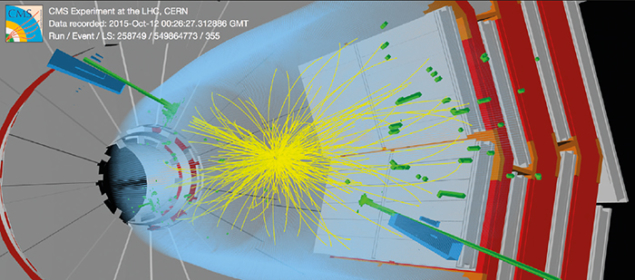

The first three chapters cover the present day at CERN: the full LHC programme, from the Higgs boson discovery to beauty physics and quark–gluon plasma. They draw a relatively compact but precise picture of the four major experiments at the LHC (ATLAS, CMS, LHCb, ALICE), giving the reader genuinely useful information.

The surprises, at least for me, come in the chapters that follow. They explain physics that is already in textbooks, but provide a great deal of detail about each specific endeavour – a pleasure to read if you are interested not only in the results but also in the intellectual journey and historical context.

Chapters 4 (number of light neutrinos) and 5 (precision physics of the gauge coupling constants) are dedicated to LEP results, chapter 6 to the discovery of the W and Z bosons at the Super Proton Synchrotron (SPS), and chapter 7 to the fundamental neutral-current experiment at Gargamelle. Going back in time to Gargamelle, one can appreciate the ingenuity of the physicists struggling with the data to get a clearer picture of electroweak physics, at a time when the microelectronics revolution was still far off.

From chapter 8 to the end of the book, the reader picks up little gems. CERN is not only the LHC or LEP, but much more. Chapter 8 tells the story of neutrino physics at the SPS, in particular the precise measurement of the Weinberg angle and how that effort paved the way for present-day neutrino-oscillation experiments. Chapters 9 and 10 are dedicated to kaon physics, in particular to the direct measurement of CP violation in kaon decay by the NA31/NA48 collaborations at the SPS, and to discrete symmetry (T, CPT and CP) measurements in the neutral-kaon system using the LEAR antiproton storage ring. Here, the reader discovers that the large sample of π+π– decays available at LEAR (now evolved into LEIR) enabled tests of the equivalence principle between particles and antiparticles, as well as of EPR correlations.

Chapter 11 highlights the physics discoveries at the Intersecting Storage Rings (ISR). Remembered as the first hadron collider and a technological feat, it also made an important contribution to fundamental physics by discovering the rise of the proton–proton total cross-section. Chapters 12 and 13 discuss topics out of chronological order. Chapter 13 concerns the discovery of partons in hadrons from the ISR to the SPS, while the details of the hadron internal structure revealed by muon scattering at the SPS are given in chapter 12. The “modern” description of the LHC as a “gluon collider” can be traced back to the ISR; jet production, now a workhorse of the LHC programme, was evident from the SPS UA2 experiment. Deep inelastic scattering has been an active field at CERN for more than 35 years, and has had a fundamental impact on the present-day understanding of the structure of hadronic matter.

But CERN is not only about colliders. Atomic physics is very much alive there, as is the study of exotic atoms (pionic, muonic, kaonic) and anti-atoms. Chapter 14 traces the history of antimatter and exotic-matter research at CERN, up to the present-day experiments ALPHA and ATRAP, and to tests of the equivalence principle (does antimatter fall down?) with AEgIS and GBAR.

The technological challenges of muon storage and the g-2 measurement at CERN, a hot topic today, are covered in chapter 15, which contains two special-relativity surprises: a gamma-ray time-of-flight experiment from the Proton Synchrotron (PS) target, demonstrating the independence of c from the motion of the source, and time dilation in circular orbits for the muon lifetime in flight. Chapter 16 explains the beginning of the accelerator programme at CERN with the physics contribution of the CERN 600 MeV Synchrocyclotron (pi-meson decays), in particular the first measurement of the muon anomalous magnetic moment.

Closing the book, chapter 17 discusses part of the nuclear-physics programme, specifically with ISOLDE – an “alive and kicking” experiment dedicated to the study of radioactive nuclei, mainly nuclear ground-state properties and excited nuclear states populated in radioactive decays, but now also leading the production of medical isotopes for fundamental studies in cancer research.

As a final remark, I enjoyed this book not only for the range of topics and extensive explanations, but also because it is easily readable – no small feat when the number of authors is so high. Definitely a must-read.