Image credit: Richard Jacobsson and Daniel Dominguez.

SHiP is an experiment aimed at exploring the domain of very weakly interacting particles and studying the properties of tau neutrinos. It is designed to be installed downstream of a new beam-dump facility at the Super Proton Synchrotron (SPS). The CERN SPS and PS experiments Committee (SPSC) has recently completed a review of the SHiP Technical and Physics Proposal, and it recommended that the SHiP collaboration proceed towards preparing a Comprehensive Design Report, which will provide input into the next update of the European Strategy for Particle Physics, in 2018/2019.

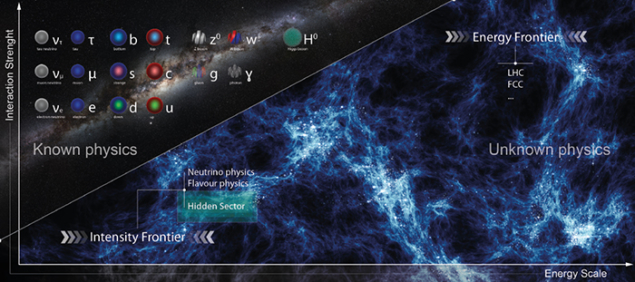

Why is the SHiP physics programme so timely and attractive? We have now observed all the particles of the Standard Model; however, it is clear that the Standard Model is not the ultimate theory. Some as-yet unknown particles or interactions are required to explain a number of observed phenomena in particle physics, astrophysics and cosmology, the so-called beyond-the-Standard Model (BSM) problems, such as dark matter, neutrino masses and oscillations, the baryon asymmetry, and the expansion of the universe.

While these phenomena are well established observationally, they give no indication of the energy scale of the new physics. The analysis of new LHC data collected at √s = 13 TeV will soon have directly probed the TeV scale for new particles with couplings at the O(%) level. The experimental effort in flavour physics, together with searches for charged lepton flavour violation and electric dipole moments, will continue the quest for specific flavour symmetries to complement direct exploration of the TeV scale.

However, it is possible that we have not observed some of the particles responsible for the BSM problems because their interactions are extremely feeble, rather than because their masses are large. Even in scenarios in which BSM physics is related to high mass scales, many models contain degrees of freedom with suppressed couplings that remain relevant at much lower energies.

Given the small couplings and mixings, and hence typically long lifetimes, these hidden particles have not been significantly constrained by previous experiments, and the reach of current experiments is limited by both luminosity and acceptance. Hence the search for low-mass BSM physics should also be pursued at the intensity frontier, along with expanding the energy frontier.
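Why acceptance matters for long-lived particles can be seen from the standard exponential-decay estimate. A particle with lab-frame decay length λ = βγcτ decays between distances z_start and z_end from its production point with probability exp(−z_start/λ) − exp(−z_end/λ). The minimal sketch below evaluates this; the decay-region geometry is a hypothetical placeholder chosen for illustration, not the SHiP layout, and the model ignores angular acceptance entirely.

```python
import math


def decay_probability(decay_length_m: float, z_start_m: float, z_end_m: float) -> float:
    """Probability that a particle with lab-frame decay length
    lambda = beta * gamma * c * tau decays between z_start_m and z_end_m,
    both measured from the production point (pure exponential decay,
    no angular acceptance)."""
    if decay_length_m <= 0 or not (0 <= z_start_m < z_end_m):
        raise ValueError("need decay_length_m > 0 and 0 <= z_start_m < z_end_m")
    return math.exp(-z_start_m / decay_length_m) - math.exp(-z_end_m / decay_length_m)


# HYPOTHETICAL geometry, for illustration only: a 50 m long decay region
# starting 50 m downstream of the production point.
Z_START_M, Z_END_M = 50.0, 100.0

for decay_length in (10.0, 100.0, 1e3, 1e4, 1e5):
    p = decay_probability(decay_length, Z_START_M, Z_END_M)
    print(f"decay length {decay_length:>8.0f} m -> P(decay in region) = {p:.2e}")
```

For decay lengths much longer than the apparatus, P ≈ (z_end − z_start)/λ, so the fraction of particles decaying inside the region falls off as 1/λ (at λ = 10⁵ m the sketch gives about 5 × 10⁻⁴). Particles with very short decay lengths, by contrast, decay before reaching the region. Feeble couplings therefore cost rate twice, in production and in the decay probability, which is why both intensity and acceptance limit the reach.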

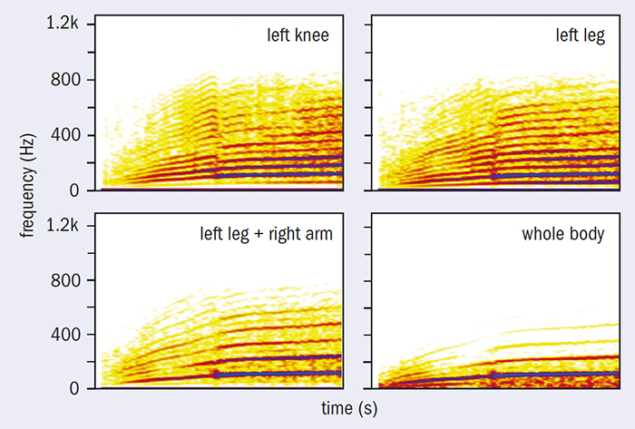

Image credit: SHiP Collaboration.

SHiP is designed to give access to a large class of interesting models. It has discovery potential for the major observational puzzles of modern particle physics and cosmology, and can explore some of the models down to their natural “bottom line”. SHiP also has the unique potential to test lepton flavour universality by comparing interactions of muon and tau neutrinos.

SPS: the ideal machine

SHiP is a new type of intensity-frontier experiment, motivated by the possibility of searching for any type of neutral hidden particle with mass from sub-GeV up to O(10) GeV and super-weak couplings down to 10⁻¹⁰. The proposal locates the SHiP experiment on a new beam-extraction line that branches off from the CERN SPS transfer line to the North Area. The high intensity of the 400 GeV beam and the unique operational mode of the SPS provide ideal conditions. The current design of the experimental facility and the estimates of the physics sensitivities assume the SPS accelerator in its present state. Sharing the SPS beam time with the other SPS fixed-target experiments and the LHC should allow 2 × 10²⁰ protons on target to be delivered in five years of nominal operation.
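The integrated-intensity target translates into simple yearly bookkeeping. The sketch below uses the two figures quoted above (2 × 10²⁰ protons on target over five years); the per-spill intensity is an assumption introduced only to show how the number of extractions scales, and should be replaced by the actual SPS value.

```python
# Figures quoted in the text.
TOTAL_POT = 2e20   # protons on target over the nominal run
YEARS = 5          # years of nominal operation

# ASSUMPTION (not from the text): a hypothetical number of protons
# extracted per spill, used only to illustrate the bookkeeping.
POT_PER_SPILL = 4e13

pot_per_year = TOTAL_POT / YEARS             # average yearly delivery
spills_total = TOTAL_POT / POT_PER_SPILL     # extractions over the whole run
spills_per_year = spills_total / YEARS       # extractions per year

print(f"average protons on target per year: {pot_per_year:.1e}")
print(f"total spills required:              {spills_total:.1e}")
print(f"spills per year:                    {spills_per_year:.1e}")
```

The yearly average of 4 × 10¹⁹ protons on target follows directly from the quoted numbers; the spill counts depend entirely on the assumed per-spill intensity.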

The key experimental parameters in the phenomenology of the various hidden-sector models are relatively similar. This allows a common optimisation of the design of the experimental facility and of the SHiP detector. Because the hidden particles are expected to be predominantly accessible through the decays of heavy hadrons and in photon interactions, the facility is designed to maximise their production and the detector acceptance, while providing the cleanest possible environment. As a result, with 2 × 10²⁰ protons on target, the expected yields of different hidden particles in decays of both charm and beauty hadrons greatly exceed those of any other existing or planned facility.

As shown in the figure (left), the next critical component of SHiP after the target is the muon shield, which deflects the high flux of muon background away from the detector. The detector for the hidden particles is designed to fully reconstruct their exclusive decays and to reject the background down to below 0.1 events in the sample of 2 × 10²⁰ protons on target. The detector consists of a large magnetic spectrometer located downstream of a 50 m-long and 5 × 10 m-wide decay volume. To suppress the background from neutrinos interacting in the fiducial volume, the decay volume is maintained under vacuum. The spectrometer is designed to accurately reconstruct the decay vertex, the mass and the impact parameter at the target of the decaying particle. A set of calorimeters followed by muon chambers provides identification of electrons, photons, muons and charged hadrons. A dedicated high-resolution timing detector measures the coincidence of the decay products, which allows combinatorial backgrounds to be rejected. The decay volume is surrounded by background taggers that detect neutrino and muon inelastic scattering in the surrounding structures, which may produce long-lived SM V⁰ particles such as the K_L. The experimental facility is also ideally suited to studying interactions of tau neutrinos, so it will host a tau-neutrino detector, largely based on the Opera concept, upstream of the hidden-particle decay volume (CERN Courier November 2015 p24).
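A back-of-the-envelope Poisson estimate helps put the "below 0.1 events" background target in perspective. The sketch below assumes the background count is Poisson-distributed and neglects systematic uncertainties; it is an illustration, not the collaboration's statistical treatment. It shows the implied background per proton on target, the chance of seeing even one background event, and the simplest 90% confidence-level upper limit on a signal when zero events are observed and background is neglected.

```python
import math


def poisson_prob_at_least_one(mean: float) -> float:
    """P(N >= 1) for a Poisson-distributed count with the given mean."""
    return 1.0 - math.exp(-mean)


def upper_limit_zero_observed(cl: float) -> float:
    """Upper limit on the signal mean when zero events are observed and the
    background is neglected: solve exp(-s) = 1 - cl for s."""
    return -math.log(1.0 - cl)


EXPECTED_BACKGROUND = 0.1   # events, the design target quoted in the text
TOTAL_POT = 2e20            # protons on target, from the text

print(f"background per proton on target:  {EXPECTED_BACKGROUND / TOTAL_POT:.1e}")
print(f"P(at least one background event): {poisson_prob_at_least_one(EXPECTED_BACKGROUND):.3f}")
print(f"90% CL limit with 0 observed:     {upper_limit_zero_observed(0.90):.2f} events")
```

With an expected background of 0.1 events, the probability of recording any background event at all is about 10%, and an observation of zero events would exclude a signal mean above roughly 2.3 events at 90% CL in this simplest treatment. This is why a near background-free design is so valuable for a search whose signal rate may itself be tiny.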

Global milestones and next steps

The SHiP experiment aims to start data-taking in 2026, as soon as the SPS resumes operation after Long Shutdown 3 (LS3). Broadly, the intervening 10 years consist of three years for the comprehensive design phase and then, following approval, a little under five years of civil engineering starting in 2021, in parallel with four years of detector production and staged installation of the experimental facility, and two years to complete the detector installation and commissioning.

Image credit: SHiP Collaboration.

The key milestones during the upcoming comprehensive design phase aim to further optimise the layout of the experimental facility and the geometry of the detectors. This involves a detailed study of the muon-shield magnets and of the geometry of the decay volume. It also includes revisiting the neutrino background in the fiducial volume, together with the background detectors, to decide on the type of technology required for evacuating the decay volume. Many of the milestones related to the experimental facility are of general interest beyond SHiP, such as possible improvements to the SPS extraction, and the design of the target and the target complex. SHiP already benefited from seven weeks of test-beam time at the PS and SPS in 2015, for studies related to the Technical Proposal (TP). A similar amount of beam time has been requested for 2016 to complement the comprehensive design studies.

The SHiP collaboration currently consists of almost 250 members from 47 institutes in 15 countries. In only two years, the collaboration has formed and taken the experiment from a rough idea in the Expression of Interest to an already mature design in the TP. The CERN task force, launched by CERN management in 2014 to investigate the implementation of the experimental facility and consisting of key experts from CERN’s different departments, made a fundamental contribution to the TP. In the SHiP Physics Proposal, a collaboration of more than 80 theorists demonstrated that the SHiP physics case is very strong.

The intensity frontier greatly complements the search for new physics at the LHC. In accordance with the recommendations of the last update of the European Strategy for Particle Physics, a multi-range experimental programme is being actively developed all over the world. Major improvements and new results are expected during the next decade in neutrino and flavour physics, proton-decay experiments and measurements of electric dipole moments. CERN will be well positioned to make a unique contribution to the exploration of the hidden-particle sector with the SHiP experiment at the SPS.

• For further reading, see cds.cern.ch/record/2007512.