The early 1970s was a pivotal period in the history of particle physics. Following the discovery of asymptotic freedom and the Brout–Englert–Higgs mechanism a few years earlier, it was the time when the Standard Model (SM) of electroweak and strong interactions came into being. After decades of empirical verification, the theory received a final spectacular confirmation with the discovery of the Higgs boson at CERN in 2012, and its formulation has also been recognised by Nobel prizes awarded for theoretical physics in 1979, 1999, 2004 and 2013.

It was clear from the start, however, that the SM, a spontaneously broken gauge theory, had two major shortcomings. First, it is not a truly unified theory because the gluons of the strong (colour) force and the photons of electromagnetism do not emerge from a common symmetry. Second, it leaves aside gravity, the other fundamental force of nature, which is based on the gauge principle of general co-ordinate transformations and is described by general relativity (GR).

In the early 1970s, grand unified theories (GUTs), based on larger gauge symmetries that include the SM’s “SU(3) × SU(2) × U(1)” structure, did unify colour and charge – thereby uniting the strong and electroweak interactions. However, they relied on a huge new energy scale (~10¹⁶ GeV), just a few orders of magnitude below the Planck scale of gravity (~10¹⁹ GeV) and far above the electroweak Fermi scale (~10² GeV), and on new particles carrying both colour and electroweak charges. As a result, GUTs made the stunning prediction that the proton might decay at detectable rates, which was eventually excluded by underground experiments, and their two widely separated cut-off scales introduced a “hierarchy problem” that called for some kind of stabilisation mechanism.

A possible solution came from a parallel but unrelated development. In 1973, Julius Wess and Bruno Zumino unveiled a new symmetry of 4D quantum field theory: supersymmetry, which interchanges bosons and fermions and, as would be better appreciated later, can also conspire to stabilise scale hierarchies. Supersymmetry was inspired by “dual resonance models”, an early version of string theory pioneered by Gabriele Veneziano and extended by André Neveu, Pierre Ramond and John Schwarz. Earlier work done in France by Jean-Loup Gervais and Bunji Sakita, and in the Soviet Union by Yuri Golfand and Evgeny Likhtman, and by Dmitry Volkov and Vladimir Akulov, had anticipated some of supersymmetry’s salient features.

An exact supersymmetry would require the existence of superpartners in the SM, but it would also imply mass degeneracies between the known particles and their superpartners. This option has been ruled out over the years by several experiments at CERN, Fermilab and elsewhere, and therefore supersymmetry can at best be a broken symmetry, with superpartner masses that seem to lie beyond the TeV energy region currently explored at the LHC. Moreover, a spontaneous breaking of supersymmetry would imply the existence of additional massless (“Goldstone”) fermions.

Supergravity, the supersymmetric extension of GR, came to the rescue in this respect. It predicted the existence of a new particle of spin 3/2 called the gravitino that would receive a mass in the broken phase. In this fashion, one or more gravitinos could potentially be very heavy, while the additional massless fermions would be “eaten” – much as occurs for part of the Higgs doublet in the SM.

Seeking unification

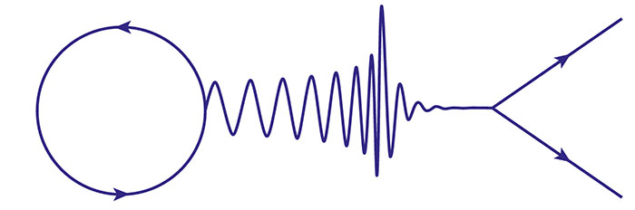

Supergravity, especially when formulated in higher dimensions, was the first concrete realisation of Einstein’s dream of a unified field theory (see diagram opposite). Although the unification of gravity with other forces was the central theme for Einstein during the last part of his life, the beautiful equations of GR were for him a source of frustration. For 30 years he was disturbed by what he considered a deep flaw: one side of the equations contained the curvature of space–time, which he regarded as “marble”, while the other contained the matter energy, which he compared to “wood”. In retrospect, Einstein wanted to turn “wood” into “marble”, but after special and general relativity he failed in this third great endeavour.

GR has, however, proved to be an inestimable source of deep insights for unification. A close scrutiny of general co-ordinate transformations led Theodor Kaluza and Oskar Klein (KK), in the 1920s and 1930s, to link electromagnetism and its Maxwell potentials to internal circle rotations, which we now call a U(1) gauge symmetry. In retrospect, more general rotations could also have led to the Yang–Mills theory, which is a pillar of the SM. According to KK, Maxwell’s theory could be a mere byproduct of gravity, provided the universe contains one microscopic extra dimension beyond time and the three observable spatial ones. In this 5D picture, the photon arises from a portion of the metric tensor – the “marble” in GR – with one “leg” along space–time and the other along the extra dimension.
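The 5D picture can be made concrete with the standard Kaluza–Klein ansatz, sketched here in one common convention. The symbols y (the co-ordinate along the circle), φ (a scalar field setting the size of the circle) and κ (a normalisation constant) are notation introduced for illustration only.

```latex
ds_5^2 = g_{\mu\nu}(x)\, dx^\mu dx^\nu
       + \phi^2(x)\,\bigl(dy + \kappa A_\mu(x)\, dx^\mu\bigr)^2 ,
\qquad
y \to y + \lambda(x)
\;\Longrightarrow\;
A_\mu \to A_\mu - \frac{1}{\kappa}\,\partial_\mu \lambda .
```

In this form the mixed components of the metric are proportional to the Maxwell potential, and a position-dependent shift along the circle, which is a particular general co-ordinate transformation, acts on it as a U(1) gauge transformation.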

Supergravity follows in this tradition: the gravitino is the gauge field of supersymmetry, just as the photon is the gauge field of internal circle rotations. If one or more local supersymmetries (whose number will be denoted by N) accompany general co-ordinate transformations, they guarantee the consistency of gravitino interactions. In a subclass of “pure” supergravity models, supersymmetry also allows one to connect “marble” and “wood” and therefore goes well beyond the KK mechanism, which does not link Bose and Fermi fields. Curiously, while GR can be formulated in any number of dimensions, seven additional spatial dimensions, at most, are allowed in supergravity due to intricacies of the Fermi–Bose matching.

Last year marked the 40th anniversary of the discovery of supergravity. At its heart lie some of the most beautiful ideas in theoretical physics, and over the years the theory has displayed different facets, living several parallel lives.

Construction begins

The first instance of supergravity, containing a single gravitino (N = 1), was built in the spring of 1976 by Daniel Freedman, Peter van Nieuwenhuizen and one of us (SF). Shortly afterwards, the result was recovered by Stanley Deser and Bruno Zumino, in a simpler and more elegant way that extended the first-order (“Palatini”) formalism of GR. Further simplifications emerged once the significance of local supersymmetry was better appreciated. Meanwhile, the “spinning string” – the descendant of dual resonance models that we have already met – was connected to space–time supersymmetry via the so-called Gliozzi–Scherk–Olive (GSO) projection, which reflects a subtle interplay between spin-statistics and strings in space–time. The low-energy spectrum of the resulting models pointed to previously unknown 10D versions of supergravity, which would include the counterparts of several gravitinos, and also to a 4D Yang–Mills theory that is invariant under four distinct supersymmetries (N = 4). A first extended (N = 2) version of 4D supergravity involving two gravitinos came to light shortly after.

When SF visited Caltech in the autumn of 1976, he became aware that Murray Gell-Mann had already worked out many consequences of supersymmetry. In particular, Gell-Mann had realised that the largest “pure” 4D supergravity theory, in which all forces would be connected to the conventional graviton, would include eight gravitinos. Moreover, this N = 8 theory could also allow an SO(8) gauge symmetry, the rotation group in eight dimensions (see table opposite). Although SO(8) would not suffice to accommodate the SU(3) × SU(2) × U(1) symmetry group of the SM, the full interplay between supergravity and supersymmetric matter soon found a proper setting in string theory, as we shall see.

The following years, 1977 and 1978, were most productive and drew many people into the field. Important developments followed readily, including the discovery of reformulations where N = 1 4D supersymmetry is manifest. This technical step was vital to simplify more general constructions involving matter, since only this minimal form of supersymmetry is directly compatible with the chiral (parity-violating) interactions of the SM. Indeed, by the early 1980s, theorists managed to construct complete couplings of supergravity to matter for N = 1 and even for N = 2.

The maximal, pure N = 8 4D supergravity was also derived, via a circle KK reduction, in 1978 by Eugene Cremmer and Bernard Julia. This followed their remarkable construction, with Joel Scherk, of the unique 11D form of supergravity, which displayed a particularly simple structure where a single gravitino accounts for eight 4D ones. In contrast, the N = 8 model is a theory of unprecedented complication. It was built after an inspired guess about the interactions of its 70 scalar fields (see table) and a judicious use of generalised dualities, which extend the manifest symmetry of the Maxwell equations under the interchange of electric and magnetic fields. The N = 8 supergravity with SO(8) gauge symmetry foreseen by Gell-Mann was then constructed by Bernard de Wit and Hermann Nicolai. It revealed a negative vacuum energy, and thus an anti-de Sitter (AdS) vacuum, and was later connected to 11D supergravity via a sphere KK reduction. Regarding the ultraviolet behaviour of supergravity theories, which was vigorously investigated soon after the original discovery, no divergences were found, at one loop, in the “pure” models, and many more unexpected cancellations of divergences have since come to light. The case of N = 8 supergravity is still unsettled, and some authors still expect this maximal theory to be finite to all orders.
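The field content quoted above for the N = 8 theory can be checked with a short counting exercise. The following Python sketch is an illustration, not part of the original construction: it assumes the standard rule that each of the N supercharges can lower the helicity of a massless state by 1/2 at most once, so that, starting from the graviton at helicity +2, there are "N choose k" states at helicity 2 − k/2. The function name `graviton_multiplet` and its argument are hypothetical.

```python
from fractions import Fraction
from math import comb


def graviton_multiplet(n_susy):
    """Return {helicity: number of states} for the massless graviton
    multiplet of pure N-extended supergravity in 4D (1 <= N <= 8)."""
    if not 1 <= n_susy <= 8:
        raise ValueError("pure 4D supergravity requires 1 <= N <= 8")
    counts = {}
    # Acting with k distinct supercharges on the helicity +2 state
    # gives comb(N, k) states at helicity 2 - k/2.
    for k in range(n_susy + 1):
        helicity = Fraction(4 - k, 2)
        counts[helicity] = counts.get(helicity, 0) + comb(n_susy, k)
    # For N < 8 the multiplet is not CPT self-conjugate, so the
    # states with opposite helicities must be added by hand.
    if n_susy < 8:
        for k in range(n_susy + 1):
            helicity = -Fraction(4 - k, 2)
            counts[helicity] = counts.get(helicity, 0) + comb(n_susy, k)
    return counts


n8 = graviton_multiplet(8)
gravitinos = n8[Fraction(3, 2)]  # helicity +3/2 states, one per gravitino
vectors = n8[Fraction(1)]        # helicity +1 states, one per vector field
scalars = n8[Fraction(0)]        # helicity 0 states, one per real scalar
bosons = sum(c for h, c in n8.items() if h.denominator == 1)
fermions = sum(c for h, c in n8.items() if h.denominator == 2)
print(gravitinos, vectors, scalars, bosons, fermions)
```

For N = 8 the count gives 8 gravitinos, 28 vectors and 70 scalars, with 128 bosonic and 128 fermionic states in total. The 28 vectors match the 28 generators of SO(8), which is why this gauge symmetry can be accommodated in the maximal theory.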

The string revolution

Following the discovery of supergravity, the GSO projection opened the way to connect “spinning strings”, or string theory as they came to be known collectively, to supersymmetry. Although the link between strings and gravity had been foreseen by Scherk and Schwarz, and independently by Tamiaki Yoneya, it was only a decade later, in 1984, that widespread activity in this direction began. This followed Schwarz and Michael Green’s unexpected discovery that gauge and gravitational anomalies cancel in all versions of 10D supersymmetric string theory. Anomalies – quantum violations of classical symmetries – are very troublesome when they concern gauge interactions, and their cancellation is a fundamental consistency condition that is automatically guaranteed in the SM by its known particle content.

Anomaly cancellation left just five possible versions of string theory in 10 dimensions: two “heterotic” theories of closed strings, where the SU(3) × SU(2) × U(1) symmetry of the SM is extended to the larger groups SO(32) or E8 × E8; an SO(32) “type-I” theory involving both open and closed strings, akin to segments and circles, respectively; and two other very different and naively less interesting theories called IIA and IIB. At low energies, supergravity emerges from all of these theories in its different 10D realisations, opening up unprecedented avenues for linking 10D strings to the interactions of particle physics. Moreover, the extended nature of strings made all of these enticing scenarios free of the ultraviolet problems of gravity.

Following this 1984 “first superstring revolution”, one might well say that supergravity officially started a second life as a low-energy manifestation of string theory. Anomaly cancellation had somehow connected Einstein’s “marble” and “wood” in a miraculous way dictated by quantum consistency, and definite KK scenarios soon emerged that could recover from string theory both the SM gauge group and its chiral, parity-violating interactions. Remarkably, this construction relied on a specific class of 6D internal manifolds called Calabi–Yau spaces that had been widely studied in mathematics, thereby merging 4D supergravity with algebraic geometry. Calabi–Yau spaces led naturally, in four dimensions, to a GUT gauge group E6, which was known to connect to the SM with right-handed neutrinos, also providing realisations of the see-saw mechanism.

A third life

The early 1990s were marked by many investigations of black-hole-like solutions in supergravity, which soon unveiled new aspects of string theory. Just as the Maxwell field is related to point particles, some of the fields in 10D supergravity are related to extended objects, generically dubbed “p-branes” (p = 0 for particles, p = 1 for strings, p = 2 for membranes, and so on). String theory, being based at low energies on supergravity, therefore could not be merely a theory of strings. Rather, as had been strongly advocated over the years by Michael Duff and Paul Townsend, we face a far more complicated soup of strings and more general p-branes. A novel ingredient was a special class of p-branes, the D-branes, whose role was clarified by Joseph Polchinski, but the electric-magnetic dualities of the low-energy supergravity remained the key tool to analyse the system. The end result, in the mid-1990s, was the awesome, if still somewhat vague, unified picture called M-theory, which was largely due to Edward Witten and marked the “second superstring revolution”. Twenty years after its inception, supergravity thus started a third parallel life, as a deep probe into the mysteries of string theory.

The late 1990s witnessed the emergence of a new duality. The AdS/CFT correspondence, pioneered by Juan Maldacena, is a profound equivalence between supergravity and strings in AdS and conformal field theory (CFT) on its boundary, which connects theories living in different dimensions. This “third superstring revolution” brought to the forefront the AdS versions of supergravity, which thus started a new life as a unique tool to probe quantum field theory in unusual regimes. The last two decades have witnessed many applications of AdS/CFT outside of its original realm. These have touched upon fluid dynamics, quark–gluon plasma, and more recently condensed-matter physics, providing a number of useful insights on strongly coupled matter systems. Perhaps more unexpectedly, AdS/CFT duality has stimulated work related to scattering amplitudes, which may also shed light on the old issue of the ultraviolet behaviour of supergravity. The reverse programme of gaining information about gravity from gauge dynamics has proved harder, and it is difficult to foresee where the next insights will come from. Above all, there is a pressing need to highlight the geometrical principles and the deep symmetries underlying string theory, which have proved elusive over the years.

The interplay between particle physics and cosmology is a natural arena to explore consequences of supergravity. Recent experiments probing the cosmic microwave background, and in particular the results of the Planck mission, lend support to inflationary models of the early universe. An elusive particle, the inflaton, could have driven this primordial acceleration, and although our current grasp of string theory does not allow a detailed analysis of the problem, supergravity can provide fundamental clues on this and the subsequent particle-physics epochs.

Supersymmetry was inevitably broken in a de Sitter-like inflationary phase, where superpartners of the inflaton tend to experience instabilities. The novel ingredient that appears to get around these problems is non-linear supersymmetry, whose foundations lie in the prescient 1973 work of Volkov and Akulov. Non-linear supersymmetry arises when superpartners are exceedingly massive, and seems to play an intriguing role in string theory. The current lack of signals for supersymmetry at the LHC makes one wonder whether it might also hold a prominent place in an eventual picture of particle physics. This resonates with the idea of “split supersymmetry”, which allows for large mass splittings among superpartners and can be accommodated in supergravity at the price of reconsidering hierarchy issues.

In conclusion, attaining a deeper theoretical understanding of broken supersymmetry in supergravity appears crucial today. In breaking supersymmetry, one is confronted with important conceptual challenges: the resulting vacua are deeply affected by quantum fluctuations, and this reverberates on old conundrums related to dark energy and the cosmological constant. There are even signs that this type of investigation could shed light on the backbone of string theory, and supergravity may also have something to say about dark matter, which might be accounted for by gravitinos or other light superpartners. We are confident that supergravity will lead us farther once more.