The accelerating expansion of the universe, first realised 20 years ago, has been confirmed by numerous observations. Remarkably, whatever the source of the acceleration, it is the primary driver of the dynamical evolution of the universe in the present epoch. That we are unable to know the nature of this so-called dark energy is one of the most important puzzles in modern fundamental physics. Whether due to a cosmological constant, a new dynamical field, a deviation from general relativity on cosmological scales, or something else, dark energy has triggered numerous theoretical models and experimental programmes. Physicists and astronomers are convinced that pinning down the nature of this mysterious component of the universe will lead to a revolution in physics.

Based on the current lambda-cold-dark-matter (ΛCDM) model of cosmology – which has only two ingredients: general relativity with a nonzero cosmological constant and cold dark matter – we currently identify three dominant components of the universe: normal baryonic matter, which makes up only 5% of the total energy density; dark matter (27%); and dark energy (68%). This model is extremely successful in fitting observations, such as the Planck mission’s measurements of the cosmic microwave background, but it gives no clues about the nature of the dark-matter or dark-energy components. It should also be noted that the assumption of a nonzero cosmological constant, implying a nonzero vacuum energy density, leads to what has been called the worst prediction ever made in physics: its value as measured by astronomers falls short of what is predicted by the Standard Model of particle physics by well over 100 orders of magnitude.

Depending on what form it takes, dark energy changes the dynamical evolution during the expansion history of the universe as predicted by cosmological models. Specifically, dark energy modifies the expansion rate as well as the processes by which cosmic structures form. Whether the acceleration is produced by a new scalar field or by modified laws of gravity will affect these observables differently, and the two effects can be decoupled using several complementary cosmological probes. Type Ia supernovae and baryon acoustic oscillations (BAO) are very good probes of the expansion rate, for instance, while gravitational lensing and peculiar velocities of galaxies (as revealed by their redshift) are very good probes of gravity and the growth rate of structures (see panel “The geometry of the universe” below). It is only by combining several complementary probes that the source of the acceleration of the universe can be understood. The changes are extremely small and are currently undetectable at the level of individual galaxies, but by observing many galaxies and treating them statistically it is possible to accurately track the evolution and therefore get a handle on what dark energy physically is. This demands new observing facilities capable of both measuring individual galaxies with high precision and surveying large regions of the sky to cover all cosmological scales.
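How a change in the nature of dark energy shows up in the expansion rate can be illustrated with the Friedmann equation for a spatially flat universe. The sketch below is illustrative only: the two-parameter equation of state w(a) = w0 + wa(1 − a), the function name and the input values (a 32%/68% split between matter and dark energy, radiation neglected) are assumptions made for this example, not the Euclid analysis itself.

```python
import math

def expansion_rate_ratio(z, omega_m=0.32, w0=-1.0, wa=0.0):
    """Return H(z)/H0 for a flat universe containing matter and dark energy.

    Dark energy follows the assumed equation of state
    w(a) = w0 + wa * (1 - a); w0 = -1, wa = 0 is a cosmological constant.
    """
    omega_de = 1.0 - omega_m  # flatness is assumed
    a = 1.0 / (1.0 + z)       # scale factor at redshift z
    # Dark-energy density relative to its value today
    de_evolution = a ** (-3.0 * (1.0 + w0 + wa)) * math.exp(-3.0 * wa * (1.0 - a))
    return math.sqrt(omega_m * (1.0 + z) ** 3 + omega_de * de_evolution)

for z in (0.0, 0.5, 1.0, 2.0):
    constant = expansion_rate_ratio(z)
    dynamical = expansion_rate_ratio(z, w0=-0.9, wa=0.1)  # hypothetical model
    shift = 100.0 * (dynamical / constant - 1.0)
    print(f"z={z:.1f}  constant={constant:.4f}  dynamical={dynamical:.4f}  shift={shift:+.2f}%")
```

With these assumed numbers the two models differ by only a few per cent in the expansion rate, which gives a feel for why per-cent-level measurements are required.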

Euclid science parameters

Euclid is a new space-borne telescope under development by the European Space Agency (ESA). It is a medium-class mission of ESA’s Cosmic Vision programme and was selected in October 2011 as the first-priority cosmology mission of the next decade. Euclid will be launched at the end of 2020 and will measure the accelerating expansion of our universe from the time it kicked in around 10 billion years ago to our present epoch, using four cosmological probes that can explore both dark-energy and modified-gravity models. It will capture a 3D picture of the distribution of the dark and baryonic matter from which the acceleration will be measured to per-cent-level accuracy, and measure possible variations in the acceleration to 10% accuracy, improving our present knowledge of these parameters by a factor of 20–60. Euclid will observe the dynamical evolution of the universe and the formation of its cosmic structures over a sky area covering more than 30% of the celestial sphere, corresponding to about five per cent of the volume of the observable universe.

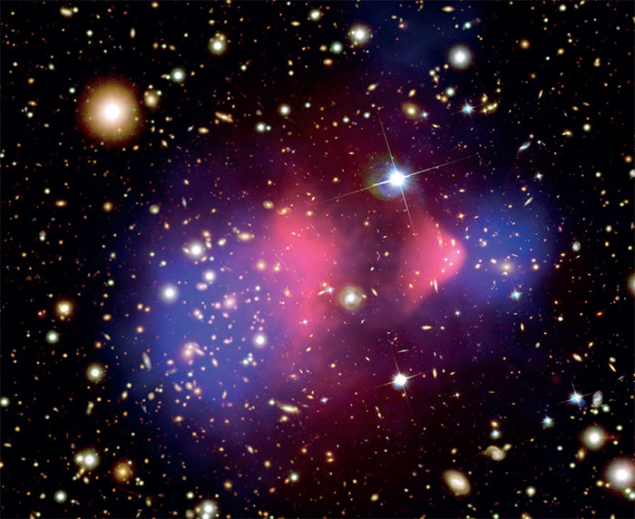

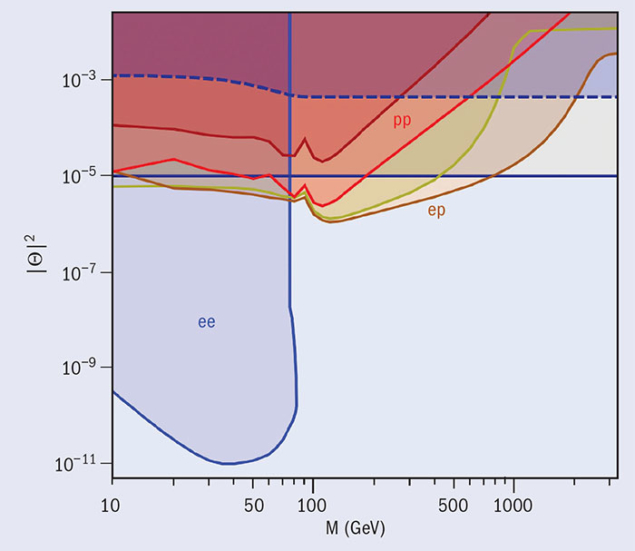

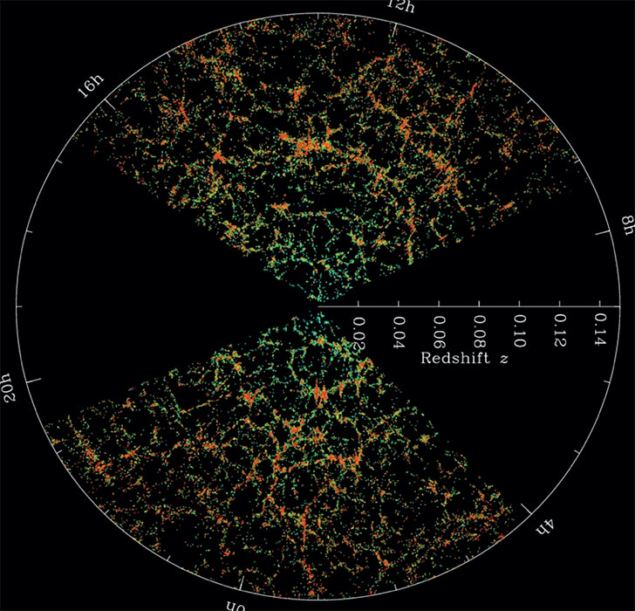

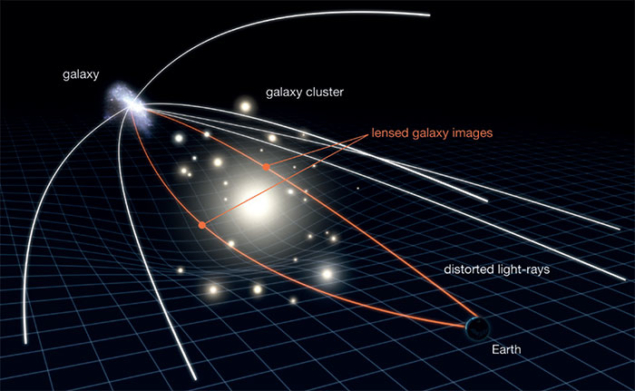

The dark-matter distribution will be probed via weak gravitational-lensing effects on galaxies. Gravitational lensing by foreground objects slightly modifies the shape of distant background galaxies, producing a distortion that directly reveals the distribution of dark matter (see panel “Tracking cosmic structure” below). The way such lensing changes as a function of look-back time, due to the continuing growth of cosmic structure from dark matter, strongly depends on the accelerating expansion of the universe and turns out to be a clear signature of the amount and nature of dark energy. Spectroscopic measurements, meanwhile, will enable us to determine tiny local deviations of the redshift of galaxies from their expected value derived from the general cosmic expansion alone (see image below). These deviations are signatures of peculiar velocities of galaxies produced by the local gravitational fields of surrounding massive structures, and therefore represent a unique test of gravity. Spectroscopy will also reveal the 3D clustering properties of galaxies, in particular baryon acoustic oscillations.
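The size of the redshift deviations produced by peculiar velocities can be estimated with a short calculation. This is a minimal sketch under stated assumptions: it uses the non-relativistic Doppler approximation, and the 300 km/s velocity is a hypothetical, order-of-magnitude value chosen for illustration rather than a figure from the mission.

```python
C_KM_S = 299_792.458  # speed of light in km/s

def observed_redshift(z_cosmological, v_peculiar_km_s):
    """Combine the cosmological redshift with a line-of-sight peculiar velocity.

    Uses (1 + z_obs) = (1 + z_cos) * (1 + v / c), valid for v << c.
    A positive velocity means the galaxy moves away from the observer.
    """
    return (1.0 + z_cosmological) * (1.0 + v_peculiar_km_s / C_KM_S) - 1.0

z_cos = 1.0  # redshift expected from the cosmic expansion alone
for v in (-300.0, 0.0, 300.0):  # hypothetical peculiar velocities in km/s
    z_obs = observed_redshift(z_cos, v)
    print(f"v={v:+7.1f} km/s  z_obs={z_obs:.5f}  deviation={z_obs - z_cos:+.5f}")
```

In this example the deviation is of order 0.002 in redshift, so it is recoverable only statistically, from the clustering pattern of many galaxies.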

Image credit: SDSS.

Together, weak-lensing and spectroscopy data will reveal signatures of the physical processes responsible for the expansion and the hierarchical formation of structures and galaxies in the presence of dark energy. A cosmological constant, a new dark-energy component or deviations from general relativity will produce different signatures. Since these differences are expected to be very small, however, the Euclid mission is extremely demanding scientifically and also represents considerable technical, observational and data-processing challenges.

By further analysing the Euclid data in terms of power spectra of galaxies and dark matter and a description of massive nonlinear structures like clusters of galaxies, Euclid can address cosmological questions beyond the accelerating expansion. Indeed, we will be able to address any topic related to power spectra or non-Gaussian properties of galaxies and dark-matter distributions. The relationship between the light- and dark-matter distributions of galaxies, for instance, can be derived by comparing the galaxy power spectrum as derived from spectroscopy with the dark-matter power spectrum as derived from gravitational lensing. The physics of inflation can then be explored by combining the non-Gaussian features observed in the dark-matter distribution in Euclid data with the Planck data. Likewise, since Euclid will map the dark-matter distribution with unprecedented accuracy, it will be sensitive to subtle features produced by neutrinos and thereby help to constrain the sum of the neutrino masses. On these and other topics, Euclid will provide important information to constrain models.

The definition of Euclid’s science cases, the development of the scientific instruments and the processing and exploitation of the data are under the responsibility of the Euclid Consortium (EC) and carried out in collaboration with ESA. The EC brings together about 1500 scientists and engineers in theoretical physics, particle physics, astrophysics and space astronomy from around 200 laboratories in 14 European countries, Canada and the US. Euclid’s science objectives translate into stringent performance requirements. Mathematical models and detailed complete simulations of the mission were used to derive the full set of requirements for the spacecraft pointing and stability, the telescope, scientific instruments, data-processing algorithms, the sky survey and the system calibrations. Euclid’s performance requirements can be broadly grouped into three categories: image quality, radiometric and spectroscopic performance. The spectroscopic performance in particular puts stringent demands on the ground-processing algorithms and demands a high level of control over cleanliness during assembly and launch.

Dark-energy payload

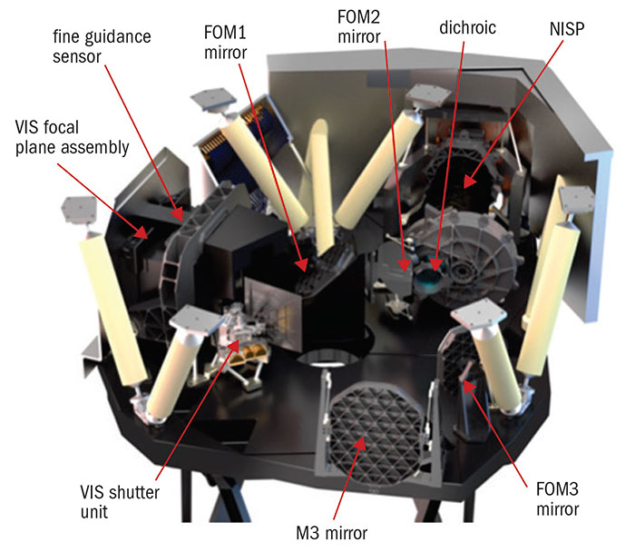

The Euclid satellite consists of a service module (SVM) and a payload module (PLM), developed by ESA’s industrial contractors Thales Alenia Space of Turin and Airbus Defence and Space of Toulouse, respectively. The two modules are substantially thermally and structurally decoupled to ensure that the extremely rigid and cold (around 130 K) optical bench located in the PLM is not disturbed by the warmer (290 ± 20 K) and more flexible SVM. The SVM comprises all the conventional spacecraft subsystems and also hosts the instruments’ warm electronics units. The Euclid image-quality requirements demand very precise pointing and minimal “jitter”, while the survey requirements call for fast and accurate movements of the satellite from one field to another. The attitude and orbit control system consists of several sensors to provide sub-arc-second stability during an exposure time, and cold-gas thrusters with micronewton resolution are used to actuate the fine pointing. Three star trackers provide the absolute inertial attitude accuracy. Since the trackers are mounted on the SVM, which is separate from the telescope structure and thus subject to thermo-elastic deformation, the fine guidance system is located on the focal plane of the telescope itself and endowed with absolute pointing capabilities based on a reference star catalogue.

The PLM is designed to provide an extremely stable detection system enabling the sharpest possible images of the sky. The size of the point spread function (PSF), which is the image of a point source such as an unresolved star, is close to that of the Airy disc, the theoretical limit of the optical system. Allowing for Euclid’s smaller primary mirror, the PSF of Euclid images is comparable to that of the Hubble Space Telescope, and is more than three times smaller than what can be achieved by the best ground-based survey telescopes under optimum viewing conditions. The telescope is composed of a 1.2 m-diameter three-mirror “anastigmatic Korsch” arrangement that feeds two instruments: a wide-field visible imager (VIS) for the shape measurement of galaxies, and a near-infrared spectrometer and photometer (NISP) for their spectroscopic and photometric redshift measurements. An important PLM design driver is to maintain a high and stable image quality over a large field of view. Building on the heritage of previous European high-stability telescopes such as Gaia, which is mapping the stars of the Milky Way with high precision, all mirrors, the telescope truss and the optical bench are made of silicon carbide, a ceramic material that combines extreme stiffness with very good thermal conduction. The PLM structure is passively cooled to a stable temperature of around 130 K, and a secondary mirror mechanism will be employed to refocus the telescope image on the VIS detector plane after launch and cool down.
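The Airy-disc limit mentioned above follows from the standard diffraction formula θ = 1.22 λ/D. The sketch below evaluates it for the 1.2 m aperture across the visible band quoted for VIS; it assumes an ideal, unobstructed circular aperture (the real telescope has a central obscuration and other contributions to the PSF), and the function name is hypothetical.

```python
import math

RAD_TO_ARCSEC = 180.0 / math.pi * 3600.0  # radians to arc seconds

def airy_radius_arcsec(wavelength_m, aperture_m):
    """Angular radius of the first Airy minimum, 1.22 * lambda / D, in arcsec."""
    return 1.22 * wavelength_m / aperture_m * RAD_TO_ARCSEC

EUCLID_APERTURE_M = 1.2  # primary-mirror diameter from the text
HUBBLE_APERTURE_M = 2.4  # Hubble primary-mirror diameter, for comparison

for wavelength_um in (0.55, 0.725, 0.90):  # VIS band edges and midpoint
    euclid = airy_radius_arcsec(wavelength_um * 1e-6, EUCLID_APERTURE_M)
    hubble = airy_radius_arcsec(wavelength_um * 1e-6, HUBBLE_APERTURE_M)
    print(f"{wavelength_um:.3f} um: Euclid {euclid:.3f} arcsec, Hubble {hubble:.3f} arcsec")
```

Under these idealised assumptions the diffraction limit of a 1.2 m mirror is between roughly 0.12 and 0.19 arc seconds over the visible band, twice that of a 2.4 m mirror at the same wavelength.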

Image credit: Airbus/Thales/ESA.

The VIS instrument receives light in one broad visible band covering the wavelength range 0.55–0.90 μm. To avoid additional image distortions, it has no imaging optics of its own and is equipped with a camera made up of 36 4 k × 4 k-pixel CCDs with a pixel scale of 0.1 arc second that must be aligned to a precision better than 15 μm over a distance of 30 cm. In terms of pixel count, the VIS camera is the second largest that will be flown in space, after Gaia’s, and will produce the largest images ever generated in space. Unlike Gaia, VIS will compress and transmit all raw scientific images to Earth for further data processing. The instrument is capable of measuring the shapes of about 55,000 galaxies per image field of 0.5 square degrees. The NISP instrument, on the other hand, provides near-infrared photometry in the wavelength range 0.92–2.0 μm and has a slit-less spectroscopy mode equipped with three identical grisms (grating prisms) covering the wavelength range 1.25–1.85 μm. The grisms are mounted in different orientations to separate overlapping spectra of neighbouring objects, and the NISP instrument is capable of delivering redshifts for more than 900 galaxies per image field. The NISP focal plane is equipped with 16 near-infrared HgCdTe detector arrays of 2 k × 2 k pixels with a pixel scale of 0.3 arc second, which represents the largest near-infrared focal plane ever built for a space mission.
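The detector figures quoted for the two instruments can be cross-checked with simple arithmetic. The sketch below assumes that “4 k” and “2 k” mean 4096 and 2048 pixels per side and ignores the gaps between detectors, so the areas are approximate; the function name is hypothetical.

```python
ARCSEC2_PER_DEG2 = 3600.0 ** 2  # square arc seconds in one square degree

def focal_plane(n_detectors, pixels_per_side, pixel_scale_arcsec):
    """Return (total pixel count, sky area in square degrees) of a detector mosaic."""
    total_pixels = n_detectors * pixels_per_side ** 2
    area_deg2 = total_pixels * pixel_scale_arcsec ** 2 / ARCSEC2_PER_DEG2
    return total_pixels, area_deg2

vis_pixels, vis_area = focal_plane(36, 4096, 0.1)    # VIS: 36 CCDs, 0.1 arcsec pixels
nisp_pixels, nisp_area = focal_plane(16, 2048, 0.3)  # NISP: 16 arrays, 0.3 arcsec pixels

print(f"VIS : {vis_pixels / 1e6:.0f} Mpixel covering {vis_area:.3f} deg^2")
print(f"NISP: {nisp_pixels / 1e6:.0f} Mpixel covering {nisp_area:.3f} deg^2")

# Surface density implied by 55,000 measured galaxy shapes per 0.5 deg^2 field
galaxies_per_arcmin2 = 55_000 / 0.5 / 3600.0
print(f"VIS shape sample: about {galaxies_per_arcmin2:.0f} galaxies per square arc minute")
```

Under these assumptions both mosaics cover just under 0.5 square degrees, consistent with the two instruments sharing one field of view, and VIS holds about 600 million pixels.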

The exquisite accuracy and stability of Euclid’s instruments will provide certainty that any observed galaxy-shape distortions are caused by gravitational lensing and are not a result of artefacts in the optics. The telescope will deliver a field of view of more than 0.5 square degrees, which is an area comparable to two full Moons, and the flat focal plane of the Korsch configuration places no extra requirements on the surface shape of the sensors in the instruments. As the VIS and NISP instruments share the same field of view, Euclid observations can be carried out through both channels in parallel. Besides the Euclid satellite data, the Euclid mission will combine the photometry of the VIS and NISP instruments with complementary ground-based observations from several existing and new telescopes equipped with wide-field imaging or spectroscopic instruments (such as CFHT, ESO/VLT, Keck, Blanco, JST and LSST). These combined data will be used to derive an estimate of redshift for the two billion galaxies used for weak lensing, and to decouple coherent weak gravitational-lensing patterns from intrinsic alignments of galaxies. Organising the ground-based observations over both hemispheres and making these data compatible with the Euclid data turns out to be a very complex operation that involves a huge data volume, even bigger than the Euclid satellite data volume.

Ground control

One Euclid field of 0.5 square degrees will generate 520 Gb/day of VIS compressed data and 240 Gb/day of NISP compressed data, and one such field is obtained in an observing period lasting about 1 hour and 15 minutes. All raw science data are transmitted to the ground via a high-density link. Even though the nominal mission will last for six years, mapping 36% of the sky at the required sensitivity and accuracy within this time means that large amounts of data must be transmitted, at a rate of around 850 Gb/day during just four hours of contact with the ground station. The complete processing pipeline from Euclid’s raw data to the final data products is a large IT project involving a few hundred software engineers and scientists, and has been broken down into functions handled by almost a dozen separate expert groups. A highly varied collection of data sets must be homogenised for subsequent combination: data from different ground and space-based telescopes, visible and near-infrared data, and slit-less spectroscopy. Very precise and accurate shapes of galaxies are measured, giving two orders of magnitude improvement with respect to current analyses.
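The survey and downlink figures above can be combined into two back-of-the-envelope estimates. This is a rough sketch, not the mission’s operations model: it assumes that “Gb” means 10^9 bits, and it ignores field overlaps, calibration observations and any time not spent on the wide survey. The function names are hypothetical.

```python
import math

FULL_SKY_DEG2 = 4.0 * math.pi * (180.0 / math.pi) ** 2  # about 41,253 square degrees

def required_downlink_mbit_s(gbit_per_day, contact_hours):
    """Average link rate needed to send one day of data within the contact window."""
    return gbit_per_day * 1e3 / (contact_hours * 3600.0)

def survey_duration_years(sky_fraction, field_deg2, hours_per_field):
    """Time needed to tile a fraction of the sky with non-overlapping fields."""
    n_fields = sky_fraction * FULL_SKY_DEG2 / field_deg2
    return n_fields * hours_per_field / (24.0 * 365.25)

rate = required_downlink_mbit_s(gbit_per_day=850.0, contact_hours=4.0)
years = survey_duration_years(sky_fraction=0.36, field_deg2=0.5, hours_per_field=1.25)

print(f"Average downlink rate during contact: {rate:.1f} Mbit/s")
print(f"Pure observing time for the wide survey: {years:.2f} years")
```

With these inputs the link must sustain roughly 59 Mbit/s during the contact window, and the bare observing time comes to a little over four years, which leaves some margin within the six-year nominal mission.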

Based on the current knowledge of the Euclid mission and the present ground-station development, no showstoppers have been identified. Euclid should meet its performance requirements at all levels, including the design of the mission (a survey of 15,000 square degrees in less than six years) and for the space and ground segments. This is very encouraging and most promising, taking into account the multiplicity of challenges that Euclid presents.

On the scientific side, the Euclid mission meets the precision and accuracy requested to characterise the source of the accelerating expansion of the universe and decisively reveal its nature. On the technical side, there are difficult challenges to be met in achieving the required precision and accuracy of galaxy-shape, photometric and spectroscopic redshift measurements. Our current knowledge of the mission provides a high degree of confidence that we can overcome all of these challenges in time for launch.

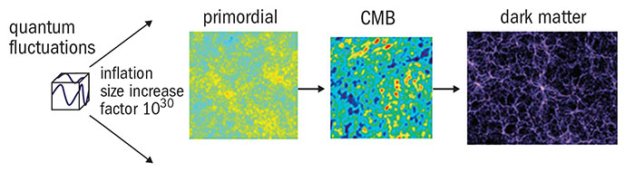

The geometry of the universe

The evolution of structure is seeded by quantum fluctuations in the very early universe, which were amplified by inflation. These seeds grew to create the cosmic microwave background (CMB) anisotropies after approximately 100,000 years and eventually the dark-matter distribution of today. In the same way that supernovae provide a standard candle for astronomical observations, periodic fluctuations in the density of the visible matter called baryon acoustic oscillations (BAO) provide a standard cosmological length scale that can be used to understand the impact of dark energy. By comparing the distance of a supernova or structure with its measured redshift, the geometry of the universe can be obtained.

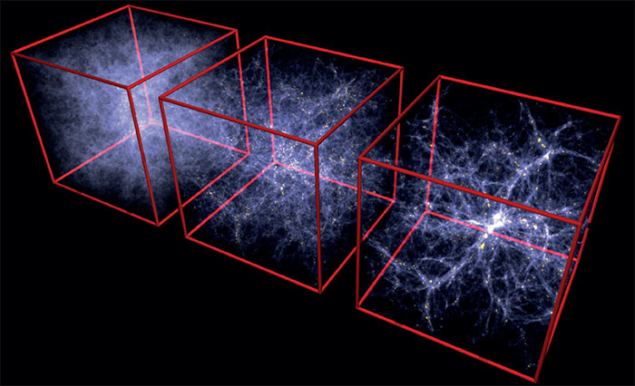

Hydrodynamical cosmological simulations of a ΛCDM universe at three different epochs (left-to-right, image left), corresponding to redshift z = 6, z = 2 and our present epoch. Each white point represents the concentration of dark matter, gas and stars, the brightest regions being the densest. The simulation shows the growth rate of structure and the formation of galaxies, clusters of galaxies, filaments and large-scale structures over cosmic time. Euclid uses the large-scale structures made out of ordinary matter and dark matter as a standard yardstick: starting from the CMB, we assume that the typical scale of structures (or the peak in the spatial power spectrum) increases proportionally with the expansion of the universe. Euclid will determine the typical scale as a function of redshift by analysing power spectra at several redshifts from the statistical analysis of the dark-matter structures (using the weak lensing probe) or the ordinary matter structures based on the spectroscopic redshifts from the BAO probe. The structures will also evolve with redshift due to the properties of gravity. Information on the growth of structure at different scales in addition to different redshifts is needed to discriminate between models of dark energy and modified gravity.

Tracking cosmic structure

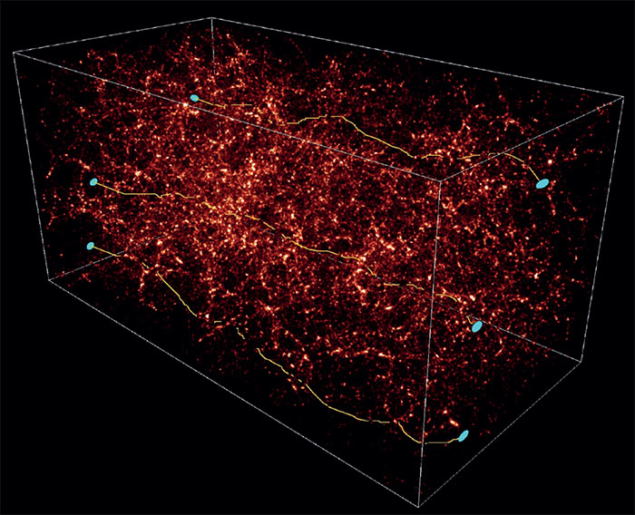

Gravitational-lensing effects produced by cosmic structures on distant galaxies (right). Numerical simulations (below) show the distribution of dark matter (filaments and clumps with brightness proportional to their mass density) over a line of sight of one billion light-years. The yellow lines show how light beams emitted by distant galaxies are deflected by mass concentrations located along the line of sight. Each deflection slightly modifies the original shape of the lensed galaxies, increasing their original intrinsic ellipticity by a small amount.

Since all distant galaxies are lensed, all galaxies eventually show a coherent ellipticity pattern projected on the sky that directly reveals the projected distribution of dark matter and its power spectrum. The 3D distribution of dark matter can then be reconstructed by slicing the universe into redshift bins and recovering the ellipticity pattern at each redshift. The growth rate of cosmic structures derived from this inversion process strongly depends on the nature of dark energy and gravity, and will be measured thanks to the outstanding image quality of Euclid’s VIS instrument.
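Why so many galaxies are needed can be seen from a simple noise estimate: the lensing-induced ellipticity is much smaller than the random intrinsic ellipticity of an individual galaxy, so the signal only emerges in an average over many galaxies. The sketch below assumes independent galaxies, an intrinsic ellipticity scatter of 0.3 and a lensing signal of 0.01; both values are hypothetical, order-of-magnitude choices made for illustration, as are the function names.

```python
import math

def shear_noise(sigma_ellipticity, n_galaxies):
    """Statistical error on the mean ellipticity of n independent galaxies."""
    return sigma_ellipticity / math.sqrt(n_galaxies)

def galaxies_needed(sigma_ellipticity, shear, signal_to_noise):
    """Number of galaxies to average for a given signal-to-noise on the shear."""
    return (signal_to_noise * sigma_ellipticity / shear) ** 2

SIGMA_E = 0.3  # assumed intrinsic ellipticity scatter per galaxy
SHEAR = 0.01   # assumed lensing-induced ellipticity

print(f"One galaxy: signal-to-noise = {SHEAR / SIGMA_E:.3f}")
print(f"Galaxies needed for a 5-sigma detection: {galaxies_needed(SIGMA_E, SHEAR, 5.0):.0f}")
# 55,000 is the number of galaxy shapes per VIS image field quoted in the article
print(f"Noise on the mean over 55,000 galaxies: {shear_noise(SIGMA_E, 55_000):.5f}")
```

Because the error shrinks only as the square root of the number of galaxies, halving it requires four times as many shapes, which is one reason the survey covers such a large area.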