In 1960, two high-energy physics laboratories were competing for scientific discoveries. The first was Brookhaven National Laboratory on Long Island in New York, US, with its 33 GeV Alternating Gradient Synchrotron (AGS). The second was CERN in Switzerland, with its 28 GeV Proton Synchrotron (PS). That year, the US Atomic Energy Commission (AEC) received several proposals to boost the country’s research programme, focusing on the construction of new accelerators with energies between 100 and 1000 GeV. A joint panel of President Kennedy’s Presidential Science Advisory Committee and the AEC’s General Advisory Committee was formed to consider the submissions, chaired by Harvard physicist and Manhattan Project veteran Norman Ramsey. By May 1963, the panel had decided to have Ernest Lawrence’s Radiation Laboratory in Berkeley, California, design a several-hundred GeV accelerator. The result was a 200 GeV synchrotron costing approximately $340 million.

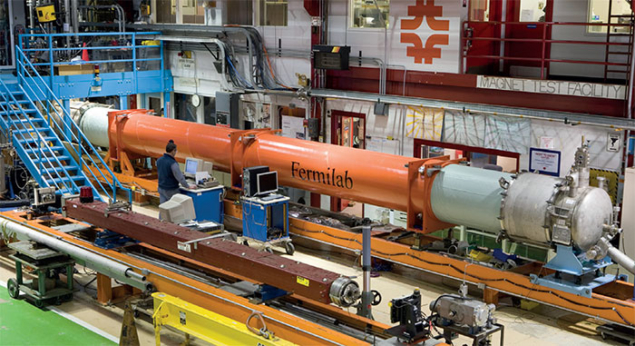

Image credit: Fermilab.

When Cornell physicist Robert Rathbun Wilson, a student of Lawrence’s who also worked on the Manhattan Project, saw Berkeley’s plans he considered them too conservative, unimaginative and too expensive. Wilson, being a modest yet proud man, thought he could design a better accelerator for less money and let his thoughts be known. By September 1965, Wilson had proposed an alternative, innovative, less costly (approximately $250 million) design for the 200 GeV accelerator to the AEC. The Joint Committee on Atomic Energy, the congressional body responsible for AEC projects and budgets, approved of his plan.

During this period, coinciding with the Vietnam War, the US Congress hoped to contain costs. Yet physicists hoped to make breakthrough discoveries, and thought it important to appeal to national interests. The discovery of the Ω⁻ particle at Brookhaven in 1964 led high-energy physicists to conclude that “an accelerator ‘in the range of 200–1000 BeV’ would ‘certainly be crucial’ in exploring the ‘detailed dynamics of this strong SU(3) symmetrical interaction’.” Simultaneously, physicists were expressing frustration with the geographic situation of US high-energy physics facilities. East and West Coast laboratories like Lawrence Berkeley Laboratory and Brookhaven did not offer sufficient opportunity for the nation’s experimental physicists to pursue their research. Managed by regional boards, the programmes at these two labs were directed by and accessible to physicists from nearby universities. Without substantial federal support, other major research universities struggled to compete with these regional laboratories.


Against this backdrop arose a major movement to accommodate physicists in the centre of the country and offer more equal access. Columbia University experimental physicist Leon Lederman championed “the truly national laboratory” that would allow any qualifying proposal to be conducted at a national, rather than a regional, facility. In 1965, a consortium of major US research universities, Universities Research Association (URA), Inc., was established to manage and operate the 200 GeV accelerator laboratory for the AEC (and its successor agencies the Energy Research and Development Administration (ERDA) and the Department of Energy (DOE)) and address the need for a more national laboratory. Ramsey was president of URA for most of the period 1966 to 1981.

Following a nationwide competition organised by the National Academy of Sciences, in December 1966 a 6800-acre site in Weston, Illinois, around 50 km west of Chicago, was selected. Another suburban Chicago site, north of Weston in affluent South Barrington, had withdrawn when local residents “feared that the influx of physicists would ‘disturb the moral fibre of their community’”. Robert Wilson was selected to direct the new 200 GeV accelerator, named the National Accelerator Laboratory (NAL). Wilson asked Edwin Goldwasser, an experimental physicist from the University of Illinois, Urbana-Champaign, and member of Ramsey’s panel, to be his deputy director, and the pair set up temporary offices in Oak Brook, Illinois, on 15 June 1967. They began to recruit physicists from around the country to staff the new facility and design the 200 GeV accelerator, also attracting personnel from Chicago and its suburbs. President Lyndon Johnson signed the bill authorising funding for the National Accelerator Laboratory on 21 November 1967.

Chicago calling


It wasn’t easy to recruit scientific staff to the new laboratory in open cornfields and farmland with few cultural amenities. That picture lies in stark contrast to today, with the lab encircled by suburban sprawl encouraged by highway construction and development of a high-tech corridor with neighbours including Bell Labs/AT&T and Amoco. Wilson encouraged people to join him in his challenge, promising higher energy and more experimental capability than originally planned. He and his wife, Jane, imbued the new laboratory with enthusiasm and hospitality, just as they had experienced in the isolated setting of wartime-era Los Alamos while Wilson carried out his work on the Manhattan Project.

Wilson and Goldwasser worked on the social conscience of the laboratory and in March 1968, a time of racial unrest in the US, they released a policy statement on human rights. They intended to: “seek the achievement of its scientific goals within a framework of equal employment opportunity and of a deep dedication to the fundamental tenets of human rights and dignity…The formation of the Laboratory shall be a positive force…toward open housing…[and] make a real contribution toward providing employment opportunities for minority groups…Special opportunity must be provided to the educationally deprived…to exploit their inherent potential to contribute to and to benefit from the development of our Laboratory. Prejudice has no place in the pursuit of knowledge…It is essential that the Laboratory provide an environment in which both its staff and its visitors can live and work with pride and dignity. In any conflict between technical expediency and human rights we shall stand firmly on the side of human rights. This stand is taken because of, rather than in spite of, a dedication to science.” Wilson and Goldwasser brought inner-city youth out to the suburbs for employment, training them for many technical jobs. Congress supported this effort and was pleased to recognise it during the civil-rights movement of the late 1960s. Its affirmative spirit endures today.


When asked by a congressional committee authorising funding for NAL in April 1969 about the value of the research to be conducted at NAL, and if it would contribute to national defence, Wilson famously answered: “It has only to do with the respect with which we regard one another, the dignity of men, our love of culture…It has to do with, are we good painters, good sculptors, great poets? I mean all the things we really venerate and honour in our country and are patriotic about. It has nothing to do directly with defending our country except to help make it worth defending.”

A harmonious whole

Wilson, who had promised to complete his project on time and under budget, conceived of the new laboratory as a beautiful, harmonious whole. He felt that science, technology, and art are importantly connected, and brought a graphic artist, Angela Gonzales, with him from Cornell to give the laboratory site and its publications a distinctive aesthetic. He had his engineers work with a Berkeley colleague, William Brobeck, and an architectural-engineering group, DUSAF, to make designs and cost estimates for early submissions to the AEC, in time for their submissions to the congressional committees that controlled NAL’s budget. Wilson appreciated frugality and minimal design, but also tried to leave room for improvements and innovation. He thought design should be ongoing, with changes implemented as they were demonstrated, before they became conservative.


There were many decisions to be made in creating the laboratory Wilson envisioned. Many had to be modified, but this was part of his approach: “I came to understand that a poor decision was usually better than no decision at all, for if a necessary decision was not made, then the whole effort would just wallow – and, after all, a bad decision could be corrected later on,” he wrote in 1987. One example was the magnets in the Main Ring, as the 200 GeV synchrotron was first called, which had to be redesigned, as did the plans for the layout of the experimental areas. Even the design of the distinctive Central Laboratory building, constructed after the accelerator achieved its design energy and renamed Robert Rathbun Wilson Hall in 1980, had to be adjusted from its initial concepts. Wilson said that “a building does not have to be ugly to be inexpensive” and he orchestrated a competition among his selected architects to create the final design of this visually striking structure. To save money he set up competitions between contractors so that the fastest to finish a satisfactory project were rewarded with more jobs. Consequently, the Main Ring was completed on time by 30 March 1972 and under the $250 million budget. NAL was dedicated and renamed Fermilab on 11 May 1974.

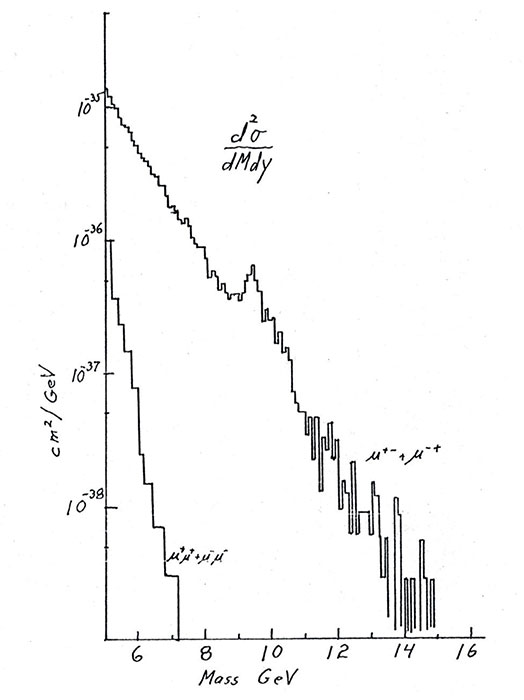

International attraction

Experimentalists from Europe and Asia flocked to propose research at the new frontier facility in the US, forging larger collaborations with American colleagues. Its forefront position and philosophy attracted the top physicists of the world, with Russian physicists making news working on the first approved experiment at Fermilab at the height of the Cold War. Congress was pleased and the scientists were overjoyed with more experimental areas than originally planned and with higher energy, as the magnets were improved to attain 400 GeV and 500 GeV within two years. The higher energy in a fixed-target accelerator complex allowed more innovative experiments, in particular enabling the discovery of the bottom quark in 1977.


Fermilab’s early intellectual environment was influenced by theoretical physicists Robert Serber, Sam Treiman, J D Jackson and Ben Lee, who later brought Chris Quigg and Bill Bardeen, who in turn invited many distinguished visitors to add to the creative milieu of the laboratory. Already on Wilson’s mind was a colliding-beams accelerator he called an “energy doubler”, which would employ superconductivity, and he had established working groups to study the idea. But Wilson encountered budget conflicts with the AEC’s successor, the new Department of Energy, which led to his resignation in 1978. He joined the faculties of the University of Chicago and Columbia University briefly before returning to Cornell in 1982.

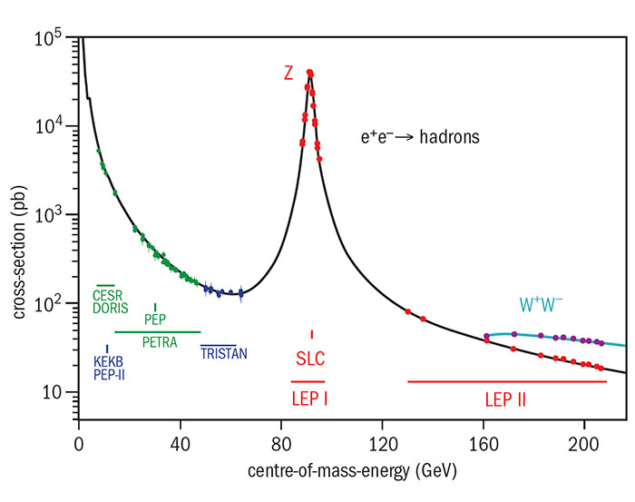

Fermilab’s future was destined to move forward with Wilson’s ideas of superconducting-magnet technology, and a new director was sought. Lederman, who was spokesperson of the Fermilab study that discovered the bottom quark, accepted the position in late 1978 and immediately set out to win support for Wilson’s energy doubler. An accomplished scientific spokesman, Lederman achieved the necessary funding by 1979 and promoted the energy-enhancing idea of introducing an antiproton source to the accelerator complex to enable proton–antiproton collisions. Experts from Brookhaven and CERN, as well as the former USSR, shared ideas with Fermilab physicists to bring superconducting-magnet technology to fruition at Fermilab. Under the leadership of Helen Edwards, Richard Lundy, Rich Orr and Alvin Tollestrup, the Main Ring evolved into the energy doubler/saver in 1983 with a new ring of superconducting magnets installed below the early Main Ring magnets. This led to a trailblazing era during which Fermilab’s accelerator complex, now called the Tevatron, would lead the world in high-energy physics experiments. By 1985 the Tevatron had achieved 800 GeV in fixed-target experiments and 1.6 TeV in colliding-beam experiments, and by the time of its closure in 2011 it had reached 1.96 TeV in the centre of mass – just shy of its original goal of 2 TeV.

Theory also thrived at Fermilab in this period. Lederman had brought James Bjorken to Fermilab’s theoretical physics group in 1980 and a theoretical astrophysics group founded by Rocky Kolb and Michael Turner was added to Fermilab’s research division in 1983 to address research at the intersection of particle physics and cosmology. Lederman also expanded the laboratory’s mission to include science education, offering programmes to local high-school students and teachers, and in 1980 opened the first children’s centre for employees of any DOE facility. He founded the Illinois Mathematics and Science Academy in 1985 and the Chicago Teachers Academy for Mathematics and Science in 1990, and the Lederman Science Education Center on the Fermilab site is named after him. Lederman also reached out to many regions including Latin America and partnered with businesses to support the lab’s research and encourage technology transfer. The latter included Wilson’s early Fermilab initiative of neutron therapy for certain cancers, which later would see Fermilab build the 70–250 MeV proton synchrotron for the Loma Linda Medical Center in California.

Scientifically, the target in this period was the top quark. Fermilab and CERN had planned for a decade to detect the elusive top, with Fermilab deploying two large international experimental teams at the Tevatron – CDF (founded by Tollestrup) and DZero (founded by Paul Grannis) – from 1976 to 1995. In 1988 Lederman shared the Nobel prize for the discovery of the muon neutrino at Brookhaven 25 years previously, and in 1989 he stepped down as Fermilab director and joined the faculty of the University of Chicago and later the Illinois Institute of Technology.

Lederman was succeeded by John Peoples, a machine builder and Fermilab experimentalist since 1970, and leader of the Fermilab antiproton source from 1981 to 1985. Peoples had his hands full not only with Fermilab and its research programme but also with the Superconducting Super Collider (SSC) laboratory in Texas. In 1993 the SSC was cancelled and Peoples was asked by the DOE to close down the project and its many contracts. The only person to direct two national laboratories at the same time, Peoples successfully managed both tasks and returned to Fermilab to see the discovery of the top quark in 1995. He had also launched the luminosity-enhancing upgrade to the Tevatron, the Main Injector, in 1999. Peoples stepped down as laboratory director that summer and became director of the Sloan Digital Sky Survey (SDSS) – Fermilab’s first astrophysics experiment. He later directed the Dark Energy Survey and in 2010 he retired, continuing to serve as director emeritus of the laboratory.

Intense future


In 1999, experimentalist and former Fermilab user Michael Witherell of the University of California at Santa Barbara became Fermilab’s fourth director. Ongoing fixed-target and colliding-beam experiments continued under Witherell, as did the SDSS and the Pierre Auger cosmic-ray experiments, and the neutrino programme with the Main Injector. Mirroring the spirit of US–European competition of the 1960s, this period saw CERN begin construction of the Large Hadron Collider (LHC) to search for the Higgs boson at a lower energy than the cancelled SSC. Accordingly, the luminosity of the Tevatron became a priority, as did discussions about a possible future international linear collider. After launching the Neutrinos at the Main Injector (NuMI) research programme, including sending the underground particle beam off-site to the MINOS detector in Minnesota, Witherell returned to Santa Barbara in 2005 and in 2016 he became director of the Lawrence Berkeley Laboratory.

Physicist Piermaria Oddone from Lawrence Berkeley Laboratory became Fermilab’s fifth director in 2005. He pursued the renewal of the Tevatron in order to exploit the intensity frontier and explore new physics with a plan called “Project X”, part of the “Proton Improvement Plan”. Yet the last decade has been a challenging time for Fermilab, with budget cuts, reductions in staff and a redefinition of its mission. The CDF and DZero collaborations continued their search for the Higgs boson, narrowing the region where it could exist, but the more energetic LHC always had the upper hand. In the aftermath of the global economic crisis of 2008, as the LHC approached switch-on, Oddone oversaw the shutdown of the Tevatron in 2011. A Remote Operations Center in Wilson Hall and a special US Observer agreement allowed Fermilab physicists to co-operate with CERN on LHC research and participate in the CMS experiment. The Higgs boson was duly discovered at CERN in 2012 and Oddone retired the following year.

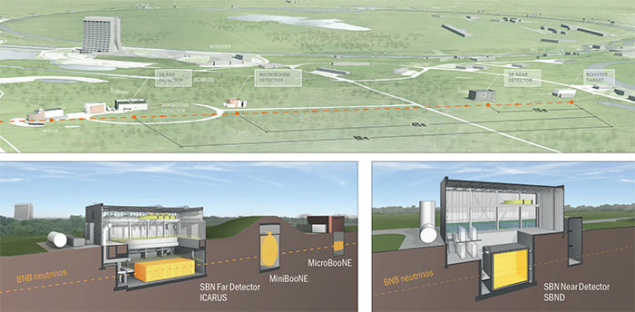

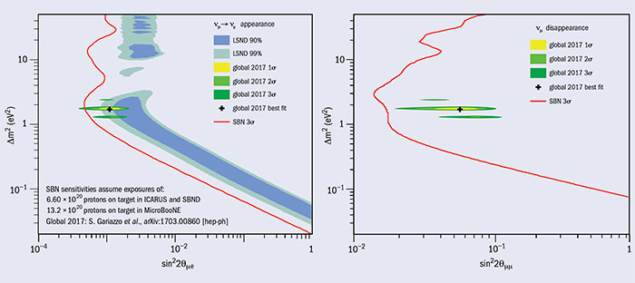

Image credit: Fermilab.

Under its sixth director, former Fermilab user and director of TRIUMF laboratory in Vancouver, Nigel Lockyer, Fermilab now looks ahead to shine once more through continued exploration of the intensity frontier and understanding the properties of neutrinos. In the next few years, Fermilab’s Long-Baseline Neutrino Facility (LBNF) will send neutrinos to the underground DUNE experiment 1300 km away in South Dakota, prototype detectors for which are currently being built at CERN. Meanwhile, Fermilab’s Short-Baseline Neutrino programme has just taken delivery of the 760 tonne cryostat for its ICARUS experiment after its recent refurbishment at CERN, while a major experiment called Muon g-2 is about to take its first data. This suite of experiments, in co-operation with CERN and other international labs, puts Fermilab at the leading edge of the intensity frontier and continues Wilson’s dreams of exploration and discovery.