Image credit: J Ordan/CERN.

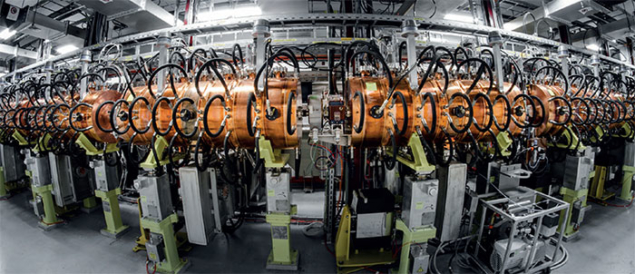

The Large Hadron Collider (LHC) is the most famous and powerful of all CERN’s machines, colliding intense beams of protons at an energy of 13 TeV. But its success relies on a series of smaller machines in CERN’s accelerator complex that serve it. The LHC’s proton injectors have already been providing beams with characteristics exceeding the LHC’s design specifications. This decisively contributed to the excellent performance of the 2010–2013 LHC physics operation and, since 2015, has allowed CERN to push the machine beyond its nominal beam performance.

Built between 1959 and 1976, the CERN injector complex accelerates proton beams to a kinetic energy of 450 GeV. It does this via a succession of accelerators: a linear accelerator called Linac 2 followed by three synchrotrons – the Proton Synchrotron Booster (PSB), the Proton Synchrotron (PS) and the Super Proton Synchrotron (SPS). The complex also provides the LHC with ion beams, which are first accelerated through a linear accelerator called Linac 3 and the Low Energy Ion Ring (LEIR) synchrotron before being injected into the PS and the SPS. The CERN injectors, besides providing beams to the LHC, also serve a large number of fixed-target experiments at CERN – including the ISOLDE radioactive-beam facility and many others.

Part of the LHC’s success lies in the flexibility of the injectors to produce various beam parameters, such as the intensity, the spacing between proton bunches and the total number of bunches in a bunch train. This was clearly illustrated in 2016 when the LHC reached peak luminosity values 40% higher than the design value of 10³⁴ cm⁻² s⁻¹, although the number of bunches in the LHC was still about 27% below the maximum achievable. This gain was due to the production of a brighter beam with roughly the same intensity per bunch but in a beam envelope of just half the size.
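The figures quoted above can be cross-checked with a rough scaling estimate. The sketch below assumes that peak luminosity is proportional to the number of bunches times the square of the bunch intensity, divided by the transverse beam size (taking the "beam envelope" to mean transverse emittance), with everything else at the collision point left unchanged. That scaling and the function name `relative_luminosity` are illustrative assumptions, not something stated in the article.

```python
def relative_luminosity(bunches, intensity_per_bunch, emittance):
    """Peak luminosity relative to design.

    Assumes the simplified scaling L ∝ n_b * N^2 / emittance, with all
    inputs expressed as fractions of their design values. Optics and
    crossing-angle effects are ignored (an assumption of this sketch).
    """
    return bunches * intensity_per_bunch**2 / emittance


# Values read from the text: about 27% fewer bunches than the maximum,
# roughly the same intensity per bunch, and a beam envelope of half the size.
ratio_2016 = relative_luminosity(bunches=0.73, intensity_per_bunch=1.0, emittance=0.5)

print(f"Estimated peak luminosity relative to design: {ratio_2016:.2f}")
```

Under these assumptions the estimate comes out at about 1.5 times the design value, which is in the same range as the quoted 40% gain. The remaining difference is unsurprising given the rounded inputs and the effects the simple scaling leaves out.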

Image credit: H Bartosik & OP team.

Despite the excellent performance of today’s injectors, the beams produced are not sufficient to meet the very demanding proton beam parameters specified by the high-luminosity upgrade of the LHC (HL-LHC). Indeed, as of 2025, the HL-LHC aims to accumulate an integrated luminosity of around 250 fb⁻¹ per year, to be compared with the 40 fb⁻¹ achieved in 2016. For heavy-ion operations, the goals are just as challenging: with lead ions the objective is to obtain an integrated luminosity of 10 nb⁻¹ during four runs starting from 2021 (compared to the 2015 achievement of less than 1 nb⁻¹). This has demanded a significant upgrade programme that is now being implemented.

Immense challenges

To prepare the CERN accelerator complex for the immense challenges of the HL-LHC, the LHC Injectors Upgrade project (LIU) was launched in 2010. In addition to enabling the proton and ion injector chains to deliver the beams required for the HL-LHC, the LIU project must ensure the reliable operation and lifetime of the injectors throughout the HL-LHC era, which is expected to last until around 2035. Hence, the LIU project is also tasked with replacing ageing equipment (such as power supplies, magnets and radio-frequency cavities) and improving radioprotection measures such as shielding and ventilation.

One of the first challenges faced by the LIU team members was to define the beam-performance limitations of all the accelerators in the injector chain and identify the actions needed to overcome them by the required amount. Significant machine and simulation studies were carried out over a period of years, while functional and engineering specifications were prepared to provide clear guidelines to the equipment groups. This was followed by the production of the first hardware prototype devices and their installation in the machines for testing and, where possible, early exploitation.

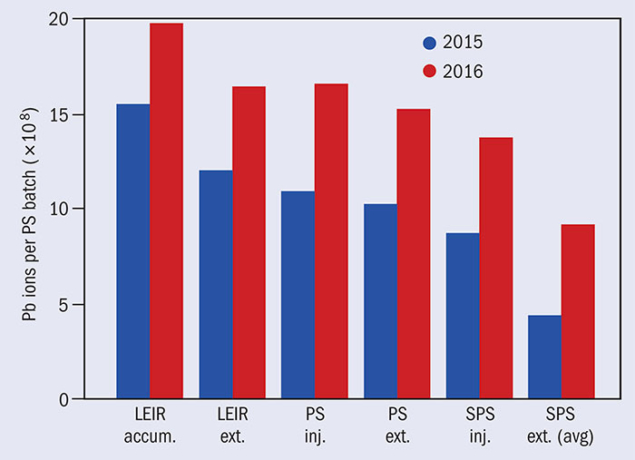

Significant progress has already been made concerning the production of ion beams. Thanks to the modifications in Linac 3 and LEIR implemented after 2015 and the intensive machine studies conducted within the LIU programme over the last three years, the excellent performance of the ion injector chain could be further improved in 2016 (figure 1). This enabled the recorded luminosity for the 2016 proton–lead run to exceed the target value by a factor of almost eight. The main remaining challenges for the ion beams will be to more than double the number of bunches in the LHC through complex RF manipulations in the SPS known as “momentum slip stacking”, as well as to guarantee continued and stable performance of the ion injector chain without constant expert monitoring.

Along the proton injector chain, the higher-intensity beams within a comparatively small beam envelope required by the HL-LHC can only be demonstrated after the installation of all the LIU equipment during Long Shutdown 2 (LS2) in 2019–2020. The main installations are: in the PSB, a new injection region, a new main power supply and RF system; in the PS, a new injection region and RF system to stabilise the future beams; and in the SPS, an upgraded main RF system, together with the shielding of vacuum flanges and partial coating of the beam chambers to stabilise future beams against parasitic electromagnetic interaction and electron clouds. Beam instrumentation, protection devices and beam dumps also need to be upgraded in all the machines to match the new beam parameters. The baseline goals of the LIU project to meet the challenging HL-LHC requirements are summarised in the panel “Overhauling CERN’s accelerator complex”.

Execution phase

Having defined, designed and endorsed all of the baseline items during the last seven years, the LIU project is presently in its execution phase. New hardware is being produced, installed and tested in the different machines. Civil-engineering work is proceeding for the buildings that will host the new PSB main power supply and the upgraded SPS RF equipment, and to prepare the area in which the new SPS internal beam dump will be located.

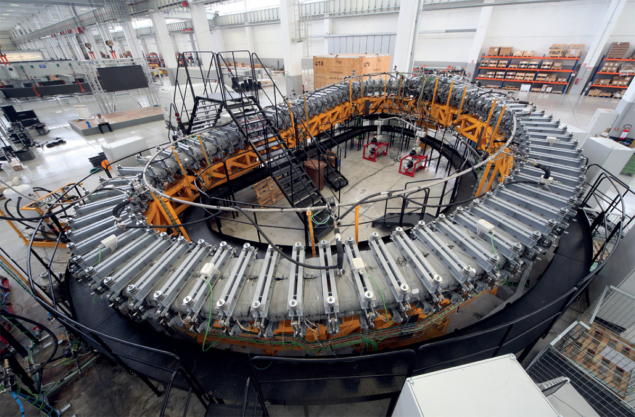

The 86 m-long Linac 4, which will eventually replace Linac 2, is an essential component of the HL-LHC upgrade (see panel “Linac 4 complete and preparing to serve the high-luminosity LHC”). The machine, based on newly developed technology, became operational at the end of 2016 following the successful completion of acceleration tests at its nominal energy of 160 MeV. It is presently undergoing an important reliability run that will be instrumental in reaching beams with characteristics matching the requirements of the LIU project and in achieving an operational availability higher than 95%, which is an essential level for the first link in the proton injector chain. On 26 October 2016, the first 160 MeV negative hydrogen-ion beam was successfully sent to the injection test stand, which operated until the beginning of April 2017 and demonstrated the correct functioning of this new and critical CERN injection system as well as of the related diagnostics and controls.

Image credit: M Van Gompel.

The PSB upgrade has mostly completed the equipment needed for the injection of negative hydrogen ions from Linac 4 into the PSB and is progressing with the 2 GeV energy upgrade of the PSB rings and extraction, with a planned installation date of 2019–2020 during LS2. On the beam-physics side, studies have mainly focused on the deployment of the new wideband RF system, commissioning of beam diagnostics and investigation of space-charge effects. During the 2016–2017 technical stop, the principal LIU-related activities were the removal of a large volume of obsolete cables and the installation of new beam instrumentation (e.g. a prototype transverse size measurement device and turn-by-turn orbit measurement systems). The unused cables, which had been individually identified and labelled beforehand, could be safely removed from the machine to allow cables for the new LIU equipment to be pulled.

The procurement, construction, installation and testing of upgrade items for the PS are also progressing. Some hardware, such as new corrector magnets and power supplies, a newly developed beam gas-ionisation monitor and new injection vacuum chambers to remove aperture limitations, was already installed during past technical stops. Mitigating anticipated longitudinal beam instabilities in the PS is essential for achieving the LIU baseline beam parameters. This requires reducing the parasitic electromagnetic interaction of the beam with the multiple RF systems and deploying a new feedback system to keep the beam stable. Beam-dynamics studies will determine the present intensity reach of the PS and identify any remaining needs to comfortably achieve the value required for the HL-LHC. Improved schemes of bunch rotation are also under investigation to better match the beam extracted from the PS to the SPS RF system and thus limit the beam losses at injection energy in the SPS.

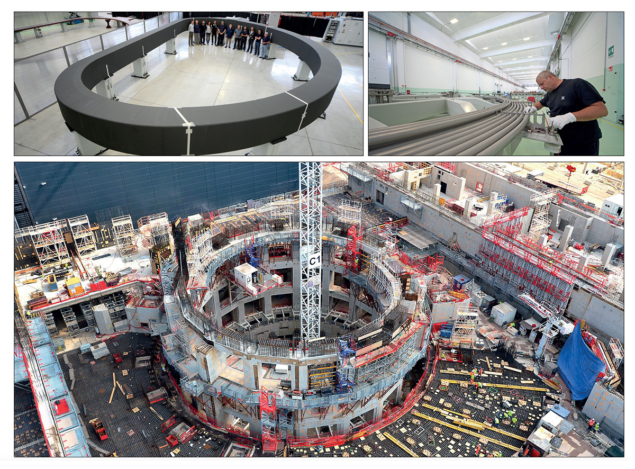

In the SPS, the LIU deployment in the tunnel has begun in earnest, with the re-arrangement and improvement of the extraction kicker system, the start of civil engineering for the new beam-dump system in LSS5 and the shielding of vacuum flanges in 10 half-cells together with the amorphous-carbon coating of the adjacent beam chambers (to mitigate electron-cloud effects). In a notable first, eight dipole and 10 focusing quadrupole magnet chambers were coated with amorphous carbon in situ during the 2016–2017 technical stop, proving the industrialisation of this process (figure 2). The new overground RF building needed to accommodate the power amplifiers of the upgraded main RF system has been completed, while procurement and testing of the solid-state amplifiers have also commenced. The prototyping and engineering for the LIU beam-dump is in progress with the construction and installation of a new SPS beam-dump block, which will be able to cope with the higher beam intensities of the HL-LHC and minimise radiation issues.

Image credit: G Trad.

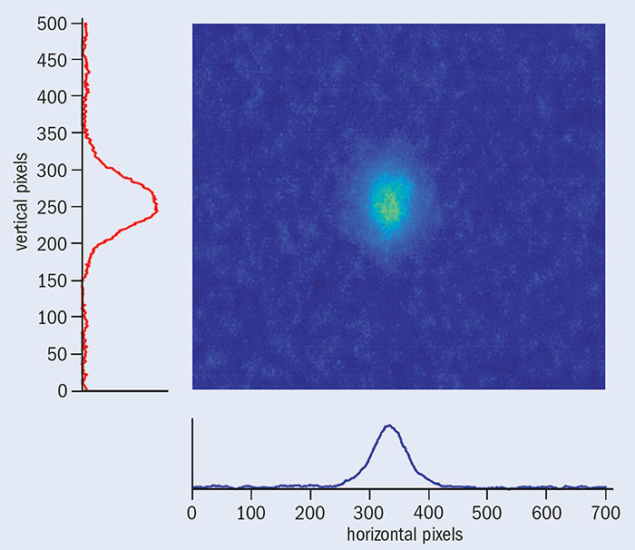

Regarding diagnostics, the development of beam-size measurement devices based on flying wires, gas ionisation and synchrotron radiation, all of which are part of the LIU programme, is already providing meaningful results (figure 3) that address the challenge of measuring high-intensity, high-brightness beams with high precision. On the machine-performance and beam-dynamics side, measurements made in 2015–2016 with the very high intensities available from the PS probed new regimes in terms of electron-cloud instabilities, RF power and losses at injection. More studies are planned in 2017–2018 to clearly identify a path for the mitigation of the injection losses when operating with higher beam currents.

Looking forward to LS2

The success of LIU in delivering beams with the desired parameters is the key to achieving the HL-LHC luminosity target. Without the LIU beams, all of the other necessary HL-LHC developments – including high-field triplet magnets, crab cavities and new collimators – would only allow a fraction of the desired luminosity to be delivered to experiments.

Whenever possible, LIU installation work is taking place during CERN’s regular year-end technical stops. But the great majority of the upgrade requires an extended machine stop and therefore will have to wait until LS2 for implementation. The duration of access to the different accelerators during LS2 is being defined and careful preparation is ongoing to manage the work on site, ensure safety and level the available resources among the different machines in the CERN accelerator complex. After all of the LIU upgrades are in place, beams will be commissioned with the newly installed systems. The LIU goals in terms of beam characteristics are, by definition, uncharted territory. Reaching them will require not only a high level of expertise, but also careful optimisation and extensive beam-physics and machine-development studies in all of CERN’s accelerators.

Linac 4 complete and preparing to serve the high-luminosity LHC

Inaugurated in May, Linac 4 is CERN’s newest accelerator acquisition since the LHC and is key to increasing the luminosity delivered to the LHC. Linac 4 will send negative hydrogen ions with an energy of 160 MeV – more than three times the energy of its predecessor Linac 2 (which has been in service since 1978) – to the Proton Synchrotron Booster, which further accelerates the negative ions and removes the electrons. Almost 90 m long, it took nearly 10 years to build. After an extensive testing period that is already under way, Linac 4 will be connected to CERN’s accelerator complex during the long technical shutdown in 2019–2020.

Overhauling CERN’s accelerator complex

The demands of the high-luminosity LHC (HL-LHC) will see major changes across CERN’s accelerator complex (above). The LHC Injectors Upgrade project is organised into five baseline work packages to boost the performance of the LHC injectors and match the challenging HL-LHC requirements:

• Improving ion source and low-energy transport in Linac 3, alleviating ion losses in LEIR and SPS, and implementing momentum slip stacking for ion beams in the SPS.

• Replacing Linac 2 with Linac 4 and using H⁻ charge-exchange injection into the PSB at the increased energy of 160 MeV.

• Raising the injection energy into the PS from the present 1.4 GeV to 2 GeV.

• Doubling the RF power, reducing the longitudinal impedance and mitigating the electron cloud in the SPS.

• Putting in place all of the other necessary equipment and operational upgrades across PSB, PS and SPS to make them capable of accelerating and manipulating higher-intensity/brightness beams (e.g. intercepting and dump devices, feedback systems, beam instrumentation and resonance compensation).
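The two injection-energy increases in the list above (160 MeV into the PSB, 2 GeV into the PS) can be put in context with a short relativistic calculation. The sketch below evaluates the factor βγ² at the old and new injection energies. A commonly used approximation, which the article itself does not spell out, is that for a given beam brightness the space-charge tune spread at injection scales as 1/(βγ²), so a larger factor leaves room for brighter beams. The 50 MeV figure for Linac 2 is an assumption not stated in the text (which says only that 160 MeV is more than three times the Linac 2 energy), and the function name is hypothetical.

```python
import math

# Proton rest energy in MeV. Used for H- ions as well, since the two extra
# electrons change the mass by only about 0.1% (approximation).
PROTON_REST_ENERGY_MEV = 938.272


def beta_gamma_squared(kinetic_energy_mev):
    """Return the relativistic factor beta * gamma^2 for a proton."""
    gamma = 1.0 + kinetic_energy_mev / PROTON_REST_ENERGY_MEV
    beta = math.sqrt(1.0 - 1.0 / gamma**2)
    return beta * gamma**2


# PSB injection: assumed 50 MeV from Linac 2, 160 MeV from Linac 4.
psb_gain = beta_gamma_squared(160.0) / beta_gamma_squared(50.0)

# PS injection: 1.4 GeV at present, 2 GeV after the upgrade.
ps_gain = beta_gamma_squared(2000.0) / beta_gamma_squared(1400.0)

print(f"PSB injection: beta*gamma^2 increases by a factor {psb_gain:.2f}")
print(f"PS injection:  beta*gamma^2 increases by a factor {ps_gain:.2f}")
```

Under these assumptions the factor roughly doubles at PSB injection and rises by about 60% at PS injection. This is only a scaling argument, and the achievable beam parameters depend on many other effects discussed in the article.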